FirstSimVR: Evaluating Future Tools Using Today's VR

NextGen Interactions

Next-generation first-responder tools and their interfaces have the potential to significantly enhance public safety. However, many such tools are still at an early experimental stage and are not yet ready to be used or fully tested. Even when the tools come to fruition, it can be difficult to evaluate and optimize their use in the context for which they will be deployed. To propel tool development, evaluation, and usage, we are leveraging virtual reality (VR) technologies to efficiently test early prototypes of those new tools in virtual environments that simulate the context in which they will be used. Whereas consumer VR systems can support scenarios that are quite visually and aurally realistic, most of today’s VR hardware is lacking when it comes to physical touch. This shortfall is especially critical when simulating real-world user interfaces and the real physical world first responders work in. For FirstSimVR, we focus on adding (and evaluating) realistic physical cues to VR interfaces and the environment the system is simulating. - July 2019

Meet the team

Principal Investigator: NextGen Interactions*

JJ Farantatos, Hazmat Instructor*

Regis Kopper, Research Scientist*

Raleigh Fire Department, public safety partner*

* Team involved in 2021-2022 Demonstration Project

HazVR: Hazardous Material Training with Virtual Reality: Demonstration Project with the Raleigh Fire Department

NextGen Interactions received a separate one-year award for a demonstration project to further develop HazVR, a hazmat training project with the Raleigh Fire Department, and to evaluate it in classroom settings. This demonstration project seeks to validate the hazmat training module, which uses virtual reality to reinforce concepts taught in classrooms. The ultimate goal is for hazmat trainees to be able to practice, learn, discuss, and reflect upon working in the full context of hazardous situations, which are high-risk, low-frequency occurrences. This will allow public safety personnel to safely obtain experiential training for hazardous incidents, which was previously not possible without working in actual dangerous environments.

FirstSimVR project overview

We propose FirstSimVR, a versatile multimodal VR platform that simulates interfaces and environments to more effectively prototype, evaluate, and improve first-responder interfaces. We will target both Goal 1 (Development & Prototyping) & Goal 2 (Effectiveness & Transferability of AR/VR Simulations).

Objectives:

- Create a versatile framework to evaluate future first-responder interfaces by building upon SteamVR hardware/software with Unity and offering different configuration options for different scenarios.

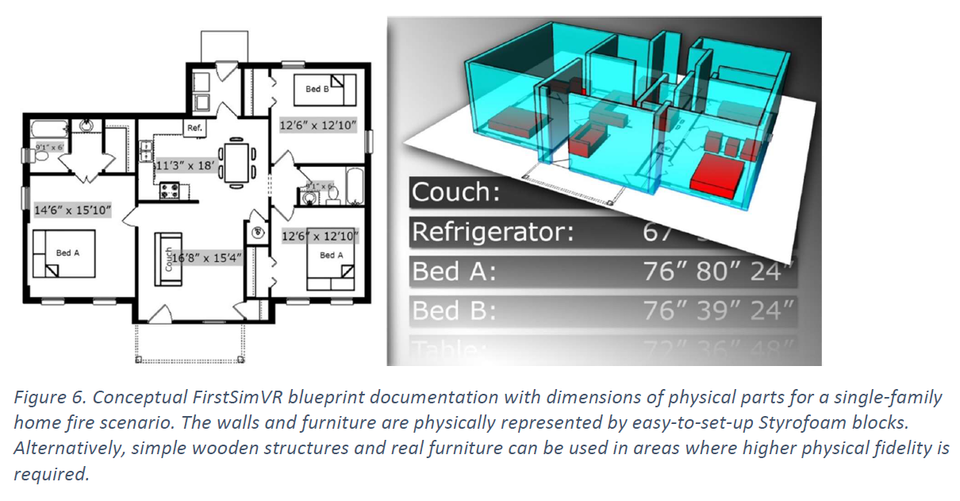

- Simulate scenarios that are normally difficult to simulate by utilizing virtual reality multimodal cues such as visual effects, spatialized audio, and passive haptics.

- Improve simulations based on extensive feedback from first responders by hiring two first responders part time and obtaining feedback from a larger number of first-responder volunteers.

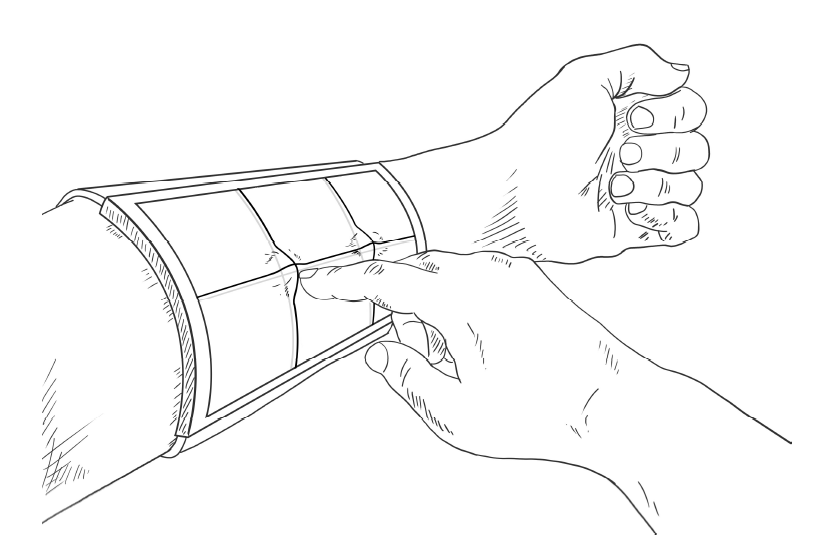

- Augment virtual interactions with real physical touch by using tracked and untracked physical objects that correspond to visual representations.

- Create an example next-generation tactile interface specific to first-responder needs by listening carefully to the needs of first responders and taking advantage of our body tracking and passive haptics.

- Minimize motion sickness and maximize safety by minimizing sensory conflict, maintaining high tracking accuracy, matching visual representations with physical objects, and avoiding cables.

- Integrate with existing systems for easy access to the public safety stakeholder community by having our base-level configuration only require the standard HTC Vive that is available at consumer prices.

- Evaluate how effectively simulating physical touch transfers to real-world first-responder tasks by collecting qualitative and quantitative data. Our final evaluations will consist of three user studies.

- Disseminate research findings by publishing a white paper, releasing anonymized data sets, presenting/demoing at conferences, and publishing papers.

Other information:

Problem statement:

- Experimental tools are not ready to be used or fully tested

- Difficult to evaluate and optimize interfaces in the context in which they will be deployed

- Today’s best VR is designed for entertainment, not public safety

- Current simulations are not able to effectively convey physical touch

Concept:

- Configuration options range from a standard HTC Vive to an enhanced HTC Vive that integrates physical objects with the virtual

- Work closely with first responders

- Evaluation ranges from UX processes to formal user studies

Potential impact:

- Improved evaluation of interfaces and processes

- Increased safety through the use of better-designed tools

- Decreased cognitive load as a result of tactile interaction

- Better training transfer

- Faster response to critical incidents

Deliverables:

- software

- 3D printed objects with embedded sensors

- documentation

- a white paper summarizing our findings

- anonymized datasets
