Summary

This project aims to develop and evaluate a coherent set of methods to understand behavior in complex information systems, such as the Internet, computational grids and computing clouds. Such large distributed systems exhibit global behavior arising from independent decisions made by many simultaneous actors, which adapt their behavior based on local measurements of system state. Actor adaptations shift the global system state, influencing subsequent measurements, leading to further adaptations. This continuous cycle of measurement and adaptation drives a time-varying global behavior. For this reason, proposed changes in actor decision algorithms must be examined at large spatiotemporal scale in order to predict system behavior. This presents a challenging problem.

Description

What are complex systems? Large collections of interconnected components whose interactions lead to macroscopic behaviors in:

- Biological systems (e.g., slime molds, ant colonies, embryos)

- Physical systems (e.g., earthquakes, avalanches, forest fires)

- Social systems (e.g., transportation networks, cities, economies)

- Information systems (e.g., Internet and compute clouds)

What is the problem? No one understands how to measure, predict or control macroscopic behavior in complex information systems, a gap that (1) threatens our nation's security and (2) costs billions of dollars.

"[Despite] society's profound dependence on networks, fundamental knowledge about them is primitive. [G]lobal communication ... networks have quite advanced technological implementations but their behavior under stress still cannot be predicted reliably.... There is no science today that offers the fundamental knowledge necessary to design large complex networks [so] that their behaviors can be predicted prior to building them."

The quote above is from Network Science (2006), a National Research Council report.

What is the new idea? Leverage models and mathematics from the physical sciences to define a systematic method to measure, understand, predict and control macroscopic behavior in the Internet and distributed software systems built on the Internet.

What are the technical objectives? Establish models and analysis methods that (1) are computationally tractable, (2) reveal macroscopic behavior and (3) establish causality. Characterize distributed control techniques, including: (1) economic mechanisms to elicit desired behaviors and (2) biological mechanisms to organize components.

Why is this hard? Valid, computationally tractable models that exhibit macroscopic behavior and reveal causality are difficult to devise. Phase transitions are difficult to predict and control.

Who would care? All designers and users of networks and distributed systems, which have a 25-year history of unexpected failures:

- ARPAnet congestion collapse of 1980

- Internet congestion collapse of Oct 1986

- Cascading failure of AT&T long-distance network in Jan 1990

- Collapse of AT&T frame-relay network in April 1998 ...

Businesses and customers who rely on today's information systems:

- "Cost of eBay's 22-Hour Outage Put At $2 Million", Ecommerce, Jun 1999

- "Last Week's Internet Outages Cost $1.2 Billion", Dave Murphy, Yankee Group, Feb 2000

- "...the Internet "basically collapsed" Monday", Samuel Kessler, Symantec, Oct 2003

- "Network crashes...cost medium-sized businesses a full 1% of annual revenues", Technology News, Mar 2006

- "costs to the U.S. economy...range...from $65.6 M for a 10-day [Internet] outage at an automobile parts plant to $404.76 M for ... failure ...at an oil refinery", Dartmouth study, Jun 2006

Designers and users of tomorrow's information systems that will adopt dynamic adaptation as a design principle:

- DoD to spend $13 B over the next 5 yrs on Net-Centric Enterprise Services initiative, Government Computer News, 2005

- Market derived from Web services to reach $34 billion by 2010, IDC

- Grid computing market to exceed $12 billion in revenue by 2007, IDC

- Market for wireless sensor networks to reach $5.3 billion in 2010, ONWorld

- Revenue in mobile networks market will grow to $28 billion in 2011, Global Information, Inc.

- Market for service robots to reach $24 billion by 2010, International Federation of Robotics

Hard Issues & Plausible Approaches

| Hard Issues | Plausible Approaches |
|---|---|
| H1. Model scale | A1. Scale-reduction techniques |
| H2. Model validation | A2. Sensitivity analysis & key comparisons |
| H3. Tractable analysis | A3. Cluster analysis and statistical analyses |
| H4. Causal analysis | A4. Evaluate analysis techniques |

Model scale – Systems of interest (e.g., the Internet and compute grids) have large spatiotemporal extent and global reach, consist of millions of components, and interact through many adaptive mechanisms over various timescales. Scale-reduction techniques must be employed. Which computational models can scale sufficiently in space and time? Micro-scale models are not computable at large spatiotemporal scale. Macro-scale models are computable and might exhibit global behavior, but can they reveal causality? Meso-scale models might exhibit global behavior and reveal causality, but are they computable? One plausible approach is to investigate abstract models from the physical sciences, e.g., fluid flows (from hydrodynamics), lattice automata (from gas chemistry), Boolean networks (from biology) and agent automata (from geography). We can apply parallel computing to scale to millions of components and days of simulated time. Scale reduction may also be achieved by adopting n-level experiments coupled with orthogonal fractional factorial (OFF) experiment designs.
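
To make the OFF idea concrete, here is a minimal Python sketch of a two-level orthogonal fractional factorial design. The factor count, the -1/+1 coding and the generators D = AB, E = AC, F = BC, G = ABC are illustrative assumptions (a textbook 2^(7-4) resolution III design), not the designs the project actually used. Seven factors are screened in 8 runs rather than the 128 a full factorial would need.

```python
from itertools import product

def fractional_factorial_2_7_4():
    """Return an 8-run, 7-factor two-level design (levels -1/+1).

    Base factors A, B, C form a full 2^3 factorial; the other four factors
    are set by the (assumed) generators D = AB, E = AC, F = BC, G = ABC.
    """
    runs = []
    for a, b, c in product((-1, 1), repeat=3):
        runs.append((a, b, c, a * b, a * c, b * c, a * b * c))
    return runs

def is_orthogonal(design):
    """True if every column is balanced and every pair of columns is orthogonal."""
    k = len(design[0])
    cols = [[row[j] for row in design] for j in range(k)]
    balanced = all(sum(col) == 0 for col in cols)
    pairwise = all(
        sum(x * y for x, y in zip(cols[i], cols[j])) == 0
        for i in range(k)
        for j in range(i + 1, k)
    )
    return balanced and pairwise

design = fractional_factorial_2_7_4()
for run in design:
    print(run)
print(len(design), "runs instead of", 2 ** 7)
print("orthogonal:", is_orthogonal(design))
```

The saving has a price: in a resolution III design, main effects are aliased with two-factor interactions, so higher-resolution designs are needed when interactions matter.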

Model validation – Scalable models from the physical sciences (e.g., differential equations, cellular automata, nk-Boolean nets) tend to be highly abstract. Can sufficient fidelity be obtained to convince domain experts of the value of insights gained from such abstract models? We can conduct sensitivity analyses to ensure the model exhibits relationships that match known relationships from other accepted models and empirical measurements. Sensitivity analysis also enables us to understand relationships between model parameters and responses. We can also conduct key comparisons along three complementary paths: (1) comparing model data against existing traffic and analysis, (2) comparing results from subsets of macro/meso-scale models against micro-scale models and (3) comparing simulations of distributed control regimes against results from implementations in test facilities, such as the Global Environment for Network Innovations.
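
A common way to relate model parameters to responses is to estimate main effects from a two-level experiment. The sketch below assumes a full 2^3 factorial and a hypothetical `toy_model` standing in for one simulation run. It illustrates the idea only and is not the project's sensitivity-analysis method.

```python
from itertools import product
from statistics import mean

def toy_model(x1, x2, x3):
    # Hypothetical stand-in for a simulation response; x3 has no influence.
    return 10 + 4 * x1 - 2 * x2 + 1.5 * x1 * x2

def main_effects(design, responses):
    """Main effect of factor j: mean response at +1 minus mean response at -1."""
    effects = []
    for j in range(len(design[0])):
        high = [y for row, y in zip(design, responses) if row[j] == 1]
        low = [y for row, y in zip(design, responses) if row[j] == -1]
        effects.append(mean(high) - mean(low))
    return effects

design = list(product((-1, 1), repeat=3))        # full 2^3 factorial
responses = [toy_model(*run) for run in design]  # one "run" per design point
for name, effect in zip(("x1", "x2", "x3"), main_effects(design, responses)):
    print(f"{name}: {effect:+.2f}")
```

For this toy model, x1 shows the largest effect (+8), x2 a negative effect (-4) and x3 none, so x3 would be a candidate to hold at a nominal value in later experiments.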

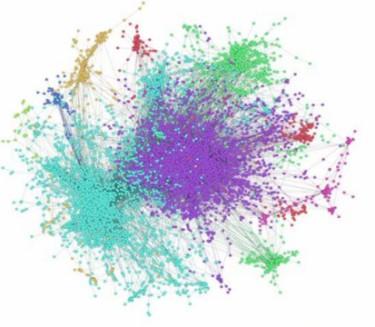

Tractable analysis – The scale of potential measurement data is expected to be very large – O(10^15) – with millions of elements, tens of variables, and millions of seconds of simulated time. How can measurement data be analyzed tractably? We could use homogeneous models, which allow one (or a few) elements to be sampled as representative of all. This reduces data volume to 10^6 to 10^7, which is amenable to statistical analyses (e.g., power-spectral density, wavelets, entropy, Kolmogorov complexity) and to visualization. Where homogeneous models are inappropriate, we can use clustering analysis to view relationships among groups of responses. We can also exploit correlation analysis and principal components analysis to identify and exclude redundant responses from collected data. Finally, we can construct combinations of statistical tests and multidimensional data visualization techniques tailored to specific experiments and data of interest.
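
The correlation step can be sketched as a greedy filter: keep a response only if it is not strongly correlated with any response already kept. The response names, the sample values and the 0.95 threshold are hypothetical choices for illustration.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant series carries no correlation information
    return sxy / sqrt(sxx * syy)

def prune_redundant(responses, threshold=0.95):
    """Greedily keep a response unless |r| >= threshold with one already kept."""
    kept = []
    for name, series in responses.items():
        if all(abs(pearson(series, responses[k])) < threshold for k in kept):
            kept.append(name)
    return kept

# Hypothetical per-interval responses sampled from one representative element
throughput = [10, 12, 15, 11, 9, 14, 13, 8]
responses = {
    "throughput": throughput,
    "goodput": [0.9 * t for t in throughput],  # a scaled copy of throughput
    "loss_rate": [0.01, 0.03, 0.02, 0.05, 0.01, 0.04, 0.02, 0.03],
}
print(prune_redundant(responses))
```

Here `goodput` is redundant with `throughput` and is dropped. Because the filter is greedy, which member of a redundant pair survives depends on the order in which responses are listed.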

Causal analysis – Tractable analysis strategies yield coarse data with limited granularity of timescales, variables and spatial extents. Coarseness may reveal macroscopic behavior that cannot be explained from the data alone. For example, an unexpected collapse in the probability density function of job completion times in a computing grid could not be explained without more detailed data and analysis. Multidimensional analysis can represent system state as a multidimensional space and depict system dynamics through various projections (e.g., slicing, aggregation, scaling). State-space analysis can segment system dynamics into a field of attractor basins and then monitor trajectories through that field. Markov models, which provide compact, computationally efficient representations of system behavior, can be subjected to perturbation analyses to identify potential failure modes and their causes.
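
A minimal illustration of Markov-model perturbation analysis: compute the stationary distribution of a small discrete-time chain by power iteration, shift some probability toward a failure transition, and observe how the long-run probability of the failed state responds. The three states and all transition probabilities are hypothetical, and this generic sensitivity check stands in for, rather than reproduces, the perturbation techniques used in the project.

```python
def stationary(P, iters=10_000, tol=1e-12):
    """Stationary distribution of an ergodic DTMC by power iteration (pi = pi P)."""
    n = len(P)
    pi = [1.0 / n] * n
    for _ in range(iters):
        nxt = [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]
        if max(abs(a - b) for a, b in zip(nxt, pi)) < tol:
            return nxt
        pi = nxt
    return pi

def perturb(P, i, j, delta):
    """Move probability delta from self-loop P[i][i] to P[i][j]; rows stay stochastic."""
    Q = [row[:] for row in P]
    Q[i][j] += delta
    Q[i][i] -= delta
    return Q

# Hypothetical 3-state chain: 0 = normal, 1 = degraded, 2 = failed
P = [
    [0.95, 0.05, 0.00],
    [0.30, 0.65, 0.05],
    [0.50, 0.00, 0.50],
]
for delta in (0.0, 0.05, 0.10):
    pi = stationary(perturb(P, 1, 2, delta))
    print(f"delta={delta:.2f}  P(failed)={pi[2]:.4f}")
```

Raising the degraded-to-failed probability from 0.05 to 0.15 moves the long-run probability of the failed state from 1/81 (about 0.012) to 3/103 (about 0.029), so this transition is one a failure analysis would flag as sensitive.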

Controlling behavior – Large distributed systems and networks cannot be subjected to centralized control regimes because they have too many elements, too many parameters, too much change, and too many policies. Can models and analysis methods be used to determine how well decentralized control regimes stimulate desirable system-wide behaviors? One approach is to use price feedback (e.g., auctions, present-value analysis or commodity markets) to modulate supply and demand for resources or services. Another is to use biological processes to differentiate function based on environmental feedback, e.g., morphogen gradients, chemotaxis, local and lateral inhibition, polarity inversion, quorum sensing, energy exchange and reinforcement.
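
Price feedback can be illustrated with a tâtonnement-style adjustment, in which a price rises when demand exceeds supply and falls otherwise, so each consumer needs only the current price rather than global state. The demand curve, the capacity of 40 units and the step size are hypothetical. This is one textbook form of price feedback, not a mechanism evaluated by the project.

```python
def demand(price):
    # Hypothetical aggregate demand for a resource, decreasing in price.
    return 100.0 / (1.0 + price)

SUPPLY = 40.0  # hypothetical fixed capacity

def tatonnement(price=1.0, step=0.02, rounds=500, tol=1e-9):
    """Raise the price when demand exceeds supply, lower it otherwise."""
    for _ in range(rounds):
        excess = demand(price) - SUPPLY
        if abs(excess) < tol:
            break
        price = max(0.0, price + step * excess)  # keep the price non-negative
    return price

p = tatonnement()
print(f"clearing price ~ {p:.4f}, demand ~ {demand(p):.4f}")
```

With these numbers the price settles near 1.5, where demand 100/(1 + p) equals the capacity of 40. A step size that is too large can make the price oscillate or diverge, which is one reason decentralized regimes benefit from model-based evaluation before deployment.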

Major Accomplishments

Apr 2018 The project defined five potential run-time predictors of network congestion collapse and evaluated them under two traffic scenarios (increasing and steady load) for three network models with varying degrees of realism. The project demonstrated that the simplest predictor provided the best accuracy and also gave significant warning time. The project also showed that two more complex predictors (autocorrelation and variance) were unreliable, giving many false alerts under steady load.
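
Variance and lag-1 autocorrelation are widely used early-warning indicators because their rise can signal a system approaching a critical transition. The sketch below computes both over trailing windows of a time series. The queue-occupancy samples and the window length are hypothetical, and the project's own predictor definitions may differ.

```python
from statistics import mean, pvariance

def lag1_autocorrelation(xs):
    """Lag-1 autocorrelation estimate of a series (0.0 for a constant series)."""
    m = mean(xs)
    num = sum((a - m) * (b - m) for a, b in zip(xs, xs[1:]))
    den = sum((a - m) ** 2 for a in xs)
    return num / den if den else 0.0

def rolling_indicators(series, window):
    """Return (variance, lag-1 autocorrelation) for each trailing window."""
    out = []
    for end in range(window, len(series) + 1):
        w = series[end - window:end]
        out.append((pvariance(w), lag1_autocorrelation(w)))
    return out

# Hypothetical queue-occupancy samples whose fluctuations grow over time
series = [5, 6, 5, 6, 5, 7, 5, 8, 4, 9, 3, 10, 2, 11]
for var, ac in rolling_indicators(series, window=6):
    print(f"variance={var:6.2f}  lag1_autocorr={ac:+.2f}")
```

Since the entry above reports that these two indicators gave many false alerts under steady load, a detector built on them would likely need a carefully calibrated alert threshold or trend test.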

Dec 2017 The project developed a layered and aggregated queuing network simulation model that can represent behaviors associated with individual packets while achieving increased computational efficiency over discrete-event simulation and also bounding error to a known level. The project demonstrated the application of the layered and aggregated queuing network simulation model to represent behaviors associated with distributed denial of service attacks, showing how such behaviors could be detected with known monitoring techniques, as had been demonstrated previously for discrete-event simulations.

Jul 2017 The project defined new sampling methods to improve the scalability of computation for systems with high-dimensional uncertainties. The project demonstrated the application of the sampling methods to determine optimal control decisions and adaptive controls using reinforcement learning. For the demonstration problems, the new sampling methods achieved high accuracy while requiring limited computational resources.

Apr 2016 The project demonstrated that the degree of realism in network simulations influences evolution of network-wide congestion, and also identified key realistic factors that must be included in network simulations in order to draw valid conclusions about spreading network congestion, breakdown in network connectivity, probability of packet delivery, and latencies for successfully delivered packets.

Mar 2015 The project demonstrated that results from a previous study of virtual-machine placement algorithms in computational clouds would not be changed by the injection of asymmetries, dynamics, and failures. This demonstration increased confidence in findings from the previous study.

Dec 2014 The project delivered an effective and scalable method for uncertainty estimation in large-scale simulation models. The method, described in a paper in the proceedings of the 2014 Winter Simulation Conference, can be applied to provide accurate estimation of the value of model responses. The estimation algorithm requires a minimum of computation.

Sep 2014 The project delivered an experiment design and analysis method to determine effective settings for control parameters in evolutionary computation algorithms. The method was documented in a journal article accepted for publication by Evolutionary Computation, MIT Press, which is the leading journal in the field.

Aug 2014 The project delivered a proposal and oral presentation outlining research into methods to provide early warning of network catastrophes. The proposal and oral presentation were part of the FY 2015 NIST competition seeking innovations in measurement science.

Oct 2013 The project delivered an evaluation of a method combining genetic algorithms and simulation to search for failure scenarios in system models. The method was applied to a case study of the Koala cloud computing model. The method was able to discover a known failure cause, but in a novel setting, and was also able to discover several unknown failure scenarios. Subsequently, the method and evaluation were presented at an international workshop on simulation methods, and in two invited lectures, one at Mitre and one at George Mason University.

Dec 2012 In the fall of 2012, Dr. Mills contributed methods from this project to a DoE Office of Science Workshop on Computational Modeling of Big Networks (COMBINE). Dr. Mills also coauthored the report, which was published in December of 2012. The main NIST contributions are documented in Chapter 5 of the report, which outlines effective methods and best practices for experiment design and validation & verification of simulation models.

Nov 2011 In the fall of 2009, this project started investigating large scale behavior in Infrastructure Clouds. The project produced three related papers during 2011, and all three papers were accepted at the two major IEEE cloud computing conferences held during the year. The rapid success of the project in this new domain illustrates the general applicability of the methods we developed, as well as the ease with which those methods can be applied.

Nov 2010 Developed and demonstrated Koala, a discrete-event simulator for Infrastructure Clouds. Completed a sensitivity analysis of Koala to identify unique response dimensions and significant factors driving model behavior. Created multidimensional animations to visualize spatiotemporal variation in resource usage and load for cores, disks, memory and network interfaces in clouds with up to O(10^5) nodes.

May 2010 NIST Special Publication 500-282: Study of Proposed Internet Congestion Control Mechanisms

Sep 2009 Draft NIST Special Publication: Study of Proposed Internet Congestion-Control Mechanisms

Apr 2009 Demonstrated applicability of Markov model perturbation analysis to communication networks.

Sep 2008 Developed a Markov model for a global computational grid and demonstrated the feasibility of applying perturbation analysis to predict conditions that could lead to performance degradation. Currently, perturbation analysis is largely a theoretical topic; this work shows its application to large distributed systems.

Aug 2008 Developed and demonstrated multidimensional visualization software to explore relationships among complex data sets derived from simulations of large distributed systems. Currently, there are no widely used visualization techniques to explore multidimensional data from simulations of large distributed systems.

Jun 2008 Developed and demonstrated an analytical framework to understand relationships among pricing, admission control and scheduling for resource allocation in computing clusters. Currently, resource-allocation mechanisms for computing clusters rely on heuristics.

Apr 2008 Developed and validated MesoNetHS, which adds six proposed replacement congestion-control algorithms to MesoNet and allows the behavior of the algorithms to be investigated in a large topology. Currently, these congestion-control algorithms are explored in simulated and empirical topologies of small size.

Sep 2007 Developed and demonstrated a methodology for sensitivity analysis of models of large distributed systems. Currently, sensitivity analysis of models for large distributed systems is considered infeasible.

Apr 2007 Developed and verified MesoNet, a mesoscopic scale network simulation model that can be specified with about 20 parameters. Currently, specifying most network simulations requires hundreds to thousands of parameters.

Additional Technical Details

Related Presentations

- C. Dabrowski and K. Mills, "Evaluating Predictors of Congestion Collapse in Communication Networks", IEEE/IFIP Network Operations & Management Symposium, Taipei, Taiwan, April 24, 2018.

- C. Dabrowski, K. Mills, Y. Wan, and J. Xie, "Developing Predictors of Failure Regimes in Real Communication Networks", poster presented at ITL Science Day, Gaithersburg, MD, November 2, 2017.

- K. Mills, "Combining Genetic Algorithms & Simulation to Search for Failure Scenarios in System Models", Computer Science & Engineering Seminar, University of North Texas, Denton, Texas, June 28, 2016.

- K. Mills and C. Dabrowski, "The Need for Realism when Simulating Network Congestion", Computer Networking Symposium, SpringSim, Pasadena, CA, April 4, 2016.

- K. Mills and C. Dabrowski, "The Influence of Realism on Congestion in Network Simulations", C4I Seminar, George Mason University, Fairfax, VA, February 1, 2016.

- K. Mills and C. Dabrowski, "The Influence of Realism on Congestion in Network Simulations", Applied & Computational Mathematics Seminar Series, NIST, Gaithersburg, MD, December 1, 2015.

- K. Mills, "Validating Simulations of Large-scale Computer Networks", ITL Science Day, Gaithersburg, MD, October 27, 2015.

- C. Dabrowski and K. Mills, "The influence of realism on congestion in network simulations", poster presented at ITL Science Day, Gaithersburg, MD, October 27, 2015.

- K. Mills, "Predicting Global Failure Regimes in Complex Information Systems", NIST Cloud Computing Forum and Workshop 8, Gaithersburg, MD, July 9, 2015.

- J. Xie, Y. Wan, Y. Zhou, K. Mills, J. Filliben, and Y. Lei, "Effective and Scalable Uncertainty Evaluation for Large-Scale Complex System Applications", Winter Simulation Conference, Savannah, GA, December 10, 2014.

- K. Mills, C. Dabrowski, J. Filliben, F. Hunt, and B. Rust, "Early Warning of Network Catastrophes", FY2015 IMS Oral Presentation, Gaithersburg, MD, August 28, 2014.

- K. Mills, M. Mijic and S. Morgan, "Cloud Reliability Breakout", NIST Workshop and Forum on Cloud Computing and Mobility, Gaithersburg, MD, March 25-27, 2014.

- K. Mills, "Combining Genetic Algorithms & Simulation to Search for Failure Scenarios in System Models", Computer Science Interdisciplinary Seminar, George Mason University, February 19, 2014.

- K. Mills, "Predicting the Unpredictable in Complex Information Systems", Joint University of Maryland and NIST Network Science Symposium, College Park, Maryland, January 24, 2014.

- K. Mills, "Combining Genetic Algorithms & Simulation to Search for Failure Scenarios in System Models", SIMUL 2013 paper presentation, Venice, Italy, October 29, 2013.

- K. Mills, "Predicting the Unpredictable in Complex Information Systems", SIMUL 2013 Keynote, Venice, Italy, October 28, 2013.

- K. Mills, "Combining Genetic Algorithms & Simulation to Search for Failure Scenarios in System Models", Mitre Cyber Security Technical Center Distinguished Lecture, McLean, Virginia, October 16, 2013.

- K. Mills, "Combining Genetic Algorithms & Simulation to Search for Failure Scenarios in System Models", presentation for the NIST Cloud Computing Security Working Group, July 17, 2013.

- K. Mills, "Understanding Behavior and Improving Reliability in Complex Information Systems", keynote presentation at the 4th PI meeting for the DARPA Mission-Resilient Cloud program, Park Ridge, NJ, May 8-10, 2013.

- K. Mills, J. Filliben and C. Dabrowski, "Using Genetic Algorithms to Search for Failure Scenarios", poster presentation at Cloud Computing & Big Data Forum & Workshop, NIST, January 15-17, 2013.

- K. Mills, "Predicting the Unpredictable in Complex Information Systems", keynote presentation at the IEEE/ACM 5th International Conference on Utility & Cloud Computing, Chicago, Illinois, November 5-8, 2012.

- K. Mills, J. Filliben and C. Dabrowski, "Predicting Global Failure Regimes in Complex Information Systems", presentation at the DoE COMBINE Workshop, Washington, DC, September 11-12, 2012.

- C. Dabrowski, J. Filliben, K. Mills, S. Ressler and B. Rust, "Mitigating Global Failure Regimes in Large Distributed Systems", poster presented at the Lawrence Livermore Workshop on Current Challenges in Computing 2012: Network Science, Napa, CA, August 28-29, 2012.

- A. Haines, "Determining Important Control Parameters of a Genetic Algorithm", Summer University Research Fellow Plenary Presentation, NIST, Gaithersburg, MD, August 7, 2012.

- C. Dabrowski, J. Filliben and K. Mills, "Predicting Global Failure Regimes in Complex Information Networks", Santa Fe Institute Workshop on Measurement of Complex Information Networks, Mitre, McLean, Virginia, July 12, 2012.

- C. Dabrowski, J. Filliben and K. Mills, "Predicting Global Failure Regimes in Complex Information Systems", NetONets 2012, Systemic Risk and Infrastructural Interdependencies, Northwestern University, June 19, 2012.

- K. Mills, J. Filliben and C. Dabrowski, "Improving Cloud Reliability", NIST Cloud Computing Forum & Workshop V, Department of Commerce, Washington, D.C., June 5-7, 2012.

- C. Dabrowski, J. Filliben, K. Mills, S. Ressler and B. Rust, "Poster on Mitigating Global Failure Regimes in Large Distributed Systems", presented at the NIST Cloud Computing Forum & Workshop V, Department of Commerce, Washington, D.C., June 5-7, 2012.

- K. Mills, J. Filliben and C. Dabrowski, "Comparing VM-Placement Algorithms for On-Demand Clouds", Large-Scale Networking Working Group, Arlington, VA, Feb. 14, 2012.

- C. Dabrowski and K. Mills, "VM Leakage & Orphan Control in Open-Source Clouds", IEEE CloudCom 2011, Athens, Dec. 1, 2011.

- K. Mills, J. Filliben and C. Dabrowski, "Comparing VM-Placement Algorithms for On-Demand Clouds", IEEE CloudCom 2011, Athens, Nov. 30, 2011.

- K. Mills, C. Dabrowski, J. Filliben and F. Hunt, "Posters Presented at NIST Cloud Computing Forum & Workshop IV", Gaithersburg, MD, Nov. 3-4, 2011.

- J. Filliben and K. Mills, "Comparison of Two Dimension-Reduction Methods for Network Simulation Models", Statistical Engineering Division Seminar, NIST, Gaithersburg, MD, Sept. 22, 2011.

- C. Dabrowski and F. Hunt, "Using Markov Chain and Graph Theory Concepts to Analyze Behavior in Complex Distributed Systems", 23rd European Modeling and Simulation Symposium, Rome, Sept. 13, 2011.

- K. Mills, J. Filliben, D.-Y. Cho and E. Schwartz, "Predicting Macroscopic Dynamics in Large Distributed Systems", American Society of Mechanical Engineers 2011 Conference on Pressure Vessels & Piping, Baltimore, MD, July 21, 2011.

- C. Dabrowski and F. Hunt, "Identifying Failure Scenarios in Complex Systems by Perturbing Markov Chain Models", American Society of Mechanical Engineers 2011 Conference on Pressure Vessels & Piping, Baltimore, MD, July 21, 2011.

- K. Mills, J. Filliben and C. Dabrowski, "An Efficient Sensitivity Analysis Method for Large Cloud Simulations", IEEE Cloud 2011, Washington, D.C., July 8, 2011.

- K. Mills, J. Filliben, D.-Y. Cho and E. Schwartz, "Predicting Macroscopic Dynamics in Large Distributed Systems", LSN Seminar on Complex Networks and Information Systems, Gaithersburg, Maryland, June 30, 2011.

- K. Mills, J. Filliben, C. Dabrowski and S. Ressler, "Posters Presented NIST Work on Measurement Science for Complex Systems, as Applied to Cloud Computing Systems", NIST Cloud Computing Forum & Workshop III, Gaithersburg, Maryland, April 7-8, 2011.

- K. Mills, E. Schwartz and J. Yuan, "How to Model a TCP/IP Network using only 20 Parameters", Winter Simulation Conference (WSC 2010), Baltimore, Maryland, Dec. 8, 2010.

- K. Mills and J. Filliben, "Using Sensitivity Analysis to Identify Significant Parameters in a Network Simulation", Winter Simulation Conference (WSC 2010), Baltimore, Maryland, Dec. 6, 2010.

- K. Mills and J. Filliben, "Comparing Two Dimension-Reduction Methods for Network Simulation Models", Winter Simulation Conference (WSC 2010), Baltimore, Maryland, Dec. 6, 2010.

- K. Mills, "Study of Proposed Internet Congestion Control Algorithms", Internet Congestion Control Research Group (ICCRG) of the Internet Research Task Force (IRTF) at the 77th Internet Engineering Task Force (IETF) meeting at Anaheim, California, March 24, 2010.

- K. Mills and J. Filliben, "An Efficient Sensitivity Analysis Method for Mesoscopic Network Models", Complex Systems Study Group, NIST, February 2, 2010.

- K. Mills, "Study of Proposed Internet Congestion Control Algorithms", seminar sponsored by the Computer Science Department and the C4I Center at George Mason University, Fairfax, Virginia, January 29, 2010.

- K. Mills, "How to model a TCP/IP network using only 20 parameters", Complex Systems Study Group, NIST, November 17, 2009.

- K. Mills, "Measurement Science for Complex Information Systems", invited presentation to the Internet Congestion-Control Research Group (ICC-RG) of the Internet Research Task Force (IRTF) at Tokyo, Japan, May 20, 2009.

- K. Mills, "Measurement Science for Complex Information Systems", seminar sponsored by the Computer Science Department and the C4I Center at George Mason University, Fairfax, Virginia, March 27, 2009.

- K. Mills, "Measurement Science for Complex Information Systems", AOL Network Architecture Group, Dulles, Virginia, March 18, 2009.

- K. Mills, "Measurement Science for Complex Information Systems", NITRD Large-Scale Networking Working Group, Ballston, Virginia, March 10, 2009.

- K. Mills, "Progress Report on Measurement Science for Complex Information Systems", Complex Systems Lecture Series, NIST Information Technology Laboratory, Gaithersburg, Maryland, January 27, 2009.

- J. Filliben, "Sensitivity Analysis Methodology for a Complex System Computational Model", 39th Symposium on the Interface: Computing Science and Statistics, Philadelphia, PA, May 26, 2007.

- C. Dabrowski and K. Mills, "A Program of Work for Understanding Emergent Behavior in Global Grid Systems", Global Grid Forum 16, Athens, Greece, February 13, 2006.

Associated Product(s)

Related Publications

- J. Xie, Y. Wan, K. Mills, J. Filliben, Y. Lei and Z. Lin, "M-PCM-OFFD: An effective output statistics estimation method for systems of high dimensional uncertainties subject to low-order parameter interactions", Mathematics and Computers in Simulation, 159 (2019) 93-118. https://doi.org/10.1016/j.matcom.2018.10.010

- V.S. Mai, A. Battou and K. Mills, "Distributed Algorithm for Suppressing Epidemic Spread in Networks", (to appear) in IEEE Control Systems Letters, 2(3), 2018.

- C. Dabrowski and K. Mills, "Evaluating Predictors of Congestion Collapse in Communication Networks", Proceedings of the 2018 IEEE/IFIP Network Operations and Management Symposium, April 24-26, 2018.

- J. Xie, C. He, Y. Wan, K. Mills, C. Dabrowski, "A Layered and Aggregated Queuing Network Simulator for Detection of Abnormalities", Proceedings of the Winter Simulation Conference, December 2017.

- J. Xie, Y. Wan, K. Mills, J. J. Filliben and F. L. Lewis, "A Scalable Sampling Method to High-dimensional Uncertainties for Optimal and Reinforcement Learning-based Controls", IEEE Control Systems Letters, 1(1), July 2017, pp. 98-103. Online ISSN 2475-1456. DOI: 10.1109/LCSYS.2017.2708598

- C. Dabrowski and K. Mills, "Using Realistic Factors to Simulate Catastrophic Congestion Events in a Network", Computer Communications 112 (2017) 93-108. DOI: 10.1016/j.comcom.2017.08.006

- K. Mills and C. Dabrowski, "The Need for Realism when Simulating Network Congestion", Proceedings of the Spring Simulation Multi-Conference, Pasadena, CA, April 2016, pp. 228-235.

- C. Dabrowski and K. Mills, "The Influence of Realism on Congestion in Network Simulations", NIST Technical Note 1905, January 2016, 62 pages. http://dx.doi.org/10.6028/NIST.TN.1905

- C. Dabrowski, "Catastrophic Event Phenomena in Communication Networks: A Survey", Computer Science Review, Vol. 18, November 2015, pp. 10-45. doi:10.1016/j.cosrev.2015.10.001

- K. Mills, J. Filliben, and C. Dabrowski, "Assessing Effects of Asymmetries, Dynamics, and Failures on a Cloud Simulator", NIST Technical Note 1857, March 2015, 64 pages, http://dx.doi.org/10.6028/NIST.TN.1857.

- K. Mills, J. Filliben, and A. Haines, "Determining Relative Importance and Effective Settings for Genetic Algorithm Control Parameters", Evolutionary Computation, MIT Press, 23:2, published online Sept. 25, 2014. (doi: 10.1162/EVCO_a_00137)

- J. Xie, Y. Wan, Y. Zhou, K. Mills, J. Filliben, and Y. Lei, "Effective and Scalable Uncertainty Evaluation for Large-Scale Complex System Applications", Proceedings of the 2014 Winter Simulation Conference, pp. 733-744.

- K. Mills, C. Dabrowski, J. Filliben and S. Ressler, "Combining Genetic Algorithms and Simulation to Search for Failure Scenarios in System Models", Proceedings of the 5th International Conference on Advances in Simulation, Venice, Italy, October 2013.

- K. Mills, et al., "Workshop Report on Computational Modeling of Big Networks (COMBINE)", C. Dovrolis, D. Nicol, and G. Riley (eds.), Department of Energy, Office of Science, December 2012.

- K. Mills, C. Dabrowski and D. Santay, "Practical Issues When Implementing a Distributed Population of Cloud-Computing Simulators Controlled by a Genetic Algorithm", NIST Publication # 912474, November 28, 2012.

- C. Dabrowski, J. Filliben and K. Mills, "Predicting Global Failure Regimes in Complex Information Systems", NetONets 2012: Networks of Networks: Systemic Risk and Infrastructural Interdependencies, Northwestern University, June 19, 2012.

- C. Dabrowski and K. Mills, "VM Leakage and Orphan Control in Open-Source Clouds", Proceedings of IEEE CloudCom 2011, Nov. 29-Dec. 1, Athens, Greece, pp. 554-559.

- K. Mills, J. Filliben and C. Dabrowski, "Comparing VM-Placement Algorithms for On-Demand Clouds", Proceedings of IEEE CloudCom 2011, Nov. 29-Dec. 1, Athens, Greece, pp. 91-98.

- C. Dabrowski and K. Mills, "Extended Version of VM Leakage and Orphan Control in Open-Source Clouds", NIST Publication 909325; an abbreviated version of this paper was published in the Proceedings of IEEE CloudCom 2011, Nov. 29-Dec. 1, Athens, Greece.

- K. Mills and J. Filliben, "Comparison of Two Dimension-Reduction Methods for Network Simulation Models", Journal of Research of the National Institute of Standards and Technology, 116-5, September-October 2011, pp. 771-783.

- C. Dabrowski and F. Hunt, "Using Markov Chains and Graph Theory Concepts to Analyze Behavior in Complex Distributed Systems", Proceedings of the 23rd European Modeling and Simulation Symposium, September 12-14, 2011. Rome, Italy. In press.

- K. Mills, J. Filliben, D-Y. Cho and E. Schwartz, "Predicting Macroscopic Dynamics in Large Distributed Systems", Proceedings of ASME 2011 Conference on Pressure Vessels & Piping, Baltimore, MD, July 17-22, 2011.

- C. Dabrowski and F. Hunt, "Identifying Failure Scenarios in Complex Systems by Perturbing Markov Chain Models", Proceedings of ASME 2011 Conference on Pressure Vessels & Piping, Baltimore, MD, July 17-22, 2011.

- K. Mills, J. Filliben and C. Dabrowski, "An Efficient Sensitivity Analysis Method for Large Cloud Simulations", Proceedings of the 4th International Cloud Computing Conference, IEEE, Washington, D.C., July 5-9, 2011. http://dx.doi.org/10.1109/CLOUD.2011.50

- F. Hunt, K. Morrison and C. Dabrowski, "Spectral Based Methods that Streamline the Search for Failure Scenarios in Large-Scale Distributed Systems", Proceedings of the IASTED International Conference in Applied Simulation and Modeling, June 22-24, 2011.

- C. Dabrowski, F. Hunt and K. Morrison, "Improving the Efficiency of Markov Chain Analysis of Complex Distributed Systems", NIST Inter-Agency Report 7744, November 2010.

- K. Mills, E. Schwartz and J. Yuan, "How to Model a TCP/IP Network using only 20 Parameters", Proceedings of the 2010 Winter Simulation Conference (WSC 2010), Dec. 5-8, Baltimore, MD. http://dx.doi.org/10.1109/WSC.2010.5679106

- K. Mills and J. Filliben, "An Efficient Sensitivity Analysis Method for Network Simulation Models", presented at the 2010 Winter Simulation Conference (WSC 2010), Dec. 5-8, Baltimore, MD.

- K. Mills, J. Filliben, D. Cho, E. Schwartz and D. Genin, Study of Proposed Internet Congestion Control Mechanisms, NIST Special Publication 500-282, May 2010, 534 pages.

- D. Genin and V. Marbukh, "Bursty Fluid Approximation of TCP for Modeling Internet Congestion at the Flow Level", Proceedings of the 47th Annual Allerton Conference on Communication, Control, and Computing, Sept. 30-Oct. 2, 2009.

- V. Marbukh, "From Network Microeconomics to Network Infrastructure Emergence", Proceedings of the 1st IEEE International Workshop on Network Science for Communication Networks (NetSciCom 2009), held in conjunction with IEEE Infocom 2009, Rio de Janeiro, Brazil, April 24, 2009.

- F. Hunt and V. Marbukh, "Measuring the Utility/Path Diversity Tradeoff in Multipath Protocols", Proceedings of the 4th International Conference on Performance Evaluation Methodologies and Tools, Pisa, Italy, October 20-22, 2009.

- C. Dabrowski, "Reliability in grid computing systems", in Concurrency and Computation: Practice and Experience, John Wiley & Sons, 21/8, pp. 927-959, 2009.

- C. Dabrowski and F. Hunt, "Using Markov Chain Analysis to Study Dynamic Behaviour in Large-Scale Grid Systems", Proceedings of the 7th Australasian Symposium on Grid Computing and e-Research, Wellington, New Zealand, Jan. 2009.

- C. Dabrowski and F. Hunt, Markov Chain Analysis for Large-Scale Grid Systems, NIST Inter-Agency Report 7566, January 2009.

- D. Genin and V. Marbukh, "Toward Understanding of Metastability in Cellular CDMA Networks: Emergence and Implications for Performance", GLOBECOM 2008, New Orleans, Nov. 30-Dec. 4, 2008.

- K. Mills and C. Dabrowski, "Can Economics-based Resource Allocation Prove Effective in a Computation Marketplace?", Journal of Grid Computing, 6/3, September 2008, pp. 291-311.

- F. Hunt and V. Marbukh, "Dynamic Routing and Congestion Control Through Random Assignment of Routes", Proceedings of the 5th International Conference on Cybernetics and Information Technologies, Systems and Applications: CITSA 2008, Orlando FL, July 2008. (BEST PAPER)

- V. Marbukh, "Can TCP Metastability Explain Cascading Failures and Justify Flow Admission Control in the Internet?", Proceedings of the 15th International Conference on Telecommunications, Saint Petersburg, Russia, June 16-19, 2008.

- V. Marbukh and K. Mills, "Demand Pricing & Resource Allocation in Market-based Compute Grids: A Model and Initial Results", Proceedings of the 7th International Conference on Networking, IEEE, April 2008, pp. 752-757.

- V. Marbukh and S. Klink, "Decentralized Control of Large-Scale Networks as a Game with Local Interactions: Cross-Layer TCP/IP Optimization", 2nd International Conference on Performance Evaluation Methodologies and Tools, Nantes, France, October 23-25, 2007.

- V. Marbukh, "Utility Maximization for Resolving Throughput/Reliability Trade-offs in an Unreliable Network with Multipath Routing", 2nd International Conference on Performance Evaluation Methodologies and Tools, Nantes, France, October 23-25, 2007.

- V. Marbukh, "Fair Bandwidth Sharing under Flows Arrivals/Departures: Effect of Retransmissions on Stability and Performance", ACM Sigmetrics Performance Evaluation Review, Vol. 35, No. 2, pp. 6-8. http://dx.doi.org/10.1145/1330555.1330560

- V. Marbukh, "Metastability of fair bandwidth sharing under fluctuating demand and necessity of admission control", IEE Electronics Letters, Vol. 43, No. 19, pp. 1051-1053.

- V. Marbukh and K. Mills, "On Maximizing Provider Revenue in Market-Based Compute Grids", Proceedings of the 3rd International Conference on Networking and Services, Athens, Greece, June 19-25, 2007.

- K. Mills, "A Brief Survey of Self-Organization in Wireless Sensor Networks", Wireless Communications and Mobile Computing, Wiley Interscience, 7/7, September 2007, pp. 823-834.

- K. Mills and C. Dabrowski, "Investigating Global Behavior in Computing Grids", Self-Organizing Systems, Lecture Notes in Computer Science, Volume 4124, ISBN 978-3-540-37658-3, pp. 120-136.

- K. Sriram, D. Montgomery, O. Borchert, O. Kim and D. R. Kuhn, "Study of BGP Peering Session Attacks and Their Impacts on Routing Performance", IEEE Journal on Selected Areas in Communications, 24/10, October 2006, pp. 1901-1915.

- J. Yuan and K. Mills, "Simulating Timescale Dynamics of Network Traffic Using Homogeneous Modeling", The NIST Journal of Research, 111/3, May-June 2006, pp. 227-242.

- J. Yuan and K. Mills, "Monitoring the Macroscopic Effect of DDoS Flooding Attacks", IEEE Transactions on Dependable and Secure Computing, 2/4, October-December 2005, pp. 324-335.

- J. Yuan and K. Mills, "A Cross-Correlation Based Method for Spatial-Temporal Traffic Analysis", Performance Evaluation, 61/2-3, pp. 163-180.

- J. Yuan and K. Mills, "Macroscopic Dynamics in Large-Scale Data Networks", chapter 8 in Complex Dynamics in Communication Networks, edited by Ljupco Kocarev and Gabor Vattay, published by Springer, 2005, ISBN 3-540-24305-4, pp. 191-212.

- J. Yuan and K. Mills, "Exploring Collective Dynamics in Communication Networks", The NIST Journal of Research, 107/2, March-April 2002, pp. 179-191.

- J. Heidemann, K. Mills and S. Kumar, "Expanding Confidence in Network Simulation", IEEE Network Magazine, 15/5, September/October 2001, pp. 58-63.

Related Software Tools

- SLX software for simulated computing grid used in "Investigating Global Behavior in Computing Grids". (see http://www.wolverinesoftware.com/ for information on SLX)

- Matlab MFiles used in "Simulating Timescale Dynamics of Network Traffic Using Homogeneous Modeling". (see http://www.mathworks.com/ for information on Matlab)

- Matlab MFiles used in "Monitoring the Macroscopic Effect of DDoS Flooding Attacks".

- Matlab MFiles used in "A Cross-Correlation Based Method for Spatial-Temporal Traffic Analysis".

- Matlab MFiles used in "Macroscopic Dynamics in Large-Scale Data Networks".

- Matlab MFiles used in "Exploring Collective Dynamics in Communication Networks".

- MesoNet: a Medium-scale Simulation Model of a Router-Level Internet-like Network

- EconoGrid: a detailed Simulation Model of a Standards-based Grid Compute Economy

- Flexi-Cluster: a Simulator for a Single Compute Cluster

- MesoNetHS: a Medium-scale Network Simulation with TCP Congestion-Control Algorithms for High Speed Networks, including BIC, Compound TCP, FAST, H-TCP, HS-TCP and Scalable TCP

- Divisa: software for interactive visualization of multidimensional data

- Markov Model Rewriter: a Discrete-Time Markov Chain simulation and perturbation system

- Koala: a medium-scale discrete-event simulation of Infrastructure Clouds, including various algorithms for allocating virtual machines to clusters and nodes.

Related Demonstrations

- Animation (176 Mbyte QuickTime movie in zip archive) of vCore, Memory and Disk Space usage and pCore, Disk Count and NIC Count load from a Koala Simulation (Oct. 22, 2010) of a 20-cluster by 200-node (i.e., 4,000-node) Infrastructure Cloud evolving over 1,200 hours.

- Visualization (10 Mbyte .avi in zip archive) from a Simulation (May 23, 2007) of an Abilene-style Network

- Visualization (14.4 Mbyte .avi in zip archive) from a Simulation (July 31, 2007) of a Network Running CTCP

Other Information

- C. Dabrowski & K. Mills, FxNS Graphs and Cluster Analyses, October 2015.

- P. Gough, Interactive Multidimensional Visualization of FxNS Simulation Data, October 2015.

- K. Mills, Introduction of Melanie Mitchell, who spoke about her book Complexity: A Guided Tour, on May 4, 2012 at NIST.

- Enlarged Figures & Tables for IEEE CloudCom Paper 36

- References for Proposal called "Predicting Catastrophic Behavior in Complex Information Systems"