Meter

Whether it’s the interminable distance to Grandma’s house, a span of cloth, the number of yards to the goal line or the space between the unfathomably small transistors on a computer chip, length is one of the most familiar quantities we measure.

People have come up with all sorts of inventive ways of measuring length. The most intuitive are right at our fingertips. That is, they are based upon the human body: the foot, the hand, the fingers or the length of an arm or a stride.

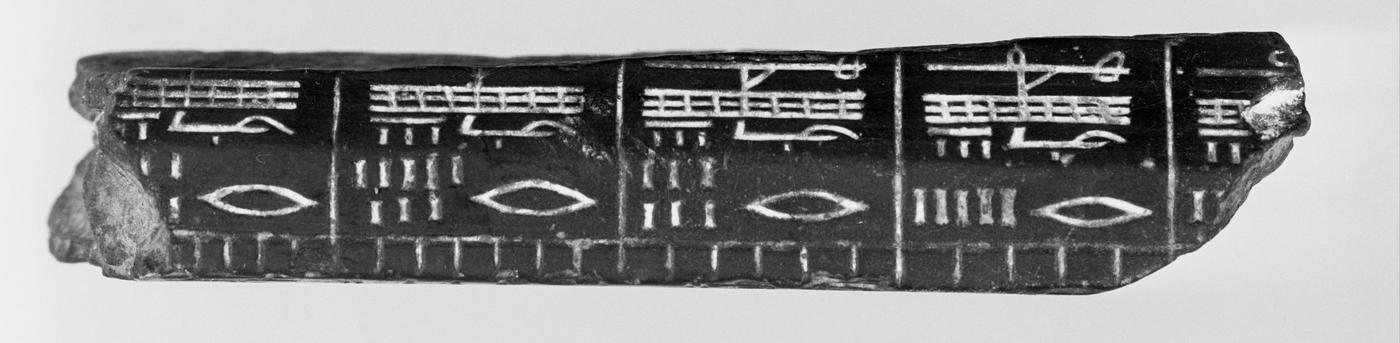

In ancient Mesopotamia and Egypt, one of the first standard measures of length used was the cubit. In Egypt, the royal cubit, which was used to build the most important structures, was based on the length of the pharaoh’s arm from elbow to the end of the middle finger plus the span of his hand. Because of its great importance, the royal cubit was standardized using rods made from granite. These granite cubits were further subdivided into shorter lengths reminiscent of centimeters and millimeters.

Later length measurements used by the Romans (who had taken them from the Greeks, who had taken them from the Babylonians and Egyptians) and passed on into Europe generally were based on the length of the human foot or walking and multiples and subdivisions of that. For example, the pace—one left step plus one right step—is approximately a meter or yard. (On the other hand, the yard did not derive from a pace but from, among other things, the length of King Henry I of England’s outstretched arm.) Mille passus in Latin, or 1,000 paces, is where the English word “mile” comes from. However, the Roman mile was not quite as long as the modern version.

The Romans and other cultures from around the world such as those in India and China standardized their units, but length measurements in Europe were still largely based on variable things until the 18th century. For instance, in England, for the purposes of commerce, the inch was conceived as the length of three barleycorns laid end to end.

A unit of length for measuring land, a rod, was the length of 16 randomly selected men’s feet, and multiples of it defined an acre.

In some places, the area of farmland was even measured in time, such as how much land a man, or a man with an ox, could plow in a day. This measure further depended upon the crop being grown: For example, an acre of wheat was a different size than an acre of barley. This was fine so long as accuracy and precision were not an issue. You could build your own house using such measures, and plots of land could be roughly surveyed, but if you wanted to buy or sell anything based on length or area, collect proper taxes and duties, build more advanced weapons and machines with interchangeable parts, or perform any kind of scientific investigation, you needed a universal standard.

The invention of the metric system at the end of the 18th century in revolutionary France was the result of a lengthy effort to establish such a universal system of measurement, one that wasn’t based on bodily dimensions that varied from person to person or from place to place. Rather, the French sought to create a system that would endure “for all times, for all peoples.”

To do this, the French Academy of Sciences established a council of preeminent scientists and mathematicians, Jean-Charles de Borda, Joseph-Louis Lagrange, Pierre-Simon Laplace, Gaspard Monge and Nicolas de Condorcet, to study the problem in 1790. A year later, they emerged with a set of recommendations. The new system would be a decimal system, that is, based on 10 and its powers. The measure of distance, the meter (derived from the Greek word metron, meaning “a measure”), would be 1/10,000,000 of the distance between the North Pole and the equator, with that line passing through Paris, of course. The measure of volume, the liter, would be the volume of a cube of distilled water whose dimensions were 1/1,000 of a cubic meter. The unit of mass (or more practically, weight), the kilogram, would be the weight of a liter of distilled water in vacuum (completely empty space).

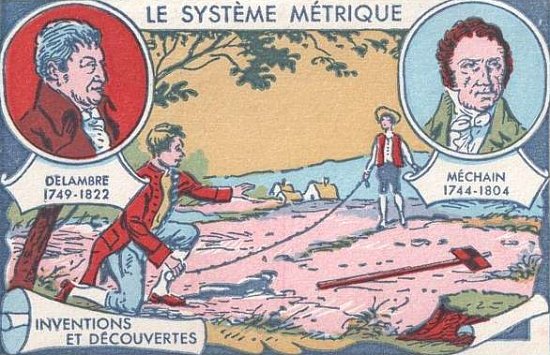

In 1792, astronomers Pierre Méchain and Jean-Baptiste Delambre set out to measure the meter by surveying the distance between Dunkirk, France, and Barcelona, Spain. After seven or so years of effort, they arrived at their final measure and submitted it to the academy, which embodied the prototype meter as a bar of platinum.

It was later discovered that scientists made errors in calculating the curvature of the Earth, and as a result the original prototype meter was 0.2 millimeters shorter than one ten-millionth of the actual distance between the North Pole and the equator. While this doesn’t seem like a big discrepancy, it’s the kind of thing that keeps measurement scientists up at night. Nonetheless, it was decided that the meter would remain as realized in the platinum bar. Subsequent definitions of the meter have since been chosen to hew as closely as possible to the length of that first meter bar, despite its shortcomings.
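To get a feel for what a 0.2 millimeter shortfall means at planetary scale, here is a minimal sketch of the arithmetic. It assumes the intended definition (10,000,000 meters from pole to equator) and treats the quoted 0.2 millimeters as an exact figure, which it is not; the names are illustrative only.

```python
# Intended definition: 10,000,000 meters from the North Pole to the equator.
METERS_PER_QUADRANT = 10_000_000

# Shortfall of the prototype bar quoted in the text (0.2 mm), in micrometers.
# Assumption: the rounded figure is treated as exact for this estimate.
SHORTFALL_UM_PER_METER = 200


def quadrant_excess_m() -> int:
    """Distance in meters left uncovered after laying 10,000,000 short bars."""
    total_um = METERS_PER_QUADRANT * SHORTFALL_UM_PER_METER
    return total_um // 1_000_000  # micrometers to meters


print(quadrant_excess_m())  # 2000
```

In other words, measured with the prototype bar, the pole-to-equator distance comes out roughly 2 kilometers longer than the 10,000 kilometers the definition intended.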

As time passed, more and more European countries adopted the French meter as their length standard. However, while the copies of the meter bar were meant to be exact, there was no way to verify this. In 1875, the Treaty of the Meter, signed by 17 countries including the U.S., established the General Conference on Weights and Measures (Conférence générale des poids et mesures, CGPM) as a formal diplomatic organization responsible for the maintenance of an international system of units in harmony with the advances in science and industry.

An intergovernmental organization, the International Bureau of Weights and Measures (Bureau international des poids et mesures, BIPM), was also established at that time. Located just outside of Paris in Sèvres, France, the BIPM serves as the focal point through which its member states act on matters of metrological significance. It is the ultimate arbiter of the International System of Units (SI, Système international d'unités) and the repository of the physical measurement standards. The kilogram was the last of the artifact-based measurement standards in the SI. (On May 20, 2019, it was officially replaced with a new definition based on constants of nature.)

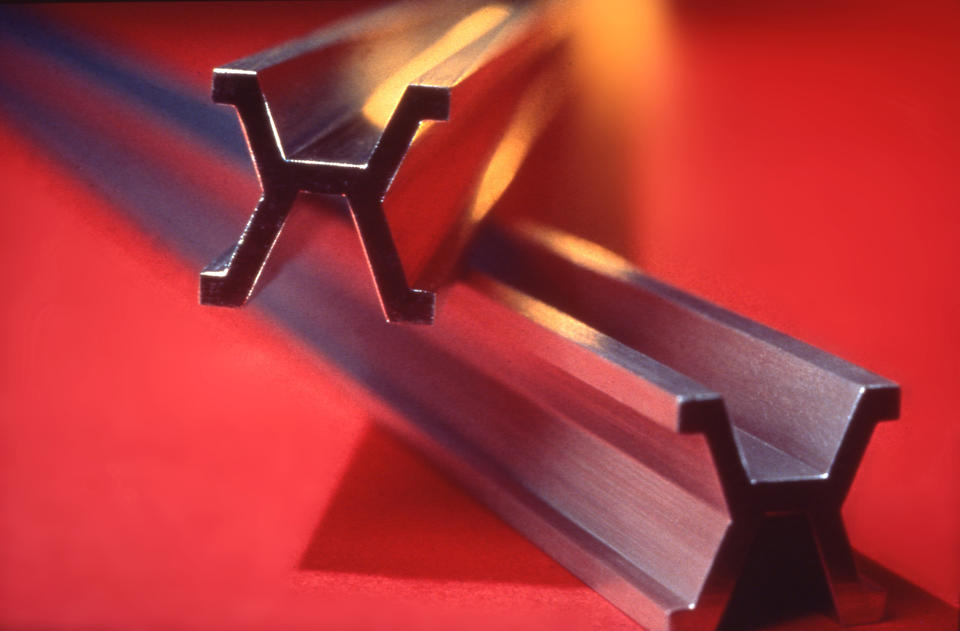

After that first meeting, the BIPM ordered a new prototype and 30 copies were given to the member states. This new prototype would be made of platinum and iridium, which was significantly more durable than platinum alone. The bar would also no longer be flat but have an X-shaped cross section to better resist distortions that could be introduced by flexing during comparisons with other meter bars. The new prototype would also not be an “end” standard, whereby the meter was defined by the ends of the bar itself. Instead, the bar would be over a meter long and the meter would be defined as the distance between two lines inscribed on its surface. Easier to create than an end standard, these inscriptions also enabled the measurement of the meter to survive if the ends of the bar got damaged. Official measurements of the prototype meter would occur at standard atmospheric pressure at the melting point of ice.

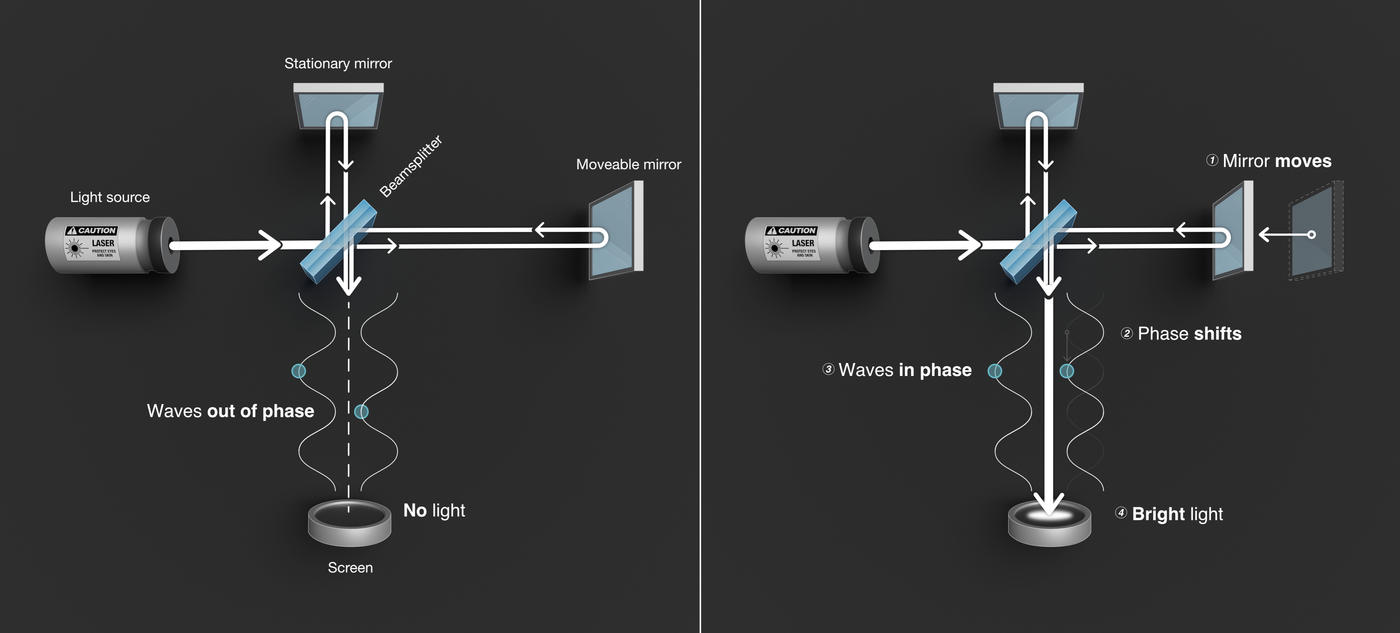

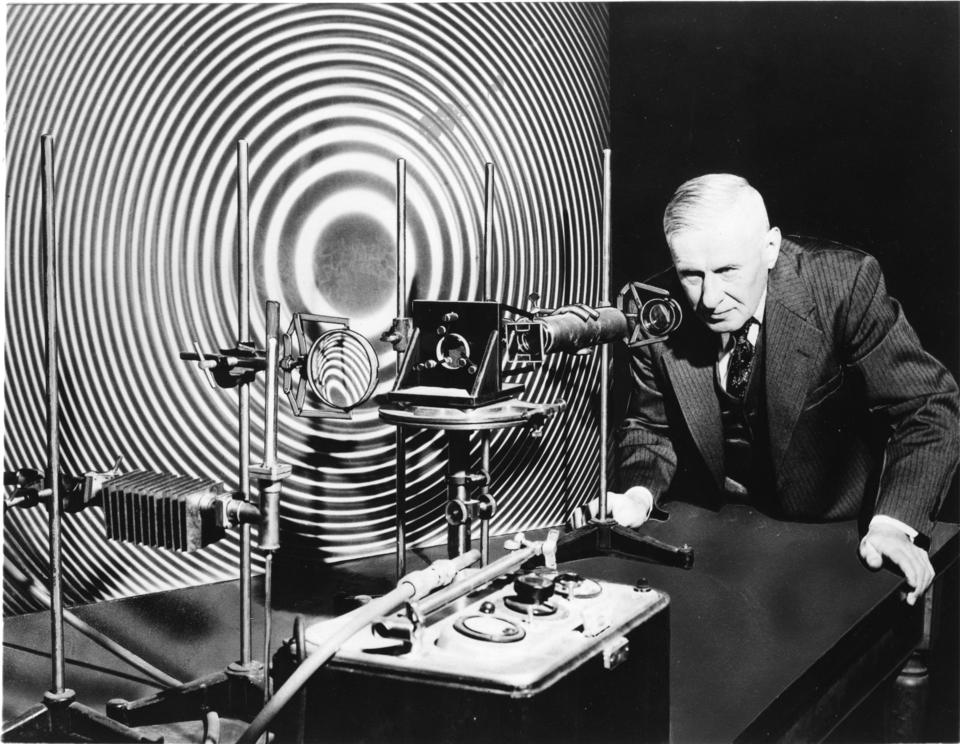

And this was how it remained until 1927, when precision measurement of the meter could make a quantum leap thanks to advancements made in a 40-year-old technique known as interferometry. In this technique, waves of light can be manipulated in such a way that they combine or “interfere” with one another, enabling precise measurements of the length of the waves—the distance between successive peaks.

It was in 1927 that NIST (then known as the National Bureau of Standards) advocated for the interference patterns of energized cadmium atoms to be made a practical standard of length. This was useful because international measurement artifacts such as meter bars could not be everywhere at once; with proper equipment, however, scientists anywhere could measure the meter with cadmium. Copies of the artifacts, exquisite as they might be, are not as accurate as the real thing, and neither an artifact nor its copies are suited for every measurement one might want to make. To cite one real-world example, gage blocks are length standards commonly used in machining. Because of the extremely fine work demanded of machinists, their calibration standards must be finely crafted as well. Using cadmium (and krypton) wavelengths, gage blocks could be certified as accurate to within 0.000001 inch per inch (1 part per million), three times closer than previously.

In the mid-1940s, nuclear physicists aimed neutrons at gold to transform the atoms into mercury. NIST physicist William Meggers noted that aiming radio waves at this form of mercury, known as mercury-198, would produce green light with a well-defined wavelength. In 1945, Meggers procured a small amount of the mercury-198 and started experimenting with it.

Applying interferometry techniques to mercury-198, he came up three years later with a precise, reproducible and convenient way of defining the meter.

“In all probability the brilliant green mercury line will be the wave to be used as the ultimate standard of length,” he wrote in his papers.

Meggers measured the wavelength of the green light from mercury: 546.1 nanometers, or billionths of a meter. A meter would be defined as a precise number of multiples of this wavelength.

In 1951, NIST distributed 13 “Meggers lamps” to scientific institutions and industry labs. The agency sought to further increase the precision of its technique for redefining the meter. However, funding to do this was not immediately available, and the project could not be completed until 1959.

In the end, mercury lost out to krypton—the atomic element for which Superman’s home planet was named. Originally proposed as the atom of choice by Physikalisch-Technische Bundesanstalt (PTB), the national metrology institute of Germany, the krypton-86 isotope was more widely available in Europe and was able to provide higher precision in the laboratory measurements at the time.

So, in 1960, the 11th CGPM agreed to a new definition of the meter as the “length equal to 1,650,763.73 wavelengths in vacuum of the radiation corresponding to the transition between the levels 2p10 and 5d5 of the krypton-86 atom.” In other words, when electrons of a common form of krypton make a specific jump in energy, they release that energy in the form of reddish-orange light with a wavelength of 605.8 nanometers. Add up 1,650,763.73 of those wavelengths and you’ve got a meter.
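The link between the wavelength count in the 1960 definition and the 605.8-nanometer figure is a single division. The sketch below shows it; the function names are illustrative and not taken from any standard library.

```python
# Number of krypton-86 wavelengths in one meter, per the 1960 definition.
WAVELENGTHS_PER_METER = 1_650_763.73


def krypton_wavelength_nm() -> float:
    """Wavelength implied by the 1960 definition, in nanometers."""
    return 1.0 / WAVELENGTHS_PER_METER * 1e9


def length_in_meters(wavelength_count: float) -> float:
    """Length spanned by a given number of krypton-86 wavelengths."""
    return wavelength_count / WAVELENGTHS_PER_METER


print(round(krypton_wavelength_nm(), 1))  # 605.8
print(length_in_meters(1_650_763.73))     # 1.0
```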

But the krypton standard was not to endure for too long. (Sorry, Superman.) That’s because NIST scientists quickly developed another superhero-like power: the ability to reliably and precisely measure the fastest speed in the universe, namely, the speed of light in vacuum.

The light with which we are most familiar, the visible kind, is only a small part of the electromagnetic spectrum, which runs from radio waves to gamma rays. So, when we talk about the speed of light, we’re talking about the speed of all electromagnetic radiation including visible light.

Because light has an incredibly fast but ultimately finite speed, if that speed is known, then distances can be calculated using the straightforward formula: Distance is speed multiplied by time. This is a great way to measure the distance to satellites and other spacecraft, the Moon, planets and, with some additional astronomical techniques, even more remote celestial objects. The speed of light is also the backbone of the GPS network, which determines your position by measuring the time of flight of radio signals between atomic clock-equipped satellites and your smartphone or other device. And knowing the speed of light is integral to another closely related technology called laser ranging, a hyperaccurate kind of radar that can be used to position satellites and measure and monitor the Earth’s surface.
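As a rough illustration of "distance is speed multiplied by time," the sketch below converts a light signal's travel time into a distance. The speed of light is the modern defined value; the 2.56-second round trip for a laser pulse bounced off the Moon is an illustrative assumption, not a measured figure from this article, and the function names are hypothetical.

```python
# Speed of light in vacuum, in meters per second (the modern defined value).
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def one_way_distance_m(time_of_flight_s: float) -> float:
    """Distance covered by a signal that travels one way for the given time."""
    return SPEED_OF_LIGHT_M_PER_S * time_of_flight_s


def round_trip_distance_m(round_trip_time_s: float) -> float:
    """Distance to a reflector when the signal goes out and comes back."""
    return one_way_distance_m(round_trip_time_s) / 2


# Assumed round-trip time for a laser pulse reflected from the Moon.
moon_km = round_trip_distance_m(2.56) / 1000
print(f"{moon_km:,.0f} km")  # roughly 384,000 km
```

A GPS receiver uses the one-way version of the same calculation, which is why the timing has to be so good: an error of one millionth of a second corresponds to about 300 meters.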

The speed of light had for centuries remained an elusive quantity, but scientists began to really close in on it with the invention of the laser in 1960, the same year that the krypton standard was introduced. The characteristics of laser light made it an ideal tool for measuring the wavelength of light. All that was missing from the equation was a highly accurate measurement of light’s frequency, the number of wave peaks that pass through a fixed point per second. Once the frequency was known with enough accuracy, calculating the speed of light was as simple as multiplying the frequency by the wavelength.
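The relationship between frequency, wavelength and speed can be written in a couple of lines. The sketch below is only an illustration: the 633-nanometer red laser wavelength is a rounded example value, the speed of light is the modern defined value, and the function names are hypothetical.

```python
# Speed of light in vacuum, in meters per second (the modern defined value).
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def speed_from_measurements(frequency_hz: float, wavelength_m: float) -> float:
    """Speed of a wave: peaks per second times the distance between peaks."""
    return frequency_hz * wavelength_m


def frequency_from_wavelength(wavelength_m: float) -> float:
    """Frequency of light in vacuum with the given wavelength."""
    return SPEED_OF_LIGHT_M_PER_S / wavelength_m


# Example: red laser light with a wavelength of about 633 nanometers.
frequency_hz = frequency_from_wavelength(633e-9)
print(round(frequency_hz / 1e12, 1))  # 473.6 (terahertz)
```

The numbers show why frequency was the hard part: visible light oscillates hundreds of trillions of times per second, far too fast to count directly with ordinary electronics.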

Between 1969 and 1979, scientists at NIST’s laboratory in Boulder, Colorado, achieved nine world-record measurements of laser light frequency. Of note was the 1972 record measurement with a novel laser stabilized to release a specific frequency of light. The light interacted strongly with methane gas, ensuring that similar lasers would operate at the same frequency, so the experiment could be repeated. This measurement was far more reproducible than anything in the 1960-approved technique for determining the meter. Led by NIST physicists Ken Evenson, future Nobel laureate Jan Hall, and Don Jennings, it resulted in the value c=299,792,456.2 ± 1.1 meters per second, a hundredfold improvement in the accuracy of the accepted value for the speed of light.

Independent of the NIST Boulder group, Zoltan Bay and Gabriel Luther at NIST’s Gaithersburg headquarters, in collaboration with John White, a colleague from American University, had published a new value for the speed of light a few months before. The Gaithersburg group used an ingenious scheme for modulating the light from the 633 nm line of a helium-neon laser using microwaves. Using a value for the wavelength of the He-Ne red line given earlier by Christopher Sidener, Bay, Luther and White obtained a value of c=299,792,462 ± 18 meters per second. This value, while not determined with the low uncertainty level claimed a few months later by the Boulder group, was entirely consistent with their result.
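One common way to judge whether two measurements are "consistent" is to compare their difference with their combined uncertainty. The sketch below applies that check to the two values quoted above. Combining the uncertainties in quadrature assumes the two experiments' errors were independent, which is an assumption made here for illustration and not a statement about how either group analyzed its data.

```python
import math

# Values and uncertainties quoted in the text, in meters per second.
BOULDER_C, BOULDER_UNC = 299_792_456.2, 1.1
GAITHERSBURG_C, GAITHERSBURG_UNC = 299_792_462.0, 18.0


def difference_in_sigmas(a: float, unc_a: float, b: float, unc_b: float) -> float:
    """Absolute difference divided by the uncertainties combined in quadrature."""
    combined_unc = math.hypot(unc_a, unc_b)
    return abs(a - b) / combined_unc


ratio = difference_in_sigmas(BOULDER_C, BOULDER_UNC,
                             GAITHERSBURG_C, GAITHERSBURG_UNC)
print(round(ratio, 2))  # 0.32
```

A ratio well below 1 means the two results differ by much less than their combined uncertainty, which is what "entirely consistent" amounts to in practice.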

Building upon these and other advances, the meter was redefined by international agreement in 1983 as the length of the path traveled by light in a vacuum in 1/299,792,458 of a second. This definition also locked the speed of light at 299,792,458 meters per second in a vacuum. Length was now no longer an independent standard but rather was derived from the extremely accurate standard of time and a newly defined value for the speed of light made possible by the technology developed at NIST.
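Because the 1983 definition fixes the speed of light exactly, converting between a travel time and a length involves no measurement uncertainty at all, only arithmetic. The sketch below uses exact fractions to make that point; the function names are illustrative.

```python
from fractions import Fraction

# Speed of light in vacuum, fixed exactly by the 1983 definition (m/s).
SPEED_OF_LIGHT_M_PER_S = 299_792_458


def path_length_m(travel_time_s: Fraction) -> Fraction:
    """Length of the path light travels in vacuum during the given time."""
    return SPEED_OF_LIGHT_M_PER_S * travel_time_s


def travel_time_s(length_m: Fraction) -> Fraction:
    """Time light needs to travel the given length in vacuum."""
    return Fraction(length_m) / SPEED_OF_LIGHT_M_PER_S


# Light travelling for 1/299,792,458 of a second covers exactly one meter.
print(path_length_m(Fraction(1, 299_792_458)))    # 1

# Equivalently, light crosses one meter in a little over 3 nanoseconds.
print(round(float(travel_time_s(1)) * 1e9, 3))    # 3.336
```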

And thus, the meter has remained, and likely will remain for the foreseeable future, so elegantly defined in these terms.