Ampere: Introduction

The ampere (A), the SI base unit of electric current, is a familiar and indispensable quantity in everyday life. It is used to specify the flow of electricity in hair dryers (15 amps for an 1,800-watt model), extension cords (typically 1 to 20 amps), home circuit breakers (15 to 20 amps for a single line), arc welding (up to around 200 amps) and more. In daily life, we experience a wide range of current: a 60-watt-equivalent LED lamp draws a small fraction of an amp; a lightning bolt can carry 100,000 amps or more.

The ampere has been an internationally recognized unit since 1908, and has been measured with progressively better accuracy over time, most recently to a few parts in ten million.

But defining the ampere has been problematic at best. Until 2019, its official definition ― a general version of an experiment conducted by French scientist André-Marie Ampère in the 1820s ― specified a completely hypothetical situation:

The ampere is that constant current which, if maintained in two straight parallel conductors of infinite length, of negligible circular cross-section, and placed 1 meter apart in vacuum, would produce between these conductors a force equal to 2 × 10⁻⁷ newton per meter of length.

Because infinitely long wires and vacuum chambers were generally unavailable, the ampere could not be physically realized according to its own definition, though it could, with considerable difficulty, be approximated in a laboratory. Equally unsatisfactory was the fact that the amp, though an electrical quantity, was defined in mechanical terms. The newton (SI unit of force, kg·m/s²) was derived from the SI unit of mass: the kilogram stored in Sèvres, France. The mass of that physical artifact drifted over time and thus limited the accuracy of the units derived from it.
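The figure of 2 × 10⁻⁷ newton per meter in the old definition can be recovered from the textbook expression for the force between two long parallel currents, F/L = μ₀I²/(2πd). The sketch below is only an illustration under idealized assumptions (infinitely long, infinitely thin wires in vacuum); the function name `force_per_meter` is a made-up helper, and `MU_0` is the value the magnetic constant had under the pre-2019 SI.

```python
import math

# Magnetic constant (vacuum permeability) under the pre-2019 SI,
# where it was exactly 4*pi*1e-7 newton per ampere squared.
MU_0 = 4 * math.pi * 1e-7


def force_per_meter(current_a, separation_m):
    """Force per unit length (N/m) between two long parallel wires
    that each carry the same current (idealized, in vacuum)."""
    return MU_0 * current_a**2 / (2 * math.pi * separation_m)


# One ampere in each wire, wires 1 meter apart, as in the old definition.
print(force_per_meter(1.0, 1.0))
```

For 1 ampere and a 1-meter separation, the result is 2 × 10⁻⁷ N/m to within floating-point rounding, which is the force the old definition specified.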

In November 2018, however, the redefinition of the ampere was approved, along with that of three other SI base units: the kilogram (mass), kelvin (temperature) and mole (amount of substance). Since May 20, 2019, the ampere has been based on a fundamental physical constant: the elementary charge (e), which is the magnitude of the electric charge carried by a single electron (negative) or proton (positive).

The ampere is a measure of the amount of electric charge in motion per unit time, that is, electric current. But the quantity of electric charge by itself, whether in motion or not, is expressed by another SI unit, the coulomb (C). One coulomb is equal to about 6.241 × 10¹⁸ elementary charges (e). One ampere is the current in which one coulomb of charge passes a given point in 1 second.
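The relationship between amperes, coulombs and elementary charges can be checked with a few lines of arithmetic. This is a minimal sketch: `E_CHARGE` is the exact value assigned to e in the 2019 SI, and `charges_per_second` is a hypothetical helper name chosen for illustration.

```python
# Elementary charge in coulombs (exact by definition since May 20, 2019).
E_CHARGE = 1.602176634e-19


def charges_per_second(current_a):
    """Number of elementary charges passing a point each second
    for a steady current given in amperes."""
    return current_a / E_CHARGE


# A current of 1 ampere moves 1 coulomb per second.
print(f"{charges_per_second(1.0):.4e}")  # 6.2415e+18
```

The same function shows why counting electrons is hard at everyday currents: even one microampere corresponds to trillions of elementary charges per second.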

That’s why an average lightning bolt carries only around 5 coulombs of charge, even though its current may be tens of thousands of amps. The difference in those numbers arises from the fact that a lightning strike lasts only a few tens of microseconds (millionths of a second).
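A rough consistency check on the lightning figures follows from charge = current × time. The sketch below treats the current as constant for the whole strike, which is a simplification, and the 30,000-amp value is an assumed stand-in for "tens of thousands of amps"; `stroke_duration_s` is a hypothetical helper name.

```python
def stroke_duration_s(charge_c, current_a):
    """Time in seconds for a steady current to deliver a given charge: t = Q / I."""
    return charge_c / current_a


CHARGE_C = 5.0  # charge per bolt quoted in the text, in coulombs

# Illustrative currents only: 30,000 A is an assumed "tens of thousands"
# value; 100,000 A is the upper figure quoted earlier in the article.
for current_a in (30_000.0, 100_000.0):
    microseconds = stroke_duration_s(CHARGE_C, current_a) * 1e6
    print(f"{current_a:,.0f} A -> about {microseconds:.0f} microseconds")
```

Under these assumptions the durations come out between tens and a couple of hundred microseconds, so a very large current sustained for a very short time delivers only a few coulombs.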

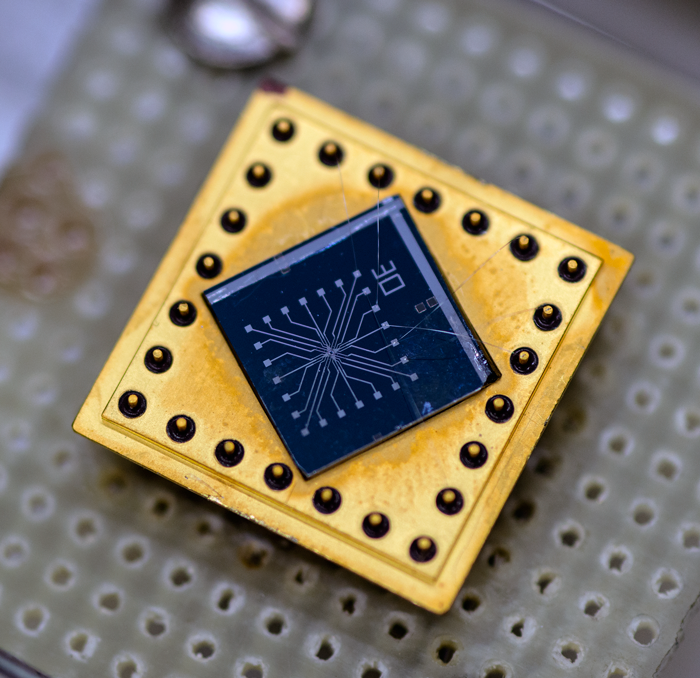

Defining the ampere solely in terms of the elementary charge e can be viewed as a good-news, bad-news outcome. On the one hand, it defines the amp clearly in terms of a single invariant of nature, which was given an exact fixed value (1.602176634 × 10⁻¹⁹ coulomb) at the time of redefinition. After that, direct measurements of the ampere became a matter of counting the transit of individual electrons in a device over time.

On the other hand, e is almost unimaginably small: about a tenth of a billionth of a billionth of the amount of charge that a current of 1 ampere moves past a given point in 1 second. Counting individual electrons as they pass a point is technically demanding, and a major challenge for scientists is to produce a current of individual electrons that can be routinely measured and used as a standard.

So, although the new definition has finally put the ampere on a more rational footing, it poses new and formidable challenges for measurement science.