Second: The Past

Archaeologists believe that ancient peoples first measured time by tracking the phases of the Moon. Starting around 20,000 years ago, people recorded these Moon phases on cave walls as well as on stone, antler and bone. Scientists speculate that early humans’ interest in time likely served both practical and ritual purposes.

Around 5,000 years ago, Egyptians built the first sundials to mark the passage of time. They divided the day according to a “duodecimal system,” with 12 hours of daylight and 12 hours of night, the same arrangement we use today.

The Egyptians later built the first water clocks. These devices measured time by regulating the flow of water out of a tank. Markings on the tank represented hours, and as the tank emptied, the time could be told by looking at the level of the remaining water. Following the same design, the Chinese built a more accurate and reliable clock that used liquid mercury in place of water to avoid freezing.

Evidence suggests that the hourglass first appeared less than a thousand years ago. It operated according to the same basic principle as the water clock: using gravity’s pull on a substance to mark the passage of time. The candle clock, another invention from the last thousand years, measured time based upon how quickly a “standard” candle of a given material and length would burn.

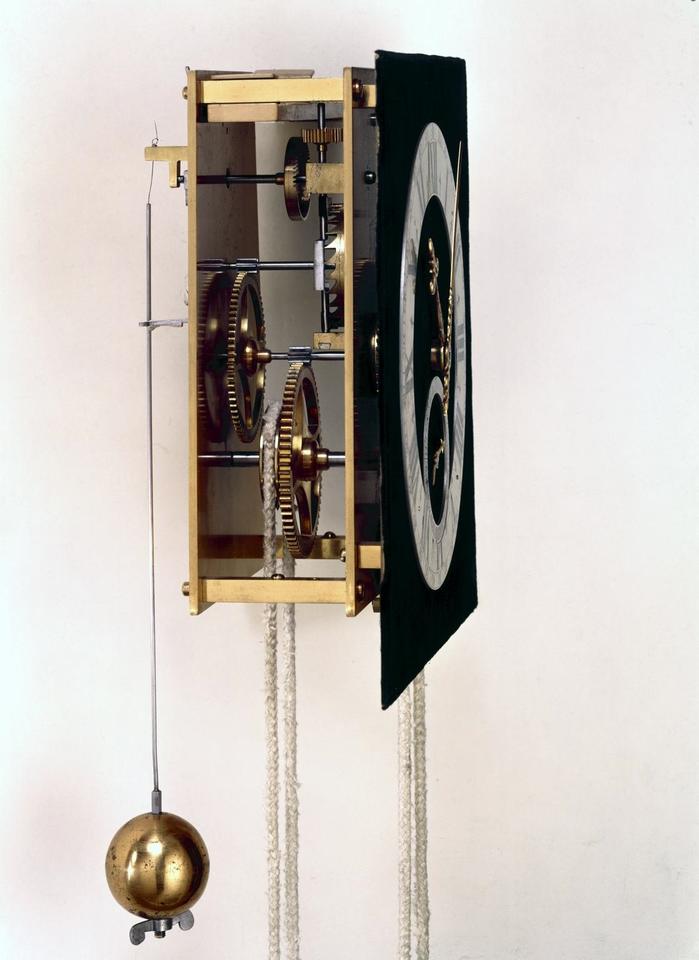

The first truly mechanical clock that resembled today’s timekeeping devices was built in Europe in the 14th century. Early mechanical clocks were accurate to only about 15 minutes per day — about the same as a sundial. Mechanical clocks’ precision and accuracy increased steadily with the development of spring-driven clocks in the 16th century and pendulum clocks in the 17th century.

As clocks improved, people started to divide time into smaller and smaller chunks. In the 1500s, clockmakers began adding “second hands” to their clocks, although the seconds these hands purported to tick off were not accurate. Dutch scientist Christiaan Huygens had the first pendulum clock built in 1657. Soon, pendulum clock makers began incorporating second hands that marked something that could be considered a unit of time.

Around this time, the Royal Society of London proposed a standard for timekeeping. It was actually a standard of length: the length of a pendulum that took one second to complete a half-swing. But a problem quickly emerged: French scientists found that the proper length depended on the clock’s altitude, because the strength of gravity, which governs the rate at which a pendulum swings, is not the same everywhere.
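To see why a pendulum-length standard is sensitive to gravity, the sketch below applies the textbook small-angle pendulum formula, T = 2π√(L/g). The formula, the sample values of g and the function name are illustrative assumptions and do not come from the Royal Society's proposal itself.

```python
import math

def seconds_pendulum_length(g: float) -> float:
    """Length (m) of an ideal pendulum whose half-swing takes one second.

    Uses the small-angle formula T = 2*pi*sqrt(L/g) with a full period
    T = 2 s, which rearranges to L = g * (T / (2*pi))**2.
    """
    full_period = 2.0  # seconds: two half-swings of one second each
    return g * (full_period / (2.0 * math.pi)) ** 2

# Assumed sample values of gravitational acceleration, in m/s^2,
# spanning roughly the range found at Earth's surface.
for label, g in [("low g", 9.780), ("standard g", 9.80665), ("high g", 9.832)]:
    print(f"{label:>10}: {seconds_pendulum_length(g) * 1000:.1f} mm")
```

Under these assumptions the required length comes out just under one meter and shifts by several millimeters across the sample values, which is enough to spoil a universal standard.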

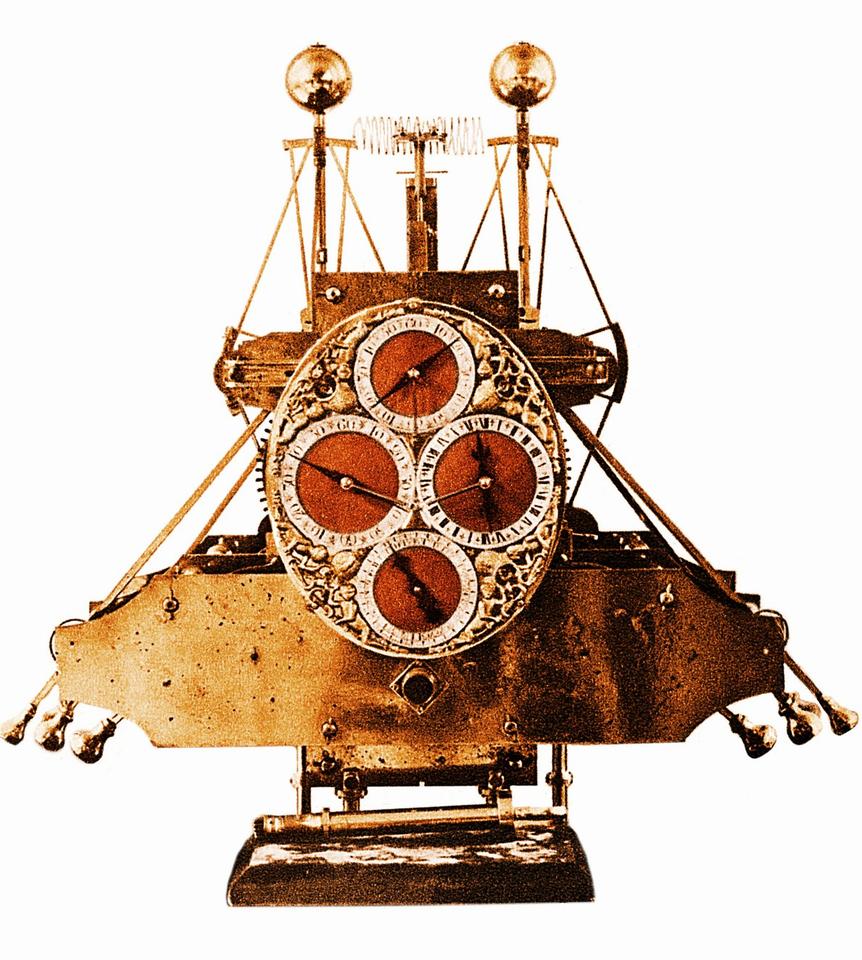

The 18th century saw the invention of the marine chronometer, which was the life’s work of English clockmaker John Harrison.

For navigation, the British Empire needed a clock durable enough to withstand travel at sea, yet accurate enough to calculate longitude, the distance traveled east or west from a defined meridian. To spur the development of such a clock, legislators passed the Longitude Act, which funded enterprising clockmakers to improve and perfect their devices. Harrison took the lion’s share of these funds during the 31 years he worked on his chronometer, which kept accurate time to within one-fifth of a second per day. Other clockmakers in Britain and abroad built even more accurate versions.

Chronometers had a very specialized function: to help ships navigate. But they also drove innovation in timekeeping. Harrison’s first chronometer was a weighty metal construction that would barely fit into a box 1.2 meters (4 feet) on a side. His last chronometers could fit inside a pocket.

By the 19th century, timepieces of all types were becoming widespread. But the mechanical clocks of this era remained highly susceptible to errors from friction and temperature changes. So in the 1870s, famed Scottish physicist James Clerk Maxwell proposed a radical new idea, which was endorsed by the eminent mathematician and physicist Lord Kelvin, the namesake of the official international unit of thermodynamic temperature. The two mused that the vibrations of atoms could serve as an invariant “natural standard.” Atoms of a specific element, they explained, were identical to one another and would never change, so they would always emit light of a constant frequency and wouldn’t be susceptible to the disturbances that affected mechanical clocks.

The atomic clock was a prescient idea, but the technology needed to make a timepiece out of an atom wasn’t even close to being invented yet. So it would be some time before the mechanical clock was dethroned.

A new timekeeper enters the scene

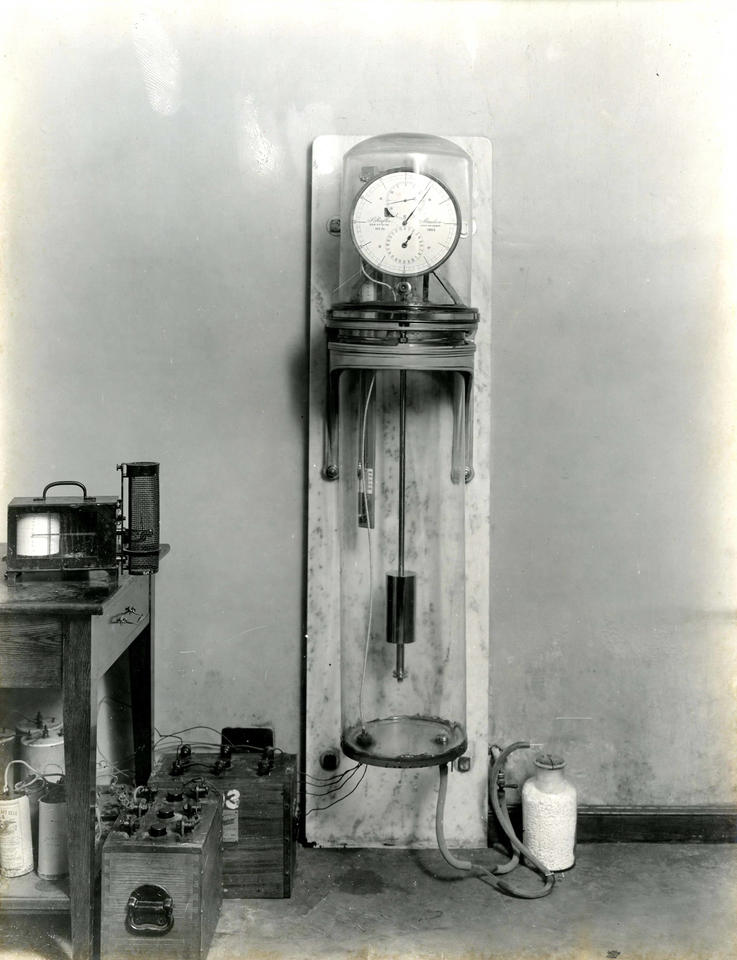

The formalization of time standards took a big step forward when the National Bureau of Standards, now the National Institute of Standards and Technology (NIST), was established in 1901. At that time, the most advanced clock was the Riefler clock, whose pendulum was encased in a vacuum chamber to reduce friction.

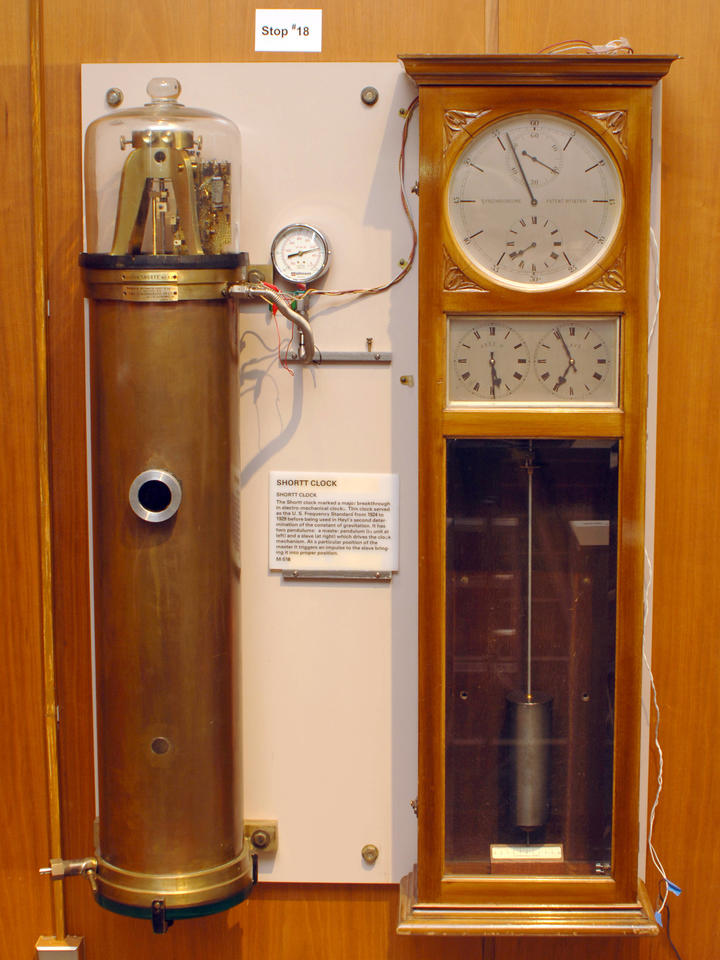

Accurate to within one hundredth of a second per day (or about 3.65 seconds per year), the Riefler clock was NIST’s primary time standard from 1904 until 1929, when it was replaced by the even more advanced pendulum-driven Shortt clock. The Shortt clock used two pendulums, one primary and one secondary. The secondary pendulum drove the clock’s mechanics and was kept synchronized to the primary pendulum with an electromagnetic linkage, leaving the primary pendulum as free as possible from friction and disturbance. The Shortt clock was accurate to within about one second per year. Even though it was remarkably accurate for a mechanical clock, it was still subject to environmental disturbances such as vibration and had to be closely monitored.

The Shortt clock was soon replaced by a very different kind of timepiece: one based on crystals of quartz. Quartz crystal oscillators were invented and made into standard electrical circuits in the early 1920s to calibrate frequencies of radio transmissions and keep stations from interfering with one another. The technology took advantage of the fact that quartz is a piezoelectric material. This means the crystal flexes when a voltage is applied across it and, conversely, produces a small voltage when flexed. In an oscillator circuit, the crystal vibrates at a very steady rate, typically tens of thousands of times per second.

After researchers at Bell Labs developed clocks based on quartz crystals, NIST adopted them as a new time standard. NIST’s quartz clocks were insulated from noise and outside vibration, making them accurate to about three seconds per year. This is a little worse than a Shortt clock, but the quartz clock’s reliability and reduced need for maintenance made it appealing for a national standard. The quartz oscillator remained the U.S. primary frequency standard until the 1960s, and it continues to power billions of wristwatches and other timekeeping devices today.
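As a rough illustration of how a vibration rate in the tens of thousands per second becomes a once-per-second tick, the sketch below repeatedly halves a crystal frequency until it reaches 1 Hz. The value 32,768 Hz (a power of two commonly used in quartz wristwatches) and the function name are assumptions for illustration; the text does not specify the frequency of NIST's quartz standards.

```python
def divide_down(frequency_hz: int, target_hz: int = 1) -> int:
    """Count the divide-by-two stages needed to reduce frequency_hz to target_hz."""
    stages = 0
    while frequency_hz > target_hz:
        if frequency_hz % 2:
            raise ValueError("frequency cannot be halved exactly")
        frequency_hz //= 2
        stages += 1
    if frequency_hz != target_hz:
        raise ValueError("target is not reachable by halving")
    return stages

# Assumed wristwatch crystal frequency: 32,768 Hz = 2**15.
print(divide_down(32_768))  # 15
```

Choosing a power-of-two frequency means a simple chain of binary dividers lands exactly on one pulse per second.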

From new clocks to a new second

While clocks had changed profoundly over the ages, the fundamental unit of time had not. In 1960, the second was still understood as it had been for centuries: as a fraction of an Earth day, the time it takes our planet to make a complete rotation. Specifically, a second was 1/86,400th of an Earth day.

Yet scientists increasingly realized that this definition made for an unsatisfactory standard, because slight variations in the Earth’s rotational speed meant the second wasn’t constant. So in 1960, the General Conference on Weights and Measures (CGPM) — a diplomatic organization that makes decisions about international standards — approved a new definition of the second based on the yearly orbit of the Earth around the Sun. This new definition was termed the “ephemeris second.” Much more precise than the previous definition, it was also extremely unwieldy: The second would now be defined as 1/31,556,925.9747 of a tropical year (the time between two summer solstices) for 1900. Just a few years later, the CGPM would declare the ephemeris second to be “inadequate for the present needs of metrology.”
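The two definitions can be compared with a little arithmetic. The sketch below, which uses only the two numbers quoted above, expresses the 1900 tropical year in 86,400-second days; the constant and function names are hypothetical.

```python
from fractions import Fraction

# Pre-1960 definition: one day contains 24 * 60 * 60 seconds.
SECONDS_PER_DAY = 24 * 60 * 60

# 1960 definition: ephemeris seconds in the tropical year for 1900.
EPHEMERIS_SECONDS_PER_TROPICAL_YEAR = Fraction("31556925.9747")

def tropical_year_in_days() -> float:
    """Length of the 1900 tropical year in days of 86,400 ephemeris seconds."""
    return float(EPHEMERIS_SECONDS_PER_TROPICAL_YEAR / SECONDS_PER_DAY)

print(SECONDS_PER_DAY)
print(f"{tropical_year_in_days():.4f} days")
```

The result is the familiar figure of roughly 365.2422 days, which shows how the ephemeris second tied the unit of time to the length of the year rather than the length of the day.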

Around this time, a new clock burst onto the scene and revolutionized timekeeping and the second itself. It finally promised to realize Maxwell’s vision of a clock based on an unvarying natural vibration.

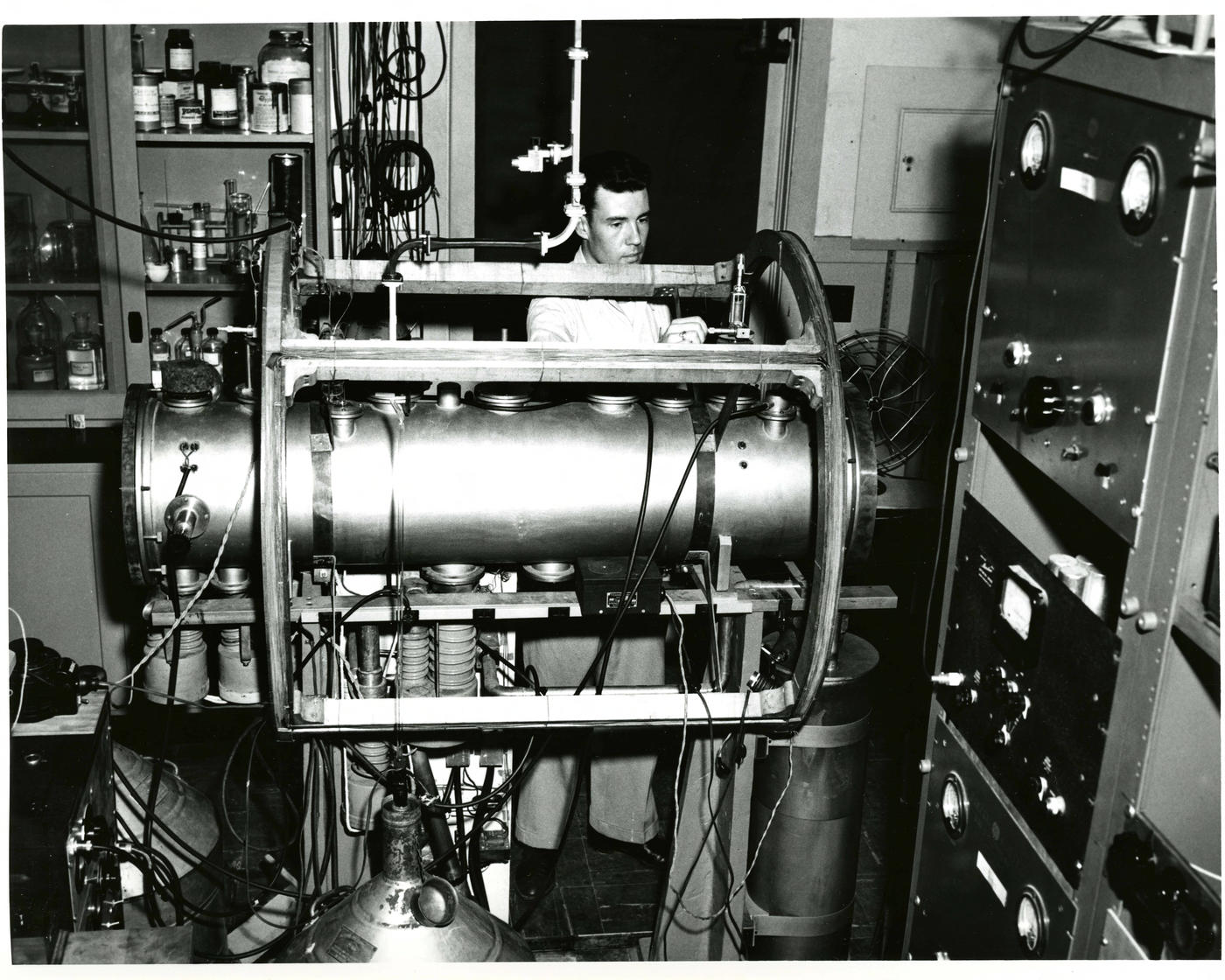

The new clock’s genesis began in 1947 in the Washington, D.C., laboratory of NIST’s Harold Lyons. Lyons’ group directed microwaves at a cloud of ammonia molecules trapped in a 30-foot-long cell. As the researchers tried different microwave frequencies (the number of wave peaks per second), they hit upon one that caused the molecules to absorb and re-emit a maximum amount of microwaves. The team then used a device that counted the microwave frequency and manually transferred it to a quartz crystal oscillator. This process is akin to how you might tune a guitar: You hear a reference pitch and tighten or loosen the string you want to tune until it plays that pitch. With this method, the team was able to define the second as the time it took the ammonia to emit roughly 23.9 billion cycles of radiation.

Lyons’ team had the clock working by August 1948; the researchers first demonstrated it publicly in 1949. The ammonia clock was crude by modern standards and ultimately proved no more accurate or precise than keeping time by measuring the Earth’s rotation. Still, it demonstrated the concept of an atomic clock.

In 1955, Louis Essen at the National Physical Laboratory in the U.K. built upon an idea proposed by Nobel Prize-winning physicist Isidor Rabi to use a beam of cesium atoms rather than a diffuse cloud of ammonia molecules. Cesium, as Rabi and others had discovered, has several properties that made it easy for physicists to work with. Essen constructed the first atomic clock stable enough to be used as a time interval standard.

NIST had also started building cesium atomic clocks in the early 1950s, and by 1959, the institute had finished two high-performance cesium atomic beam frequency standards that could be precisely compared. NBS-1 became the U.S. national standard of frequency in 1959, quickly followed by NBS-2 in 1960.

What is a “standard of frequency” and how is it related to timekeeping? The first thing to note is that atoms don’t actually tell time. Instead, they absorb and release radiation such as microwaves or visible light. This radiation has a well-defined frequency — the number of wave cycles per second. In an atomic clock, a particular frequency of radiation absorbed and released by cesium or another atom is converted into a time interval. In other words, scientists define a second as the time it takes to count a certain number of radiation cycles.

And despite what headlines seem to suggest, atomic clocks don’t run forever once they are turned on. They run very accurately for limited periods of time, then need to be recalibrated or adjusted. But to get a sense of how accurate these clocks are, it’s often helpful to imagine how long it would take one to gain or lose a second if it ran continuously.

Both NBS-1 and NBS-2 had accuracies of 10 parts per trillion or better, meaning neither clock would gain or lose a second if it ran continuously for 3,000 years. By 1966, their successor NBS-3 had achieved an accuracy 10 times better and wouldn’t have gained or lost a second in 30,000 years.
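The conversion from a fractional accuracy to "years per second of drift" is simple division. The following sketch assumes a year of 365.25 days and a constant fractional error; the function name is hypothetical.

```python
SECONDS_PER_YEAR = 365.25 * 86_400  # assumed convention: 365.25-day year

def years_to_drift_one_second(fractional_accuracy: float) -> float:
    """Years of continuous running before a clock is off by one second.

    A fractional accuracy of 1e-11 means an error of 1e-11 seconds per
    second, so one full second of error accumulates after 1 / 1e-11 seconds.
    """
    seconds_needed = 1.0 / fractional_accuracy
    return seconds_needed / SECONDS_PER_YEAR

print(f"{years_to_drift_one_second(10e-12):,.0f} years")  # 10 parts per trillion
print(f"{years_to_drift_one_second(1e-12):,.0f} years")   # 10 times better
```

Under these assumptions the results come out slightly above 3,000 and 30,000 years, matching the rounded figures quoted for NBS-1, NBS-2 and NBS-3.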

With the advent of super-accurate atomic clocks, the definition of the second was ripe for change. In 1967, the CGPM redefined the second in the International System of Units (SI) to be the duration of 9,192,631,770 cycles of microwave radiation, corresponding to what’s known as the “hyperfine energy transition” in the cesium atom.
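In code, the 1967 definition amounts to dividing a cycle count by a fixed number. The sketch below is a toy model of that bookkeeping, not a description of how a real cesium clock counts cycles; the names are hypothetical.

```python
from fractions import Fraction

# Number of cesium hyperfine-transition cycles that make up one SI second.
CESIUM_CYCLES_PER_SECOND = 9_192_631_770

def elapsed_seconds(cycles_counted: int) -> Fraction:
    """Exact elapsed time, in seconds, for a given number of counted cycles."""
    return Fraction(cycles_counted, CESIUM_CYCLES_PER_SECOND)

def cycle_period_seconds() -> float:
    """Duration of a single cycle, in seconds."""
    return 1.0 / CESIUM_CYCLES_PER_SECOND

print(elapsed_seconds(9_192_631_770))        # 1
print(f"{cycle_period_seconds():.3e} s")     # a little over a tenth of a nanosecond
```

Using exact fractions avoids rounding in the toy example and underlines the point of the definition: the second is whatever interval contains that many cycles.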

Let’s break down this phrase a bit. An “energy transition” refers to little up-and-down jumps in energy made by the outermost electron orbiting the cesium atom’s core, or nucleus, when the atom absorbs or emits radiation at specific energies determined by quantum mechanics. “Hyperfine” refers to a splitting of the atom’s energy levels when the electron interacts magnetically with the atom’s nucleus. Microwaves with a frequency of 9,192,631,770 cycles per second carry exactly the amount of energy the electron needs to go between the two hyperfine energy levels of the cesium atom in its lowest-energy configuration. When these microwaves hit a cesium atom, it makes the hyperfine energy transition: The outermost electron flips its “spin,” which can be imagined as a tiny bar magnet inherent to the electron flipping upside down, and the atom attains a higher energy level. When the atom then reflips its spin and drops to the lower energy level, it releases microwaves with the same frequency of 9,192,631,770 cycles per second. The frequency of the absorbed and emitted microwave radiation is used as a timekeeping standard.
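To get a feel for how small this energy jump is, the sketch below applies the Planck relation E = h·f to the cesium frequency. The relation and the two physical constants are standard physics not stated in the text, and the function name is hypothetical.

```python
PLANCK_CONSTANT_J_S = 6.62607015e-34      # exact in the SI since 2019
JOULES_PER_ELECTRONVOLT = 1.602176634e-19  # exact in the SI since 2019
CESIUM_FREQUENCY_HZ = 9_192_631_770

def photon_energy_joules(frequency_hz: float) -> float:
    """Energy carried by one photon of the given frequency (E = h * f)."""
    return PLANCK_CONSTANT_J_S * frequency_hz

energy_j = photon_energy_joules(CESIUM_FREQUENCY_HZ)
print(f"{energy_j:.3e} J")
print(f"{energy_j / JOULES_PER_ELECTRONVOLT:.3e} eV")
```

The result is a few hundred-thousandths of an electronvolt, far smaller than the energies of visible-light transitions, which is why the transition falls in the microwave range.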

(The value of 9,192,631,770 cycles per second to which the second was fixed emerged when astronomers at the U.S. Naval Observatory calculated the duration of a 1950s-era ephemeris second based on measurements of the Moon’s motion, and Essen and a colleague at the National Physical Laboratory then counted the cycles of radiation absorbed and emitted by cesium atoms during one of those seconds. The measurement, published in 1958, had an error of a few parts per billion, which suddenly enabled timekeeping with significantly lower uncertainty than with a second based on astronomical observations.)

The new cesium clocks, while better than any that had come before, were still far from perfect. Scientists continued to root out and minimize sources of error such as temperature variations and unwanted energy transitions. By 1975, NIST’s NBS-6 atomic clock was accurate and stable enough to neither gain nor lose a second in 400,000 years.

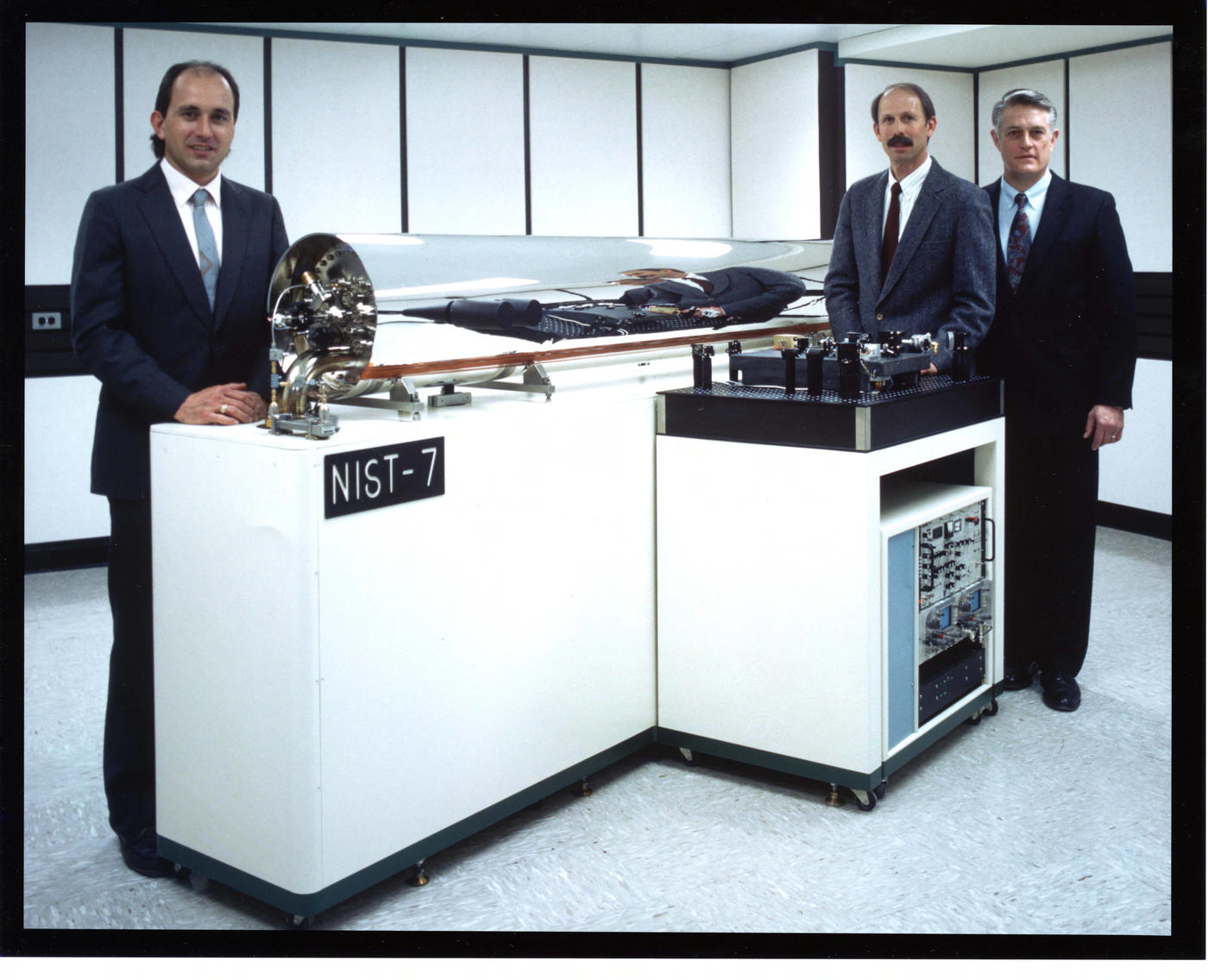

Launched nearly two decades later, in 1993, NIST’s NIST-7 atomic clock was significantly more accurate and wouldn’t gain or lose a second in 6 million years.

You don’t need this kind of precision for your wristwatch. But being able to measure such tiny fractions of a second opened up technological applications that would have been impossible just decades earlier. Atomic clocks have led to advances in timekeeping, communication, metrology, geodesy and advanced positioning and navigation systems.

Most famously, atomic clocks make possible the GPS satellite network that many of us rely on every day. Each of the 31 satellites in the GPS network has multiple atomic clocks that are synchronized daily with atomic clocks on the ground and with each other. GPS satellites continually broadcast information about their positions along with the time at which each signal was sent. When a GPS unit in a car or a phone receives signals from four of these satellites, it can use the time and position signals to determine its location, and thus yours, on the globe with a high degree of accuracy.
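The reason timing matters so much is that a receiver turns signal travel time into distance by multiplying by the speed of light. The sketch below shows only that single step, with an assumed travel time of 0.067 seconds chosen for illustration. A real receiver must also solve for its own clock offset, which is one reason it needs four satellites, and the function names here are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the meter

def range_from_travel_time(t_transmit_s: float, t_receive_s: float) -> float:
    """Distance (m) a signal covered between transmission and reception."""
    return SPEED_OF_LIGHT_M_PER_S * (t_receive_s - t_transmit_s)

def range_error_from_clock_error(clock_error_s: float) -> float:
    """Distance error (m) caused by a timing error of clock_error_s seconds."""
    return SPEED_OF_LIGHT_M_PER_S * abs(clock_error_s)

# Assumed example: a signal that took 0.067 s to arrive.
print(f"{range_from_travel_time(0.0, 0.067) / 1000:,.0f} km")

# A timing error of one millionth of a second.
print(f"{range_error_from_clock_error(1e-6):.0f} m")
```

A timing error of just one microsecond corresponds to about 300 meters of distance, so useful positioning demands clocks that agree to within billionths of a second.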