Context of a Discovery: Jan Hall

The early 1960s were a time of great possibility in physics. Earlier in the century, physicists had developed the theory of quantum mechanics, which described the behavior of light and matter in the realm of the very small, such as the scale of atoms. But in the early 1960s, scientists succeeded in making an important idea from quantum physics theory—stimulated emission of radiation—a reality in the laboratory.

Natural light sources such as the sun and fire flames, as well as technologies such as the incandescent light bulb invented in the late 1800s, radiate light at a wide range of colors. To understand stimulated emission, it helps to consider both light’s wave-like and particle-like properties.

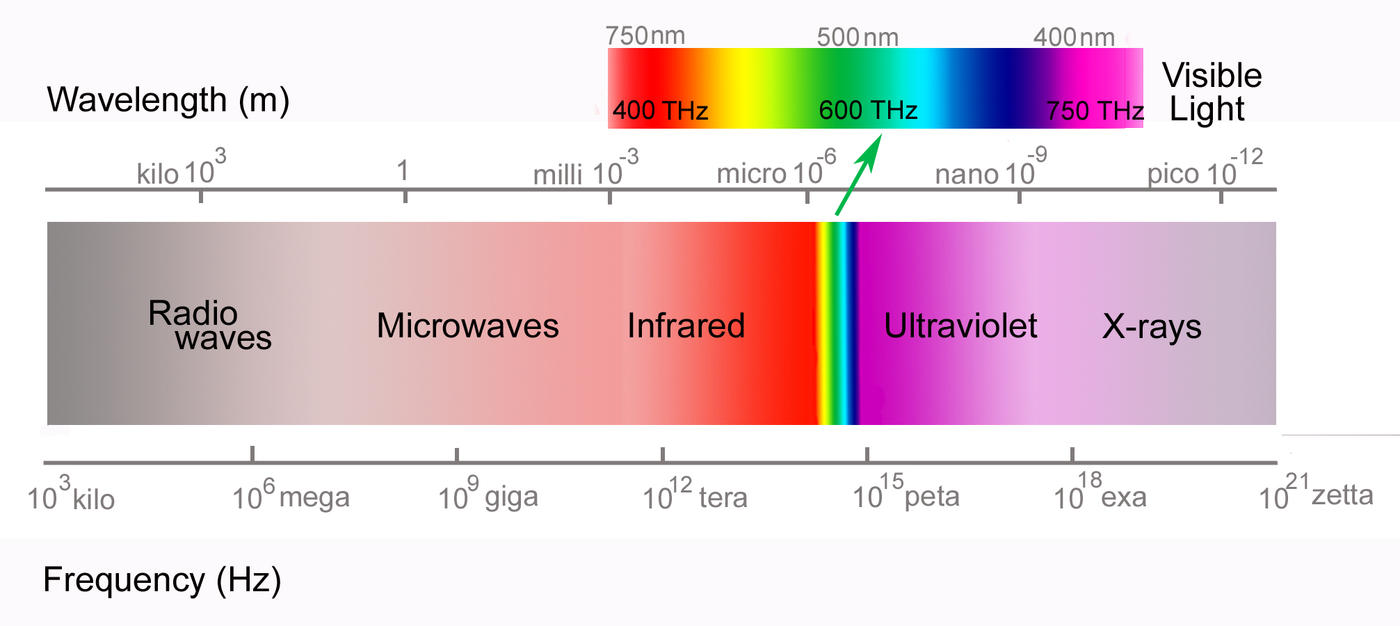

A light wave consists of electric and magnetic fields that oscillate at right angles to each other and to the direction the wave travels; in other words, it is a form of electromagnetic radiation. These fields can be imagined as waves consisting of peaks and valleys. The distance between two neighboring peaks is known as the wavelength. A related quantity is the frequency, the number of wave peaks that pass a fixed point every second. The frequency of a visible light wave—electromagnetic radiation with frequencies between around 430 and 770 trillion cycles per second—determines the color we perceive. Electromagnetic waves that we cannot see—including ultraviolet, infrared, microwaves and so on—also have specific frequencies.
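Wavelength and frequency are tied together by the speed of light: wavelength equals the speed of light divided by frequency. As a quick check on the visible range quoted in the paragraph, the short sketch below converts those frequency limits into wavelengths. The helper name `wavelength_nm` is illustrative, not from any library.

```python
# Speed of light in vacuum, in meters per second (exact by definition since 1983).
C = 299_792_458

def wavelength_nm(frequency_hz: float) -> float:
    """Convert a frequency in hertz to a vacuum wavelength in nanometers."""
    return C / frequency_hz * 1e9

# Edges of the visible band as given in the text, in trillions of cycles per second.
for thz in (430, 770):
    print(f"{thz} THz -> {wavelength_nm(thz * 1e12):.0f} nm")
```

The lower frequency corresponds to the longer (red) end of the spectrum and the higher frequency to the shorter (violet) end, which is why the two quantities run in opposite directions.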

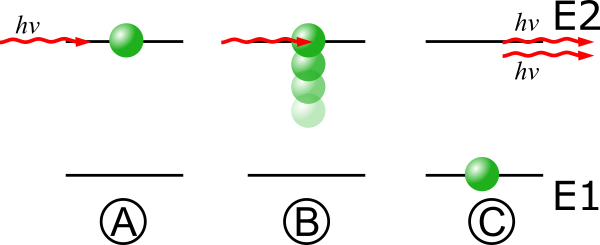

Since the early 1800s, scientists had thought of light as a wave. But in 1905, Albert Einstein explained the photoelectric effect, a phenomenon that mystified physicists of his time, by positing that light is not just a wave but also a particle, later named the “photon.” The work became one of quantum mechanics’ first major successes and eventually earned Einstein the 1921 Nobel Prize in Physics. In 1917, Einstein expanded on the theory of how light and matter interact by showing that a photon at the right frequency could cause an atom to radiate a second photon with the same phase as the first, a process now known as “stimulated emission.”

This idea built on another discovery of quantum physics: that electrons inside atoms can occupy only certain energy levels. Atomic electrons make jumps or “transitions” between energy levels by absorbing or emitting photons, or discrete packets of light. This light has a specific frequency that depends on the energy difference of the transition. Einstein’s stimulated emission would occur when a photon hits an atom that has already absorbed a photon of the same frequency.
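The connection between a transition’s energy difference and the frequency of its light is Planck’s relation: frequency equals energy divided by the Planck constant. The minimal sketch below assumes a hypothetical 2.0-electronvolt gap between two levels; that value is chosen only for illustration and does not come from the text.

```python
# Planck constant (joule-seconds) and the electronvolt-to-joule factor, both exact SI values.
PLANCK_H = 6.62607015e-34
EV_TO_J = 1.602176634e-19

def transition_frequency_hz(delta_e_ev: float) -> float:
    """Frequency of the photon emitted or absorbed in a transition of delta_e_ev electronvolts."""
    return delta_e_ev * EV_TO_J / PLANCK_H

# A hypothetical 2.0 eV gap gives visible light of several hundred terahertz.
print(f"{transition_frequency_hz(2.0) / 1e12:.0f} THz")
```

A larger energy gap gives a proportionally higher frequency, so each transition in an atom corresponds to one specific color of light.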

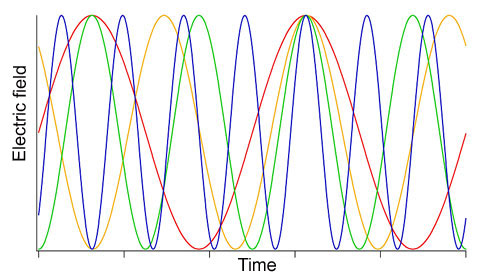

The released photon would have an important additional property, Einstein realized: It would be “in phase” with the photon causing the emission. The crests and valleys of electromagnetic waves emitted from most light sources—the sun, incandescent lamps, etc.—are not synchronized with each other, but rather emerge in a random jumble. In other words, the light from these sources is “out of phase,” or “incoherent.” Stimulated emission would be different. In theory, if enough similar atoms could be stimulated to simultaneously emit in-phase, or coherent, single-frequency light, this could produce a visible light source very different from any that anyone during Einstein’s time had ever seen.

For several decades, scientists doubted whether a device based on Einstein’s principle could actually be built, or would even be useful. But in 1953, Columbia University researcher Charles Townes and his graduate students harnessed Einstein’s principle of stimulated emission to generate coherent microwaves with what they called a maser (Microwave Amplification by Stimulated Emission of Radiation). Microwaves, like visible light, are a form of electromagnetic radiation, but with much lower frequencies.

In 1958, Townes and Arthur Schawlow, then a researcher at Bell Laboratories in Murray Hill, New Jersey, published a paper on what they called an “optical maser”—a maser that would produce visible light rather than microwaves. In May 1960, Theodore Maiman demonstrated the first such device at Hughes Research Laboratories in Malibu, California. “Laser,” a term introduced by Gordon Gould, a graduate student at Columbia University, quickly became standard, replacing “optical maser.” (The “l” stood for “light.”)

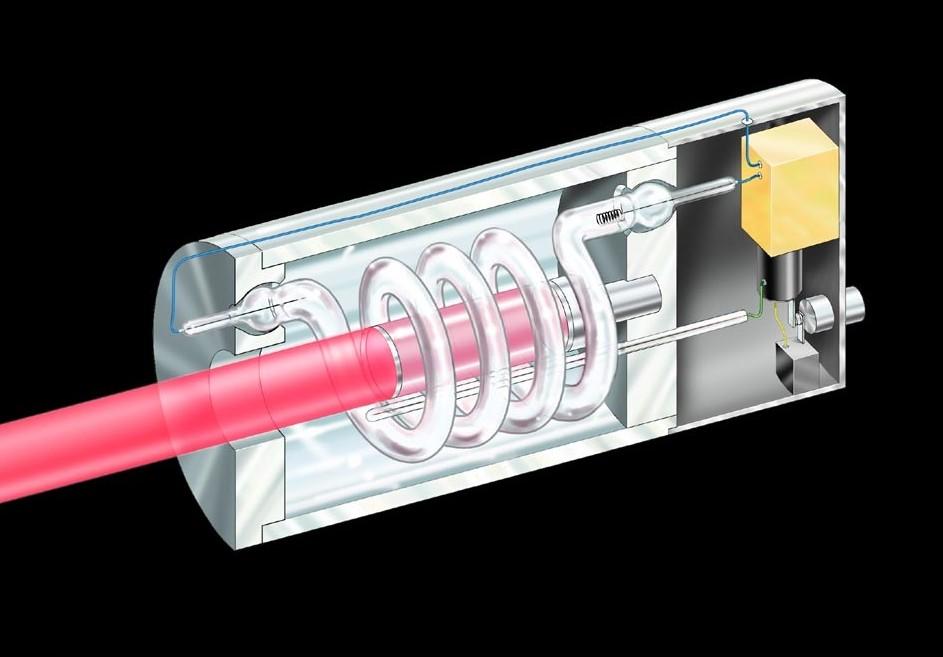

To make his laser, Maiman flashed light from a xenon lamp onto a ruby crystal, so that electrons in the crystal’s atoms left their lowest, or “ground,” energy state and occupied a higher “excited” state. When the electrons fell back to the lowest-energy state, they released red light. Maiman then placed the ruby inside a cavity—a hollow chamber—with a fully reflecting mirror at one end and a partially transparent mirror at the other. The red light bounced between the mirrors, causing more of the ruby’s electrons to fall to the lowest-energy state and release more red light (that is, stimulating the emission of red light). Meanwhile, some light escaped through the partially transparent mirror in pulses lasting a fraction of a second.

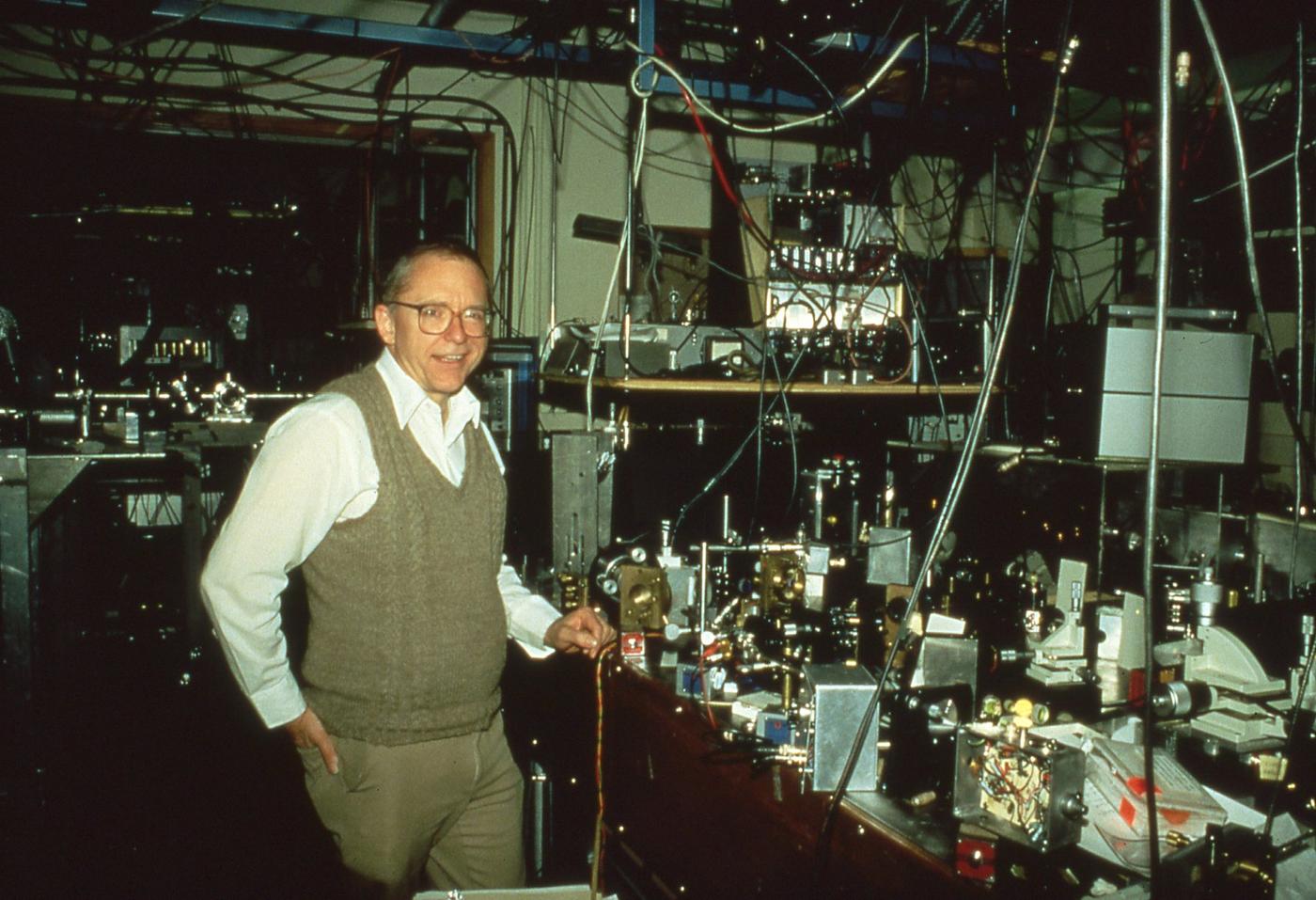

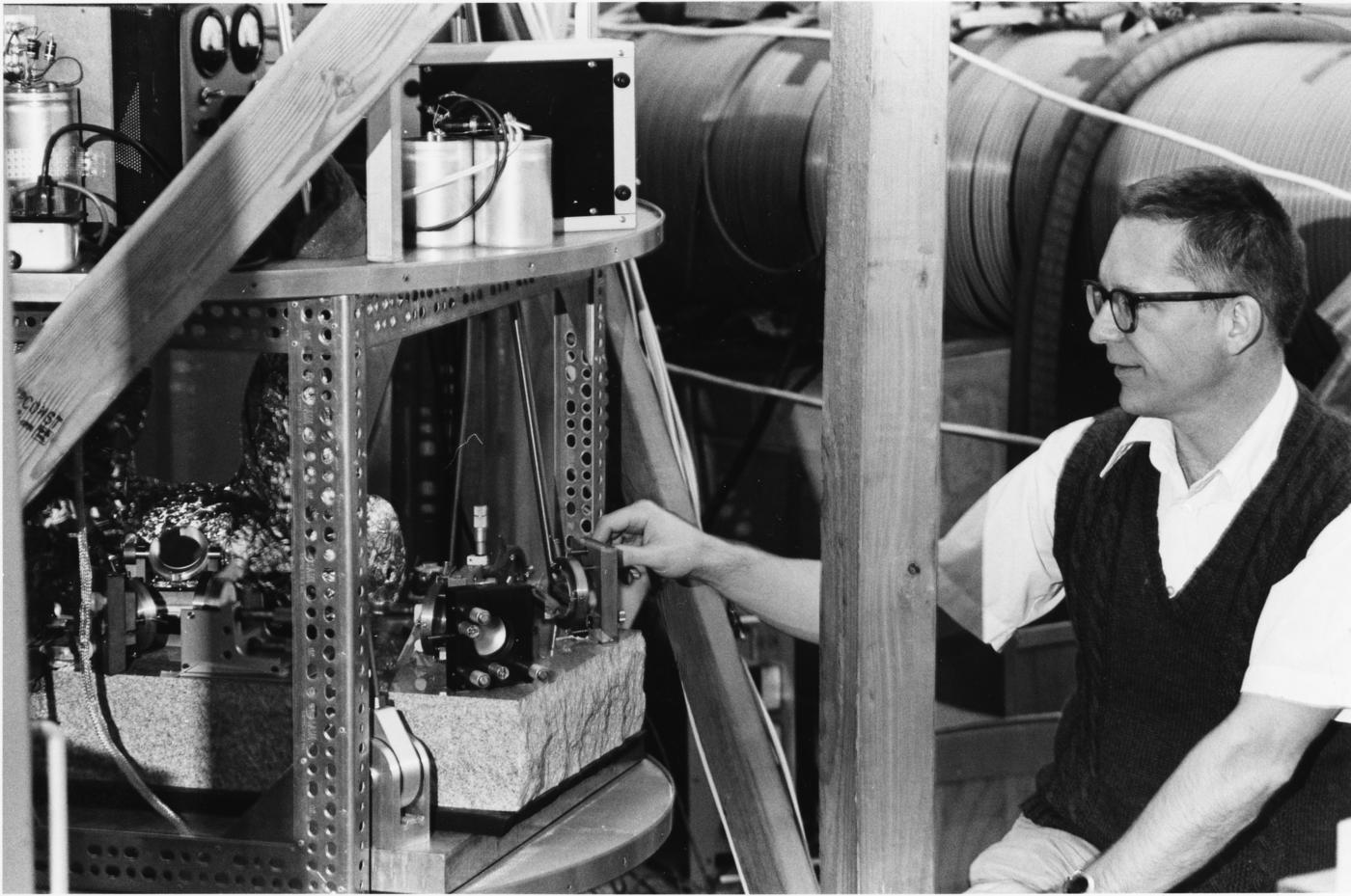

In the early 1960s, Hall began investigating the properties of the ruby pulsed laser at NIST. He enjoyed the work, but was not hooked on pulsed lasers.

“It was pretty fun and exciting, but I started realizing that, if this is going to become anything, it’s going to be a big engineering thing,” Hall says. “If you can have it generate 50 pulses a second rather than one pulse a minute, then you can drill holes in metal or do welding. That just didn’t ring my chimes.”

What did ring his chimes was a new kind of laser he learned about at a 1961 talk by Bell Laboratories postdoctoral researcher Ali Javan. Javan had, the previous December, invented the first continuous-wave laser, using helium and neon gases as the lasing medium. This type of laser emitted a single, pure frequency for as long as energy was supplied. Javan explained that he had set up two such lasers in the lab, Hall recalled in his Nobel lecture. Each laser sent out a stream of unremitting light waves with a frequency of approximately 300 trillion cycles per second (300 terahertz).

Although the two lasers ran on entirely separate electronics and hardware, their frequencies differed by just 1,000 cycles per second. Two nearby frequencies can combine or “interfere” to produce a lower-frequency pattern called a “beat,” which oscillates at the difference between the two frequencies. Here that difference, 1,000 cycles per second, was low enough to produce an audible tone, which Javan played for the audience.
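Optical frequencies of hundreds of terahertz cannot be sampled directly in a short program, but the beat effect works the same way at any scale. The sketch below assumes two hypothetical waves at 5,000 and 6,000 cycles per second, keeping the 1,000-cycle offset from Javan’s demonstration. A photodetector responds to intensity, the square of the combined field, and that squared signal contains a tone at the difference frequency. The function scans a plain discrete Fourier transform for the strongest low-frequency tone; `beat_frequency` and its default sampling parameters are illustrative choices.

```python
import math

def beat_frequency(f1: float, f2: float, sample_rate: int = 40_000, n_samples: int = 4_000) -> float:
    """Return the strongest low-frequency tone in the detected intensity of two summed waves."""
    times = [i / sample_rate for i in range(n_samples)]
    # A photodetector measures intensity: the square of the combined field.
    intensity = [
        (math.cos(2 * math.pi * f1 * t) + math.cos(2 * math.pi * f2 * t)) ** 2
        for t in times
    ]
    resolution = sample_rate / n_samples  # spacing between DFT bins, in Hz
    best_freq, best_power = 0.0, -1.0
    # Scan DFT bins from just above DC to below half the lower input frequency,
    # which excludes the sum and doubled frequencies also present in the intensity.
    for k in range(1, int(min(f1, f2) / 2 / resolution)):
        re = sum(x * math.cos(2 * math.pi * k * i / n_samples) for i, x in enumerate(intensity))
        im = sum(x * math.sin(2 * math.pi * k * i / n_samples) for i, x in enumerate(intensity))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = k * resolution, power
    return best_freq

print(beat_frequency(5_000, 6_000))
```

Squaring the field produces terms at the sum and the difference of the two frequencies; only the difference is low enough to hear, which is why two lasers near 300 terahertz could yield an audible 1,000-cycle tone.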

While the lasers were remarkably well controlled, to the point of being nearly synchronized, Townes and Schawlow’s theory suggested that they could be made some 300,000 times more accurate—or perhaps even more accurate than that. That sort of challenge appealed to Hall.

“A laser gives out radiation similar to microwaves or radio frequencies,” Hall explains. “And we [now] know you can do completely amazing things with that, like sit in your car and talk to someone on a cell phone. That kind of precision and guidance and control was not possible yet, but was implicit when lasers first came out.”

The challenge, Hall says, was “learning how to stabilize a laser so the frequency doesn’t change, or if it changes, it’s because I want it to.” After laser light is created, the lenses, coils, mirrors and electronics around it conspire to disrupt its purity, causing the light’s frequency to shift unpredictably. This causes problems for scientists making high-precision measurements that are critical for devices such as atomic clocks.

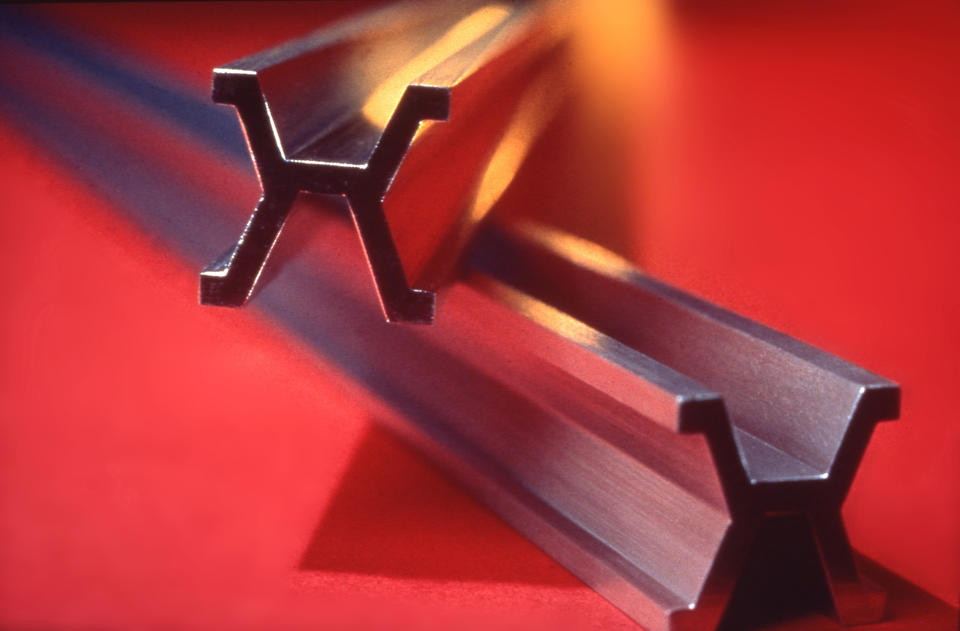

To make lasers more useful, Hall invented equipment to stabilize them by using heat or magnets to change, almost imperceptibly, the length of the glass cavities in which the laser light bounced back and forth. He designed and built amplifiers capable of instructing piezoelectric transducers—tiny devices that convert electrical signals to mechanical motions—to nudge laser-cavity mirrors a hundredth of a millimeter. He also developed electronics to continually monitor a mirror’s position to ensure constant laser output. His technologies to control continuous-wave lasers spread throughout the physics community.

Hall and his colleagues also used their stable lasers to make astoundingly precise measurements. To minimize disturbances from vibrations, NIST Fellow Judah Levine, a postdoc at the time, set up Hall’s instruments in an abandoned shaft of the Poorman’s Relief Gold Mine in the foothills west of Boulder, at the edge of forest land. They conducted a remarkable series of experiments in the 1960s and early 1970s. Levine and colleague J.C. Harrison were able to pick up the moon’s tug on Colorado—the first tidal measurement ever done in the mountainous, landlocked state.

“I was the one who went up to the mine most of the time,” Levine says, “but it was his laser that made it possible.”

Levine and his student Robin Tucker Stebbins even performed early searches for gravitational waves from the Crab pulsar, though they knew full well that their instruments were likely not yet sensitive enough.

“We didn’t detect anything, but we shouldn’t have detected anything,” Levine says.

The team also sensed the jostling of underground nuclear tests two states away in Nevada, helping the U.S. government determine whether it could effectively monitor bans on nuclear tests. “The test ban treaty was a political issue and a technical issue,” Levine says.

All this was possible because of the super-stable laser light that could be used to detect tiny changes in the environment. Hall had already earned a reputation of being, as NIST Fellow Bill Phillips would later put it, “a consummate experimentalist.”

Through his precision spectroscopy work, Hall also made an important contribution to metrology—the branch of science concerned with precision measurement—by helping establish a new worldwide definition of the meter. Beginning in 1889, the meter was defined by a platinum-iridium bar kept at the International Bureau of Weights and Measures near Paris, with copies distributed to labs around the world.

That worked well enough for some purposes, but metrologists prefer definitions that are tied to natural phenomena, not artificial objects. Scientists looked into using the light from mercury and other atoms as a possible solution. In 1960, the meter was redefined in terms of the wavelength of orange light emitted by an electron transition in krypton-86 atoms: one meter became 1,650,763.73 of those wavelengths. That light has a frequency of around 495 terahertz (trillion cycles per second). This definition meant any lab could, in principle, reproduce the meter.
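The 1960 definition set one meter equal to 1,650,763.73 wavelengths of the krypton-86 orange line. The sketch below works backward from that count to the line’s wavelength and frequency. For convenience it uses today’s exact value of the speed of light, which was only fixed in 1983.

```python
C = 299_792_458  # speed of light in m/s (exact by definition since 1983)

# The 1960 definition: one meter = 1,650,763.73 wavelengths of the krypton-86 orange line.
WAVELENGTHS_PER_METER = 1_650_763.73

krypton_wavelength_m = 1 / WAVELENGTHS_PER_METER
krypton_frequency_hz = C / krypton_wavelength_m  # frequency = speed of light / wavelength

print(f"wavelength ~ {krypton_wavelength_m * 1e9:.2f} nm")
print(f"frequency  ~ {krypton_frequency_hz / 1e12:.1f} THz")
```

Counting wavelengths this way required comparing lengths optically, which is why the definition rested on wavelength rather than on a directly counted frequency.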

Scientists realized they could still do better, however, by directly measuring the frequency, rather than relying on the relatively imprecise indirect technique.

Work by Hall and others had brought lasers to the point that they were stable enough to match the frequencies of light emitted when an atom’s electrons made transitions between excited and lowest-energy states. If scientists could synchronize a laser’s frequency to that of a known atomic transition, they could be assured of the laser frequency’s accuracy and then use that frequency as a finely marked ruler for time.

The problem was that electronic counters scientists used to read that ruler couldn’t keep up with lasers probing atomic transitions that produced or absorbed light at optical frequencies. Using electronic counters would be like trying to measure the speed of a race car with a lazy metronome. Carl Williams, deputy director of NIST’s Physical Measurement Laboratory, captures it another way: “It would be like here’s exactly one meter, but I don’t have subdivided markings on it.”

NIST scientists worked for years to build an elaborate system with daisy-chained single-frequency lasers, each half the frequency of the next, to step down the infrared light of one of Hall’s methane-stabilized helium-neon lasers, at around 88 trillion cycles per second, to a microwave signal of roughly 9 billion cycles per second that electronic counters could handle.
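Taking the “each half the frequency of the next” description at face value, a quick calculation shows how many divide-by-two stages separate the methane line from the microwave range. This is a back-of-the-envelope sketch, not a model of the actual NIST chain; the helper name `halving_stages` is hypothetical.

```python
import math

METHANE_LINE_HZ = 88e12    # "around 88 trillion cycles per second"
MICROWAVE_TARGET_HZ = 9e9  # "roughly 9 billion cycles per second"

def halving_stages(start_hz: float, target_hz: float) -> int:
    """Smallest number of divide-by-two stages that takes start_hz down to target_hz or lower."""
    return math.ceil(math.log2(start_hz / target_hz))

stages = halving_stages(METHANE_LINE_HZ, MICROWAVE_TARGET_HZ)
print(f"{stages} stages, ending near {METHANE_LINE_HZ / 2**stages / 1e9:.1f} GHz")
```

Even in this idealized picture, more than a dozen precisely controlled stages are needed, each of which must hold its frequency relationship to the next.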

In 1971, a team led by JILA’s Ken Evenson measured the laser light’s frequency, while a team led by Hall measured its wavelength. Because the speed of light equals frequency multiplied by wavelength, multiplying these two measurements together yielded a new value for the speed of light—299,792,457.4 meters per second—that was 100 times more precise than the previously accepted value.
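The arithmetic behind that result is a single multiplication: cycles per second times meters per cycle gives meters per second. The sketch below uses rounded modern values for the methane-stabilized helium-neon line (about 88.376 trillion cycles per second and about 3.392 micrometers). These are illustrative inputs, not the original measurement data.

```python
# Rounded modern values for the methane-stabilized helium-neon line
# (for illustration only; these are not the 1970s measurement data).
FREQUENCY_HZ = 88.3762e12  # about 88.376 trillion cycles per second
WAVELENGTH_M = 3.39223e-6  # about 3.392 micrometers (infrared)

def speed_of_light(frequency_hz: float, wavelength_m: float) -> float:
    """Speed = frequency x wavelength: cycles per second times meters per cycle."""
    return frequency_hz * wavelength_m

print(f"{speed_of_light(FREQUENCY_HZ, WAVELENGTH_M):,.0f} m/s")
```

With these rounded inputs the product agrees with the defined value of the speed of light to better than one part in a million; the hard part of the real experiment was pinning down both factors to many more digits.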

Though impressive, the method was far too complicated to be practical, and the measurement was never repeated. At the time, the second was already defined by a microwave-frequency transition in the cesium atom, and it could be realized with 10,000 times greater precision than the light-speed measurement had achieved. To take advantage of that precision, in 1983 the General Conference on Weights and Measures used measurements from Hall’s and other labs to redefine the meter as the distance light travels in a vacuum in 1/299,792,458 of a second, making the meter a unit derived from the cesium-defined second.
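Because the meter now rests on the second, it can be expressed in cesium “ticks.” The sketch below uses exact rational arithmetic to count how many cycles of the cesium transition (9,192,631,770 per second, the value that defines the SI second) elapse while light crosses one meter. It is an illustration of the dependency, not a description of how labs realize the meter in practice.

```python
from fractions import Fraction

CESIUM_HZ = 9_192_631_770  # cycles per second that define the SI second
C = 299_792_458            # meters per second, exact since 1983

# Time for light to cross one meter, as an exact fraction of a second.
light_time_per_meter = Fraction(1, C)

# Number of cesium oscillations that elapse while light travels one meter.
cesium_cycles_per_meter = CESIUM_HZ * light_time_per_meter
print(float(cesium_cycles_per_meter))
```

Only about 30 cesium cycles pass while light travels a meter, which hints at why a much faster, optical-frequency “ruler” would allow finer subdivisions of time.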

Cesium is a good atom for metrology because it has a relatively simple electron energy level structure that includes an energy transition that lies in the upper end of the microwave frequency range—high enough to give a relatively large number of cycles per second, yet low enough for electronics to count directly. But Hall knew that an elegant means of measuring the higher-frequency light emitted by optical transitions would open up new frontiers of science and enable an even more precise definition of the second. This would be a natural extension of his quest for ever-more-accurate frequency measurements. He just wasn’t quite sure yet how to get there.