Blogrige

The Official Baldrige Blog

Baldrige Award-Winning University Breaks Ground (Again) with Benchmarking Project

An assistant chancellor at the University of Wisconsin-Stout—a Baldrige Award recipient—helped develop a national benchmarking project that will soon provide comparative data for the nation’s universities to better measure their performance.

University of Wisconsin-Stout

Baldrige Award Recipient, 2001

Baldrige Examiner, 2018

JCCC Executive Director of Institutional Effectiveness, Planning, and Research John Clayton, who oversees the NHEBI, recently conveyed that he is excited by the prospect of the partnership with UW-Stout. “The Benchmarking Institute has provided this kind of data to the two-year college sector for 15 years,” he said. “Since 2004, over 400 two-year colleges have relied on us to provide comparative data on many key indicators to improve efficiency, institutional effectiveness, and student outcomes.”

Wentz, who is also a senior Baldrige examiner, has overseen several other ground-breaking improvement efforts at her university in recent years. One example is UW-Stout’s annual “You Said, We Did” institutional improvements based on faculty and staff input.

She described the new benchmarking project as “another example of how the Baldrige program has helped [our university] grow and improve.”

Project Scope and Measures

Asked how many other universities will be involved in the benchmarking project, Wentz responded, “For the first year [the university’s fiscal year 2019, which begins this fall], we’re targeting 25 to 50 other four-year universities. The long-term goal is to involve over 300 institutions per year.” She added that the NHEBI previously launched a similar initiative involving two-year colleges that has “consistently had 250 colleges per year” reporting data.

Wentz provided two examples of standard measures for the project, as follows:

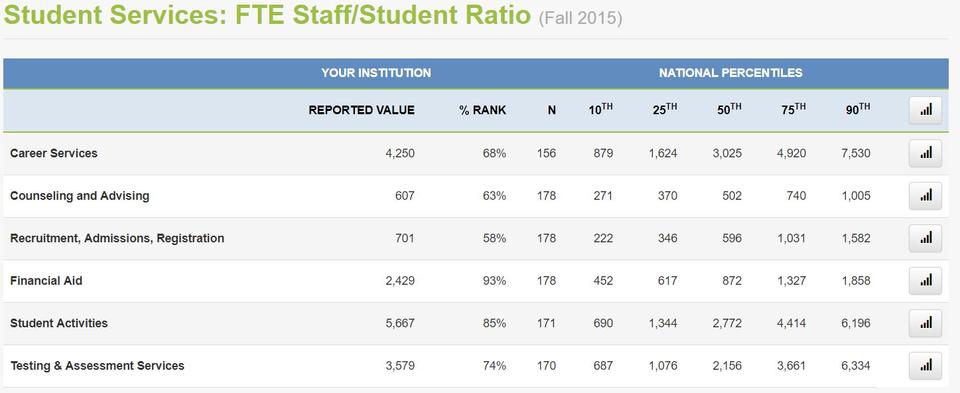

- Full-Time Equivalents (FTEs) allocated to the work of a support unit within a university. Data for this measure will be split between centralized and decentralized staff members, she explained, given that (beyond staff members working full-time in such a unit) universities often have some personnel based in other administrative units on campus who help support the work of centralized units.

- Budget allocated to the work of a support unit. Data for this measure will be split between personnel and non-personnel costs, and between centralized and decentralized.

The project also will collect data for outcome metrics, she said, citing as an example the retention rate of faculty and staff members as a measure associated with a university’s Human Resources office.
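For readers who work with institutional data, the measures described above could be recorded along the lines of the following minimal sketch. It is illustrative only and is not the project's actual data format: the class name, field names, and the retention formula (employees still employed at the end of a period divided by headcount at the start) are all assumptions, and for brevity the budget is split only by personnel and non-personnel.

```python
from dataclasses import dataclass


@dataclass
class SupportUnitMeasures:
    """Hypothetical record for one support unit at one university.

    All field names are assumptions made for illustration; they are not
    taken from the benchmarking project itself.
    """
    unit_name: str
    centralized_fte: float       # FTEs working inside the central unit
    decentralized_fte: float     # FTEs based in other units who support it
    personnel_budget: float      # salaries and benefits
    non_personnel_budget: float  # everything else

    def total_fte(self) -> float:
        return self.centralized_fte + self.decentralized_fte

    def total_budget(self) -> float:
        return self.personnel_budget + self.non_personnel_budget

    def decentralized_fte_share(self) -> float:
        """Fraction of the unit's FTEs that sit outside the central unit."""
        total = self.total_fte()
        return self.decentralized_fte / total if total else 0.0


def retention_rate(headcount_at_start: int, still_employed_at_end: int) -> float:
    """Assumed definition: share of starting employees still employed."""
    if headcount_at_start <= 0:
        raise ValueError("headcount_at_start must be positive")
    return still_employed_at_end / headcount_at_start


# Invented numbers, for illustration only.
hr = SupportUnitMeasures("Human Resources", 8.0, 2.0, 600_000.0, 150_000.0)
print(hr.total_fte(), hr.total_budget(), hr.decentralized_fte_share())
print(retention_rate(200, 180))
```

Keeping the centralized and decentralized figures as separate fields, rather than storing only a total, lets each institution's staffing split be compared as well as its overall size.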

Project Phases

As stated in a recent UW-Stout news story, Wentz said a stimulus for the project was the “need to make our [internal] review process more meaningful using benchmarking data.” Faced with a lack of comparative results to provide context for her office’s performance measurements of UW-Stout support units, Wentz decided to help develop such data. “We wanted to provide more meaningful data for continuous improvement,” she said.

Wentz and her PARQ colleagues Frank Oakgrove and Elena Carroll are currently developing metrics for the NHEBI project, to be refined based on input from a national advisory board. Data collection for the project will be launched in early November, and the plan is to have results available for use by other universities by mid-February, according to Wentz.

Contact Information

If you are interested in participating in this benchmarking project (e.g., as a university) or getting more information about it, contact the University Benchmark Project at info [at] universitybenchmark.org.

This is an important effort. The lack of good comparison data has been a stumbling block for higher education institutions' participation in Baldrige improvement programs. While most nonacademic support units have professional associations that have organized efforts to share data, the lack of standardization of collection methods and terminology has made the use of such data difficult.

It should be noted that the term "benchmark" can lead to confusion. As used in this article, the meaning is "comparison data" as opposed to the Baldrige "best practices, best performances" definition. Ideally, enough institutions will eventually participate to assure that the data provided are indeed benchmarks by both definitions.