The Space Age: Highlights

1960: Automating the Census

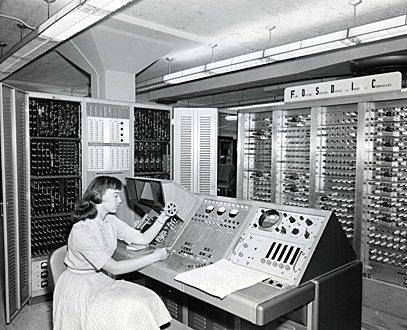

Without realizing it, most American adults have likely had some of their household information scrutinized by FOSDIC.

The Film Optical Sensing Device for Input to Computers, which scanned microfilm of hand-marked forms and converted the markings into electronic form, was developed by NIST for the U.S. Bureau of the Census in the early 1950s. Updated versions of the device processed data collected in the censuses held every 10 years from 1960 until 1990, introducing automation to this massive survey.

Before FOSDIC, census data were key-punched into cards. FOSDIC enabled the switch to multiple-choice documents and, by 1970, self-enumeration by the public, a cost-saving measure. Scanning with a cathode ray tube under the direction of a computer program, the first FOSDIC translated up to 10 million "answer positions" per hour into computer input. The last model used in the 1990 census operated at 20 times this rate.

Twelve different versions of FOSDIC were developed and put into service from 1954 through 1998. Various models were used by or for federal agencies to process data on fallout shelters, atmospheric pollutants, medical records, postal mail volume, and other surveys. The machines also were used to scan microfilms of archival weather data and special films made in underwater instruments. Among census-related applications, the machines collected data on which the nation's unemployment figures were based for more than 30 years.

1961: Synchrotron Sheds New Light on Research

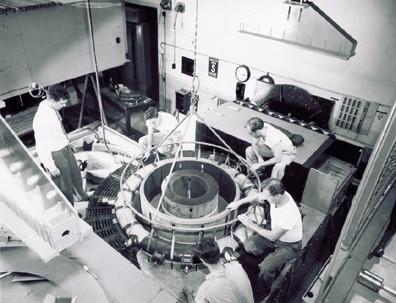

Today's scientists compete for time on synchrotrons—large, donut-shaped facilities that produce a unique type of radiation. The technology is used, for example, to analyze protein structures as a basis for designing new drugs. But back in 1961, this research tool was little more than a curiosity outside of NIST, where the first regular experiments using synchrotron light were carried out.

Synchrotron radiation is emitted by electrons that are accelerated in a magnetic field. In the early 1950s, NIST found itself with an electron accelerator that another federal lab did not want. At the time, physicists were most interested in the particles (electrons) circulating in the device. It was also known that the electrons emitted light, which interested NIST because of the potential for an absolute radiometric standard—in effect, a "national standard light bulb." Among its advantages, the radiation is continuous across the spectrum and can be "tuned" to the desired wavelength.

Robert Madden, who became director of the Institute's Synchrotron Ultraviolet Radiation Facility (SURF), was hired in 1961 along with another spectroscopist, Keith Codling, to develop the NIST facility into a light source. Their groundbreaking studies of the energy-absorption spectrum of helium, and later other noble gases (neon, argon, krypton, and xenon), revealed new interaction mechanisms among the gases, radiation, and free electrons. The results startled scientists around the world and influenced the direction of atomic physics for some time. The research also affected fields such as astrophysics, where the new processes had to be taken into account in stellar models. Perhaps most importantly, Madden and Codling proved how useful synchrotron radiation could be.

An upgraded SURF remains in operation today, supporting work by the National Aeronautics and Space Administration and the microelectronics industry.

1964: Mathematics Handbook Becomes Best Seller

From making a military map to explaining the knock in gasoline engines or the light scattering that produces a rainbow, many technical and scientific challenges are best solved with the aid of mathematical functions. Such functions are so important that a national project devoted to compiling tables of them was established in the late 1930s. Thus began a NIST tradition of publishing mathematics reference data.

The invention of computers threatened to make the tables obsolete. But at a national conference in 1954, it was agreed that computers merely changed how the tables should be designed. For instance, for use in programming computers, the figures would need to be more accurate than before. So Milton Abramowitz and Irene A. Stegun of NIST produced an updated compendium, an effort that took eight years.

More than 1,000 pages long, the Handbook of Mathematical Functions was first published in 1964 and reprinted many times, with yet another reprint in 1999. Its influence on science and engineering is evidenced by its popularity. In fact, when New Scientist magazine recently asked some of the world's leading scientists what single book they would want if stranded on a desert island, one distinguished British physicist said he would take the Handbook.

The Handbook is likely the most widely distributed and most cited NIST technical publication of all time. Government sales exceed 150,000 copies, and an estimated three times as many have been reprinted and sold by commercial publishers since 1965. During the mid-1990s, the book was cited every 1.5 hours of each working day. And its influence will persist as it is currently being updated in digital format by NIST.

1966: An Early Spreadsheet

More than a decade before spreadsheet software helped to launch the boom in personal computers (PCs), NIST published Omnitab, a computer program for statistical and numerical analysis that had many attributes of a spreadsheet.

Conceived by the Institute's Joseph Hilsenrath and based in part on colleague Joseph Wegstein's earlier work on a tabular computing scheme, Omnitab was written to automate routine programming tasks—such as handling data input and output and producing graphs—for NIST physicists, chemists, and engineers, who were thus freed to concentrate on higher level science. Omnitab proved so helpful that its use extended far beyond NIST. For about 10 years after its 1966 publication, it was popular with statisticians in agricultural research, private industry, and universities. Foreign-language editions appeared as well; the program could accept simple commands in French, German, and even Japanese.

Omnitab was like a spreadsheet because it had an extensive and accurate math facility, a macro language, and a graphical output. Most importantly, it created a tableau in which the entries were calculated from input values. However, operations were defined for entire columns. VisiCalc, the "killer application" business spreadsheet unveiled in 1979 for PCs, allowed functions to be entered in individual cells and was more dynamic and interactive.
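The distinction between column-wide operations and per-cell formulas can be illustrated with a small sketch. This is not Omnitab or VisiCalc syntax; the worksheet layout, column names, and the `multiply_columns` helper are hypothetical and exist only to show the two styles side by side.

```python
# Hypothetical worksheet: each named column holds one value per row.
worksheet = {
    "length_m": [1.0, 2.0, 3.0],
    "width_m": [0.5, 0.5, 2.0],
}


def multiply_columns(sheet, left, right, result):
    """Column-style operation: one command fills an entire result column."""
    sheet[result] = [a * b for a, b in zip(sheet[left], sheet[right])]


# One command computes every row of the new column at once.
multiply_columns(worksheet, "length_m", "width_m", "area_m2")

# Cell-style alternative: each cell may carry its own formula, so two cells
# in the same column can follow different rules.
cell_formulas = {
    ("area_m2", 0): lambda s: s["length_m"][0] * s["width_m"][0],
    ("area_m2", 2): lambda s: s["length_m"][2] + s["width_m"][2],
}
cell_values = {cell: formula(worksheet) for cell, formula in cell_formulas.items()}

if __name__ == "__main__":
    print(worksheet["area_m2"])
    print(cell_values)
```

In the column style, a single instruction applies the same rule to every row. In the cell style, each entry is defined on its own, which is closer to the per-cell model attributed to VisiCalc above.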

Omnitab initially used an old programming language and did not migrate to PCs until after its heyday. But its influence persists today in the form of Minitab, a PC-based commercial software package for teaching statistics and for research in business and manufacturing.

1967: Learning from Structural Failures

By understanding the history of structural failures, NIST engineers and materials scientists help to make sure they aren't repeated.

So it was in 1967, when the Point Pleasant Bridge linking West Virginia to Ohio suddenly collapsed, dumping vehicles into the Ohio River and killing 46 people. Transportation officials asked NIST to send an investigator. After arriving, John Bennett found a shallow crack that appeared to have initiated a fracture in a key piece of the bridge suspension system. This system was made of a relatively new carbon steel that, as was later learned, tended to crack. Many other cracks were found, all in areas of heavy corrosion.

The investigation underscored the importance of basic research in materials science and engineering. It also led to safer bridges nationwide. An identical bridge was closed in West Virginia, and federal highway officials then investigated cracks in all U.S. highway bridges.

Other high-profile failure analysis cases focused on ships and buildings. In the 1940s, a number of U.S. "Liberty" ships that had been welded together (instead of riveted) cracked apart when exposed to frigid water. NIST test results led to the use of better materials in supertankers.

In 1981, NIST was asked to investigate the collapse of walkways in the Kansas City Hyatt Regency Hotel—the nation's worst building collapse, which killed 114 people and injured more than 200.

Among the major conclusions: Critical connections in two walkways were capable of supporting less than one-third of the load expected under the local building code. This condition resulted from a change by the fabricator in the original design, and subsequent approval of that change by the design engineer, without further analysis. As a result of this case, the American Society of Civil Engineers adopted a document assigning, for the first time, responsibility for various aspects of the construction process.

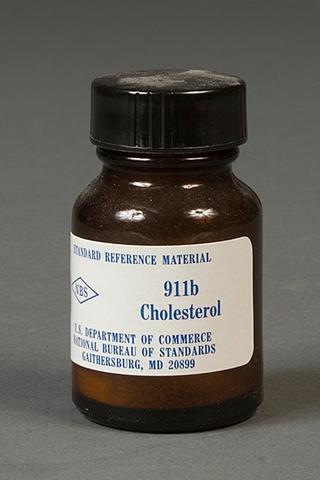

1967: Assuring Quality in Tests and Materials

Before 1967, it was difficult to know whether a person really had high cholesterol. That's because U.S. cholesterol tests were off by as much as 23 percent—resulting in either unnecessary treatment or an increased (and unacknowledged) risk of death.

Things changed for the better after 1967, when NIST produced its first Standard Reference Material® (SRM®) for clinical applications. This pure, crystalline material, laboriously measured and analyzed, was used by manufacturers and clinical labs to calibrate instruments for analyzing cholesterol. By 1969, the uncertainty in cholesterol tests had fallen to 18 percent. It fell further to about 5 percent in 1995, thanks to new and improved renewals of blood serum SRMs for cholesterol values, as well as other factors.

In recent decades, NIST has produced more than 60 different clinical chemistry standards, which help to assure the accuracy, reliability, and consistency of a wide variety of laboratory tests, from the amount of lead or glucose in blood to "DNA fingerprinting."

The clinical standards are just one category of SRMs, which date back to 1906 (when they were called standard samples). The program began when the Institute was asked to settle disputes regarding the carbon and sulfur contents of various irons and steels used by the railroad industry. At the time, virtually no technical information existed on the physical properties of these materials, and there were few standard test methods or calibrated measurement tools. NIST produced its first standard sample when the American Foundrymen's Association requested standardized iron for its member industries. Today, some 35,000 units of SRMs are sold each year.

1969: An Out-of-This-World Experiment

In their pioneering moon landing on July 20, 1969, the Apollo 11 crew left behind a briefcase-sized array of reflectors that bounces back a powerful laser pulse aimed at it from telescopes on Earth. By measuring the round-trip travel time for the pulse (about 2.5 seconds), scientists determined the distance between the Earth and moon to better than 2.5 cm (1 inch). The experiment, one of the space program's longest running and most cost-effective, was suggested by James Faller of JILA, a Boulder, Colo., research institute jointly operated by NIST and the University of Colorado. He also provided the initial design for the Apollo 11 array and the two additional reflector arrays that were left by later missions, Apollo 14 and Apollo 15. The experiment, which is still active today, continues to provide new insights into the length of the Earth's day; knowledge of the moon's orbit, the lunar tides, and the combined mass of the Earth and moon; and an important test of gravitational theories.
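The ranging arithmetic behind these figures can be sketched in a few lines. This is a minimal illustration, assuming the pulse travels at the vacuum speed of light and ignoring atmospheric delay and the other corrections a real analysis would apply; the function names are hypothetical, and the 2.5-second input is the rounded value quoted above.

```python
# Speed of light in vacuum, in meters per second (exact by SI definition).
C = 299_792_458.0


def one_way_distance_m(round_trip_s: float) -> float:
    """Distance to the reflector: the pulse covers the path twice."""
    return C * round_trip_s / 2.0


def timing_resolution_s(distance_uncertainty_m: float) -> float:
    """Round-trip timing precision needed for a given distance uncertainty."""
    return 2.0 * distance_uncertainty_m / C


if __name__ == "__main__":
    # Rounded 2.5 s round trip gives a distance of roughly 375,000 km.
    print(f"{one_way_distance_m(2.5) / 1000:.0f} km")
    # Resolving 2.5 cm requires timing the round trip to about 167 picoseconds.
    print(f"{timing_resolution_s(0.025) * 1e12:.0f} ps")
```

Because the round-trip time is only quoted to two significant figures, the computed distance is indicative rather than a measured value. The second function shows why the experiment depends on very precise timing: centimeter-level distances correspond to sub-nanosecond differences in travel time.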

Notice of Online Archive: This page is no longer being updated and remains online for informational and historical purposes only. The information is accurate as of 2001. For questions about page contents, please contact inquiries [at] nist.gov.