Summary

One promising approach to next-generation information processing is spintronics, in which information is carried by electron spin rather than by charge. Among the spintronic devices under development, magnetic tunnel junctions are particularly well suited to novel approaches to computing because they are multifunctional and compatible with standard integrated circuits. When operated near their thermal switching threshold, magnetic tunnel junctions exhibit complex stochastic behavior reminiscent of some aspects of brain activity. In other configurations, they oscillate when driven by a steady current. We are exploring the extent to which these novel functionalities can be applied to computing that emulates the brain.

Description

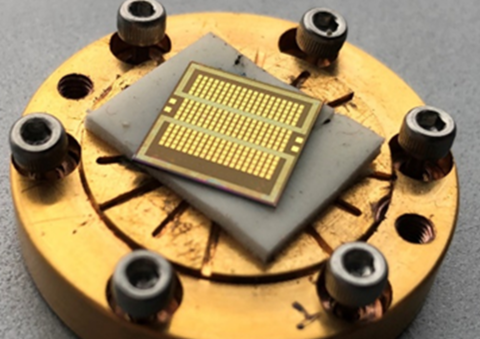

Fig. 1. Colorized scanning electron micrograph of a crossbar array with 30 nm diameter magnetic tunnel junctions, a schematic drawing of the crossbar, and a schematic drawing of a magnetic tunnel junction.

Magnetic tunnel junctions (see Fig. 1) consist of two thin ferromagnetic films separated by an insulating layer only a few atomic layers thick. The insulator is so thin that electrons can tunnel through it quantum mechanically, and the tunneling rate depends on the relative orientation of the magnetizations in the two ferromagnetic layers: electrons tunnel more easily when the magnetizations are parallel than when they are antiparallel. The resulting difference in resistance makes it straightforward to read the magnetic state with electronic circuits. Ease of reading is only one of two key features of these devices. The other is that the state can be changed by passing a current through the junction, because the spin-polarized current exerts a spin-transfer torque on the magnetization. Practical readout and current control of magnetic tunnel junctions are what enable the fast, dense, non-volatile memory (magnetoresistive random-access memory, MRAM) integrated into complementary metal-oxide-semiconductor (CMOS) circuits in commercial products today. They are also the basis of several proposed applications in novel computing schemes.
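To make the read operation concrete, the short Python sketch below computes the tunneling magnetoresistance (TMR) ratio, (R_AP - R_P)/R_P, and classifies a read current by comparing it with the midpoint between the currents expected in the two states. The resistances and the 0.1 V read bias are hypothetical illustrative values, not measurements from our devices, and a practical sense amplifier is considerably more involved.

```python
# Minimal sketch of reading an MTJ's state from its resistance.
# The resistance values and read voltage below are hypothetical, not measured data.

R_PARALLEL = 5_000.0       # ohms, low-resistance (parallel) state, assumed
R_ANTIPARALLEL = 10_000.0  # ohms, high-resistance (antiparallel) state, assumed
V_READ = 0.1               # volts, small read bias so reading does not switch the device, assumed


def tmr_ratio(r_p: float, r_ap: float) -> float:
    """Tunneling magnetoresistance ratio (R_AP - R_P) / R_P."""
    return (r_ap - r_p) / r_p


def read_state(current: float, r_p: float = R_PARALLEL, r_ap: float = R_ANTIPARALLEL,
               v_read: float = V_READ) -> str:
    """Classify a measured read current by comparing it with the midpoint
    between the currents expected in the two states."""
    i_p = v_read / r_p    # larger current: parallel state
    i_ap = v_read / r_ap  # smaller current: antiparallel state
    threshold = 0.5 * (i_p + i_ap)
    return "P" if current > threshold else "AP"


if __name__ == "__main__":
    print(f"TMR = {tmr_ratio(R_PARALLEL, R_ANTIPARALLEL):.0%}")  # TMR = 100%
    print(read_state(V_READ / R_PARALLEL))                      # P
    print(read_state(V_READ / R_ANTIPARALLEL))                  # AP
```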

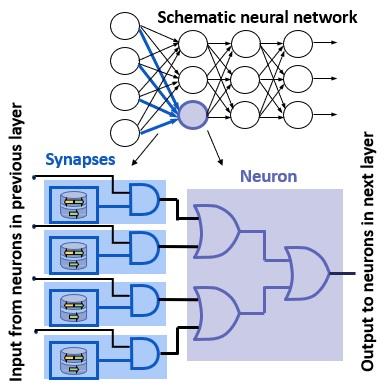

The most straightforward way to use magnetic tunnel junctions in a novel computing scheme is as controllable binary “weights” connecting the neurons of a neural network. Neural networks have become a workhorse of information technology, in applications ranging from image and voice recognition to search algorithms and even self-driving cars. Running them on conventional computing hardware is not as energy efficient as it could be, in large part because the weights must be moved repeatedly between memory and processor. Crossbar arrays of programmable devices, such as magnetic tunnel junctions, address this inefficiency by storing the weights in the devices themselves and computing the weighted sums in place: each device passes a current proportional to its conductance times the applied voltage, and the currents add along each column. Working with Western Digital, we have implemented such a crossbar array and used it to solve a simple neural network task. We are now building larger and improved arrays to solve more complicated tasks and to learn which measurements are needed on arrays of such devices to bring them to commercialization.
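The in-place weighted sum can be illustrated with the following Python sketch. It assumes, for illustration only, that each signed binary weight is stored in a differential pair of junctions on a "plus" and a "minus" column, with hypothetical conductances for the parallel and antiparallel states; the array we built with Western Digital may be organized differently. Subtracting the two column currents and dividing by the conductance difference recovers the sum of the input voltages times ±1 weights, and a sign function then plays the role of a binary neuron.

```python
# Minimal sketch of a crossbar computing weighted sums with binary MTJ weights.
# Assumptions (not from the source): each signed weight uses a differential pair of
# MTJs on a "plus" and a "minus" column; the conductances are illustrative values.

G_P = 1 / 5_000.0    # siemens, parallel (high-conductance) state, assumed
G_AP = 1 / 10_000.0  # siemens, antiparallel (low-conductance) state, assumed


def program_crossbar(weights):
    """Map a matrix of +1/-1 weights to (plus, minus) conductance matrices."""
    plus = [[G_P if w > 0 else G_AP for w in row] for row in weights]
    minus = [[G_AP if w > 0 else G_P for w in row] for row in weights]
    return plus, minus


def column_currents(voltages, conductances):
    """Ohm's law per device, Kirchhoff's current law per column: I_j = sum_i V_i * G_ij."""
    n_cols = len(conductances[0])
    return [sum(v * row[j] for v, row in zip(voltages, conductances)) for j in range(n_cols)]


def crossbar_dot(voltages, weights):
    """Return sum_i V_i * w_ij for each column, recovered from differential currents."""
    plus, minus = program_crossbar(weights)
    i_plus = column_currents(voltages, plus)
    i_minus = column_currents(voltages, minus)
    delta_g = G_P - G_AP
    return [(a - b) / delta_g for a, b in zip(i_plus, i_minus)]


if __name__ == "__main__":
    weights = [[1, -1],
               [1, -1],
               [-1, 1]]           # 3 inputs x 2 output neurons
    inputs = [0.3, 0.1, 0.2]      # input voltages in volts
    sums = crossbar_dot(inputs, weights)
    outputs = [1 if s > 0 else -1 for s in sums]  # simple sign activation
    print(sums, outputs)
```

With these values the column sums are about 0.2 and -0.2, so the two neurons output +1 and -1.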

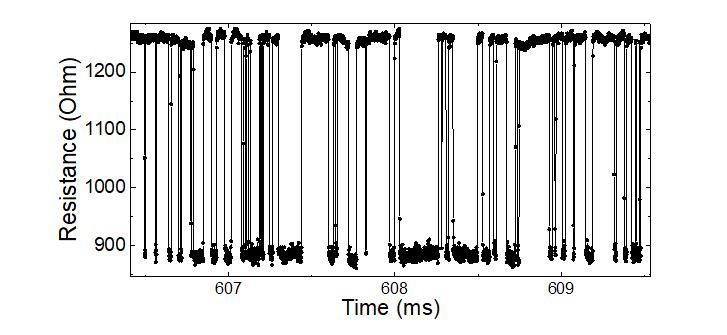

When the energy barrier separating the parallel and antiparallel states of a magnetic tunnel junction becomes comparable to the ambient thermal energy, the junction spontaneously flips back and forth between these two states (see Fig. 2). Such junctions are said to be superparamagnetic. When current passes through the device in one direction, the junction spends more time in one state and the flipping rate decreases. When current flows in the opposite direction, the junction spends more time in the other state, and the flipping rate again decreases. The flipping rate is highest at an intermediate current close to zero, where the junction spends equal time in each state. We are measuring the properties of such devices, how they respond to external inputs such as periodic voltages and noise, and how their time-varying resistance, coupled with spin torques, allows one fluctuating device to influence others. We are also developing efficient models of these devices so that they can be incorporated into circuit simulation software.
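The dependence of the flipping rate on current can be reproduced with a simple two-state model. The Python sketch below assumes a common simplification, not our measured device model: the mean dwell time in each state follows the Néel-Brown form τ = τ₀ exp(Δ), with the barrier Δ (in units of k_BT) raised for one state and lowered for the other in proportion to the normalized current i = I/I_c. The attempt time τ₀ = 1 ns and Δ = 10 are hypothetical. Because the sum of the two dwell times, 2τ₀e^Δ cosh(Δi), is smallest at i = 0, the model's flip rate peaks where the junction spends equal time in each state.

```python
import math
import random

# Minimal sketch of a superparamagnetic tunnel junction as a two-state telegraph process.
# Assumed model (a common simplification, not a measured device model): Neel-Brown dwell
# times tau = TAU0 * exp(barrier), with spin torque tilting the barrier linearly in the
# normalized current i = I / I_c.

TAU0 = 1e-9   # seconds, attempt time, assumed
DELTA = 10.0  # energy barrier in units of k_B T, assumed


def mean_dwell_times(i: float):
    """Mean dwell times (tau_P, tau_AP) at normalized current i."""
    tau_p = TAU0 * math.exp(DELTA * (1 + i))   # positive i stabilizes P
    tau_ap = TAU0 * math.exp(DELTA * (1 - i))  # and destabilizes AP
    return tau_p, tau_ap


def fraction_in_p(i: float) -> float:
    """Long-time fraction of time spent in the parallel state."""
    tau_p, tau_ap = mean_dwell_times(i)
    return tau_p / (tau_p + tau_ap)


def flip_rate(i: float) -> float:
    """Average switching events per second: two flips per P -> AP -> P cycle."""
    tau_p, tau_ap = mean_dwell_times(i)
    return 2.0 / (tau_p + tau_ap)


def simulate_fraction_in_p(i: float, n_cycles: int, rng: random.Random) -> float:
    """Draw exponential dwell times for n_cycles visits to each state and
    return the simulated fraction of time spent in P."""
    tau_p, tau_ap = mean_dwell_times(i)
    t_p = sum(rng.expovariate(1.0 / tau_p) for _ in range(n_cycles))
    t_ap = sum(rng.expovariate(1.0 / tau_ap) for _ in range(n_cycles))
    return t_p / (t_p + t_ap)


if __name__ == "__main__":
    rng = random.Random(0)
    for i in (-0.2, -0.1, 0.0, 0.1, 0.2):
        print(f"i={i:+.1f}  P fraction={fraction_in_p(i):.3f}  "
              f"flips/s={flip_rate(i):.3g}  "
              f"simulated={simulate_fraction_in_p(i, 10_000, rng):.3f}")
```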

In the brain, some neurons emit spikes in seemingly random patterns, encoding information sometimes in their average rate and sometimes in the arrival time of individual spikes. This neuronal spiking operates at energies very close to the thermal limit; that is, ordinary thermal fluctuations can randomly create extra events or cause others to be lost. Such behavior suggests that computers operating close to the thermal limit could be practical if they were designed to be resilient to thermal noise. A resilient computer of this kind could function using much less power than conventional computers.

One computational approach that can be implemented with superparamagnetic tunnel junctions is stochastic computing. In stochastic computing, every number lies between zero and one and is represented not as a binary number but as a random bitstream in which each bit is 1 with a probability equal to that number. Arithmetic then becomes very cheap; for example, multiplying two numbers requires only a single AND gate acting on two independent bitstreams. We have designed a low-energy bitstream generator based on superparamagnetic tunnel junctions. It consumes less energy than equivalent generators built only from CMOS circuitry and, unlike those generators, which produce pseudorandom sequences, it generates truly random bitstreams. We have shown how such bitstream generators can be combined with CMOS circuitry to carry out stochastic computing that identifies handwritten digits effectively and efficiently (see Fig. 3).
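The arithmetic of stochastic computing can be sketched in a few lines of Python. Here `random.Random` stands in for the superparamagnetic bitstream generator, an idealization that treats each sampled bit as independent (a real junction sampled faster than its dwell time produces correlated bits). An AND gate multiplies two values, and a multiplexer driven by a p = 0.5 select stream computes their scaled sum. The function names are hypothetical.

```python
import random

# Minimal sketch of unipolar stochastic computing.
# Assumption: each superparamagnetic-junction bitstream generator is idealized as an
# independent Bernoulli source; random.Random stands in for the device.


def bitstream(p: float, n: int, rng: random.Random):
    """Bitstream whose bits are 1 with probability p, encoding the number p in [0, 1]."""
    return [1 if rng.random() < p else 0 for _ in range(n)]


def decode(bits) -> float:
    """The represented value is the fraction of ones."""
    return sum(bits) / len(bits)


def multiply(a, b):
    """A single AND gate multiplies independent streams: P(a AND b) = p_a * p_b."""
    return [x & y for x, y in zip(a, b)]


def scaled_add(a, b, select):
    """A multiplexer with a p = 0.5 select stream computes (p_a + p_b) / 2."""
    return [x if s else y for x, y, s in zip(a, b, select)]


if __name__ == "__main__":
    rng = random.Random(42)
    n = 10_000
    a, b = bitstream(0.5, n, rng), bitstream(0.4, n, rng)
    half = bitstream(0.5, n, rng)
    print(decode(multiply(a, b)))          # close to 0.5 * 0.4 = 0.20
    print(decode(scaled_add(a, b, half)))  # close to (0.5 + 0.4) / 2 = 0.45
```

Longer bitstreams give more precise results, but the statistical error of a decoded value shrinks only as 1/√n, which is the main accuracy cost of stochastic computing.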

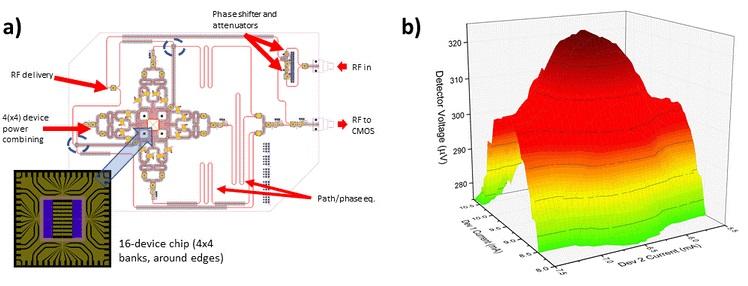

Some collections of neurons spike in a periodic pattern in response to certain stimuli. The coupling between such oscillations and sympathetic oscillations in other parts of the brain is thought to be an efficient way of processing sets of stimuli. This behavior suggests that it might be efficient to compute with sets of coupled oscillators. Such coupling can be mapped onto a variety of computations, particularly those, such as pattern matching, that seek a relative ranking or choice rather than a high-precision result. For example, we have recently demonstrated that spin-torque oscillators, through their ability to phase lock, show promise as non-Boolean, or neuromorphic, computational devices. In these devices, the nonlinear processes of frequency pulling and phase locking can be used to measure how “close” a test value is to a reference value, which is the essential calculation for “degree of match”. The degree of phase coherence can then be mapped onto a “distance” measure. We fabricated arrays of injection-locked spin-torque oscillators (see Fig. 4) and showed that they can act as a system to calculate the degree of match of a set of test images. In addition, we showed that such arrays can be repurposed to calculate convolutions.
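A common abstraction for injection locking is the Adler equation, dφ/dt = Δω - K sin φ, where φ is an oscillator's phase relative to the reference, Δω its frequency detuning, and K the locking strength; the oscillator locks when |Δω| ≤ K. The Python sketch below assumes this model rather than the full device physics and, hypothetically, sets each oscillator's detuning in proportion to the difference between one element of the test vector and the matching element of the reference. The time-averaged phase coherence |⟨e^{iφ}⟩| is 1 for a locked oscillator and falls toward 0 as the detuning grows, so the array average serves as a degree-of-match score.

```python
import math

# Minimal sketch of "degree of match" with injection-locked oscillators.
# Assumptions (a simplification, not the fabricated device physics): each oscillator's
# phase relative to a common reference obeys the Adler equation
#     d(phi)/dt = detuning - K * sin(phi),
# and each detuning is proportional to the difference between one element of the test
# vector and the corresponding element of the reference vector.

K = 1.0       # locking strength (rad per unit time), assumed
GAIN = 3.0    # detuning per unit input difference, assumed
DT = 0.005    # forward-Euler time step
STEPS = 20_000


def phase_coherence(detuning: float) -> float:
    """Integrate the Adler equation and return |<exp(i*phi)>| averaged over the
    second half of the run (after the transient). 1.0 means phase locked."""
    phi = 0.0
    sum_cos = sum_sin = 0.0
    n_avg = 0
    for step in range(STEPS):
        phi += DT * (detuning - K * math.sin(phi))
        if step >= STEPS // 2:
            sum_cos += math.cos(phi)
            sum_sin += math.sin(phi)
            n_avg += 1
    return math.hypot(sum_cos, sum_sin) / n_avg


def degree_of_match(test, reference) -> float:
    """Average coherence over the array: near 1 for a match, smaller for a mismatch."""
    coherences = [phase_coherence(GAIN * (t - r)) for t, r in zip(test, reference)]
    return sum(coherences) / len(coherences)


if __name__ == "__main__":
    ref = [0.0, 1.0, 0.0, 1.0]
    for test in ([0.0, 1.0, 0.0, 1.0], [0.0, 1.0, 0.0, 0.0], [1.0, 0.0, 1.0, 0.0]):
        print(test, round(degree_of_match(test, ref), 3))
```

For the reference [0, 1, 0, 1], an identical test vector scores 1.0, a vector differing in one element scores lower, and a vector differing in every element scores lowest, which is the relative ranking that pattern matching needs.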

Another way to take advantage of the ability of these oscillators to lock to an external signal is to use an array of them for image reconstruction. We have simulated an array of oscillators, together with the associated CMOS circuitry, that reconstructs stored images from noisy or incomplete inputs. The simulations show that the circuit could reconstruct 16 × 12 color images using only 120 nJ of energy.
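The reconstruction algorithm is not specified here. A common idealization of oscillator-based associative memory, assumed in the Python sketch below, treats each oscillator as locking either in phase (+1) or in antiphase (-1) with a reference, in which case an array with Hebbian coupling behaves like a binary Hopfield network. The tiny 16-pixel "images" and all function names are hypothetical; the sketch shows only how stored patterns act as attractors that pull a corrupted input back to the nearest stored image.

```python
# Minimal sketch of associative image reconstruction, assuming the idealization in which
# each oscillator locks either in phase (+1) or in antiphase (-1) with a reference, so an
# oscillator array with Hebbian coupling behaves like a binary Hopfield network.
# This is an illustrative stand-in, not the simulated circuit described above.


def hebbian_weights(patterns):
    """W_ij = (1/N) * sum over stored patterns of p_i * p_j, with zero self-coupling."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    w[i][j] += p[i] * p[j] / n
    return w


def recall(w, state, max_sweeps=100):
    """Asynchronous updates: each pixel aligns with its local field until nothing changes."""
    s = list(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(s)):
            field = sum(w[i][j] * s[j] for j in range(len(s)))
            new = 1 if field >= 0 else -1
            if new != s[i]:
                s[i], changed = new, True
        if not changed:
            break
    return s


if __name__ == "__main__":
    # Two tiny stored "images" of 16 binary pixels (+1 / -1), chosen to be orthogonal.
    p1 = [1] * 8 + [-1] * 8
    p2 = [1, -1] * 8
    w = hebbian_weights([p1, p2])
    noisy = list(p1)
    noisy[0], noisy[9] = -noisy[0], -noisy[9]  # corrupt two pixels
    print(recall(w, noisy) == p1)              # True
```

Corrupting two of the 16 pixels of the first pattern still leads back to it, because the corrupted state remains much closer to the first stored pattern than to the second.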

Other sets of oscillators can form recurrent networks, that is, networks whose output depends not only on the input but also on the current state of the network. Such networks can be extremely efficient processors for time-dependent tasks such as speech recognition. We have demonstrated that appropriately shaped magnetic tunnel junction oscillators can act as recurrent networks by using them in a demonstration of reservoir computing. With the development of efficient ways to couple groups of such oscillators, they could form the basis of extremely efficient computers for tasks such as voice and video recognition. Their potential for scaling to very small sizes suggests that such oscillators could be among the most energy-efficient and compact elements in an integrated circuit.
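The following Python sketch illustrates the reservoir-computing idea under stated assumptions. It uses one common design for single-device reservoirs: a single nonlinear node is time-multiplexed into 20 "virtual nodes" by an input mask, with `tanh` standing in for the oscillator's nonlinear response and each virtual node coupled to its neighbor's previous value. These choices, the parameter values, and the toy task (recalling the previous input u(n-1)) are hypothetical. Only the linear readout is trained, here by ridge regression solved with Gaussian elimination; the reservoir itself is left untouched, which is what makes the approach attractive for physical devices whose internal couplings are hard to adjust.

```python
import math
import random

# Minimal sketch of reservoir computing with one nonlinear node time-multiplexed into
# virtual nodes. Assumptions: tanh stands in for the oscillator's nonlinear response,
# each virtual node couples to its neighbor's previous value (delay-line ring), and the
# toy task is recalling the previous input u(n-1). Only the linear readout is trained.

N_NODES = 20
INPUT_GAIN = 0.3
FEEDBACK = 0.5


def run_reservoir(inputs, mask):
    """Return one state vector per input sample."""
    x = [0.0] * N_NODES
    states = []
    for u in inputs:
        # Node k sees the masked input plus node k-1's value from the previous step.
        x = [math.tanh(INPUT_GAIN * mask[k] * u + FEEDBACK * x[k - 1]) for k in range(N_NODES)]
        states.append(x)
    return states


def solve(a, b):
    """Solve a @ w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        w[r] = (m[r][n] - sum(m[r][c] * w[c] for c in range(r + 1, n))) / m[r][r]
    return w


def train_readout(states, targets, ridge=1e-6):
    """Ridge regression (X^T X + ridge*I) w = X^T y, with a constant bias feature."""
    feats = [s + [1.0] for s in states]
    d = len(feats[0])
    xtx = [[sum(f[i] * f[j] for f in feats) + (ridge if i == j else 0.0)
            for j in range(d)] for i in range(d)]
    xty = [sum(f[i] * y for f, y in zip(feats, targets)) for i in range(d)]
    return solve(xtx, xty)


def predict(w, states):
    return [sum(wi * fi for wi, fi in zip(w, s + [1.0])) for s in states]


def nmse(pred, targets):
    """Normalized mean squared error: 0 is perfect, 1 matches predicting the mean."""
    mean = sum(targets) / len(targets)
    var = sum((y - mean) ** 2 for y in targets)
    return sum((p - y) ** 2 for p, y in zip(pred, targets)) / var


if __name__ == "__main__":
    rng = random.Random(0)
    mask = [rng.uniform(-1.0, 1.0) for _ in range(N_NODES)]
    u = [rng.uniform(-1.0, 1.0) for _ in range(600)]
    states = run_reservoir(u, mask)
    washout = 100                                         # discard the initial transient
    x_train, y_train = states[washout:], u[washout - 1:-1]  # target is u(n-1)
    w = train_readout(x_train, y_train)
    print("training NMSE:", nmse(predict(w, x_train), y_train))
```

Because the target is the previous input, a low normalized mean squared error (NMSE) indicates that the reservoir state retains a memory of past inputs, which is the recurrent property described above.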