Summary

The Polymer Analytics project was established to accelerate the discovery of knowledge for polymer design. We develop datasets, methods, and tools that integrate the four scientific paradigms (experiment, theory, computation, and machine learning), with a focus on broad problems such as the lack of large datasets for machine learning, limited computational resources, and polymer waste streams.

Description

This project pursues several activities toward the goal stated above, each aimed at accelerating the discovery of new polymer physics.

Enhanced machine learning

Two major hurdles to applying machine learning to polymer science are the lack of large datasets and the need to understand model predictions, often in terms of the underlying physics. We tackle both challenges simultaneously by developing new methods, including ones that incorporate theory and domain knowledge into machine learning. Our efforts enable the widespread use of machine learning to accelerate the discovery of new polymer physics. For an example, see our Theory aware Machine Learning code.

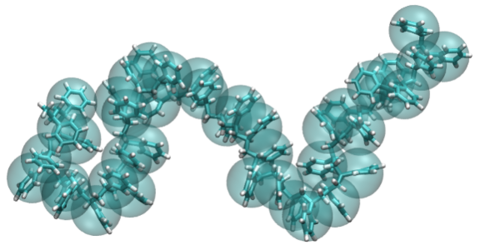
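
One generic way to fold theory into a data-driven model is to let the theory make the first prediction and fit only the remaining discrepancy. The sketch below illustrates that idea on synthetic values and is not taken from the project's Theory aware Machine Learning code. The scaling-law baseline, the exponent value, the function names, and the data are all assumptions chosen for illustration.

```python
import math


def theory_log_rg(log_n, nu=0.588, log_b=0.0):
    """Assumed theory baseline: log Rg = log b + nu * log N (illustrative scaling law)."""
    return log_b + nu * log_n


def fit_line(xs, ys):
    """Ordinary least squares for y = a + c * x; returns (a, c)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    c = sxy / sxx
    a = mean_y - c * mean_x
    return a, c


def fit_theory_aware(log_ns, log_rgs):
    """Fit a linear correction to the residual between data and the theory baseline."""
    residuals = [y - theory_log_rg(x) for x, y in zip(log_ns, log_rgs)]
    a, c = fit_line(log_ns, residuals)

    def predict(log_n):
        # Final prediction = theory baseline + learned correction.
        return theory_log_rg(log_n) + a + c * log_n

    return predict


# Synthetic, noise-free training data (made up for this sketch).
log_ns = [math.log(n) for n in (10, 20, 50, 100)]
log_rgs = [0.1 + 0.6 * x for x in log_ns]

predict = fit_theory_aware(log_ns, log_rgs)
print(predict(math.log(1000)))
```

Because the learned part only has to capture a small correction, a simple model fitted on few points can still extrapolate sensibly where the theory is approximately right. That is the motivation for this kind of approach when datasets are small.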

Molecular Simulation Tools

We develop digital data infrastructure for the multiscale modeling of polymers, starting at the molecular level, to address limited computational resources and the time-consuming setup of both atomistic and coarse-grained molecular simulations. Specific efforts include WebFF: Force-field repository for organic and soft materials and COMSOFT Workbench: Tools for Efficient Coarse-Grained Modeling of Soft Materials. The latter includes recent advances in preserving dynamics, in addition to thermodynamics, during coarse-graining, as detailed here. Our portfolio also includes ZENO: A software tool for computation of material, solution, and suspension properties for a specified particle shape or molecular structure using path-integral and Monte Carlo methods. The open-source code can be found here.

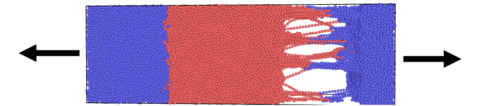
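
To give a feel for how Monte Carlo sampling can turn a particle shape into a property estimate, the sketch below computes the volume of a union of spheres by hit-or-miss sampling. It is a plain textbook technique written for illustration and is not ZENO's implementation or interface. The function name, the sphere-tuple format, and the sample count are assumptions.

```python
import random


def union_volume(spheres, n_samples=100_000, seed=0):
    """Estimate the volume of a union of spheres by hit-or-miss Monte Carlo.

    spheres: list of (x, y, z, radius) tuples (illustrative shape description).
    """
    rng = random.Random(seed)

    # Axis-aligned bounding box that encloses every sphere.
    lo = [min(s[i] - s[3] for s in spheres) for i in range(3)]
    hi = [max(s[i] + s[3] for s in spheres) for i in range(3)]
    box_volume = 1.0
    for a, b in zip(lo, hi):
        box_volume *= b - a

    hits = 0
    for _ in range(n_samples):
        # Draw a uniform random point inside the bounding box.
        p = [rng.uniform(a, b) for a, b in zip(lo, hi)]
        # Count the point if it falls inside at least one sphere.
        if any(
            sum((p[i] - s[i]) ** 2 for i in range(3)) <= s[3] ** 2
            for s in spheres
        ):
            hits += 1

    # The fraction of hits scales the box volume to the shape volume.
    return box_volume * hits / n_samples


# Two overlapping unit spheres as a toy "molecular structure".
print(union_volume([(0.0, 0.0, 0.0, 1.0), (1.0, 0.0, 0.0, 1.0)]))
```

The statistical error of such an estimate shrinks as the inverse square root of the number of samples, so accuracy can be traded directly against compute time.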

Circular Economy

To enable next-generation recycling, we apply simulation techniques and machine learning. For example, we use near-infrared spectroscopy combined with machine learning to predict polymer properties, enabling property-based sortation. This effort is in collaboration with the Macromolecular Architectures project. Additional details can be found on that project page.

Polymer databases

In collaboration with partners, we build FAIR (findable, accessible, interoperable, reusable) data resources that enable machine learning across the entire polymers community and provide data to test emerging theories. Specifically, in collaboration with MIT, University of Chicago, Citrine Informatics, and Dow, we contributed to the development of A Community Resource for Innovation in Polymer Technology. In collaboration with CHiMaD and the Air Force Research Laboratory, we contributed to the development of the Polymer Property Predictor and Database.

Other efforts

We also apply our skill sets in molecular simulation and machine learning in close collaboration with other projects in the Materials Science and Engineering Division. Please see Related NIST Projects (to the right) for details.

Publications

For the most up-to-date publications, please visit the websites of staff members.