Nanomagnets Can Choose a Wine, and Could Slake AI’s Thirst for Energy

A new type of neural network aced a virtual wine-tasting test and promises a less energy-hungry version of artificial intelligence.

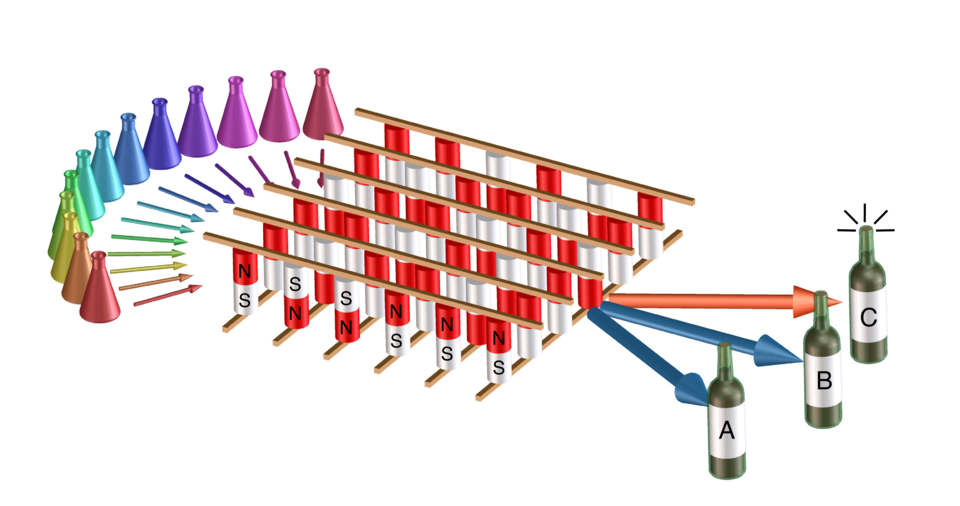

By analyzing the different characteristics of wines, such as acidity, fruitiness and bitterness (represented as colored flasks on the left), a novel AI system (center) successfully determined which type of wine it was (right). The AI system is based on magnetic devices known as "magnetic tunnel junctions," and was designed and built by researchers at NIST, the University of Maryland and Western Digital.

Human brains process loads of information. When wine aficionados taste a new wine, neural networks in their brains process an array of data from each sip. Synapses in their neurons fire, weighing the importance of each bit of data — acidity, fruitiness, bitterness — before passing it along to the next layer of neurons in the network. As information flows, the brain parses out the type of wine.

Scientists want artificial intelligence (AI) systems to be sophisticated data connoisseurs too, and so they design computer versions of neural networks to process and analyze information. AI is catching up to the human brain in many tasks, but it usually consumes a lot more energy to do the same things. Our brains make these calculations while consuming an estimated average of 20 watts of power. An AI system can use thousands of times that. The hardware that AI runs on can also lag, making AI slower, less efficient and less effective than our brains. A large field of AI research is looking for less energy-intensive alternatives.

Now, in a study published in the journal Physical Review Applied, scientists at the National Institute of Standards and Technology (NIST) and their collaborators have developed a new type of hardware for AI that could use less energy and operate more quickly — and it has already passed a virtual wine-tasting test.

As with traditional computer systems, AI comprises both physical hardware circuits and software. AI system hardware often contains a large number of conventional silicon chips that are energy thirsty as a group: Training one state-of-the-art commercial natural language processor, for example, consumes roughly 190 megawatt hours (MWh) of electrical energy, about the amount that 16 people in the U.S. use in an entire year. And that’s before the AI does a day of work on the job it was trained for.
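To put the training figure in perspective, the short sketch below works through the arithmetic using only numbers quoted in this article: 190 MWh for training, 16 people, and the 20-watt estimate for the brain. The variable names and the 8,760-hour year are illustrative assumptions, not values from the study.

```python
# Figures quoted in the article
training_energy_mwh = 190.0   # energy to train one language model
people = 16                   # U.S. residents using that much in a year
brain_power_watts = 20.0      # estimated average power of a human brain

# Assumption for illustration: a year of 365 days, 24 hours each
hours_per_year = 365 * 24

# Implied annual electricity use per person
per_person_mwh = training_energy_mwh / people

# Energy one brain uses in a year, converted from watt-hours to MWh
brain_year_mwh = brain_power_watts * hours_per_year / 1_000_000

# How many years a single brain could run on the training energy
brain_years = training_energy_mwh / brain_year_mwh

print(f"Per person: {per_person_mwh:.1f} MWh per year")
print(f"Brain-years of energy: {brain_years:.0f}")
```

On these numbers, a single training run uses about as much energy as one brain would consume over roughly a thousand years.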

A less energy-intensive approach would be to use other kinds of hardware to create AI’s neural networks, and research teams are searching for alternatives. One device that shows promise is a magnetic tunnel junction (MTJ), which is good at the kinds of math a neural network uses and needs only a few sips of energy by comparison. Other novel devices based on MTJs have been shown to use several times less energy than their traditional hardware counterparts. MTJs can also operate more quickly because they store data in the same place they do their computation, unlike conventional chips that store data elsewhere. Perhaps best of all, MTJs are already important commercially. They have served as the read-write heads of hard disk drives for years and are being used as novel computer memories today.

Though the researchers have confidence in the energy efficiency of MTJs based on their past performance in hard drives and other devices, energy consumption was not the focus of the present study. First, they needed to know whether an array of MTJs could work as a neural network at all. To find out, they took it for a virtual wine-tasting.

Scientists with NIST’s Hardware for AI program and their University of Maryland colleagues fabricated and programmed a very simple neural network from MTJs provided by their collaborators at Western Digital’s Research Center in San Jose, California.

Just like any wine connoisseur, the AI system needed to train its virtual palate. The team trained the network using 148 of the wines from a dataset of 178 made from three types of grapes. Each virtual wine had 13 characteristics to consider, such as alcohol level, color, flavonoids, ash, alkalinity and magnesium. Each characteristic was assigned a value between 0 and 1 for the network to consider when distinguishing one wine from the others.
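The data preparation described above can be sketched in a few lines. This is a minimal illustration, not the researchers' code: the article does not say how values were mapped to the range 0 to 1 or how the 148 training wines were chosen, so min-max scaling and a random split are assumptions here, and the measurements are random placeholders rather than real wine data.

```python
import random

def min_max_scale(rows):
    """Rescale each column of `rows` to the range [0, 1] (assumed method)."""
    columns = list(zip(*rows))
    lows = [min(col) for col in columns]
    highs = [max(col) for col in columns]
    return [
        [(v - lo) / (hi - lo) if hi > lo else 0.0
         for v, lo, hi in zip(row, lows, highs)]
        for row in rows
    ]

def split(rows, n_train, seed=0):
    """Shuffle the rows and return (training rows, held-out rows)."""
    rng = random.Random(seed)
    order = list(range(len(rows)))
    rng.shuffle(order)
    train = [rows[i] for i in order[:n_train]]
    held_out = [rows[i] for i in order[n_train:]]
    return train, held_out

# Placeholder data: 178 wines with 13 characteristics each (random values)
rng = random.Random(42)
wines = [[rng.uniform(0, 100) for _ in range(13)] for _ in range(178)]

scaled = min_max_scale(wines)
train, held_out = split(scaled, n_train=148)
print(len(train), len(held_out))
```

Putting every characteristic on the same 0-to-1 scale keeps a feature with large raw numbers, such as magnesium content, from drowning out one with small numbers, such as ash.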

“It’s a virtual wine tasting, but the tasting is done by analytical equipment that is more efficient but less fun than tasting it yourself,” said NIST physicist Brian Hoskins.

Then the network was given a virtual wine-tasting test on the full dataset, which included 30 wines it hadn’t seen before. The system passed with a 95.3% success rate on the full dataset. On the 30 wines it hadn’t trained on, it made only two mistakes. The researchers considered this a good sign.
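For readers who want to check the numbers, the sketch below computes the success rate on the 30 unseen wines from the two mistakes reported. The function name and structure are illustrative, not taken from the study.

```python
def accuracy(n_correct: int, n_total: int) -> float:
    """Return the share of correct classifications as a percentage."""
    if n_total <= 0:
        raise ValueError("n_total must be positive")
    return 100.0 * n_correct / n_total

held_out_wines = 30   # wines not used in training (from the article)
mistakes = 2          # misclassified held-out wines (from the article)

held_out_accuracy = accuracy(held_out_wines - mistakes, held_out_wines)
print(f"{held_out_accuracy:.1f}%")  # prints 93.3%
```

The 95.3% figure is reported for the full 178-wine dataset, which includes the wines used in training, so it is measured against a different total than the result for the unseen wines.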

“Getting 95.3% tells us that this is working,” said NIST physicist Jabez McClelland.

The point is not to build an AI sommelier. Rather, this early success shows that an array of MTJ devices could potentially be scaled up and used to build new AI systems. While the amount of energy an AI system uses depends on its components, using MTJs as synapses could cut its energy use by half, if not more, which could enable lower power use in applications such as “smart” clothing, miniature drones, or sensors that process data at the source.

“It’s likely that significant energy savings over conventional software-based approaches will be realized by implementing large neural networks using this type of array,” said McClelland.

—Reported and written by Chad Boutin and Rebecca Jacobson

Paper: J.M. Goodwill, N. Prasad, B.D. Hoskins, M.W. Daniels, A. Madhavan, L. Wan, T.S. Santos, M. Tran, J.A. Katine, P.M. Braganca, M.D. Stiles and J.J. McClelland. Implementation of a Binary Neural Network on a Passive Array of Magnetic Tunnel Junctions. Physical Review Applied. Published online July 18, 2022. DOI: 10.1103/PhysRevApplied.18.014039