Cybersecurity Insights

a NIST blog

Differential Privacy for Privacy-Preserving Data Analysis: An Introduction to our Blog Series

Does your organization want to aggregate and analyze data to learn trends, but in a way that protects privacy? Or perhaps you are already using differential privacy tools, but want to expand (or share) your knowledge? In either case, this blog series is for you.

Why are we doing this series? Last year, NIST launched a Privacy Engineering Collaboration Space to aggregate open source tools, solutions, and processes that support privacy engineering and risk management. As moderators for the Collaboration Space, we’ve helped NIST gather differential privacy tools under the topic area of de-identification. NIST also has published the Privacy Framework: A Tool for Improving Privacy through Enterprise Risk Management and a companion roadmap that recognized a number of challenge areas for privacy, including the topic of de-identification. Now we’d like to leverage the Collaboration Space to help close the roadmap’s gap on de-identification. Our end-game is to support NIST in turning this series into more in-depth guidelines on differential privacy.

Each post will begin with conceptual basics and practical use cases, aimed at helping professionals such as business process owners or privacy program personnel learn just enough to be dangerous (just kidding). After covering the basics, we’ll look at available tools and their technical approaches for privacy engineers or IT professionals interested in implementation details. To get everyone up to speed, this first post will provide background on differential privacy and describe some key concepts that we’ll use in the rest of the series.

The Challenge

How can we use data to learn about a population, without learning about specific individuals within the population? Consider these two questions:

- “How many people live in Vermont?”

- “How many people named Joe Near live in Vermont?”

The first reveals a property of the whole population, while the second reveals information about one person. We need to be able to learn about trends in the population without learning anything new about a particular individual. This is the goal of many statistical analyses of data, such as the statistics published by the U.S. Census Bureau, and of machine learning more broadly. In each of these settings, models are intended to reveal trends in populations, not to reflect information about any single individual.

But how can we answer the first question, “How many people live in Vermont?” (which we’ll refer to as a query), while preventing the second question, “How many people named Joe Near live in Vermont?”, from being answered? The most widely used solution is called de-identification (or anonymization), which removes identifying information from the dataset. (We’ll generally assume a dataset contains information collected from many individuals.) Another option is to allow only aggregate queries, such as an average over the data. Unfortunately, we now understand that neither approach actually provides strong privacy protection. De-identified datasets are subject to database-linkage attacks. Aggregation only protects privacy if the groups being aggregated are sufficiently large, and even then, privacy attacks are still possible [1, 2, 3, 4].
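To make the weakness of aggregate-only queries concrete, here is a minimal Python sketch of what is often called a differencing attack. The records, the attribute, and the function name `count_with_attribute` are all made up for illustration; the point is only that two innocent-looking counts can be subtracted to isolate one person.

```python
# Hypothetical toy dataset of (name, state, has_attribute) records.
# All values are invented for illustration.
records = [
    ("Alice Smith", "Vermont", False),
    ("Joe Near", "Vermont", True),
    ("Bob Jones", "Vermont", False),
    ("Carol Wu", "Vermont", True),
]


def count_with_attribute(rows, exclude_name=None):
    """Aggregate-only query: count rows that have the attribute.

    `exclude_name` stands in for any filter that happens to leave out
    exactly one person (for example, a narrow date or location range).
    """
    return sum(1 for name, _, flag in rows if flag and name != exclude_name)


# Two aggregate queries, each covering a group of several people.
everyone = count_with_attribute(records)
everyone_but_joe = count_with_attribute(records, exclude_name="Joe Near")

# Subtracting the two aggregates isolates Joe's individual record.
joe_has_attribute = (everyone - everyone_but_joe) == 1
print("Joe has the attribute:", joe_has_attribute)
```

Neither query names an individual's value directly, yet together they reveal it. This is one reason that restricting analysts to aggregates is not, on its own, a strong protection.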

Differential Privacy

Differential privacy [5, 6] is a mathematical definition of what it means to have privacy. It is not a specific process like de-identification, but a property that a process can have. For example, it is possible to prove that a specific algorithm “satisfies” differential privacy.

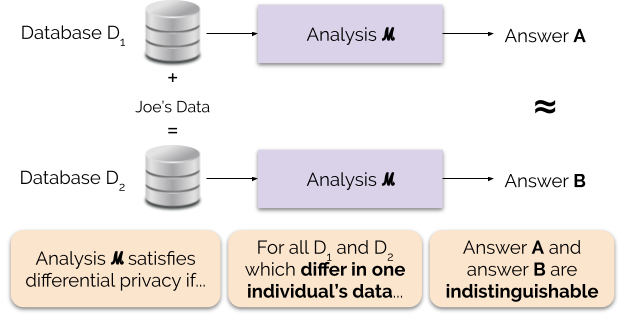

Informally, differential privacy guarantees the following for each individual who contributes data for analysis: the output of a differentially private analysis will be roughly the same, whether or not you contribute your data. A differentially private analysis is often called a mechanism, and we denote it ℳ.

Figure 1 illustrates this principle. Answer “A” is computed without Joe’s data, while answer “B” is computed with Joe’s data. Differential privacy says that the two answers should be indistinguishable. This implies that whoever sees the output won’t be able to tell whether or not Joe’s data was used, or what Joe’s data contained.

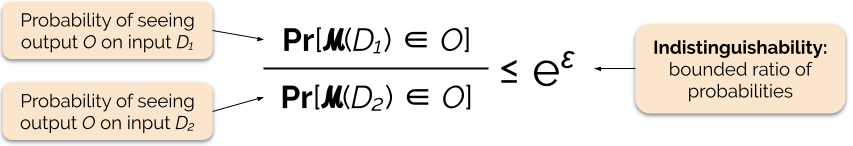

We control the strength of the privacy guarantee by tuning the privacy parameter ε, also called a privacy loss or privacy budget. The lower the value of the ε parameter, the more indistinguishable the results, and therefore the more each individual’s data is protected.
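For readers who want the formal statement, the standard definition from the literature [5, 7] can be written as below. The symbols D_1, D_2, and S are notation introduced here for illustration: D_1 and D_2 are any two datasets that differ in one individual's data, and S is any set of possible outputs.

```latex
\Pr[\mathcal{M}(D_1) \in S] \;\le\; e^{\varepsilon} \cdot \Pr[\mathcal{M}(D_2) \in S]
```

When ε is close to 0, the factor e^ε is close to 1, so the two output distributions are nearly identical. This is the precise sense in which a smaller ε makes the results "more indistinguishable."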

We can often answer a query with differential privacy by adding some random noise to the query’s answer. The challenge lies in determining where to add the noise and how much to add. One of the most commonly used mechanisms for adding noise is the Laplace mechanism [5, 7].

Queries with higher sensitivity (roughly, the most that one individual's data can change the query's answer) require adding more noise in order to satisfy a particular ε quantity of differential privacy, and this extra noise has the potential to make results less useful. We will describe sensitivity and this tradeoff between privacy and usefulness in more detail in future blog posts.
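As a rough illustration of the Laplace mechanism, the Python sketch below adds noise drawn from a Laplace distribution with scale sensitivity/ε to a query's true answer. The function names, the count of 623,000, and the sensitivity of 1 for a counting query are assumptions made for this example. It is a teaching sketch only: it uses ordinary floating-point sampling and is not hardened for production use.

```python
import random


def laplace_noise(scale, rng=random):
    """Draw one sample from a Laplace distribution centered at 0.

    The difference of two independent exponential samples, each with
    mean `scale`, follows a Laplace(0, scale) distribution.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def laplace_mechanism(true_answer, sensitivity, epsilon, rng=random):
    """Return the true answer plus Laplace noise of scale sensitivity/epsilon."""
    if sensitivity <= 0 or epsilon <= 0:
        raise ValueError("sensitivity and epsilon must be positive")
    scale = sensitivity / epsilon
    return true_answer + laplace_noise(scale, rng)


# Hypothetical counting query: adding or removing one person changes
# the count by at most 1, so we assume a sensitivity of 1.
rng = random.Random(0)   # seeded only so the demo is repeatable
true_count = 623_000     # made-up figure, not real census data

for epsilon in (0.1, 1.0):
    noisy = laplace_mechanism(true_count, sensitivity=1.0, epsilon=epsilon, rng=rng)
    print(f"epsilon={epsilon}: noisy count is about {round(noisy)}")
```

Smaller values of ε give a larger noise scale, so the released count strays further from the true count on average. That is the privacy and usefulness tradeoff in miniature.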

Benefits of Differential Privacy

Differential privacy has several important advantages over previous privacy techniques:

- It assumes all information is identifying information, eliminating the challenging (and sometimes impossible) task of accounting for all identifying elements of the data.

- It is resistant to privacy attacks based on auxiliary information, so it can effectively prevent the linking attacks that are possible on de-identified data.

- It is compositional — we can determine the privacy loss of running two differentially private analyses on the same data by simply adding up the individual privacy losses for the two analyses. Compositionality means that we can make meaningful guarantees about privacy even when releasing multiple analysis results from the same data. Techniques like de-identification are not compositional, and multiple releases under these techniques can result in a catastrophic loss of privacy.
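The additive composition described in the last bullet can be sketched as a simple budget tracker. The class `PrivacyBudget` and its methods are hypothetical names invented for this example, and the sketch assumes the basic rule that privacy losses simply add up.

```python
class PrivacyBudget:
    """Track cumulative privacy loss under simple additive composition."""

    def __init__(self, total_epsilon):
        if total_epsilon <= 0:
            raise ValueError("total_epsilon must be positive")
        self.total = total_epsilon
        self.spent = 0.0

    @property
    def remaining(self):
        return self.total - self.spent

    def spend(self, epsilon):
        """Record the cost of one analysis, or refuse if it would overspend."""
        if epsilon <= 0:
            raise ValueError("epsilon must be positive")
        # Small tolerance so floating-point rounding does not reject an
        # analysis that exactly exhausts the budget.
        if self.spent + epsilon > self.total + 1e-12:
            raise RuntimeError("privacy budget exceeded")
        self.spent += epsilon


budget = PrivacyBudget(total_epsilon=1.0)

# Three analyses at epsilon = 0.3 each fit within the budget.
for _ in range(3):
    budget.spend(0.3)

# A fourth analysis would bring the total to 1.2, so it is refused.
try:
    budget.spend(0.3)
    fourth_allowed = True
except RuntimeError:
    fourth_allowed = False

print("total spent:", round(budget.spent, 2))
print("fourth analysis allowed:", fourth_allowed)
```

Because the losses add, the total privacy cost of many releases can be bounded in advance, which is not possible in the same way for de-identification.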

These advantages are the primary reasons why a practitioner might choose differential privacy over some other data privacy technique. A current drawback of differential privacy is that it is rather new, and robust tools, standards, and best practices are not easily accessible outside of academic research communities. However, we predict this limitation can be overcome in the near future due to increasing demand for robust and easy-to-use solutions for data privacy.

Coming Up Next

Stay tuned: our next post will build on this one by exploring the security issues involved in deploying systems for differential privacy, including the difference between the central and local models of differential privacy.

Before we go — we want this series and subsequent NIST guidelines to contribute to making differential privacy more accessible. You can help. Whether you have questions about these posts or can share your knowledge, we hope you’ll engage with us so we can advance this discipline together.

References

[1] Garfinkel, Simson, John M. Abowd, and Christian Martindale. "Understanding database reconstruction attacks on public data." Communications of the ACM 62.3 (2019): 46-53.

[2] Gadotti, Andrea, et al. "When the signal is in the noise: exploiting diffix's sticky noise." 28th USENIX Security Symposium (USENIX Security 19). 2019.

[3] Dinur, Irit, and Kobbi Nissim. "Revealing information while preserving privacy." Proceedings of the twenty-second ACM SIGMOD-SIGACT-SIGART symposium on Principles of database systems. 2003.

[4] Sweeney, Latanya. "Simple demographics often identify people uniquely." Health (San Francisco) 671 (2000): 1-34.

[5] Dwork, Cynthia, et al. "Calibrating noise to sensitivity in private data analysis." Theory of cryptography conference. Springer, Berlin, Heidelberg, 2006.

[6] Wood, Alexandra, Micah Altman, Aaron Bembenek, Mark Bun, Marco Gaboardi, James Honaker, Kobbi Nissim, David R. O'Brien, Thomas Steinke, and Salil Vadhan. "Differential privacy: A primer for a non-technical audience." Vand. J. Ent. & Tech. L. 21 (2018): 209.

[7] Dwork, Cynthia, and Aaron Roth. "The algorithmic foundations of differential privacy." Foundations and Trends in Theoretical Computer Science 9, no. 3-4 (2014): 211-407.

Comments

Do we guarantee that if the same data is analyzed more than once, it will result in the same answer?

So if Database D1 goes through the analysis 3 times, the answer will be the same A each time?

Great first blog!

Looking forward to examples of architectural patterns that included privacy by design concepts.

Informative. Waiting for the next post.

As someone math impaired it would be great if you built out the Joe example with illustrative data - for example what specific Joe's data would be discussed - perhaps via income or medical condition. Also what in reality would 'noise' look like in this account. Great start and looking forward to more.

Hi, Anil. In general, no, differential privacy doesn't make this guarantee – if you run an analysis on the same data 3 times, you'll choose a new noise sample to add each time, and you'll get 3 different answers. But, each time you run the analysis, you incur a "privacy cost" (ε), and these add up. So if you run the analysis 3 times, your total privacy cost is 3⋅ε.

This kind of composition prevents an "averaging attack" where you run the same analysis many times and average away the noise. Differential privacy systems typically set an upper bound on privacy cost called the "privacy budget," and stop answering queries when it's exceeded.

Thank you for sharing your question!

Hi. The idea is great and the blog looks very interesting. But is it possible to get a high level of privacy from anonymization alone? You may know of the Netflix problem from 2007, where researchers released around 99% of the data from their data source, which was built on a collaborative filtering algorithm.

I am very interested to see your next blog post and how you analyze the security issues for differential privacy models.

Thanks for this question! The major challenge with traditional "anonymization" (or "de-identification") techniques is that it's often difficult to measure what privacy level you have obtained. In general, it's not possible to prove that a given strategy for de-identification yields a particular level of privacy, and de-identified datasets are often susceptible to linking attacks using auxiliary data that can lead to the re-identification of individuals. This is one of the main motivations behind the development of formal privacy notions like differential privacy.

We’re glad you’re interested in this series. In case you missed it, the next post in this series on threat models is now live, and you can check it out via the following link: https://www.nist.gov/blogs/cybersecurity-insights/threat-models-differe…

This is fantastic...I've read several "non-technical" papers on differential privacy, and they're not easy to grasp! As I talk with interested clients and others, I'm going to direct them to this!