Summary

The goal of the Face and Ocular Challenge Series (FOCS) is to engage the research community to develop robust face and ocular recognition algorithms along a broad front.

Description

Overview

The goal of the Face and Ocular Challenge Series (FOCS) is to engage the research community to develop robust face and ocular recognition algorithms along a broad front. Currently, the FOCS consists of three tracks: the Good, the Bad, and the Ugly (GBU); Video; and Ocular. These challenges are based on the results of previous NIST challenge problems and evaluations. An overview of the FOCS was given in an invited talk at the IEEE Fourth International Conference on Biometrics: Theory, Applications, and Systems (BTAS 2010). That presentation can be found here.

This website has been created as a resource for researchers tackling the FOCS. The data for the GBU, Video, and Ocular challenges comes as part of the Face and Ocular Challenge Series (FOCS) distribution. To obtain the FOCS distribution, please follow the instructions for the FOCS license on https://sites.google.com/a/nd.edu/public-cvrl/data-sets.

The Good, The Bad, and The Ugly (GBU)

Update: A GBU-compliant training set used to train the LRPCA baseline algorithm can be downloaded here.

The Good, the Bad, and the Ugly challenge consists of three frontal still face partitions. The partitions were designed to encourage the development of face recognition algorithms that excel at matching "hard" face pairs, but not at the expense of performance on "easy" face pairs.

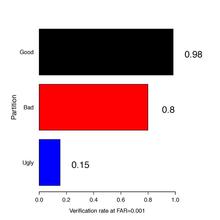

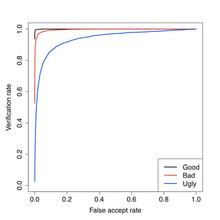

The images in this challenge problem are frontal face stills taken under uncontrolled illumination, both indoors and outdoors. The three partitions were constructed by analyzing results from the FRVT 2006. The Good set consisted of face pairs that had above-average performance, the Bad set consisted of face pairs that had average performance, and the Ugly set consisted of face pairs that had below-average performance. There are 437 subjects in the data set, and all three partitions contain the same 437 subjects. All three partitions have 1085 images in both the target and query sets. After the Good, the Bad, and the Ugly partitions were constructed, the performance of a fusion algorithm on each partition was computed. The verification rates (VR) at a false accept rate (FAR) of 0.001 are:

- GOOD = 0.98

- BAD = 0.80

- UGLY = 0.15
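The operating point quoted above, VR at FAR = 0.001, can be illustrated with a short sketch. It assumes similarity scores where a higher value means a more similar pair, and it accepts a pair only when its score is strictly above the threshold. The function name and the toy scores are hypothetical and are not part of the FOCS distribution or the scoring code used for the GBU results.

```python
def verification_rate_at_far(match_scores, nonmatch_scores, far=0.001):
    """Return (VR, threshold) at the requested false accept rate.

    Assumes similarity scores: a higher score means a more similar pair.
    A pair is accepted when its score is strictly above the threshold.
    """
    if not match_scores or not nonmatch_scores:
        raise ValueError("need at least one match and one non-match score")
    if not 0.0 <= far < 1.0:
        raise ValueError("far must be in the range [0, 1)")

    # Rank the non-match (impostor) scores from highest to lowest.
    ranked = sorted(nonmatch_scores, reverse=True)

    # Largest number of non-match pairs that may be accepted at this FAR.
    allowed = int(far * len(ranked))

    # Using the score at position `allowed` as a strict threshold accepts
    # at most `allowed` non-match pairs (fewer if there are tied scores).
    threshold = ranked[allowed]

    # VR is the fraction of match (genuine) pairs accepted at that threshold.
    accepted = sum(1 for score in match_scores if score > threshold)
    return accepted / len(match_scores), threshold


# Toy example with made-up scores (not GBU data).
toy_match = [0.95, 0.8, 0.4, 0.25]
toy_nonmatch = [0.1, 0.2, 0.3, 0.9]
toy_vr, toy_threshold = verification_rate_at_far(toy_match, toy_nonmatch, far=0.25)
print(f"VR = {toy_vr:.2f} at threshold {toy_threshold}")
```

With only a few non-match scores, a FAR of 0.001 allows no false accepts at all, so the threshold is simply the highest non-match score. A stable estimate at that operating point needs many thousands of non-match pairs.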

The performance results can be seen in both a

- Obtain the FOCS distribution by following the instructions for the FOCS license on https://sites.google.com/a/nd.edu/public-cvrl/data-sets.

- Download the Face and Ocular Challenge Problem Series (FOCS) data set.

- Download the Good, the Bad, and the Ugly tar gzip file.

- The baseline algorithm used in the GBU Challenge Problem is the Colorado State University (CSU)/NIST LRPCA. The algorithm source code can be downloaded for free here.

- The CSU/NIST LRPCA algorithm was trained using the following sigsets.

For a detailed look at the Good, the Bad, and the Ugly Challenge, please review the following academic paper, found here.

Video

The Video Challenge is a re-release of the Multiple Biometric Grand Challenge (MBGC) Video Challenge. This challenge focused on matching frontal vs. frontal, frontal vs. off-angle, and off-angle vs. off-angle video sequences (PowerPoint presentations on the MBGC Video Challenge Version 2 and MBGC Video Challenge Version 1 can be found at the links). The results of the challenge show that video matching is extremely challenging (see slides 9 and 12 in the MBGC Video Challenge Version 2). Due to the difficulty of the MBGC Video Challenge, we are keeping this problem open for researchers.

- Obtain the FOCS distribution by following the instructions for the FOCS license on https://sites.google.com/a/nd.edu/public-cvrl/data-sets.

- Download the Face and Ocular Challenge Problem Series (FOCS) data set.

- Included in the download will be a file containing the experiments, target and query sets, and the mask matrices for the Video Challenge problem.
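As a rough illustration of how a mask matrix is typically combined with a similarity matrix, the sketch below splits scores into match and non-match lists. The mask encoding (0 = ignore the pair, 127 = non-match, 255 = match), the nested-list layout, and the function name are all assumptions made for this example. Consult the files in the FOCS distribution for the actual format.

```python
# Assumed mask encoding, for illustration only; check the distributed files.
IGNORE, NONMATCH, MATCH = 0, 127, 255


def split_scores(similarity, mask):
    """Split a similarity matrix into match and non-match score lists.

    `similarity` and `mask` are same-shaped nested lists whose rows and
    columns index the two image sets being compared. Entries whose mask
    value is IGNORE (or any unrecognized value) are excluded.
    """
    if len(similarity) != len(mask):
        raise ValueError("similarity and mask must have the same number of rows")

    match_scores, nonmatch_scores = [], []
    for sim_row, mask_row in zip(similarity, mask):
        if len(sim_row) != len(mask_row):
            raise ValueError("similarity and mask rows must have the same length")
        for score, flag in zip(sim_row, mask_row):
            if flag == MATCH:
                match_scores.append(score)
            elif flag == NONMATCH:
                nonmatch_scores.append(score)
    return match_scores, nonmatch_scores


# Toy 3 x 2 example with made-up values (not FOCS data).
toy_similarity = [[0.9, 0.2], [0.1, 0.8], [0.5, 0.4]]
toy_mask = [[MATCH, NONMATCH], [NONMATCH, MATCH], [IGNORE, NONMATCH]]
toy_matches, toy_nonmatches = split_scores(toy_similarity, toy_mask)
print(toy_matches, toy_nonmatches)
```

Keeping the mask separate from the scores means one similarity matrix can be scored under several experiments by swapping in a different mask.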

Ocular Challenge

A significant amount of research has gone into iris recognition from "high" quality iris images captured from cooperative subjects. The results of this research are commercially available products for matching "high" quality iris images. The cutting edge of research is recognition from images that contain the eye region of the face and the iris. The iris in these images can be of variable quality. This research track is referred to as Ocular recognition to differentiate it from similar recognition problems. The goal of this challenge problem is to encourage the development of Ocular recognition algorithms.

The Ocular Challenge data set consists of still images of a single iris and eye region. These regions were extracted from near infrared (NIR) video sequences collected from the Iris on the Move (IoM) system used in the MBGC Portal Track Version 2 (PowerPoint presentations on the MBGC Portal Challenge Version 2 and MBGC Portal Challenge Version 1 can be found at the links).

To get the FOCS distribution, researchers will need to:

- Obtain the FOCS distribution by following the instructions for the FOCS license on https://sites.google.com/a/nd.edu/public-cvrl/data-sets.

- Download the Face and Ocular Challenge Problem Series (FOCS) data set.

- Included in the download will be a file containing the target and query sets, and the mask matrices for the Ocular Challenge problem.