PML Researchers Create Tool for 'Circuit-Aware' Reliability Testing

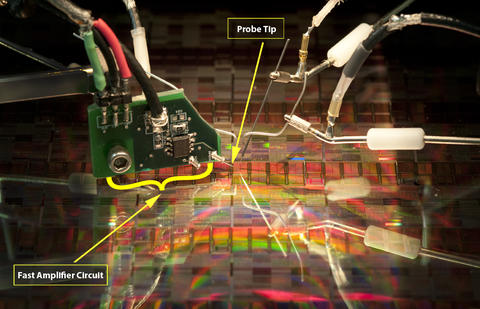

High-speed, amplified probe tip used to collect reliability data in the NIST Advanced Device Characterization and Reliability laboratory. Many transistor reliability problems require extremely fast characterization to fully capture fast transient effects, which have become increasingly important for understanding the reliability of advanced transistor technologies. Keeping the distance between the high-speed amplifier circuit and the transistor under study very short (< 1 cm) enables extremely fast (< 100 ns) transistor characterization.

A PML research team has devised a reliability data transformation methodology that could ease one of the semiconductor industry's most vexing problems: reliability qualification.

Today's electronic devices are smaller, yet vastly more complex, than those of yesterday. The smartphone is one of the best examples of this scaling trend: the latest phones are thinner than ever but are powered by dual-core 1 GHz processors. Shrinking a device like this requires integrated circuits that are much more complex and much smaller than their predecessors from just a couple of years ago. This creates multiple reliability challenges for manufacturers.

Currently, the semiconductor electronics industry has a set of rigid transistor-level reliability criteria that do not take into account the end use of the product. In other words, all product applications for a given technology generation are bound by the same reliability specifications. Whether the technology is used to make chips for simple electronic trinkets or for supercomputers, the reliability specifications are identical. Current specifications also ignore the disposable nature of some electronic devices, such as cellphones, where short-term performance matters more than long-term reliability. Under these constraints, manufacturers have to work harder than ever to qualify their technology by meeting both the performance and the reliability specifications.

In the NIST PML's Semiconductor and Dimensional Metrology Division, scientists are working to make technology qualification easier by helping the industry update its reliability specifications to reflect the diverse product landscape of the electronics industry.

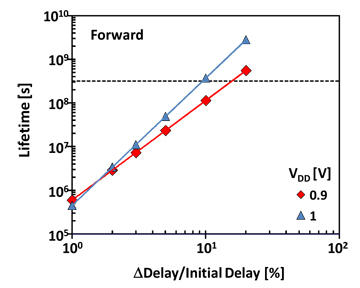

Led by Charles Cheung, the research team has developed a methodology to transform transistor-level reliability data into data that reflect how a generic, and therefore highly customizable, electronic circuit will degrade in its intended application. Evaluating reliability in this manner would let a manufacturer know that a particular circuit will last two years in a high-performance application, or 20 years in a low-performance one. Products with less stringent reliability needs could also be manufactured and sold earlier, even if the technology is not yet ready for highly demanding applications. The key idea is that product applications would no longer all be bound by a single, all-encompassing reliability specification.

In a landscape where companies race to produce the smallest and fastest electronics in the shortest time, they are often forced to make tradeoffs between performance and reliability. The goal of Cheung's team is to make sure these tradeoffs can be made intelligently, based on what matters most for a particular application. That capability, he believes, could prompt the industry to adopt more flexible specifications, which would save manufacturers time and money.

"You cannot roll out a technology if it does not meet the reliability specifications," Cheung says. But the industry has learned that a transistor failing a reliability stress test does not necessarily mean that the end product will fail. In other words, the methods used to test reliability in the laboratory do not match the real-life operation of the circuit. "There is a disconnect between rigid device-level reliability specifications and actual circuit-level reliability. There is a great need to have a spec that reflects the circuit application."

An advantage of this methodology is that no new tests are needed: semiconductor manufacturers can simply use the data they already collect during their standard reliability tests. This is extremely important because manufacturers' testing capacity is often already stretched very thin.

Cheung and his team started developing this new methodology by collecting reliability data on real transistors; i.e., they observed how transistors degrade over time under certain stress conditions. These data were then input into a circuit simulation tool where the transistor degradation data were transformed to reflect how a circuit, as a whole, responds to the degradation of each of its components.

The test circuit can be easily programmed to reflect the operation of most common circuit elements, and the simulation tool outputs real-world parameters most important to the operation of the circuit (such as timing delay and energy per switch). This provides an easy way to determine how a particular circuit design will behave over the life of a product. Engineers can then use this information to reevaluate their circuit design and intelligently optimize performance and reliability to best suit their needs. Initial demonstrative results of this methodology were recently published.*
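To illustrate why a circuit-level criterion changes the answer, here is a minimal Python sketch under loudly stated assumptions. It is not the published NIST methodology, which runs degraded transistor data through a circuit simulator; instead it swaps in a textbook analytic delay model (the Sakurai-Newton alpha-power law) and a hypothetical power-law fit of threshold-voltage shift versus stress time. The constants `A`, `N` and `ALPHA` and the function names are illustrative placeholders, not values or tools from the study. The point it shows is that one set of transistor data yields different lifetimes once failure is defined as "the gate has slowed down by more than X percent," with X chosen per application.

```python
import math

# ASSUMPTION: hypothetical power-law fit to transistor stress data,
# delta_Vth(t) = A * t**N (volts, t in seconds). Not values from the NIST study.
A = 1e-3
N = 0.2
ALPHA = 1.3  # ASSUMPTION: velocity-saturation exponent of the alpha-power delay model
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def delta_vth(t_s: float) -> float:
    """Threshold-voltage shift after t_s seconds of stress (fitted power law)."""
    return A * t_s ** N


def gate_delay(vdd: float, vth: float, c_load: float = 1.0) -> float:
    """Alpha-power-law gate delay in arbitrary units: C * Vdd / (Vdd - Vth)**alpha."""
    return c_load * vdd / (vdd - vth) ** ALPHA


def relative_delay_increase(t_s: float, vdd: float, vth0: float) -> float:
    """Fractional slowdown of the gate after t_s seconds of degradation."""
    return gate_delay(vdd, vth0 + delta_vth(t_s)) / gate_delay(vdd, vth0) - 1.0


def lifetime_seconds(max_delay_increase: float, vdd: float, vth0: float) -> float:
    """Time until the circuit-level criterion (allowed slowdown) is exceeded.

    Setting ((vdd - vth0) / (vdd - vth0 - dv))**ALPHA = 1 + tol gives the
    tolerable threshold shift dv; inverting the power law then gives the time.
    """
    if max_delay_increase <= 0 or vdd <= vth0:
        raise ValueError("need a positive tolerance and vdd > vth0")
    overdrive = vdd - vth0
    dv = overdrive * (1.0 - (1.0 + max_delay_increase) ** (-1.0 / ALPHA))
    return (dv / A) ** (1.0 / N)


# Same (hypothetical) transistor data, two applications with different timing budgets.
for name, tol in [("tight timing margin", 0.05), ("relaxed timing margin", 0.20)]:
    years = lifetime_seconds(tol, vdd=1.0, vth0=0.3) / SECONDS_PER_YEAR
    print(f"{name}: {tol:.0%} delay budget -> about {years:.3g} years")
```

Because the lifetime scales roughly as the tolerable shift raised to the power 1/N, a modest relaxation of the circuit-level budget can stretch the predicted lifetime by orders of magnitude, which is the kind of application-dependent spread the article describes. A real analysis would replace `gate_delay` with circuit simulation of the actual design.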

Cheung and his team believe that their methodology will enable the industry to continue to develop more advanced technology. "This will buy a tremendous amount of breathing room in technology development," Cheung says. He acknowledges that the biggest hurdle is the acceptance of this technique by an industry that has been traditionally conservative with its reliability specifications. His team is currently working with industry to speed the acceptance of this methodology.

*J. T. Ryan, L. Wei, J. P. Campbell, R. G. Southwick, K. P. Cheung, A. Oates, J. S. Suehle, and P. Wong, "Circuit-Aware Device Reliability Criteria Methodology," Proceedings of the European Solid State Device Research Conference, Helsinki, Finland, September 12 to 16, 2011, pp. 255-258.