Ground Robot Tests

- Background

- Definition of Response Robot

- Difference Between a “Standard Test Method” and a “Standard Robot”?

- Approach

- Documents

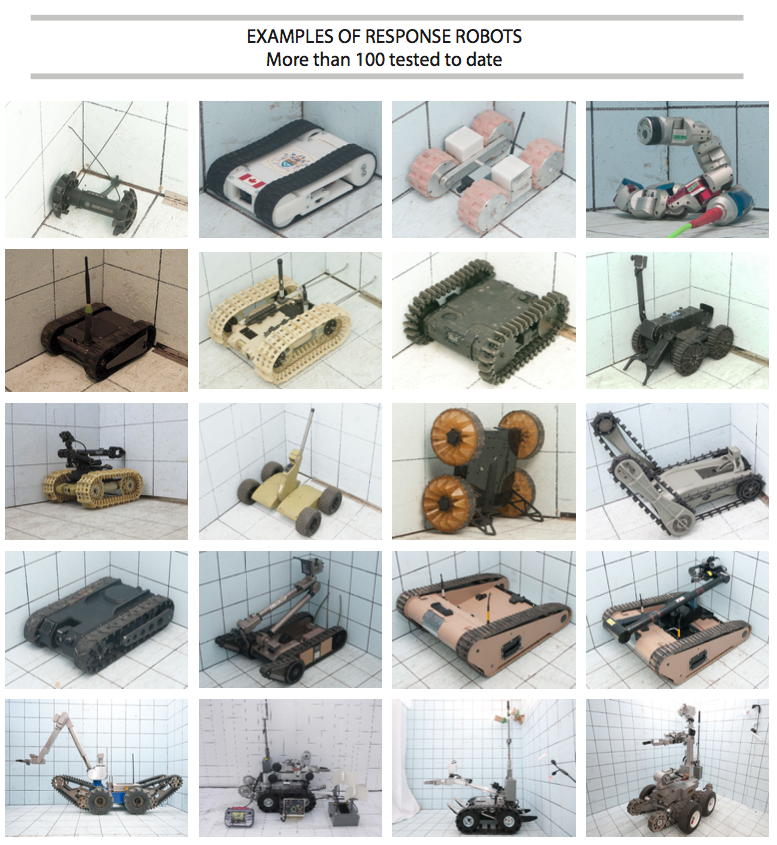

The National Institute of Standards and Technology, Engineering Laboratory, Intelligent Systems Division, is developing the measurement and standards infrastructure necessary to evaluate robotic capabilities for emergency responders and military organizations addressing critical national security challenges. This includes leading development of a comprehensive suite of ASTM International Standard Test Methods for Response Robots, with more than 50 test methods for remotely operated ground, aerial, and maritime systems. Several civilian and military sponsors have supported this effort, and the test methods have been replicated and used in dozens of locations worldwide to measure and evaluate response robot capabilities. The test methods are intended to meet the following objectives:

- Facilitate communication between user communities and commercial developers, or between development program managers and performers, by representing essential capabilities in the form of tangible test apparatuses, procedures, and performance metrics that produce quantifiable measures of success.

- Inspire innovation and guide developers toward implementing the combinations of capabilities necessary to perform essential mission tasks.

- Measure progress, highlight break-through capabilities, and encourage hardening of developmental systems through repeated testing and comparison of quantitative results.

- Inform purchasing and deployment decisions with statistically significant capabilities data. To date, the suite of ground robot test methods has been used to specify more than $60M worth of robot procurements for military and civilian organizations performing C-IED missions.

- Focus operator training and measure proficiency to track highly perishable skills over time and enable comparison across squads, regions, or national averages. To date, the suite of ground robot test methods has been used by more than 250 civilian and military bomb technicians.

The working definition of a “response robot” is a remotely deployed device intended to perform operational tasks at operational tempos. It should serve as an extension of the operator, improving remote situational awareness and providing a means to project operator intent through the equipped capabilities. It should also improve the effectiveness of the mission while reducing risk to the operator. Key features include:

- Rapidly deployed

- Remotely operated from an appropriate standoff

- Maneuverable in complex environments

- Sufficiently hardened against harsh environments

- Reliable and field serviceable

- Durable or cost effectively disposable

- Equipped with operational safeguards

Standard test methods are simply agreed-upon ways to objectively measure robot capabilities. They isolate certain robot requirements and enable repeatable testing. They are developed and validated through a consensus process with equal representation of users, developers, and test administrators. In the case of ground systems, one key standards committee is the ASTM International Standards Committee on Homeland Security Applications, Subcommittee on Response Robots (E54.09). The robot capabilities data captured with standard test methods can be directly compared even when the tests are performed at different sites at different times. The data help establish confidence in the ability of a robot and its remote operator to reliably perform particular tasks. Each standard test method includes the following elements:

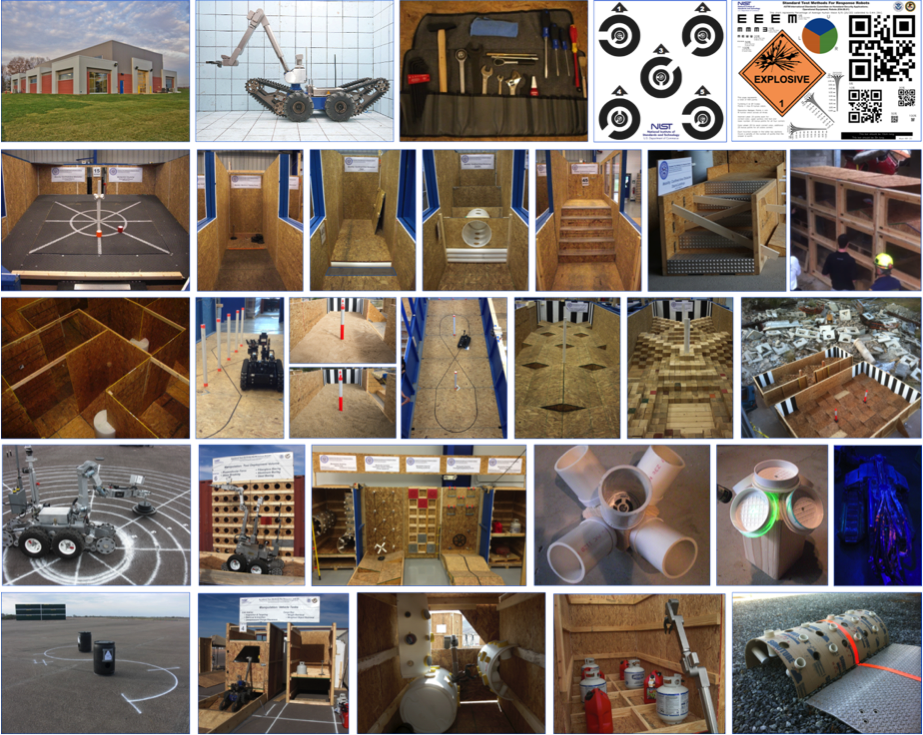

- Apparatus (or prop): A repeatable, reproducible, and inexpensive representation of tasks that the system is expected to perform. The apparatus should challenge the system with increasing difficulty or complexity and be easy to fabricate internationally to ensure all robots are measured similarly.

- Procedure: A script for the system operator to follow (along with a Test Administrator when appropriate). These tests are not intended to surprise anybody. They should be practiced to refine designs and improve techniques.

- Metric: A quantitative way to measure the capability, for example, completeness of 10 continuous repetitions of a task or distance traversed within an environmental condition. Together with the elapsed time, a resulting rate in tasks/time or distance/time can be calculated.

- Fault Condition: A failure of the robotic system preventing completion of 10 or more continuous repetitions. This could include a stuck or disabled vehicle requiring maintenance, or software issues at the remote operator control unit. All such failures are catalogued during testing to help identify recurring issues.
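As a rough illustration of how the metric and fault-condition elements fit together, the sketch below records one trial and derives a task rate from it. The `Trial` class, its field names, and the example values are hypothetical and are not part of any ASTM test method; the only details taken from the text are the 10 continuous repetitions and the tasks/time rate.

```python
from dataclasses import dataclass, field


@dataclass
class Trial:
    """One trial of a test method (hypothetical record layout)."""

    robot: str
    test_method: str
    repetitions_completed: int
    elapsed_minutes: float
    # Free-text descriptions of any fault conditions catalogued during the trial.
    faults: list[str] = field(default_factory=list)

    def rate_per_minute(self) -> float:
        """Completed repetitions divided by elapsed time (tasks/time)."""
        if self.elapsed_minutes <= 0:
            raise ValueError("elapsed_minutes must be positive")
        return self.repetitions_completed / self.elapsed_minutes

    def is_complete(self, required_repetitions: int = 10) -> bool:
        """True when the required repetitions were finished without a fault."""
        return self.repetitions_completed >= required_repetitions and not self.faults


if __name__ == "__main__":
    clean = Trial("robot-a", "example-task", 10, 8.0)
    faulted = Trial("robot-b", "example-task", 6, 8.0, ["stuck vehicle"])
    for trial in (clean, faulted):
        print(trial.robot, trial.rate_per_minute(), trial.is_complete())
```

Keeping the fault descriptions alongside the rate makes it straightforward to catalogue recurring issues across trials, as the fault-condition element describes.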

A “standard robot” is a completely different concept and should be carefully considered. A standard robot would presumably meet a well-defined equipment specification for size, shape, capabilities, interfaces, and/or other features. Such equipment standards are typically intended to improve compatibility, enable interoperability, increase production, lower costs, etc. This can be important for many reasons, but can also hinder innovation. In this effort, we are not proposing to develop a standard ground robot specification of any kind. Rather, we are proposing to develop standard test methods that can be used to evaluate and compare entire classes of such systems in objective and quantifiable ways. The resulting suite of standard test methods could indeed be used to specify a standard ground robot at some point, similar to how they are used to specify purchases. But that is left to each user community to define for themselves based on their particular mission objectives. We use standard test methods to encourage implementation of new technologies to improve capabilities and measure progress along the way.

Our process for developing, validating, standardizing, and disseminating these test methods involves several types of events:

- Test method development workshops to decompose mission requirements into suites of elemental test methods. These events define the initial apparatuses, procedures, and performance metrics necessary to capture baseline capabilities. They also define an approach toward controlling environmental variables and then introducing such variables individually, such as lighting, wet or dry conditions, etc.

- Validation exercises with developers and user communities. These somewhat public events exercise the tangible test apparatuses with repeated testing of different ground systems to facilitate bi-directional communication between the communities. This helps cut through the inevitable marketing, even within development programs. These events determine whether the test methods appropriately challenge the state-of-the-science as intended, and whether they adequately measure and highlight “best-in-class” capabilities to inform and align expectations for user communities.

- Comprehensive capability assessments for developmental or commercial systems. These private evaluations test specific system configurations across all applicable test methods. This typically involves more than 20 test methods when testing all sensors, endurance, latency, etc. in addition to maneuvering, dexterity, situational awareness, etc. All the applicable environmental variables are also tested individually. So-called “expert” operators provided by the developer are used to capture the best possible performance. The resulting data enables direct comparison of capabilities and trade-offs while helping to establish the “repeatability” and “reproducibility” of the test methods for the standardization process. Test trials are conducted to statistical significance, extending to 10, 20, or 30 repetitions depending on the number of failures. The objective is typically 80% reliability with 80% confidence, but test sponsors can adjust these thresholds depending on the envisioned mission's resilience to failure. Such capabilities data across an entire class of similar robots provides objective comparisons to support purchasing decisions.

- Operator training with standard measures of proficiency. These events typically include 10 basic skills tests for maneuvering, dexterity, and situational awareness. Different user communities can define their own training suites using different combinations of test methods. Training trials are time limited to 5 or 10 minutes each so they are quick and focused. Novice and expert operators work for similar times within each test method and across the entire suite to normalize fatigue. For example, 10 tests of 10 minutes each take 100 minutes plus some time for transitions. Some tests can be longer or shorter. The objective is to test long enough to establish a rate of successful task completions. Operators can then compare their rate of success to that of the “expert” provided by the system developer. This is presumably the best-ever operator score, which is set to 100% proficiency. Different user communities can use this process to set their own thresholds of acceptability for deployment. Example categories for eventual certification could include Novice: 0–39%, Proficient: 40–79%, Expert: 80–100%. Complete circuit training can typically be conducted in 1 to 2 hours, alone or in groups, performing timed trials with synchronized rotations from test to test.

- Robot competitions with academic and commercial robot developers. Robot competitions introduce prototype and standard test apparatuses as tangible descriptions of robot requirements captured from various user communities. The test apparatuses guide innovation while providing inherent measures of progress. Competitions can include up to 100 test trials with a wide variety of robotic approaches on display, making them ideal for refining standard test apparatuses and procedures. Emerging robot capabilities can be quantified and “best-in-class” approaches highlighted. Competitions help quicken the pace of development of the standard test methods while inspiring innovation, so they have become a main driver of our process. They also act as incubators for test administrators to learn to fabricate and administer the latest test methods.
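Two of the calculations mentioned in the events above can be sketched in a few lines. The first treats repetitions as independent pass/fail trials (a binomial model, which is an assumption here and not a statement of how the standards define significance) and asks how many failures can be tolerated while still demonstrating 80% reliability with 80% confidence. The second normalizes an operator's task rate against the “expert” rate and maps it to the example categories. All function names are hypothetical, and capping proficiency at 100% and the handling of fractional percentages at the category boundaries are likewise assumptions.

```python
from math import comb


def confidence_in_reliability(trials: int, failures: int, reliability: float = 0.80) -> float:
    """Confidence that true reliability is at least `reliability`.

    Assumes independent repetitions (binomial model). The confidence is one
    minus the probability of seeing this few failures or fewer if the true
    reliability were exactly the threshold.
    """
    if not 0 <= failures <= trials:
        raise ValueError("failures must be between 0 and trials")
    p_fail = 1.0 - reliability
    tail = sum(
        comb(trials, k) * p_fail**k * reliability ** (trials - k)
        for k in range(failures + 1)
    )
    return 1.0 - tail


def max_failures_allowed(trials: int, reliability: float = 0.80, confidence: float = 0.80) -> int:
    """Largest failure count that still meets the confidence target, or -1 if none does."""
    allowed = -1
    for failures in range(trials + 1):
        if confidence_in_reliability(trials, failures, reliability) < confidence:
            break
        allowed = failures
    return allowed


def proficiency_percent(operator_rate: float, expert_rate: float) -> float:
    """Operator rate as a percentage of the expert rate, capped at 100 (assumption)."""
    if expert_rate <= 0:
        raise ValueError("expert_rate must be positive")
    return min(100.0, 100.0 * operator_rate / expert_rate)


def proficiency_category(percent: float) -> str:
    """Map a percentage to the example categories given in the text."""
    if percent < 40:
        return "Novice"
    if percent < 80:
        return "Proficient"
    return "Expert"


if __name__ == "__main__":
    for n in (10, 20, 30):
        print(n, "repetitions ->", max_failures_allowed(n), "failures allowed")
    pct = proficiency_percent(operator_rate=3.0, expert_rate=6.0)
    print(pct, proficiency_category(pct))
```

Under this binomial assumption, the function returns 0, 1, and 3 allowed failures for 10, 20, and 30 repetitions respectively, which is one way to read the escalation from 10 to 20 to 30 repetitions as failures occur. Sponsors who adjust the reliability or confidence thresholds could pass different values for the two keyword arguments.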

- Guide for Evaluating, Purchasing, and Training with Response Robots using DHS-NIST-ASTM International Standard Test Methods

- Counter-Improvised Explosive Device (C-IED) Applications

- Assembly Guide: Please email us at RobotTestMethods [at] nist.gov to receive a link to download the material.

- Urban Search and Rescue (US&R) Applications

Contact Information:

Adam Jacoff - Project Leader

RobotTestMethods [at] nist.gov

Adam.Jacoff [at] nist.gov

301-975-4235