Taking Measure

Just a Standard Blog

Even though research on artificial intelligence (AI) goes back to the 1950s, it wasn't until the past decade that AI really became an integral part of our lives. From automatically recognizing faces in our photo library to predicting traffic congestion and finding the fastest routes to our destination, AI is everywhere. It is also revolutionizing how research and science are being done, from data mining to drug discovery.

What makes AI particularly attractive and at the same time really powerful is that it not only automates many laborious tasks — this in principle could be done with a well-written script — but learns how to do them from data alone, without ever being explicitly programmed to solve the problem at hand. This is known as training the AI.

Think of tagging pictures: I thought it was really neat when my first “smart” photo album app not only highlighted faces of my friends and family members in pictures, but — after I tagged a couple of pictures with names — started suggesting (surprisingly accurately) when those people were in a new picture, even if their pose and facial expression were quite different from the already tagged pictures. At some point, my app even gave me the option to scan through all my pictures and tag all those people I had already identified. And did so really fast, considering the tens of thousands of pictures I had on my computer at that point! Now, whenever I take new pictures, my photo app matches any people in them to the people I have already tagged. And all I had to do was give the app just a couple of shots of each person to learn from: AI did the rest.

In my research, I use an AI-powered face-recognition-like approach to classify “faces” of quantum dot devices for use as so-called qubits, the building blocks of a quantum computer’s processor. While in classical computers, information and processes are coded as strings of 0 (no signal in the circuit) and 1 (signal is on), quantum computers use 0, 1 and everything in between. This is achieved by replacing the classical 0-1 bits with quantum bits, aka qubits. There are certain mathematical problems, such as the factorization of numbers, in which quantum computers are expected to outperform classical ones.

Controlling the Flow

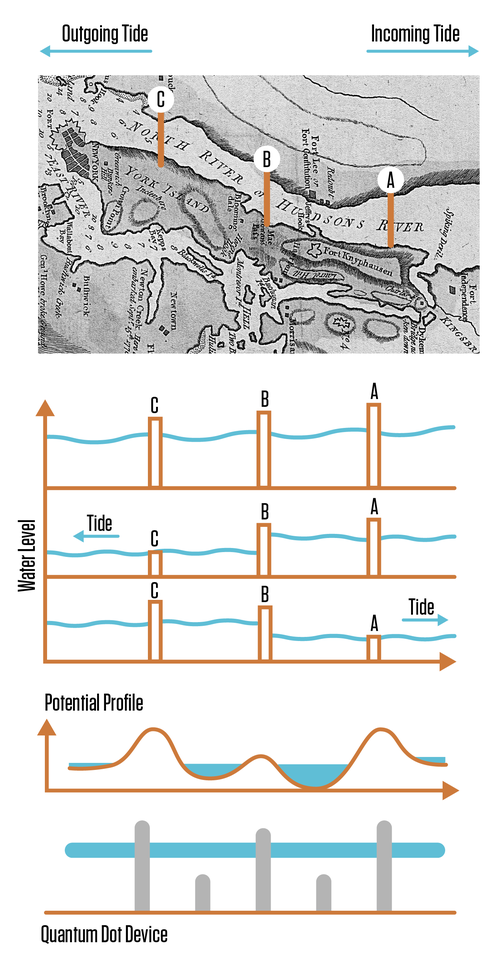

Quantum dots are one of the possible realizations of qubits. How do quantum dots work? Let’s conduct the following thought experiment: Suppose there are three locks on the Hudson River. Since the Hudson River can flow in both directions depending on the tide, by carefully adjusting the height of the locks we should be able to — at least in principle — control how much water flows between the reservoirs and chambers, and how much water gets trapped in the two chambers between the locks.

For example, if all three locks were simultaneously raised higher than the water level, there would be no water flow and the water levels in the two chambers should be approximately the same. If during the outgoing tide locks A and B were set high and lock C were brought down, we would lower the water level trapped in chamber BC below that in chamber AB. Conversely, if we lowered lock A during the incoming tide, the water levels would be reversed, i.e., the level of water trapped in chamber AB would be lower than in chamber BC. By playing with the heights of the locks we could achieve all possible combinations of the relative depths of chambers AB and BC (ignoring for a moment the actual effect of such locks on the changing tidal currents).

This is quite like how quantum dots work, except that what flows is electric current, what is being trapped are individual electrons, and what is being raised and lowered is the voltage applied to metallic gates imprinted above the electronic channels. Precise control of quantum dots allows researchers to shuttle electrons around and modify their state, and in doing so perform information processing tasks.

Toward the Quantum Revolution

Now, to transform quantum dot devices into functioning qubits in a research lab, someone, usually a graduate student or postdoc, has to carefully adjust voltages on all those gates and then take sensitive measurements to make sure that the dots have formed, that the number of electrons is just right, and that the dots can interact with one another. This requires the researcher to measure the current flowing through the device for a set of parameters, recognize what state the device is in from that measurement, change the gate voltages a bit, and then check the current again, repeating the process until the desired state is achieved. And the more dots (and gates) involved, the harder it is to tune all of them to work together properly.

In fact, the lack of full automation of this process is one of the main obstacles to widespread use of semiconductor-based qubits. Even with semi-scripted tuning protocols, many decisions about the proper parameter ranges are still made by the researcher. At the same time, as one of my colleagues, Jake Taylor, aptly put it, legions of graduate students applying “trial-and-error” approaches cannot be the ultimate answer for deploying quantum technologies. To enable the quantum revolution, we need to find a way to take the human out of the picture.

This is the goal of our work. Using the mathematics of pattern recognition and classical optimization, we are developing an auto-tuning protocol that doesn’t require a human to navigate between quantum dot states in real time. The AI in our protocol works like the face recognition app on a phone: whenever a new measurement is taken, it analyzes it and returns a prediction of the most likely state of the device. That information is then fed into an optimization routine that, based on what has been seen so far, suggests how the voltages should be adjusted for the next measurement and, with each iteration, tries to get closer and closer to the desired state, tuning the quantum dot device in the process.
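For readers who like to see a loop written out, here is a minimal toy sketch of the measure, classify, adjust cycle described above. It is not our actual protocol: the `measure` and `classify` functions, the `CENTRES` table, the 0.95 confidence threshold and the simple probe-and-step search are all assumptions made up for illustration, standing in for a real device measurement, a trained classifier and a proper optimization routine.

```python
import math

STATES = ("no dot", "single dot", "double dot")

# Hypothetical gate-voltage settings (arbitrary units) where each state is most typical.
CENTRES = {"no dot": (0.0, 0.0), "single dot": (1.0, 0.2), "double dot": (0.7, 0.9)}


def measure(v1, v2):
    """Toy 'measurement': squared distance from (v1, v2) to each state's centre."""
    return [(v1 - CENTRES[s][0]) ** 2 + (v2 - CENTRES[s][1]) ** 2 for s in STATES]


def classify(measurement):
    """Toy 'classifier': turn a measurement into one probability per state."""
    weights = [math.exp(-4.0 * d) for d in measurement]
    total = sum(weights)
    return [w / total for w in weights]


def autotune(target, start=(0.0, 0.0), step=0.2, threshold=0.95, max_iter=100):
    """Adjust two gate voltages until the classifier is confident in `target`."""
    idx = STATES.index(target)
    v = start
    score = classify(measure(*v))[idx]
    history = [(v, score)]
    for _ in range(max_iter):
        if score >= threshold or step < 1e-3:
            break
        # Probe one step in each direction and keep the most promising setting.
        probes = [(v[0] + dx, v[1] + dy)
                  for dx, dy in ((step, 0.0), (-step, 0.0), (0.0, step), (0.0, -step))]
        best_score, best_v = max((classify(measure(*p))[idx], p) for p in probes)
        if best_score > score:
            v, score = best_v, best_score
        else:
            step /= 2.0  # nothing improved: look closer to the current setting
        history.append((v, score))
    return v, score, history


if __name__ == "__main__":
    voltages, confidence, history = autotune("double dot")
    print(f"stopped at {voltages} after {len(history) - 1} adjustments, "
          f"confidence {confidence:.2f}")
```

The point of the sketch is the division of labor: the classifier only says what state the device seems to be in, and the search routine only decides where to look next. Neither needs a human in between.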

To train the AI, one of my colleagues, Sandesh Kalantre of the University of Maryland, has developed a model that generates large sets of images of simulated measurements, just like the ones we see in the lab. This was an extremely important step, as a large volume of data is necessary to train the AI.
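To give a flavor of what "generating simulated measurements" means, here is a deliberately crude cartoon in Python. It is not Sandesh's physics model: the function names, the image size, the line spacing and the noise level are all invented for this illustration. It only mimics the rough look of a two-gate scan, with parallel diagonal lines for a single dot, a grid of lines for two weakly coupled dots, and plain noise when no dot has formed.

```python
import random

LABELS = ("no dot", "single dot", "double dot")


def simulate_scan(label, size=16, period=4, noise=0.1, rng=random):
    """Return a size x size grid of floats that loosely mimics a 2D voltage scan."""
    offset = rng.randrange(period)  # shift the pattern so no two scans line up
    image = []
    for row in range(size):
        line = []
        for col in range(size):
            if label == "single dot":
                on_line = (row + col + offset) % period == 0  # diagonal lines
            elif label == "double dot":
                on_line = ((row + offset) % period == 0
                           or (col + offset) % period == 0)  # grid of lines
            else:
                on_line = False  # no dot: nothing but noise
            line.append((1.0 if on_line else 0.0) + rng.gauss(0.0, noise))
        image.append(line)
    return image


def make_dataset(n_per_label, seed=0):
    """Build a shuffled list of (image, label) pairs, reproducible for a given seed."""
    rng = random.Random(seed)
    data = [(simulate_scan(label, rng=rng), label)
            for label in LABELS
            for _ in range(n_per_label)]
    rng.shuffle(data)
    return data


if __name__ == "__main__":
    dataset = make_dataset(n_per_label=1000)
    print(len(dataset), "labeled toy scans")
```

Because every image comes with its label for free, a simulator like this can produce as many training examples as the AI needs, which is exactly what is hard to do by hand in the lab.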

In light of the recent advances in building larger quantum dot arrays, it is particularly exciting to be involved in a research project aimed at the development of fully autonomous tuning software. However, even though the numerous attempts to automate the various steps of the tuning process (using a combination of image processing, pattern matching, and machine learning) bring us much closer to this goal than ever before, full automation is yet to be achieved. Still, our work is not only paving a path forward for experiments with a larger number of quantum dots, but will also allow us to allocate more precious time, and graduate students, to more stimulating research.

Comments

Very informative-- thanks for an illustrative description of a complex problem and making these ideas accessible to a larger audience.

This is a fascinating piece! How are you accounting/correcting for complexity, uncertainty or non-stationarity of those training data? Are empirical approaches used to avoid hand-crafting? So many questions. I suppose I should read the research.

For the author, Justyna Zwolak:

This was extremely well written and gave very concrete, understandable examples of a topic that might be new to many. I also really like the visuals, especially the example of the locks, which reminds me of walks on the C&O Canal.

Thank you for sharing this.

Jenny Payette

NIST Alumnus

NIST Workshop Participant

Thank you for your kind words, Jenny! I am glad to hear you enjoyed reading my post.

The locks on the C&O Canal are what got me thinking about this analogy :)

Sounds like fun and exciting new technology 😇