Taking Measure

Just a Standard Blog

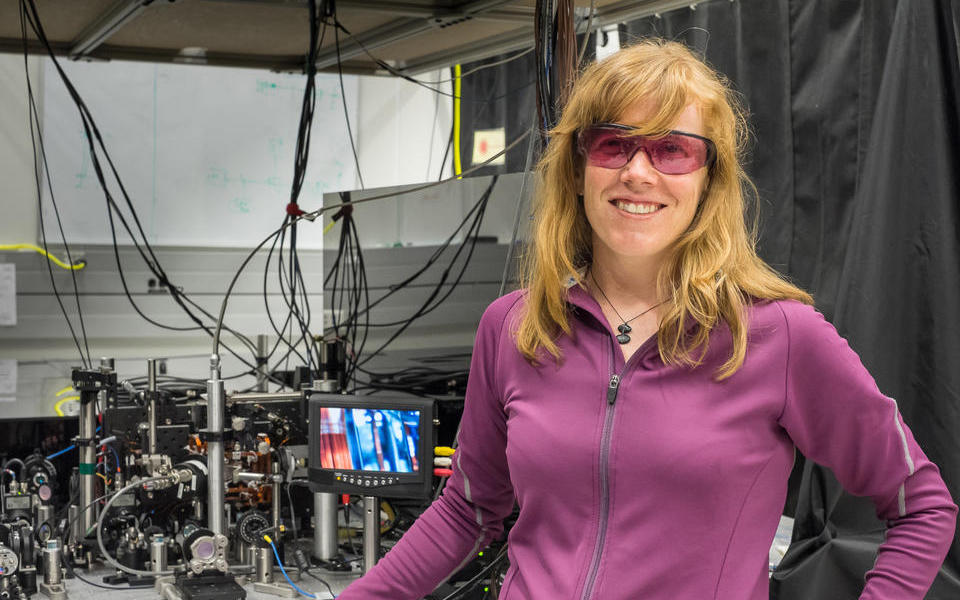

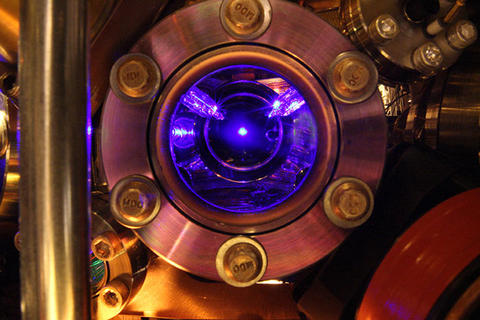

NIST physicist Elizabeth Donley with a compact atomic clock design that could help improve precision in ultraportable clocks. About 1 million cold rubidium atoms are held in a vacuum chamber in the lower left of the photo. On the screen is a close-up of the atom trapping region of the apparatus.

Einstein is reported to have once said that time is what a clock measures. Some say that what we experience as time is really our experience of entropy, the quantity that, according to the second law of thermodynamics, tends to increase over time. Entropy, loosely explained, is the tendency for things to become disorganized. Hot coffee always goes cold. It never reheats itself. Eggs don’t unscramble themselves. Your room gets messy and you have to expend energy to clean it, until it gets messy again.

Here at NIST, we don’t worry about any of these philosophical notions of time. For us, time is the interval between two events. That could be the rising and setting of the sun, the swing of a pendulum from one side to another, or the back-and-forth vibration of a small piece of quartz. For the most precise measurement of the second, we look at the resonant frequencies of atoms.

James Clerk Maxwell, the father of electromagnetic theory, was the first person to suggest that we might use the frequencies of atomic radiation as a kind of invariant natural pendulum, but he was talking about this in the mid-19th century, long before we could exert any kind of control over individual atoms. We would have to wait a century for NIST’s Harold Lyons to build the world’s first atomic clock.

Lyons’ atomic clock, which he and his team debuted in 1949, was actually based on the ammonia molecule, but the principle is essentially the same. Inside a chamber, a gas of atoms or molecules flies into a device that emits microwave radiation within a narrow range of frequencies. When the emitter hits the right frequency, it causes the maximum number of atoms to change state, enabling scientists to measure the duration of a certain number of cycles and define a second.
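To make the "maximum number of atoms" criterion concrete, here is a toy Python sketch, an illustration rather than NIST's actual method. It assumes the atoms' response follows a Lorentzian curve and uses a made-up 100 Hz linewidth; only the cesium frequency comes from the SI definition. The emitter is swept in steps, and the frequency that flips the most atoms is kept.

```python
"""Toy model of how an atomic clock finds its reference frequency.

Illustrative assumptions, not any real clock's parameters:
- the fraction of atoms that change state follows a Lorentzian curve
  centered on the atomic resonance;
- LINEWIDTH_HZ is a made-up round number.
"""

RESONANCE_HZ = 9_192_631_770.0  # cesium value from the SI definition of the second
LINEWIDTH_HZ = 100.0            # hypothetical full width at half maximum


def fraction_changed(drive_hz: float) -> float:
    """Toy Lorentzian: fraction of atoms flipped by radiation at drive_hz."""
    half_width = LINEWIDTH_HZ / 2
    detuning = drive_hz - RESONANCE_HZ
    return half_width**2 / (detuning**2 + half_width**2)


def scan_for_peak(start_hz: float, stop_hz: float, step_hz: float) -> float:
    """Sweep the emitter across a range; return the frequency that flips the most atoms."""
    steps = int(round((stop_hz - start_hz) / step_hz))
    candidates = (start_hz + i * step_hz for i in range(steps + 1))
    return max(candidates, key=fraction_changed)


if __name__ == "__main__":
    peak = scan_for_peak(RESONANCE_HZ - 1_000, RESONANCE_HZ + 1_000, 10)
    print(f"maximum state change at {peak:.0f} Hz")
```

Real clocks typically do not sweep once and stop; they keep steering the oscillator with a feedback loop so it stays on the peak. The sweep here only illustrates the selection rule.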

Lyons’ clock, while revolutionary, wasn’t any better at keeping time than astronomical observations. The first clock that used cesium and was accurate enough to be used as a time standard was built by NIST’s counterpart in the U.K., the National Physical Laboratory, in 1955. NIST’s first cesium clock accurate enough to be used as a time standard, NBS-2, was built a few years later, in 1958, and went into service as the U.S. official time standard on January 1, 1960. It had an uncertainty of one second in every 3,000 years, meaning that it kept time to within 1/3,000 of a second per year. That’s pretty good compared to an average quartz watch, which might gain or lose a second every month.
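Figures like "one second in 3,000 years" are easiest to compare as a dimensionless fractional frequency error: seconds gained or lost per second elapsed. The unit cancels, which is why a clock's error can be stated in the same unit the clock defines. A minimal sketch, assuming a 365.25-day year and reading the quartz-watch figure as 12 seconds per year:

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year: 31,557,600 s


def seconds_off_per_year(years_per_second: float) -> float:
    """A clock that drifts 1 s every N years is off by 1/N s each year."""
    return 1.0 / years_per_second


def fractional_error(years_per_second: float) -> float:
    """Dimensionless fractional frequency error: seconds off per second elapsed."""
    return seconds_off_per_year(years_per_second) / SECONDS_PER_YEAR


if __name__ == "__main__":
    nbs2 = fractional_error(3_000)     # NBS-2: 1 s in 3,000 years
    quartz = 12 / SECONDS_PER_YEAR     # watch off by ~1 s per month (12 s per year)
    print(f"NBS-2: {seconds_off_per_year(3_000):.6f} s/yr, fractional error {nbs2:.1e}")
    print(f"quartz watch: fractional error {quartz:.1e}")
    print(f"NBS-2 is roughly {quartz / nbs2:,.0f} times steadier")
```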

In 1967, the International System of Units defined the atomic second, based on the cesium clock, as the duration of 9,192,631,770 cycles of the radiation corresponding to a specific transition in the cesium-133 atom. It remains so defined to this day.
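Counting cycles is all it takes to turn this definition into elapsed time. This short sketch (the function name is illustrative) converts a cycle count into seconds exactly with Python's `fractions` module and shows how brief a single cycle is:

```python
from fractions import Fraction

CS_HZ = 9_192_631_770  # cycles of the cesium transition per SI second (exact)


def elapsed_seconds(cycles_counted: int) -> Fraction:
    """Elapsed time implied by a cycle count, kept as an exact fraction."""
    return Fraction(cycles_counted, CS_HZ)


period_ps = 1e12 / CS_HZ  # one cycle lasts about 108.8 picoseconds
print(f"one cycle = {period_ps:.1f} ps")
print(elapsed_seconds(3 * CS_HZ + CS_HZ // 2))  # three and a half seconds
```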

While the definition has stayed the same, atomic clocks sure haven’t. Atomic clocks have been continually improved, becoming more and more stable and accurate until the hot clock design reached its peak with the NIST-7, which would neither gain nor lose one second in 6 million years.

Why do we say “hot” clock? That’s because until the 1990s, the temperature of the cesium inside these clocks was a little more than room temperature. At those temperatures, cesium atoms move at around 130 meters per second, pretty fast. So fast, in fact, that it was hard to get a read on them. The clocks simply didn’t have much time to maximize their fluorescence and get a more accurate and stable signal. What we needed to do was give our detectors more time to get the best signal by slowing down the atoms. But how do you slow down an atom? With laser cooling, of course.
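How much does atom speed matter? A rough sketch, assuming Ramsey-style interrogation (the separated-field technique used in cesium beam clocks), for which the width of the central resonance fringe is approximately 1/(2T), where T is the time between the two microwave interactions. The 1.5 m interaction length is a hypothetical round number, not the dimension of any particular NIST clock:

```python
CS_HZ = 9_192_631_770  # cesium clock frequency, Hz


def transit_time_s(length_m: float, speed_m_s: float) -> float:
    """Time an atom spends crossing an interaction region of the given length."""
    return length_m / speed_m_s


def ramsey_linewidth_hz(interaction_s: float) -> float:
    """Approximate width of the central Ramsey fringe, about 1/(2T)."""
    return 1.0 / (2.0 * interaction_s)


if __name__ == "__main__":
    t_beam = transit_time_s(1.5, 130.0)  # hypothetical 1.5 m region, atoms at 130 m/s
    for label, t in [("hot beam", t_beam), ("1 s interaction", 1.0)]:
        width = ramsey_linewidth_hz(t)
        print(f"{label}: T = {t:.4f} s, linewidth ~ {width:.1f} Hz, "
              f"line Q ~ {CS_HZ / width:.1e}")
```

A longer T gives a narrower line, so the center of the resonance can be located more precisely; that is the motivation for slowing the atoms down.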

But how can lasers cause something to cool down? Aren’t lasers hot? The answer is: It depends. The science of slowing down atoms with lasers was pioneered by Bill Phillips and his colleagues, a feat for which they shared the 1997 Nobel Prize in Physics. Very basically, what they did was use a specially tuned array of lasers to bombard the atoms with photons from all angles. These photons are like ping-pong balls compared to the bowling-ball-like atoms, but if you have enough of them, they can arrest the motion of the cesium atoms, slowing them from about 130 meters per second to a few centimeters per second. That gives the clock plenty of time to get a good read on their signal, vastly improving its accuracy and precision.
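The ping-pong-ball picture can be quantified. Each absorbed photon changes an atom's momentum by h/λ. This sketch assumes cesium's 852 nm D2 line as the cooling light and treats every kick as head-on; the randomly directed re-emitted photons average out and are ignored. It is an order-of-magnitude estimate, not a model of an actual laser-cooling setup:

```python
H = 6.62607015e-34        # Planck constant, J*s (exact)
AMU = 1.66053906660e-27   # atomic mass unit, kg
M_CS = 132.905 * AMU      # mass of a cesium-133 atom, kg
WAVELENGTH = 852.3e-9     # cesium cooling light (D2 line), m


def recoil_speed(wavelength_m: float = WAVELENGTH, mass_kg: float = M_CS) -> float:
    """Speed change from absorbing one photon: (h / wavelength) / mass."""
    return H / wavelength_m / mass_kg


def photons_to_slow(v_start: float, v_end: float) -> float:
    """Rough number of head-on photon kicks needed to go from v_start to v_end."""
    return (v_start - v_end) / recoil_speed()


if __name__ == "__main__":
    print(f"one kick: {recoil_speed() * 1000:.1f} mm/s")
    print(f"kicks from 130 m/s to 3 cm/s: {photons_to_slow(130.0, 0.03):,.0f}")
```

Each kick changes the atom's speed by only a few millimeters per second, so tens of thousands of photons are needed, which is why the lasers must keep scattering light off the atoms continuously.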

The first clock to use this new technology, NIST-F1, called a fountain clock, was put into service in 1999 and originally offered a threefold improvement over its predecessor, keeping time to within 1/20,000,000 of a second per year. NIST continued to enhance the design of NIST-F1 and subsequent fountain clocks until the accuracies approached one second every 100,000,000 years.

Not ones to rest on our laurels, NIST and its partner institutions, including JILA, are also working on a series of experimental clocks that operate at optical frequencies with trillions of clock “ticks” per second. One of these clocks, the strontium atomic clock, is accurate to within 1/15,000,000,000 of a second per year. This is so accurate that it would not have gained or lost a second if the clock had started running at the dawn of the universe.

But why do we need such accurate clocks? One thing that wouldn’t exist without such accurate time is the Global Positioning System, or GPS. Each satellite in the GPS network carries atomic clocks and beams signals to users below that give the satellite’s position and the time the signal was sent. By measuring how long the signals take to reach you from at least four different satellites, the receiver in your car or in your phone can figure out where you are to within a few meters or less.
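The position calculation can be sketched as a small least-squares problem. Because the receiver's own clock is not an atomic clock, its time offset is a fourth unknown alongside x, y and z, which is why four satellites are needed. This Python sketch uses Gauss-Newton iteration, one common approach but not necessarily what any given receiver does; the satellite coordinates are hypothetical, and relativity, the atmosphere and Earth's rotation are ignored:

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s (exact by SI definition)


def solve_linear(a, b):
    """Solve the small dense system a @ x = b (Gaussian elimination, partial pivoting)."""
    n = len(b)
    m = [list(a[i]) + [b[i]] for i in range(n)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= factor * m[col][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def locate(sats, pseudoranges, max_iter=20):
    """Gauss-Newton fit of receiver position (m) and clock bias (s).

    sats: (x, y, z) satellite positions in meters, Earth-centered frame.
    pseudoranges: C * (receive_time - send_time) per satellite, in meters;
    these include the unknown receiver clock bias.
    """
    est = [0.0, 0.0, 0.0, 0.0]  # x, y, z, and clock bias expressed in meters (C * dt)
    for _ in range(max_iter):
        jac, res = [], []
        for (sx, sy, sz), pr in zip(sats, pseudoranges):
            dx, dy, dz = est[0] - sx, est[1] - sy, est[2] - sz
            rho = math.sqrt(dx * dx + dy * dy + dz * dz)
            jac.append([dx / rho, dy / rho, dz / rho, 1.0])
            res.append(pr - (rho + est[3]))
        # Normal equations: (J^T J) step = J^T res
        jtj = [[sum(row[i] * row[j] for row in jac) for j in range(4)] for i in range(4)]
        jtr = [sum(row[i] * r for row, r in zip(jac, res)) for i in range(4)]
        step = solve_linear(jtj, jtr)
        est = [e + s for e, s in zip(est, step)]
        if max(abs(s) for s in step) < 1e-6:
            break
    return est[:3], est[3] / C


if __name__ == "__main__":
    # Hypothetical satellites roughly 26,000 km from Earth's center.
    sats = [(26_560e3, 0.0, 0.0), (14_000e3, 22_000e3, 3_000e3),
            (14_000e3, -11_000e3, 19_000e3), (14_000e3, -11_000e3, -19_000e3)]
    true_pos, true_bias = (6_371e3, 0.0, 0.0), 2.5e-4  # receiver clock 250 us off
    prs = [math.dist(s, true_pos) + C * true_bias for s in sats]
    pos, bias = locate(sats, prs)
    print([round(v, 3) for v in pos], bias)
```

Solving for the clock offset along with the position is what lets an inexpensive quartz clock in a phone work with the satellites' atomic clocks.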

Such accurate time is also used to timestamp financial transactions so that we know exactly when trades are happening, which can mean the difference between making a fortune and going broke. Accurate time is also necessary for synchronizing communications signals so that, for instance, your call isn’t lost as you travel between cellphone towers.

And as new, even more accurate clocks are invented, it’s assured that we will find uses for them. In the meantime, you’ll have to settle for knowing where you are anywhere on Earth at any given time while talking on your cellphone on your way to an appointment. Even if you arrive a few millionths of a second late, we won’t give you a hard time about it.

*Edited 5/12/2022*

Comments

Bob,

Thank you for your question. Per our clock expert Mike Lombardi: The reason cesium fountain clocks are more accurate than cesium beam clocks is the long interaction time they allow with the atoms. The interaction time with NIST-F1 was about 100 times longer than in a cesium beam clock: about 1 second as opposed to about 0.01 seconds (10 ms). This was because the atoms were first slowed down via laser cooling, then tossed up vertically through a microwave cavity, reaching an apex about one meter above the cavity, and then observed again as they passed through the cavity on their way down about 1 second later. So it's true that the upward toss and the fall back downward due to gravity are key to how a fountain works, but the technique would not work without laser cooling first being applied. For example, it was tried by Zacharias in the 1950s, before the first laser cooling experiments, without success.

To learn more, go to https://tf.nist.gov/general/pdf/2039.pdf.

Thanks!
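The roughly one-second figure in the reply follows from simple ballistics. A quick check, assuming standard gravity and an apex exactly 1 m above the cavity:

```python
import math

G = 9.80665  # standard gravity, m/s^2


def launch_speed(apex_height_m: float) -> float:
    """Upward speed needed to coast to the given height: v = sqrt(2 g h)."""
    return math.sqrt(2 * G * apex_height_m)


def time_above_cavity(apex_height_m: float) -> float:
    """Up-and-back flight time above the launch point: 2 * sqrt(2 h / g)."""
    return 2 * math.sqrt(2 * apex_height_m / G)


if __name__ == "__main__":
    h = 1.0  # apex about one meter above the cavity
    t_fountain = time_above_cavity(h)
    print(f"launch speed ~ {launch_speed(h):.2f} m/s")
    print(f"time between cavity passes ~ {t_fountain:.2f} s")
    print(f"vs. a 10 ms beam transit: ~{t_fountain / 0.010:.0f}x longer")
```

The same formula shows why laser cooling has to come first: an atom moving upward at 130 m/s would coast about 860 m before turning around.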

I am glad I asked the question! You pointed me toward an excellent article by Mike Lombardi that I had not read before. Many thanks.

(1) What about very small atomic clocks? It seems like I remember that some could fit in a very small box.

(2) What about the clocks on GPS satellites? How accurate are these? How does clock accuracy translate to position accuracy?

Charlie,

Thanks for your inquiry! We do have chip-scale atomic clocks. You can learn more about them here: https://www.nist.gov/news-events/news/2019/05/nist-team-demonstrates-he….

NIST does not maintain the GPS system. You can learn more about how GPS works and its accuracy at https://www.gps.gov/systems/gps/.

Charlie,

(2) is a bit of a trick question. The atomic clocks on GPS satellites don't have to keep to the second as well as atomic clocks on the ground. The Air Force provides slight adjustments twice a day to keep all the GPS satellites in sync with each other (see https://www.nasa.gov/feature/jpl/what-is-an-atomic-clock). The speed of light is close to a foot (about 1/3 of a meter) per nanosecond, so to make GPS work, the satellites have to be synchronized with each other to better than a nanosecond.
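The foot-per-nanosecond rule of thumb is just distance equals the speed of light times time. A quick sketch of how timing errors turn into ranging errors:

```python
C = 299_792_458.0  # speed of light in vacuum, m/s (exact)
FOOT = 0.3048      # meters per international foot


def range_error_m(timing_error_s: float) -> float:
    """Distance error from a timing error: light travels C meters each second."""
    return C * timing_error_s


for label, dt in [("1 ns", 1e-9), ("10 ns", 1e-8), ("1 us", 1e-6)]:
    err = range_error_m(dt)
    print(f"{label}: {err:.3f} m ({err / FOOT:.2f} ft)")
```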

Regarding GPS, it's a lot more complicated than the question you asked. The GPS satellites are identified by a Block number. The latest GPS satellites are called Block III. I believe two have been launched, and more are going to be launched. Most of the satellites are some derivative of Block II. None of the original Block II or Block IIA satellites are functioning; the remaining ones are Block IIR (Replenishment), Block IIR-M (Replenishment with Military enhancements) and Block IIF (Follow-on). The different versions of the GPS satellites have different levels of performance, so there's no simple answer in terms of performance, since you're looking at four different types of satellite.

That said, the Block IIA satellites (which are more primitive than anything currently operating) were designed to operate autonomously for up to 180 days. The keyword here is autonomously. The Block II satellites contain 4 atomic clocks, 2 each of two types (cesium and rubidium). They are also constantly monitored by ground stations with their own atomic clocks, which transmit correction information, so even if the atomic clocks aren't perfect, they should be corrected by the ground stations. The specification indicates that the satellites can run for 180 days without correction and without a huge degradation in performance.

But... it gets much more complicated. The satellites orbit about 12,500 miles up, or about 16,500 miles from the center of the Earth, whereas we're about 4,000 miles from the center. They circle the Earth about twice a day, so they move at roughly 8,700 mph, while Earth's rotation carries us along at about 1,000 mph at the equator. So... yeah, time dilation... Seriously. The satellites' speed makes their clocks run slower, while the weaker gravity up there makes them run faster, and the two effects don't cancel. If you don't correct for it, it's going to dominate any error from the clock.
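The size of the relativistic effect can be estimated with the standard first-order formulas: a clock moving at speed v runs slow by about v²/(2c²), and a clock higher up in Earth's gravity runs fast by about GM/c² × (1/r_ground − 1/r_orbit). This sketch assumes a circular orbit of radius about 26,560 km and a ground clock at Earth's mean radius, and ignores Earth's rotation and orbital eccentricity:

```python
import math

C = 299_792_458.0          # speed of light, m/s
GM = 3.986004418e14        # Earth's gravitational parameter, m^3/s^2
R_GROUND = 6.371e6         # mean Earth radius, m (ground clock; Earth's spin ignored)
R_ORBIT = 2.65617e7        # approximate GPS orbital radius, m (~16,500 miles)
US_PER_DAY = 86_400 * 1e6  # converts a fractional rate to microseconds per day


def gps_clock_offsets(r_orbit: float = R_ORBIT, r_ground: float = R_GROUND):
    """First-order rate offsets (us/day) of an orbiting clock vs. a ground clock."""
    v = math.sqrt(GM / r_orbit)                         # circular orbital speed
    velocity = -v**2 / (2 * C**2)                       # special relativity: runs slow
    gravity = GM / C**2 * (1 / r_ground - 1 / r_orbit)  # general relativity: runs fast
    return v, velocity * US_PER_DAY, gravity * US_PER_DAY


if __name__ == "__main__":
    v, sr, gr = gps_clock_offsets()
    print(f"orbital speed ~ {v:,.0f} m/s ({v * 2.23694:,.0f} mph)")
    print(f"speed effect {sr:+.1f} us/day, gravity effect {gr:+.1f} us/day, "
          f"net {sr + gr:+.1f} us/day")
    print(f"uncorrected ranging drift ~ {(sr + gr) * 1e-6 * C / 1000:.1f} km/day")
```

The net gain comes out to roughly 38 microseconds per day; multiplied by the speed of light, that is more than 11 km of ranging error per day if left uncorrected.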

Now, let's assume the atomic clock was perfect and we perfectly accounted for relativity. We determine distance by multiplying the speed of light by the signal's travel time. The question is... what's the speed of light? The speed of light in a vacuum is well known, but going through the atmosphere? That's less clear (pardon the pun). Air density can change it, as can moisture, but the real killer is the ionosphere, where the signal passes through charged particles that can really change its speed and can even bend its path. And all of this is constantly changing in both time and position. All of these errors are small, but they add up. The ionosphere is the real killer; that's what dominates the performance at your receiver.

There are techniques to better deal with the ionospheric error. Enter the Wide Area Augmentation System, which consists of ground stations at known locations that monitor the signal error and send corrections up to geostationary satellites, which rebroadcast them to users. There is also a Local Area Augmentation System, which works in the same way but transmits directly to the user over a much narrower area (think airports). Another technique is called carrier phase tracking, in which the GPS receiver compares the carrier signals from the two transmissions (about 1.2 GHz and 1.5 GHz). This is good at neutralizing atmospheric interference because the ionosphere's impact is very frequency dependent. Unfortunately, carrier phase tracking is not readily available to consumers due to cost. The Block III satellites are going to do a much better job at dealing with ionospheric uncertainty.
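The frequency dependence mentioned above is what the standard dual-frequency "ionosphere-free" combination exploits. To first order, the ionosphere delays a signal by 40.3·TEC/f² meters, where TEC is the total number of electrons per square meter along the path, so a weighted difference of the L1 (1575.42 MHz) and L2 (1227.60 MHz) measurements cancels it. A minimal sketch with a hypothetical range and electron content; higher-order ionospheric terms and the lower atmosphere are ignored:

```python
F_L1 = 1575.42e6  # GPS L1 carrier frequency, Hz
F_L2 = 1227.60e6  # GPS L2 carrier frequency, Hz
K_IONO = 40.3     # first-order ionospheric coefficient, m^3/s^2


def iono_delay_m(tec_el_per_m2: float, freq_hz: float) -> float:
    """First-order ionospheric group delay in meters: 40.3 * TEC / f^2."""
    return K_IONO * tec_el_per_m2 / freq_hz**2


def iono_free(p1_m: float, p2_m: float, f1: float = F_L1, f2: float = F_L2) -> float:
    """Combine two pseudoranges so the 1/f^2 ionospheric term cancels."""
    return (f1**2 * p1_m - f2**2 * p2_m) / (f1**2 - f2**2)


if __name__ == "__main__":
    true_range = 21_000_000.0  # hypothetical satellite-to-receiver distance, m
    tec = 50e16                # hypothetical 50 TECU of electrons along the path
    p1 = true_range + iono_delay_m(tec, F_L1)
    p2 = true_range + iono_delay_m(tec, F_L2)
    print(f"L1 error {p1 - true_range:.2f} m, L2 error {p2 - true_range:.2f} m")
    print(f"combined error {iono_free(p1, p2) - true_range:.6f} m")
```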

Very good introduction to how modern GPS system operates. Thank you!

Great information, thank you. One thing I always speak about to my engineering classes is TIME. There must be a clock somewhere or a timing source to sync with.

Thanks

Just curious why the strontium atomic clock was chosen as an example here since two more accurate clocks exist at NIST?

Greetings!

Yes, it's true that NIST's ytterbium and quantum logic clocks are more accurate than the strontium clock. We chose to feature the strontium clock because we thought it was a good example of an optical clock and is perhaps the one closest to real-world implementation.

valuable information

Time Lapse

Great information. Would like to know more about GPS and real-time

Great in the light

"NIST-7 accurate up to a second in a million years"? This must be "right to a sixth of a millionth per second," because a clock has to be placed somewhere and there are currents inside Earth which change the local g. So is done further on in the article, and as I wrote to NIST some years ago. Read Vasily Yanchilin's article on Poincaré in the Canadian Journal of Pure and Applied Science; Poincaré suggested using Euclidean geometry with a varying unit of length instead of Riemannian mathematics. Read Yanchilin's book (boycotted by Wikipedia instead of providing counterarguments), with its application of the principle of least action to a photon passing a mass (big masses also act as lenses): it seeks a path with as big steps (oscillations of low frequency) as possible and a minimum of these. Observed is not a route close to the mass where time thus must run slower to get oscillations of lower frequency.

In the young, very concentrated universe, processes ran very fast, so the second there was shorter. The "beginning" did not start from a point, since a point is a mathematical concept and does not exist in physics because it has no dimensions. Read top-formula.net on Yanchilin's proposal for an experiment with atomic clocks at different heights. For a few weeks the numbers of ticks have to be registered, after which the clocks are compared at the same location. Note that to a shorter second below has to be added delta of the second above which renders more ticks. The beach and a 700 m high mountain on Saba in the Caribbean seem fit for the measurement. A more compact atom changes the amount of energy for transitions of electrons. I wrote for several years on this matter at www.janjitso.blogspot.com but did not receive any comments, and neither could I find serious objections to Yanchilin's vision. His reasoning is very much worth studying and broadens our horizon, but at the Amsterdam universities his book was put in a distant storehouse not easily accessible to students, who thus stay ignorant, dumb.

Why can't the radio signal be boosted so that it is available during the day too?

Hi Chuck,

To answer your question, it isn't so much the output power of the radio but rather the absorption of the ionosphere. From what I remember, the US uses multiple frequencies to transmit its clock information. I believe they are 2.5 MHz, 5.0 MHz, 10.0 MHz, 15.0 MHz and 20.0 MHz in the HF band. There are other frequencies they use as well. There is a frequency used for synchronizing the public "atomic clocks" found in most stores, and I believe it is something like 60 kHz. Also, as explained earlier, the atmosphere attenuates radio signals depending on moisture density and particulate density, for example. Then there is ground absorption to take into consideration. So to get around this, they use frequency diversity: while one or several frequencies may be absorbed or attenuated, others will be reflected by the ionosphere at any given time of day. In the evening, on the dark side of the Earth, the ionosphere weakens, so the frequencies that propagate best shift from higher to lower ones. Other factors, such as space weather, can also cause HF signals to be absorbed by the ionosphere. I hope this is what you were looking for.

Always keeping the history alive and the story straight.

Bravo!

I'm curious about the definition of the second. Or the year, for that matter. Both are units of time, equivalent by some scaling factor. If one second is defined as the duration of 9,192,631,770 cycles of radiation (Cs atoms), what does it mean to say, for example, that a clock loses 1/3,000 of a second per year? Or is accurate to within 1/15,000,000,000 of a second per year (Sr atoms)? Is one year, say, defined as the 'time' the earth takes to revolve around the sun once, and if so, is there no atomic clock whose radiation cycles match that period integrally? To define a unit and then state an inaccuracy with respect to that same unit begs for clarification. Thank you!

While I am sure the NIST-Fx fountain clocks use laser cooling as a way to read cesium atoms' output more accurately, is it not true that the major advance in the fountain clock is the fact that atoms are propelled in an upward direction in order that GRAVITY will slow them down, too, as the atoms reach the apex of their travel?