Taking Measure

Just a Standard Blog

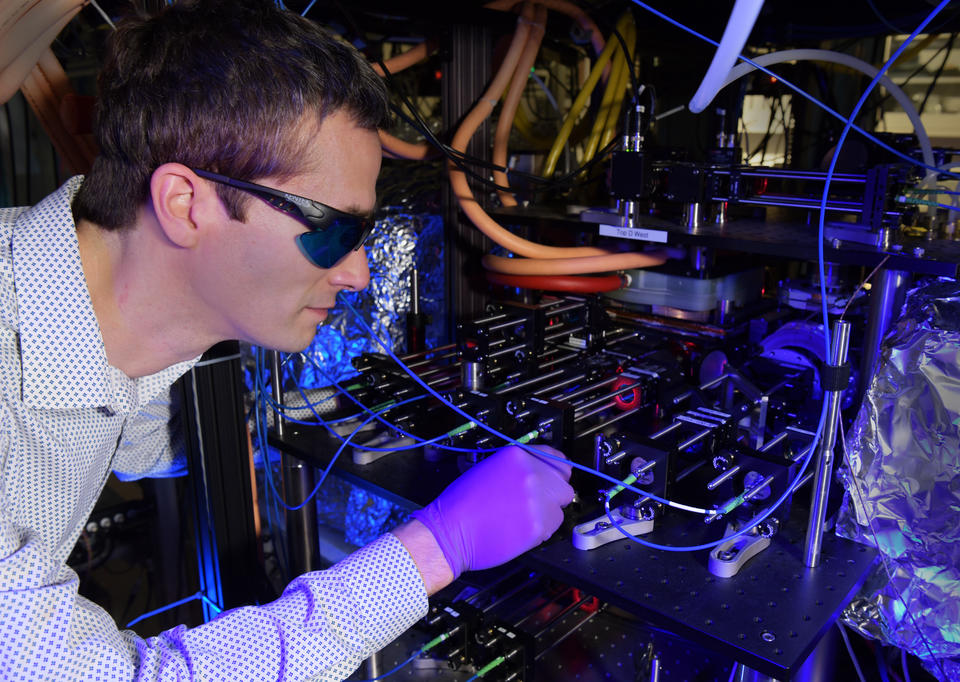

In Stephen Eckel’s lab at NIST, he gets to work with some of the coldest stuff in the universe.

Temperature is probably the second most measured physical quantity in our modern world — after time.

When I wake up in the morning, the first thing I usually check is the time (to see if I should go back to sleep), but the second thing I check is the temperature outside (so that I know how to dress).

Temperature is such a common measurement that we sometimes forget how important it is. From dairy farming to rocketry, from climate science to weather prediction, so many things require an accurate knowledge of temperature.

The metric (SI) unit for temperature is called the kelvin, after Lord Kelvin, whose 200th birthday we celebrate today.

Lord Kelvin and the Early Science of Temperature

Lord Kelvin, or William Thomson, worked in what was then the emerging field of thermodynamics — transforming heat into dynamical motion. He did this both as a student at the University of Cambridge and as a young professor at the University of Glasgow. Together with his close collaborator, James Joule, he researched all sorts of problems in thermodynamics, including temperature scales.

At the time, the scientifically accepted scale for temperature was the Celsius scale (then commonly called the centigrade scale), with zero degrees being the freezing point of water and 100 degrees being the boiling point of water. But after studying how gases changed volume and pressure in response to changing temperature, Thomson, Joule and other scientists realized that there was an absolute coldest temperature that could be reached.

To understand how they reached this conclusion, consider a gas in a balloon. If you cooled the balloon, the gas inside would exert less pressure against the balloon itself and against the atmosphere outside it, causing the balloon’s volume to shrink.

Don’t believe me? Inflate a balloon and stick it in your freezer. When you pull it out, it will have shrunk, and you can watch it expand again as it warms back up. Now extrapolate: How cold would you have to make the balloon to make its volume go to zero (ignoring the fact that the gas inside will eventually condense into a liquid)? That must be the coldest possible temperature because the balloon cannot have a negative volume.
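To make the extrapolation concrete, here is a minimal Python sketch. It assumes an ideal gas held at constant pressure, so its volume grows in a straight line with temperature, and it generates its "measurements" from that assumption rather than from real balloon data. The point is only to show how fitting a line over an everyday range of temperatures and extending it to zero volume lands on absolute zero.

```python
# Sketch: extrapolate gas volume vs. temperature to zero volume (Charles's law).
# Assumption: the "measurements" are computed from the ideal-gas law at constant
# pressure (volume proportional to absolute temperature), not real balloon data.

ABS_ZERO_C = -273.15  # used only to generate the synthetic data below


def ideal_gas_volume(temp_c: float, volume_at_0c: float = 1.0) -> float:
    """Volume of a fixed amount of ideal gas at constant pressure."""
    return volume_at_0c * (temp_c - ABS_ZERO_C) / (0.0 - ABS_ZERO_C)


def fit_line(xs, ys):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def extrapolated_zero_volume_temp(temps_c, volumes):
    """Temperature (deg C) at which the fitted straight line reaches zero volume."""
    slope, intercept = fit_line(temps_c, volumes)
    return -intercept / slope


# Freezer-to-kitchen temperatures, in degrees Celsius.
temps = [-18.0, -5.0, 5.0, 20.0, 25.0]
vols = [ideal_gas_volume(t) for t in temps]
print(f"Extrapolated zero-volume temperature: {extrapolated_zero_volume_temp(temps, vols):.2f} C")
```

Real measurements would scatter around the line, and real gases stop behaving ideally as they approach condensation, which is why the extrapolation, not a direct measurement, is what points to absolute zero.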

In 1848, Lord Kelvin used similar reasoning to accurately calculate the absolute coldest temperature as negative 273.15 degrees Celsius (or negative 459.67 degrees Fahrenheit). It would be roughly another decade before scientists like Lord Kelvin and Ludwig Boltzmann understood that at absolute zero, the molecules in the gas stop moving.

Since 2019, all three of these scientists have been immortalized in the SI. The kelvin is our SI unit of temperature, defined through the Boltzmann constant, which relates temperature to energy, the SI unit of which is the joule.
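The relationship between temperature and energy can be sketched in a few lines of Python. Since the 2019 revision of the SI, the Boltzmann constant has an exact defined value, so converting a temperature into its characteristic thermal energy, E = k_B T, is pure arithmetic. The function names here are illustrative, and k_B T is an energy scale for the gas, not the exact energy of any single molecule.

```python
# Since 2019 the SI fixes the Boltzmann constant at exactly this value (J/K).
BOLTZMANN_J_PER_K = 1.380649e-23


def thermal_energy_joules(temp_k: float) -> float:
    """Characteristic thermal energy k_B * T, in joules."""
    return BOLTZMANN_J_PER_K * temp_k


def temperature_kelvin(energy_j: float) -> float:
    """Invert E = k_B * T to get a temperature in kelvin."""
    return energy_j / BOLTZMANN_J_PER_K


for label, t in [("room temperature", 300.0), ("laser-cooled atoms", 100e-6)]:
    print(f"{label}: T = {t:g} K -> k_B*T = {thermal_energy_joules(t):.3e} J")
```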

Today, atomic physicists like myself use a technique partly pioneered at NIST called laser cooling, which uses lasers to cool clouds of between 100,000 and 1 billion atoms to temperatures of about 100 microkelvin. This temperature is 1/10,000th of a degree Celsius above absolute zero.

And we measure these ultracold temperatures in a way that would not be surprising to Lord Kelvin (although making such cold gases might be!).

We measure the average speed of the atoms in the gas. Researchers at NIST use such laser-cooled atoms for all sorts of applications, from atomic clocks to vacuum standards.
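As a rough sketch of how an average speed becomes a temperature, the Python below draws atom velocities from a Maxwell-Boltzmann distribution and then inverts the textbook relation for the mean speed, ⟨|v|⟩ = √(8 k_B T / (π m)). It assumes rubidium-87 atoms at 100 microkelvin purely for illustration, and it simply starts from a list of velocities; how a lab actually measures those velocities is outside its scope.

```python
import math
import random

BOLTZMANN_J_PER_K = 1.380649e-23              # exact in the SI since 2019
ATOMIC_MASS_UNIT_KG = 1.66053906660e-27
RB87_MASS_KG = 86.909 * ATOMIC_MASS_UNIT_KG   # assumption: rubidium-87 atoms


def sample_velocities(temp_k, mass_kg, n, rng):
    """Draw 3D velocities from a Maxwell-Boltzmann distribution.

    Each Cartesian component is Gaussian with standard deviation sqrt(k_B T / m).
    """
    sigma = math.sqrt(BOLTZMANN_J_PER_K * temp_k / mass_kg)
    return [(rng.gauss(0.0, sigma), rng.gauss(0.0, sigma), rng.gauss(0.0, sigma))
            for _ in range(n)]


def temperature_from_mean_speed(velocities, mass_kg):
    """Invert <|v|> = sqrt(8 k_B T / (pi m)) to recover T in kelvin."""
    speeds = [math.sqrt(vx * vx + vy * vy + vz * vz) for vx, vy, vz in velocities]
    mean_speed = sum(speeds) / len(speeds)
    return math.pi * mass_kg * mean_speed ** 2 / (8 * BOLTZMANN_J_PER_K)


rng = random.Random(1)  # fixed seed so the sketch is reproducible
atoms = sample_velocities(100e-6, RB87_MASS_KG, 50_000, rng)
t_est = temperature_from_mean_speed(atoms, RB87_MASS_KG)
print(f"Recovered temperature: {t_est * 1e6:.1f} microkelvin")
```

With tens of thousands of simulated atoms, the recovered temperature lands within a fraction of a percent of the 100 microkelvin used to generate them.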

Vacuum Standard

Laser cooling atoms to near absolute zero only works inside a chamber where almost all the air has been removed by a pump to isolate the atoms from the surrounding environment. Such vacuum chambers are common and are used in industries such as semiconductor manufacturing.

Most of the components in your cellphone have been in and out of at least one vacuum chamber. The core components, like the central processing unit, have probably been through a chamber that has produced some of the best vacuums on Earth. For every trillion gas molecules that started in the chamber, all were removed but one. Such exquisite vacuums are required because leftover gas molecules can both contaminate the chip and scatter the ultraviolet light that is used to imprint the designed circuit. Either problem can ruin the chip.
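To put "all but one in a trillion" into numbers, here is a short Python sketch using the ideal-gas law. It assumes, for illustration only, that the chamber starts at standard atmospheric pressure and that the gas stays near room temperature (300 kelvin). At fixed volume and temperature, pressure scales with the number of molecules, so the residual pressure is simply one-trillionth of the starting pressure.

```python
BOLTZMANN_J_PER_K = 1.380649e-23  # exact in the SI since 2019
ATMOSPHERE_PA = 101_325.0         # assumption: chamber starts at standard atmospheric pressure
ROOM_TEMP_K = 300.0               # assumption: gas stays near room temperature


def residual_conditions(fraction_left, start_pa=ATMOSPHERE_PA, temp_k=ROOM_TEMP_K):
    """Pressure (Pa) and number density (molecules per cubic meter) left after pumping.

    At fixed temperature and volume, ideal-gas pressure scales with the number
    of molecules, so keeping only `fraction_left` of them scales P the same way.
    """
    pressure_pa = start_pa * fraction_left
    density_per_m3 = pressure_pa / (BOLTZMANN_J_PER_K * temp_k)
    return pressure_pa, density_per_m3


p, n = residual_conditions(1e-12)
print(f"Residual pressure: {p:.2e} Pa")
print(f"Molecules per cubic centimeter: {n / 1e6:.1e}")
```

Under these assumptions the pressure drops to about 1e-7 pascal, yet each cubic centimeter still holds roughly 24 million gas molecules.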

Amazingly, the current best way to measure such pure vacuums is by using what is effectively a vacuum tube. But now, the laser-cooled atoms in my lab may be the best sensor of ultralow vacuum pressures on Earth.

After the sensor atoms are cooled to near absolute zero, we hold them in a “trap” that is made entirely of magnetic fields. This trap is very weak, just strong enough to hold onto the ultracold sensor atoms. The vacuum sensor works because if a cold sensor atom is struck by a leftover gas molecule, it will almost always be ejected from the weak trap. The rate at which this happens depends on how many gas molecules the pump has left behind. Thus, determining the number of leftover gas molecules just involves counting how many sensor atoms remain in the trap after some time.
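The counting-to-pressure chain can be sketched in Python under several simplifying assumptions: the atom number decays as a single exponential, N(t) = N(0) e^(−Γt); collisions with one species of leftover gas are the only loss mechanism; and the loss rate is Γ = K n, where n is the gas density and K is a loss-rate coefficient for that atom and gas pair. The value of K and the atom counts below are hypothetical, chosen only for illustration. The sketch also shows where the second temperature enters: turning a density into a pressure uses the temperature of the room-temperature leftover gas, not that of the ultracold sensor atoms.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact in the SI since 2019


def loss_rate_per_s(n_start: float, n_end: float, hold_time_s: float) -> float:
    """Loss rate Gamma from N(t) = N(0) * exp(-Gamma * t)."""
    return math.log(n_start / n_end) / hold_time_s


def pressure_pa(loss_rate: float, rate_coefficient_m3_per_s: float, gas_temp_k: float) -> float:
    """Ideal-gas pressure of the leftover gas implied by the measured loss rate.

    Assumes Gamma = K * n (n = number density of gas molecules) and P = n * k_B * T,
    where T is the temperature of the leftover gas, not of the sensor atoms.
    """
    density_per_m3 = loss_rate / rate_coefficient_m3_per_s
    return density_per_m3 * BOLTZMANN_J_PER_K * gas_temp_k


# Hypothetical numbers for illustration only.
K_HYPOTHETICAL = 3e-15                         # m^3/s, assumed loss-rate coefficient
N_START, N_END, HOLD_S = 1_000_000, 904_837, 10.0
GAS_TEMP_K = 300.0                             # leftover gas near room temperature

gamma = loss_rate_per_s(N_START, N_END, HOLD_S)
print(f"Loss rate: {gamma:.4f} per second")
print(f"Inferred pressure: {pressure_pa(gamma, K_HYPOTHETICAL, GAS_TEMP_K):.2e} Pa")
```

In this sketch, K is simply an input; the sketch says nothing about how a real standard determines it.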

This “cold-atom vacuum standard” (CAVS) is a new way of measuring vacuum pressure, which NIST has played a crucial role in developing. We anticipate it will be used to measure ultrapure vacuums in semiconductor manufacturing, in quantum computers and in big science experiments such as the Laser Interferometer Gravitational-Wave Observatory (LIGO), which detects collisions of extremely distant black holes.

Having a standard like the CAVS that always gives the correct vacuum pressure reading will help these applications build better vacuum chambers, diagnose problems and increase both reliability and productivity.

The CAVS is the only experiment that I am aware of that needs to measure two very different temperatures at the same time: the sensor atom temperature of around 100 microkelvin (very cold!) and the temperature of the leftover gas in the vacuum chamber, near room temperature at 300 kelvin.

I think Lord Kelvin would be amazed to learn that two very different temperatures could exist at the same time, and both need to be measured for a single experiment to work.

Thermometers

Another interesting research pursuit here at NIST is trying to use atoms or molecules to build a thermometer that actually measures temperature.

You may be wondering what I mean.

After all, you probably have multiple thermometers in and around your home, and they all give you some number in either Fahrenheit or Celsius. But the truth is they all measure some other physical quantity — like the resistance of a platinum wire or the voltage generated between two dissimilar metals — that depends on temperature.

For these devices to read out a temperature in Fahrenheit or Celsius, they must be calibrated. NIST does such calibrations, and it’s more likely than not that the calibration for the thermometer in your home’s thermostat can be traced through a complicated set of steps all the way back to NIST.
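As an illustration of the conversion step such a thermometer performs, here is a minimal Python sketch for a platinum resistance thermometer. It assumes an industrial Pt100 sensor (100 ohms at 0 °C) that follows the nominal Callendar-Van Dusen curve from the IEC 60751 standard, which for 0 °C and above is R = R0(1 + At + Bt²). A calibrated thermometer would instead use coefficients determined for that individual sensor.

```python
import math

# Assumption: an industrial Pt100 sensor following the IEC 60751 nominal curve.
# This form is valid only for 0 C <= t <= 850 C (below 0 C the standard adds a C term).
R0_OHM = 100.0
A = 3.9083e-3   # 1/degC
B = -5.775e-7   # 1/degC^2


def resistance_ohm(temp_c: float) -> float:
    """Callendar-Van Dusen equation for t >= 0 C: R = R0 (1 + A t + B t^2)."""
    return R0_OHM * (1 + A * temp_c + B * temp_c ** 2)


def temperature_c(resistance: float) -> float:
    """Solve the quadratic for t: turn a measured resistance into a temperature."""
    disc = A * A - 4 * B * (1 - resistance / R0_OHM)
    # The '+' root is the physical one (it gives t = 0 when R = R0).
    return (-A + math.sqrt(disc)) / (2 * B)


print(f"{temperature_c(138.5055):.3f} C")  # nominal Pt100 resistance at 100 C
```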

But we may be able to make this whole calibration process simpler by making thermometers that directly measure temperature, using techniques that Lord Kelvin would appreciate.

For example, my colleague Daniel Barker and I are working on using lasers to measure the distribution of velocities of a gas of rubidium atoms at room temperature and above. This technique, called Doppler thermometry, gets at the very heart of how Lord Kelvin understood temperature.
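The idea behind Doppler thermometry can be sketched with the textbook formula for a Doppler-broadened line: atoms moving toward or away from the laser see its frequency shifted, so the gas's velocity distribution widens the absorption line to a full width at half maximum of Δν = (ν₀/c)√(8 k_B T ln 2 / m). The Python below assumes rubidium-87 probed on its D2 line near 780.24 nm, chosen for illustration, and it ignores the hyperfine structure, the natural linewidth and the laser's own linewidth, all of which a real measurement has to model.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23        # exact in the SI since 2019
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact
ATOMIC_MASS_UNIT_KG = 1.66053906660e-27

# Assumptions for illustration: rubidium-87 probed on its D2 line near 780.24 nm.
RB87_MASS_KG = 86.909 * ATOMIC_MASS_UNIT_KG
D2_FREQUENCY_HZ = SPEED_OF_LIGHT_M_PER_S / 780.241e-9


def doppler_fwhm_hz(temp_k, mass_kg=RB87_MASS_KG, nu0_hz=D2_FREQUENCY_HZ):
    """Full width at half maximum of a purely Doppler-broadened (Gaussian) line."""
    thermal = math.sqrt(8 * BOLTZMANN_J_PER_K * temp_k * math.log(2) / mass_kg)
    return (nu0_hz / SPEED_OF_LIGHT_M_PER_S) * thermal


def temperature_from_fwhm(fwhm_hz, mass_kg=RB87_MASS_KG, nu0_hz=D2_FREQUENCY_HZ):
    """Invert the Doppler-width formula to get a temperature in kelvin."""
    ratio = fwhm_hz * SPEED_OF_LIGHT_M_PER_S / nu0_hz
    return mass_kg * ratio ** 2 / (8 * BOLTZMANN_J_PER_K * math.log(2))


width = doppler_fwhm_hz(300.0)
print(f"Doppler FWHM at 300 K: {width / 1e6:.0f} MHz")
print(f"Recovered temperature: {temperature_from_fwhm(width):.2f} K")
```

At room temperature this idealized width comes out to roughly half a gigahertz, and because the width grows as √T, a precise width measurement translates directly into a temperature.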

Together with my colleague Eric Norrgard, I am also working on two projects that aim to create a new type of infrared thermometer using atoms and molecules. If these efforts are successful, calibrating our thermometers could get much easier, and the work may benefit other areas of science as well.

Keeping It (Very) Cool in the Lab

I came to NIST as a postdoctoral researcher in 2012 after finishing my graduate work at Yale University.

As a postdoc, I worked with some of the coldest stuff in the universe: Bose-Einstein condensates (BECs). Like the sensor atoms in the CAVS, BECs are made of laser-cooled atoms, but they have been cooled even further, to less than 100 billionths (!) of a degree above absolute zero.

After my postdoc, I decided to stay at NIST and try to use my experience with ultracold atoms and lasers to realize practical and useful standards, like the CAVS.

I feel a great sense of pride when I see what appear to be glowing balls of ultracold atoms (which are certainly fun to play with) used to solve real-world measurement problems. I suspect that Lord Kelvin may have felt the same sense of pride to see his measurements and theories regarding thermodynamics (which were probably also fun to work on) applied to make more efficient steam engines.

Happy Birthday, Lord Kelvin

Lord Kelvin didn’t just calculate absolute zero. After his early work in establishing absolute temperature scales, he was instrumental in laying the first telegraph cables across the Atlantic Ocean. Lord Kelvin also invented a machine that predicted tides and a compass that helped the Royal Navy navigate the seas. While my research is not quite that varied, one of the ways I mix up my work is by working at both room temperature and temperatures near absolute zero.

One of the key things I have learned is that measuring temperature, as Lord Kelvin understood it, is almost always harder than you might think. While the ideas are straightforward, making them work in practice is the real challenge.

And this fact makes it even more impressive that Lord Kelvin accurately predicted the temperature of absolute zero … in 1848.

On his 200th birthday, I’ll take a moment to appreciate that.


Comments

Great article, but I had a couple of nits to pick. First, Celsius temperature was referred to as "centigrade" during the time period referenced (and is still incorrectly called centigrade by a significant number of people today). While I think that should be acknowledged to give historical context, I would support NIST and other bureaus of weights and measures internationally putting forth a greater effort to purge "centigrade" from everyday language(s) in the present day. Second, was the scale of what is now called the Celsius thermometer not inverted when originally proposed, so that water boiled at 0 °C and froze at 100 °C? I think that would make an interesting article all by itself.

Indeed, you are correct on both counts. First, the International Committee for Weights and Measures (abbreviated in French as CIPM) resolved in 1948 that "degree Celsius" is the preferred name (see https://www.bipm.org/documents/20126/41483022/SI-Brochure-9-EN.pdf). I should have been more careful in my editing. Second, while Celsius did indeed propose the scale in 1742 with the inversion you describe, by the nineteenth century the scale we know today, with 0 °C as the freezing point and 100 °C as the boiling point, had been well established.

Thank you, Stephen. A very nicely written article that addresses a critical subject at a crucial time for quantum computing and quantum sensors. I am going to share this article in a LinkedIn post.

Good information and really cool (pun intended), but you didn't answer your pivotal question: How low can temperature go? Are we challenging absolute zero, or is the better title: How close to absolute zero can we get? In either case, I'd love to hear your thoughts on both questions.

Excellent question! The third law of thermodynamics states that while one can never reach absolute zero, there is no fundamental limit as to how close you can get. To the best of my knowledge, the lowest temperatures obtained are about 50 pK, for use in a tall "atom fountain" (see https://arxiv.org/pdf/1407.6995). After the atoms are cooled to these low temperatures, they are accelerated upwards such that they fly 10 m up and 10 m back down. Such experiments can measure inertial forces very accurately, and need such low temperatures to prevent the cloud from expanding too much during its 10 m up-and-down flight. So, to return to your question, we are challenging absolute zero, but how close we get is really a practical limit: it ultimately depends on what temperature a given experiment needs.
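A back-of-the-envelope Python sketch shows why such low temperatures matter in a tall fountain. It assumes rubidium-87 atoms, a 10 m rise and fall under g = 9.81 m/s² in vacuum, and simple ballistic expansion of a thermal cloud, σ(t)² = σ₀² + (k_B T/m)t², starting from a point-like cloud and ignoring interactions and any lensing of the cloud. It compares a typical 100 microkelvin laser-cooled cloud with one at 50 picokelvin.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23
ATOMIC_MASS_UNIT_KG = 1.66053906660e-27
RB87_MASS_KG = 86.909 * ATOMIC_MASS_UNIT_KG  # assumption: rubidium-87 atoms
G_M_PER_S2 = 9.81


def flight_time_s(height_m: float) -> float:
    """Time to rise height_m and fall back down under gravity alone (in vacuum)."""
    return 2 * math.sqrt(2 * height_m / G_M_PER_S2)


def cloud_size_m(temp_k, time_s, initial_size_m=0.0, mass_kg=RB87_MASS_KG):
    """RMS cloud radius along one axis after free ballistic expansion.

    sigma(t)^2 = sigma0^2 + (k_B T / m) t^2 for a thermal, non-interacting cloud.
    """
    sigma_v = math.sqrt(BOLTZMANN_J_PER_K * temp_k / mass_kg)
    return math.sqrt(initial_size_m ** 2 + (sigma_v * time_s) ** 2)


t = flight_time_s(10.0)
for temp in (100e-6, 50e-12):
    print(f"T = {temp:.0e} K: cloud grows to about {cloud_size_m(temp, t) * 1e3:.2f} mm")
```

Under these assumptions, the roughly 2.9 s flight spreads a 100 microkelvin cloud to nearly 30 cm, while a 50 picokelvin cloud grows by only about 0.2 mm.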

In this passage it is talking about temperature and how you need different temperatures for different experiments.

I liked it.

For your amusement: Baron Kelvin's name comes from the River Kelvin, which flows past Glasgow into the River Clyde. https://en.wikipedia.org/wiki/River_Kelvin