Summary

Designing artificial intelligence (AI) from the device up could unlock improvements in critical metrics such as the energy-delay product and enable unique networks that would potentially be too cumbersome to train using an algorithmic approach. We are investigating (fabricating, measuring, and modeling) novel devices for this biologically inspired approach to computing. Devices of interest include hybrid magnetic-superconducting devices, which have many properties that make them a natural choice for bio-inspired computing. For example, Josephson junctions can produce a voltage spike that is analogous to the action potential produced by a neuron in the brain, but on a time scale that is nearly 8 orders of magnitude faster. Magnetic tunnel junctions are another set of devices of interest. These devices could exploit the thermal energy present in the system to aid in probabilistic-style computations. The underlying goal of this effort is to find a way to let the physics of the devices do the work of the computation.

Description

Here is a brief description of our work with links to recent papers from our investigations, broadly classified as experimental and modeling. A brief overview of Josephson junction-based bio-inspired computing can be found in our review article.

Experimental

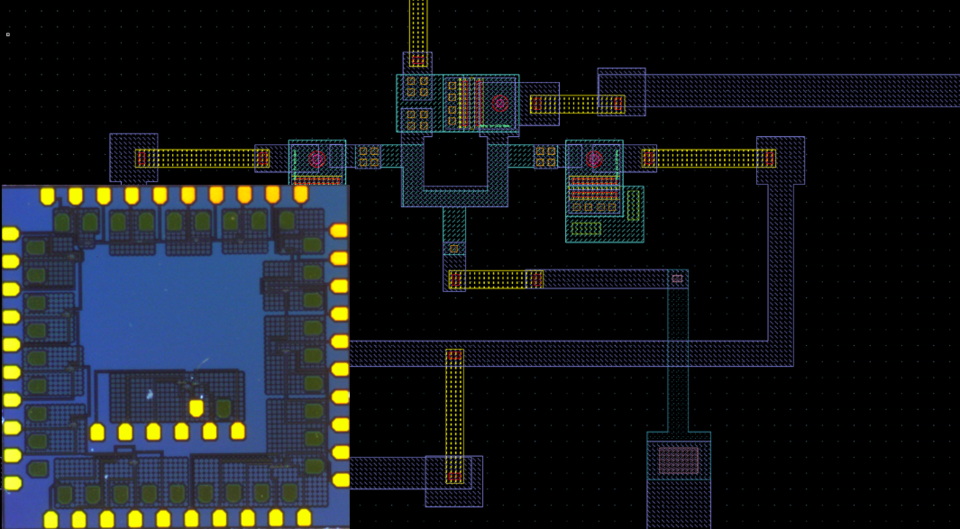

We have facilities to develop our devices from the materials level up. These include dedicated superconducting and magnetic deposition systems, and tools to fabricate sub-50 nm devices and high-complexity circuits in the Boulder Microfabrication Facility (clean room). In addition, we have advanced, high-speed (50 GHz) electrical test and measurement facilities and expertise for both room-temperature and cryogenic devices.

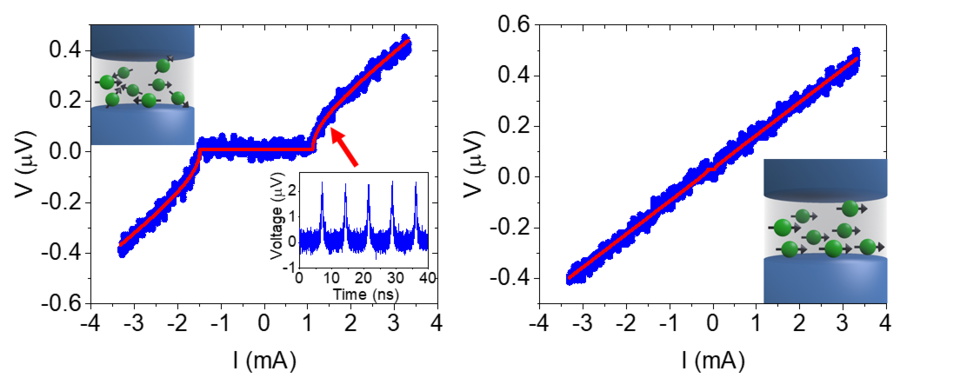

We recently demonstrated hybrid magnetic-superconducting devices that can be used as artificial synapses in a superconducting bio-inspired computational system. These devices are able to provide a near-analog weight for superconducting circuits that is both trainable and non-volatile while working at an extremely low energy scale (sub-attojoule).

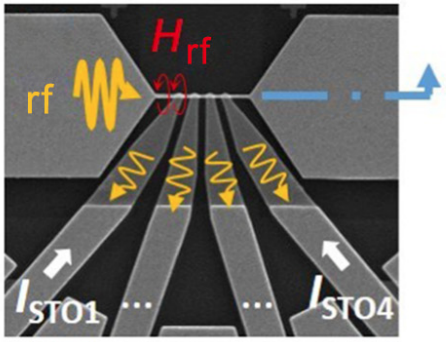

We have also investigated non-Boolean computation using magnetic devices arranged as spin-torque oscillator arrays. The phase-locking dynamics of these coupled oscillator arrays were mapped onto important computational primitives for image recognition, such as convolution operations and degree-of-match (distance) approximation. We are currently exploring stochastic-oscillator magnetic tunnel junctions (MTJs) to perform such tasks in a different, potentially lower-energy manner.
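To give a feel for how phase locking can act as a degree-of-match measure, here is a minimal sketch that swaps in the generic Kuramoto model of coupled phase oscillators. It is not a model of spin-torque oscillators and not the method used in this work. The function name `degree_of_match`, the parameters `gain` and `coupling`, and the 9-element test patterns are all hypothetical. Each oscillator is detuned in proportion to the difference between an input value and a stored template value, and the time-averaged Kuramoto order parameter serves as the match score.

```python
import cmath
import math


def degree_of_match(inputs, template, coupling=1.0, gain=5.0, dt=0.01, steps=5000):
    """Time-averaged Kuramoto order parameter (between 0 and 1) for oscillators
    whose frequency detuning is gain * (input - template). Illustrative only."""
    n = len(inputs)
    # Detuning of each oscillator: zero when the input element matches the template.
    omega = [gain * (x - t) for x, t in zip(inputs, template)]
    # Deterministic initial phases spread over less than half a circle.
    theta = [math.pi * k / n for k in range(n)]
    r_sum, count = 0.0, 0
    for step in range(steps):
        # Complex mean field of the array: magnitude r, phase psi.
        mean_field = sum(cmath.exp(1j * th) for th in theta) / n
        r, psi = abs(mean_field), cmath.phase(mean_field)
        # Explicit Euler step of the all-to-all Kuramoto equations.
        theta = [th + dt * (w + coupling * r * math.sin(psi - th))
                 for th, w in zip(theta, omega)]
        # Average r over the second half of the run, after transients decay.
        if step >= steps // 2:
            r_sum += r
            count += 1
    return r_sum / count


if __name__ == "__main__":
    template = [1, 0, 1, 0, 1, 0, 1, 0, 1]
    one_off = [0, 0, 1, 0, 1, 0, 1, 0, 1]      # differs in one element
    inverted = [1 - t for t in template]        # differs in every element
    for name, pattern in [("match", template), ("one_off", one_off), ("inverted", inverted)]:
        print(name, round(degree_of_match(pattern, template), 3))
```

In this toy model, a perfect match leaves all oscillators at the same frequency, so they lock and the score approaches 1. Each mismatched element detunes one oscillator beyond the locking range and lowers the score.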

Modeling

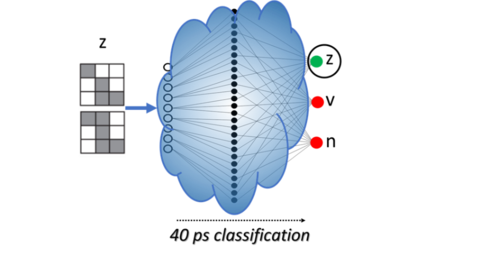

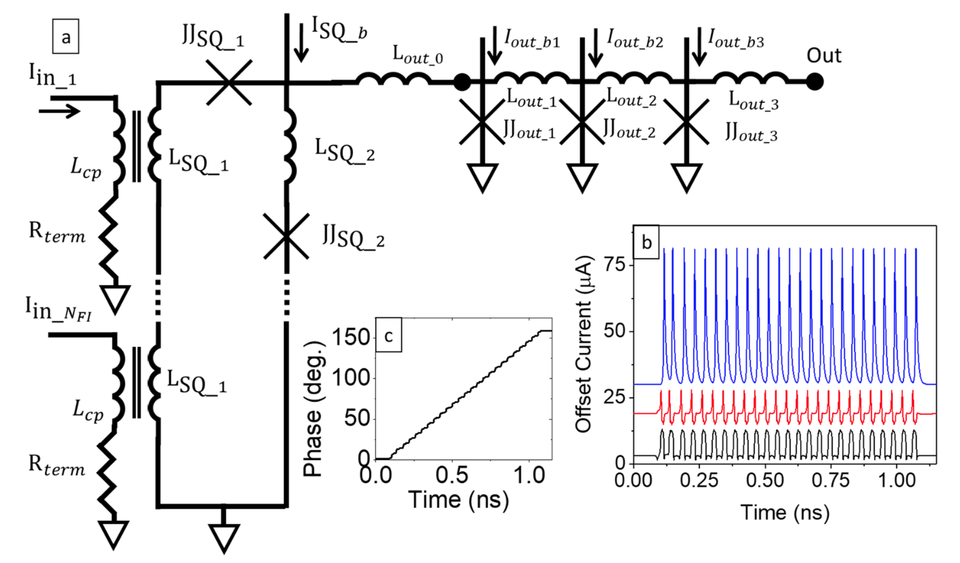

We have used superconducting SPICE simulations to physically model Josephson-junction-based artificial neural networks that can solve a 9-pixel classification problem. In further simulations, we have found that such networks have the potential to run at speeds in excess of 100 GHz.
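As a rough illustration of the spiking behavior that such circuit simulations build on, the sketch below integrates the textbook resistively and capacitively shunted junction (RCSJ) equation in dimensionless form. It is not the SPICE model used in this work. The function names, the damping parameter `beta_c`, and the bias values are assumptions chosen for illustration.

```python
import math


def simulate_rcsj(i_bias, beta_c=0.1, dt=0.01, steps=20000):
    """Integrate the dimensionless RCSJ equation
        beta_c * phi'' + phi' + sin(phi) = i_bias
    where i_bias is the bias current in units of the critical current and
    phi' is the junction voltage in units of I_c * R.
    Returns (phases, voltages) sampled at every time step."""
    phi, v = 0.0, 0.0
    phases, voltages = [], []
    for _ in range(steps):
        # Semi-implicit Euler: update the voltage first, then the phase.
        acc = (i_bias - v - math.sin(phi)) / beta_c
        v += acc * dt
        phi += v * dt
        phases.append(phi)
        voltages.append(v)
    return phases, voltages


def count_spikes(phases):
    """Number of completed 2*pi phase slips; each slip is one voltage pulse."""
    return int(phases[-1] // (2 * math.pi))


if __name__ == "__main__":
    for i_bias in (0.5, 1.5):  # below and above the critical current
        phases, voltages = simulate_rcsj(i_bias)
        print(f"i_bias={i_bias}: spikes={count_spikes(phases)}, "
              f"peak voltage={max(voltages):.2f}")
```

Below the critical current the phase settles to a fixed value and no pulses appear. Above it, the phase slips repeatedly by 2π, and in physical units the time integral of each resulting voltage pulse equals one magnetic flux quantum.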

We have confirmed that the SPICE models that we are using accurately predict the performance of synaptic circuits that we developed, giving us greater confidence in using these modeling tools to explore new architectures.

We have performed SPICE simulations to investigate scaling limits of superconducting architectures. We find no fundamental limitation on the fan-out level and digital communications, meaning that any limitations there will likely result from size considerations and can scale with Josephson junction fabrication advances.

Opportunities

We currently have opportunities for postdocs and graduate students. We also have opportunities for postdoctoral fellows through the National Research Council Associateship Program.

- Neuromorphic Computing with Superconducting and Spintronic Devices

- Spin Electronics

- Phase and Frequency Dynamics of Arrays of Coupled Spin Torque Oscillators

Related Publications

- SuperMind: a survey of the potential of superconducting electronics for neuromorphic computing

- Artificial synapses based on Josephson junctions with Fe nanoclusters in the amorphous Ge barrier

- Fan-out and fan-in properties of superconducting neuromorphic circuits

- Synaptic weighting in single flux quantum neuromorphic computing

- Tutorial: High-speed low-power neuromorphic systems based on magnetic Josephson junctions

- Ultralow power artificial synapses using nanotextured magnetic Josephson junctions