3D in Web Pages

Summary

WebVR is the subset of VR (Virtual Reality) that is displayed within a web page. Information displayed in web pages is among the most widely accessible types of information. Nearly everyone has a web browser, and given a location (a URL), access to information is simple and virtually unlimited. Graphics in web pages have been enhanced by the arrival of, and browser support for, WebGL, a web-based version of the time-tested OpenGL specification. Simply put, if you go to a page with WebGL 3D graphics, it will be displayed correctly in your web browser without the need for any browser plug-ins. This means that complex 3D graphics, with all their rich capabilities, can be placed directly into web pages. Similarly, VR environments can now be placed into web pages via WebVR.
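Because WebGL is built into the browser, a page can check for it by asking a canvas element for a rendering context. The following is a minimal sketch of that check, not code from any NIST tool. The helper name `detectWebGL` and the `CanvasLike` interface are assumptions introduced only for this example, so that the logic can also be exercised outside a browser; `"webgl2"` and `"webgl"` are the standard context identifiers accepted by `getContext`.

```typescript
// Minimal stand-in for the part of a canvas element this sketch needs.
// (Hypothetical interface; a real HTMLCanvasElement satisfies it.)
interface CanvasLike {
  getContext(contextId: string): unknown;
}

// Returns the most capable WebGL context type the canvas can provide,
// or null when no WebGL context is available.
function detectWebGL(canvas: CanvasLike): "webgl2" | "webgl" | null {
  for (const id of ["webgl2", "webgl"] as const) {
    try {
      // getContext returns null when the context type is unsupported.
      if (canvas.getContext(id)) {
        return id;
      }
    } catch {
      // Defensive: treat an unexpected exception as "not supported".
    }
  }
  return null;
}
```

In a browser, calling `detectWebGL(document.createElement("canvas"))` would report which context type, if any, is available, and a page could fall back to a static image when the result is `null`.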

One of the big differences between VR of 20 years ago and VR today is the ability to create and use 360-degree video environments. These image-based environments offer a simple way to bring users and the public to places that are inaccessible, dangerous or simply forbidden. Our first taste of a widely used form of pseudo image-based VR is an application such as Google Street View. People have modified Street View to work with Oculus Rift headsets, and clearly this is just the beginning of more image-based VR on the web.

We can work with three types of images: purely computer-generated graphics, purely image-based environments, and combinations of the two (which leads to a type of Augmented Reality). Each of these types of imagery has different authoring and interaction requirements. Placing these images into web pages presents both opportunities and challenges.

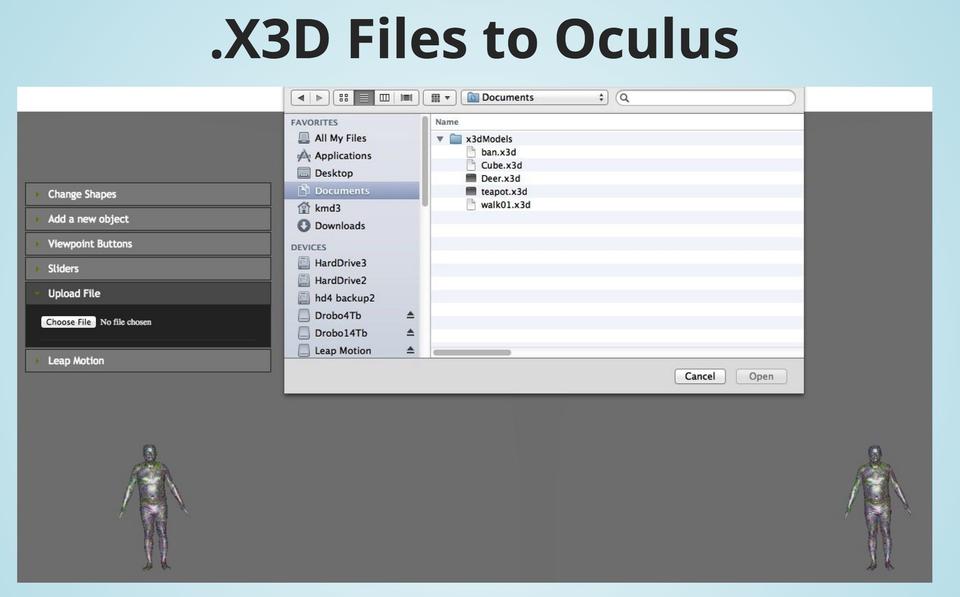

Much of our web-based 3D graphics work over the past years has used the X3D graphics standard. Given our desire to work with this material using an immersive head-mounted display such as the Oculus Rift [1], our summer SURF student Kyle Davis created a tool to convert X3D files into files usable by the Rift. The tool takes X3D files of computer graphics scenes and makes them viewable as stereo pairs, with WebGL code that lets each user adjust the stereo separation. It is available at: http://math.nist.gov/~SRessler/kmd3/Oculus/X3DToRiftTool.html.
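To make the idea of an adjustable stereo separation concrete, the sketch below computes the two eye positions for a stereo pair from a single camera. It is an illustration under stated assumptions, not the code of the conversion tool: the names `Vec3`, `stereoEyePositions` and `separation` are hypothetical, and the camera is assumed to be described by a position, a forward vector and an up vector.

```typescript
// Illustrative sketch only; all names are assumptions for this example.
type Vec3 = [number, number, number];

function cross(a: Vec3, b: Vec3): Vec3 {
  return [
    a[1] * b[2] - a[2] * b[1],
    a[2] * b[0] - a[0] * b[2],
    a[0] * b[1] - a[1] * b[0],
  ];
}

function normalize(v: Vec3): Vec3 {
  const len = Math.hypot(v[0], v[1], v[2]);
  if (len === 0) {
    throw new Error("cannot normalize a zero-length vector");
  }
  return [v[0] / len, v[1] / len, v[2] / len];
}

// Offsets the camera position by half the separation to each side,
// along the axis perpendicular to both the forward and up vectors.
function stereoEyePositions(
  center: Vec3,
  forward: Vec3,
  up: Vec3,
  separation: number
): { left: Vec3; right: Vec3 } {
  const rightAxis = normalize(cross(forward, up));
  const half = separation / 2;
  const offset = (sign: number): Vec3 => [
    center[0] + sign * half * rightAxis[0],
    center[1] + sign * half * rightAxis[1],
    center[2] + sign * half * rightAxis[2],
  ];
  return { left: offset(-1), right: offset(1) };
}
```

Rendering the scene once from each returned position, side by side, gives a stereo pair; exposing `separation` as a user setting is one way to allow the personal adjustment described above.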

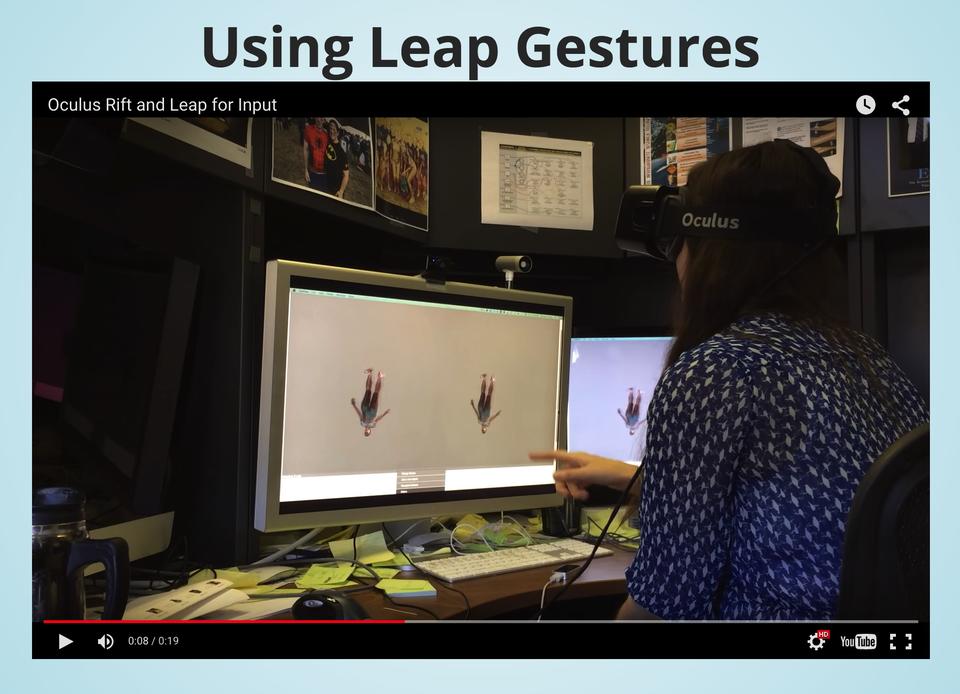

In addition, there are user interface issues when wearing a headset. Your hands effectively disappear and keyboard actions become difficult. One solution is to use additional devices such as the Leap Motion tracking device, which "looks" at your hands and can interpret gestures. A talk by Kyle Davis on integrating the Leap with Oculus and 3D graphics is available at: http://math.nist.gov/~SRessler/kmd3/.

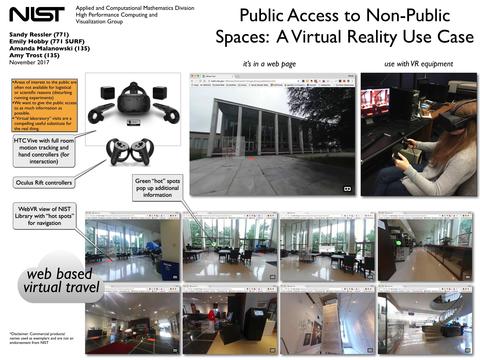

Public Access to Non-Public Locations

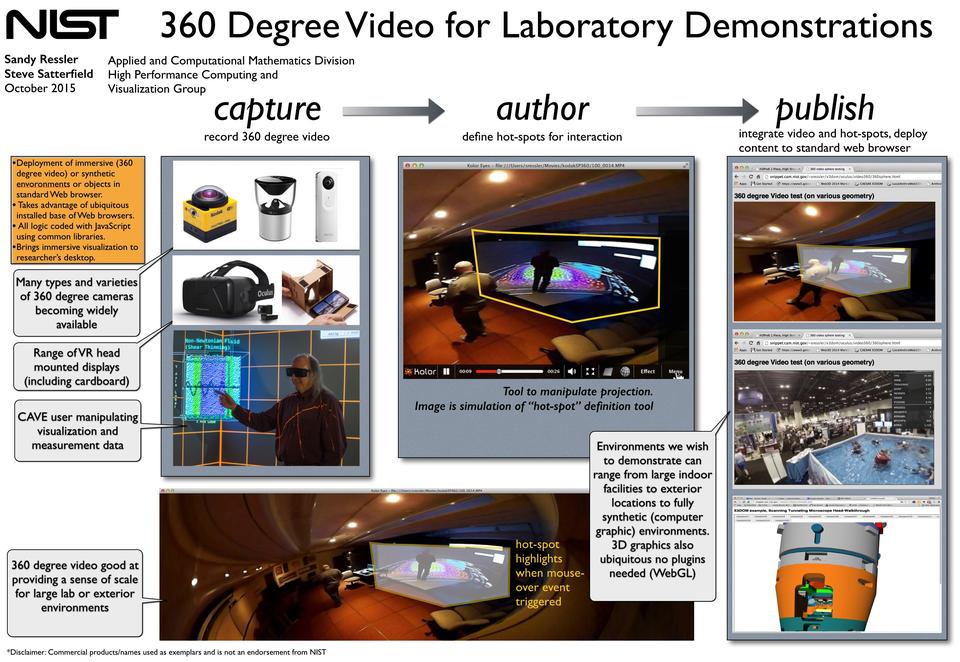

We are developing several processes to create 360-degree images and videos that allow the public to take "virtual tours" of NIST laboratories and spaces not normally open to the public for safety and security reasons.

We have performed test photography of the NIST NetZero house and the NIST Fabrication Technology (shops) facilities. The images below illustrate the type of 360-degree images we are producing.

Credit: Sandy Ressler/NIST

Developing useful WebVR requires the consideration of many usability and user interaction issues. We presented a talk at a SIGGRAPH BOF (Birds of a Feather) session in 2017, available as "Pragmatic WebVR" [4].

Approaches to WebVR

Much of our work has historically been based on X3D (the successor to VRML). X3D content is typically displayed in a web browser with interactive control. A good example of this is the current version of the NIST Digital Library of Mathematical Functions (a project we have advised for many years). Our Scanning Tunneling Microscope model was also originally in VRML and was converted to X3D. We now want to view these models in a more immersive environment using VR. This required the development of tools to convert from X3D, along with other tools to improve the interaction. The illustrations below are from these tools.

Illustrations: X3D to Oculus tool; Leap gestures in use.

During a month-long guest scientist appointment at CSIRO [2,3] in Brisbane, Australia, we worked on immersive 3D web-based video. We produced a prototype workflow and demonstrations of 360-degree videos, using CSIRO equipment to photograph some interior scenes. Most of the work is encapsulated in a summary talk available at: http://math.nist.gov/~SRessler/w3d/CSIROwebvr.html.

Producing 360-degree video for embedding in a web page.

A near-term goal is to create a set of tools and a "production path" that allow people to author 360-degree image-based environments with interactive areas. Given appropriately authored "hot-spots", users will be able to obtain additional information about objects in the scene, all within a web page. The end user will simply go to a URL and (while wearing an appropriate VR headset) look around the environment, selecting points of interest. For example, in an environment depicting a clean room for semiconductor manufacturing (a place with difficult access), the user can explore by clicking on pieces of equipment to get additional information, including supplementary video, about the items of interest.
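One way such hot-spots might be selected is by comparing the direction the user is looking with the direction of each authored hot-spot. The sketch below is a hypothetical illustration, not part of any existing production path: the `HotSpot` shape, the yaw/pitch convention (yaw 0 looks down the negative z axis, positive pitch looks up) and the per-spot angular radius are all assumptions made for this example.

```typescript
// Illustrative sketch only; the data model and names are assumptions.
type Direction = [number, number, number];

interface HotSpot {
  id: string;        // label for the object of interest
  yawDeg: number;    // horizontal angle of the hot-spot, in degrees
  pitchDeg: number;  // vertical angle of the hot-spot, in degrees
  radiusDeg: number; // angular size within which the spot counts as selected
}

const DEG = Math.PI / 180;

// Converts yaw/pitch angles to a unit direction vector.
function toDirection(yawDeg: number, pitchDeg: number): Direction {
  const yaw = yawDeg * DEG;
  const pitch = pitchDeg * DEG;
  return [
    Math.cos(pitch) * Math.sin(yaw),
    Math.sin(pitch),
    -Math.cos(pitch) * Math.cos(yaw),
  ];
}

// Angle between two unit vectors, in degrees.
function angleBetweenDeg(a: Direction, b: Direction): number {
  const dot = a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
  // Clamp to guard against rounding slightly outside [-1, 1].
  return Math.acos(Math.min(1, Math.max(-1, dot))) / DEG;
}

// Returns the hot-spot closest to the view direction, provided the view
// direction falls within that hot-spot's angular radius.
function pickHotSpot(
  viewYawDeg: number,
  viewPitchDeg: number,
  hotSpots: HotSpot[]
): HotSpot | undefined {
  const view = toDirection(viewYawDeg, viewPitchDeg);
  let best: HotSpot | undefined;
  let bestAngle = Infinity;
  for (const spot of hotSpots) {
    const angle = angleBetweenDeg(view, toDirection(spot.yawDeg, spot.pitchDeg));
    if (angle <= spot.radiusDeg && angle < bestAngle) {
      best = spot;
      bestAngle = angle;
    }
  }
  return best;
}
```

A viewer could call `pickHotSpot` with the headset's current orientation on each frame and show the supplementary information for whichever hot-spot is returned. Working with angles rather than pixel coordinates keeps the selection independent of the resolution of the 360-degree image.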

Hypothetical production path for web-based immersive video content.

Publications

[1] S. Ressler, "Using Oculus Rift with your Web Browser for Science", SIGGRAPH 2015 BOF, August 9-13, Los Angeles, CA.

[2] S. Ressler, T. Bednarz, "Image Based WebVR: Creating and Publishing Environments for Browsers and HMDs", seminar at CSIRO Canberra, Feb. 25, 2015.

[3] S. Ressler, "WebVR or What's That 3D Environment doing in my Web Browser", Computational and Simulation Sciences (CSIRO) conference, Feb. 13, 2015.

[4] S. Ressler, et al., "Pragmatic WebVR", SIGGRAPH 2017 BOF, August, Los Angeles, CA.
