Taking Measure

Just a Standard Blog

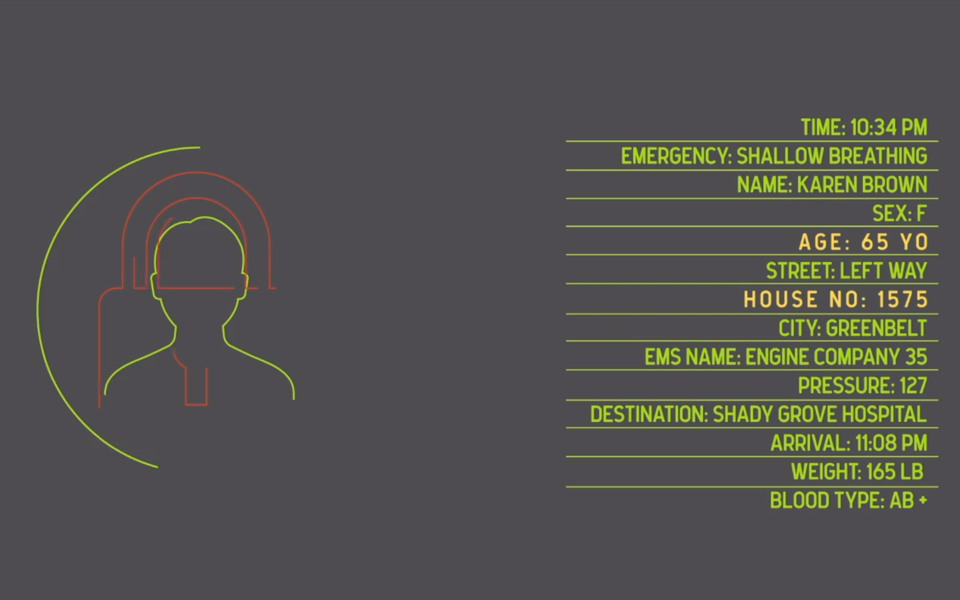

Emergency response generates a lot of data, including people's names, addresses, phone numbers, ages and sex, all of which is necessary for assessing and addressing the situation. Some of that data may also be useful for treating future emergencies, but right now there's no secure way to disentangle individuals' personally identifiable information from the data that could serve emergency response generally. Enter differential privacy. For today's Taking Measure, we sat down with NIST computer scientist Mary Theofanos to discuss differential privacy and how it could be used to expose valuable information without exposing our personal details.

What is differential privacy?

The concept of differential privacy is actually easy to understand.

Let’s say there’s a dataset that has your personal information in it and you don’t want your data to be identifiable in that dataset. The easy answer is that we just eliminate your row of data. But we can't do that for everybody because then we wouldn’t have any data. And even if we just eliminated rows of individuals who specifically opted out, there’s a good chance we wouldn’t have enough data to analyze, which means we would never discover the interesting trends or actionable information in the data.

With differential privacy, you take a dataset that has personal information in it and make it so that the personal information is not identifiable. You essentially add noise to the data, but in a very prescribed, mathematically rigorous way that preserves the properties of the overall data while hiding individual identities.
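As a rough illustration of what adding noise in a prescribed way can look like, here is a minimal Python sketch of one common technique, the Laplace mechanism, applied to a simple count. The function names, the made-up ages, and the choice of ε = 0.5 are assumptions for illustration only; they are not drawn from any NIST tool or from the challenge discussed later.

```python
import random

def laplace_noise(scale, rng):
    # The difference of two independent exponential draws with rate 1/scale
    # follows a Laplace distribution centred at 0 with the given scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(values, predicate, epsilon, rng):
    """Return a noisy count of the values that satisfy predicate."""
    true_count = sum(1 for v in values if predicate(v))
    # Adding or removing one person changes a count by at most 1,
    # so noise with scale 1/epsilon is used (smaller epsilon, more noise).
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(0)                     # fixed seed so runs are repeatable
ages = [23, 35, 41, 67, 72, 80, 19, 55]    # made-up data, not real records
noisy = private_count(ages, lambda a: a >= 65, epsilon=0.5, rng=rng)
print(f"noisy count of people aged 65+: {noisy:.2f}")
```

Any single noisy answer may be off by a few, but averaged over many queries or many records the overall picture stays close to the truth, which is the balance the paragraph above describes.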

Can you give us an example of how differential privacy can be used to solve a problem?

Differential privacy can be used to evaluate different care strategies and patient outcomes. For instance, 911 call data could tell us a lot about patient outcomes, but it has a great deal of PII. If we de-identify that data, that is, if we take out all the personally identifiable data, like name and street address, we can answer questions such as how outcomes relate to the length and type of care the patient received on scene. Is it better to do more on the scene to try and aid the patient, which delays the trip to the hospital, or is it better to get to the ER quickly?

Is this a new concept?

Differential privacy has been around since 2006, but it’s largely been used in the academic space up to now. From a practical application perspective, government agencies and organizations are just now starting to use it.

One reason it's gaining attention now is that other techniques, such as anonymizing the data, have recently been shown to suffer from weaknesses that may allow reidentification of the data. Another reason it’s coming to the forefront is artificial intelligence. If artificial intelligence tools have good data going in, we’re going to see better results. Right now, we often have to use training data, or completely made-up data, because of privacy concerns. But if applying differential privacy techniques to real datasets allows you to use data that you otherwise couldn’t, you’re going to get better results.

Also, more organizations are now looking at their statistical databases and trying to figure out how they can make their data accessible to other organizations that may want to run statistical analyses and identify trends.

What is NIST’s role?

NIST is a measurement organization, and that’s the role we’re playing in this space.

Say you run a dataset through an algorithm, and now you have a differentially private dataset. We refer to that as a synthetic dataset. NIST’s role is to answer: How good is that synthetic dataset? How well does it represent the original dataset, even though you've added the noise? Because there's a utility curve here. You have to provide privacy, but you also have to keep the data as close as possible to the original data to ensure it’s useful and accurate.

So, our role is to help come up with metrics and measurement techniques to determine how good the algorithms are.
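To make the idea of measuring how well a synthetic dataset represents the original a little more concrete, here is a minimal Python sketch of one simple, generic comparison: the total variation distance between the category frequencies of a single column. This is an assumed stand-in chosen for illustration, not the metric NIST used, and the call-type values are invented.

```python
from collections import Counter

def total_variation_distance(original, synthetic):
    """Half the sum of absolute differences between two empirical distributions.

    Returns 0.0 when the category frequencies match exactly and 1.0 when
    the two columns share no categories at all.
    """
    p = Counter(original)
    q = Counter(synthetic)
    n_p, n_q = len(original), len(synthetic)
    categories = set(p) | set(q)
    return 0.5 * sum(abs(p[c] / n_p - q[c] / n_q) for c in categories)

# Invented call-type columns standing in for an original and a synthetic dataset.
original = ["fire", "medical", "medical", "police", "medical", "fire"]
synthetic = ["fire", "medical", "police", "police", "medical", "fire"]

score = total_variation_distance(original, synthetic)
print(f"total variation distance: {score:.3f}")
```

A lower score means the synthetic column tracks the original more closely. A single-column measure like this says nothing about relationships between columns, which is part of why choosing the right metrics is an open question.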

How is NIST doing that?

NIST just completed a Differential Privacy Synthetic Data Challenge run by our Public Safety Communications Research program. During the challenge, we evaluated a variety of differential privacy algorithms provided by academic institutions and private companies. We selected winners based on which algorithms performed best this time, on this data, with respect to the performance metrics that we established.

But the question is, are these the right performance metrics? That could be debatable as well, which is why we need to keep exploring what the right performance metrics are.

We're considering a second challenge to continue our work.

Who stands to benefit the most from the use of differential privacy?

The stakeholders are varied, but it’s clear that the public safety community — first responders, law enforcement, fire, call dispatch centers — all have a great deal of data that would be useful for big data analysis. But all of that data is full of personally identifiable information (PII) that can’t be shared. Differential privacy is one solution for creating shareable PII-free data that can be analyzed for global or local trends.

The thing is, once we start to gather and evaluate the information from those datasets, then society as a whole will benefit. It could lead to better communication technologies, faster response times from first responders, and better decisions on what to put in buildings from an IoT perspective.

And public safety is just one domain where this is relevant. Differential privacy could be used to identify trends in a number of other arenas, including medicine, transportation, energy, agriculture, economics and financial services. There are just so many ways it could benefit everybody.

What made you want to get involved in the differential privacy space?

A couple of things. First, while I'm not a cybersecurity expert, I actually started my career in cybersecurity and worked for several agencies looking for vulnerabilities. Because of that, I'm very concerned about my personal privacy and I take a lot of measures to minimize my exposure from a privacy perspective.

But I also recognize the value of data today and that sharing data and merging large databases in many ways can improve all sorts of outcomes. I recently read a book called Invisible Women: Data Bias in a World Designed for Men, by Caroline Criado Perez, which talks about how data is missing on large segments of the population. If we're using incomplete data today to show trends and how things are moving forward, and we’re using that data to train AI tools, then we're propagating bad data.

So, given the decisions we make today may impact us for the next 30, 40 or 50 years, how do we fill in those gaps and ensure we have good data and the most data possible? Especially when some people don’t necessarily want to be part of those datasets if their PII is going to be exposed.

I realized that differential privacy is a solution to that issue.

What should we be concerned about when it comes to differential privacy?

There's obviously controversy with any new technique. Some people are concerned that differential privacy may hide some trends or that some of the techniques used could change the data in some way such that it's not reflective of the actual dataset. This is part of the measurement problem that NIST is trying to address. At what point are the trends hidden or does the deep analysis change because we applied differential privacy? And we don't really know the answers to all those questions yet.

Comments

Hi Mina!

The definition of differential privacy uses exp(ε) to bound privacy loss (rather than just ε). A big advantage of this formulation is that it fits nicely with commonly-used noise distributions.

For example, Laplace noise of scale 1/ε is often used to achieve differential privacy; the probability density function of the Laplace distribution with this scale is (ε/2)⋅exp(-ε|x|). The use of the exponential function in the definition of differential privacy means it is very easy to show that the Laplace mechanism satisfies differential privacy.

Best,

Joe
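The point in the reply above can be checked numerically. The following minimal Python sketch takes two hypothetical neighbouring counts that differ by 1, centres a Laplace density of scale 1/ε on each, and confirms that the ratio of the two densities never exceeds exp(ε) over a grid of possible outputs. The counts, the value of ε, and the grid are all assumptions chosen for illustration.

```python
import math

def laplace_pdf(x, mu, epsilon):
    """Density of a Laplace distribution centred at mu with scale 1/epsilon."""
    return (epsilon / 2.0) * math.exp(-epsilon * abs(x - mu))

epsilon = 0.5
# True counts on two neighbouring datasets (hypothetical values differing by 1).
count_a, count_b = 10, 11

# Possible noisy outputs from -20.0 to 40.0 in steps of 0.1.
outputs = [i / 10.0 for i in range(-200, 401)]

# Largest ratio of the two output densities over the grid.
max_ratio = max(
    laplace_pdf(x, count_a, epsilon) / laplace_pdf(x, count_b, epsilon)
    for x in outputs
)

bound = math.exp(epsilon)
print(f"max ratio: {max_ratio:.6f}, exp(epsilon): {bound:.6f}")
print("bound holds:", max_ratio <= bound + 1e-9)
```

The ratio simplifies to exp(ε(|x − 11| − |x − 10|)), and since the two absolute values can differ by at most 1, the exponent never exceeds ε. That is why the exponential form of the definition pairs so neatly with Laplace noise.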

Would you please advise me on the reason for using the exponential formula in DP?