Taking Measure

Just a Standard Blog

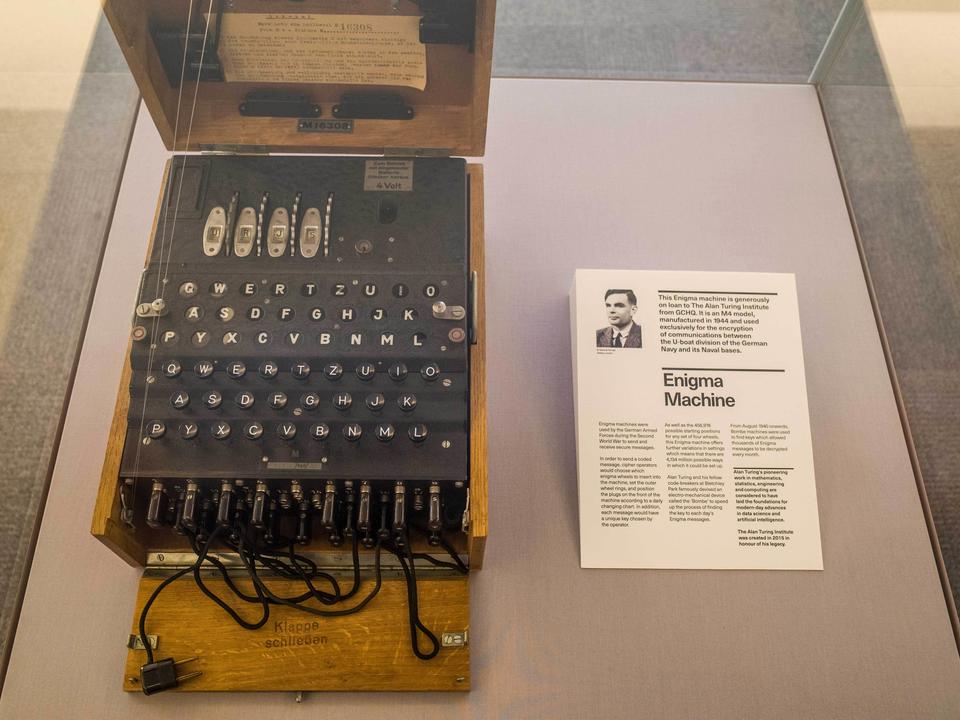

An enigma machine on display outside the Alan Turing Institute entrance inside the British Library, London.

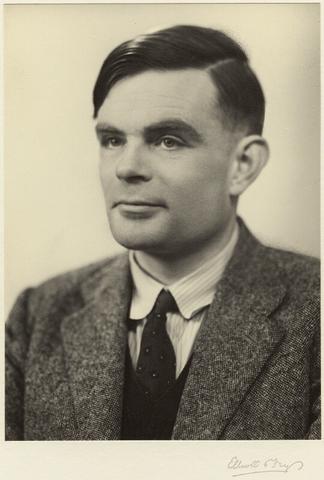

Suppose someone asked you to devise the most powerful computer possible. Alan Turing, whose reputation as a central figure in computer science and artificial intelligence has only grown since his untimely death in 1954, applied his genius to problems such as this one in an age before computers as we know them existed. His theoretical work on this problem and others remains a foundation of computing, AI and modern cryptographic standards, including those NIST recommends.

The road from devising the most powerful computer possible to cryptographic standards has a few twists and turns, as does Turing’s brief life.

In Turing’s time, mathematicians debated whether it was possible to build a single, all-purpose machine that could solve all problems that are computable. For example, we can compute a car’s most energy-efficient route to a destination, and (in principle) the most likely way in which a string of amino acids will fold into a three-dimensional protein. Another example of a computable problem, important to modern encryption, is whether or not bigger numbers can be expressed as the product of two smaller numbers. For example, 6 can be expressed as the product of 2 and 3, but 7 cannot be factored into smaller integers and is therefore a prime number.

Some prominent mathematicians proposed elaborate designs for universal computers that would operate by following very complicated mathematical rules. It seemed overwhelmingly difficult to build such machines. It took the genius of Turing to show that a very simple machine could in fact compute all that is computable.

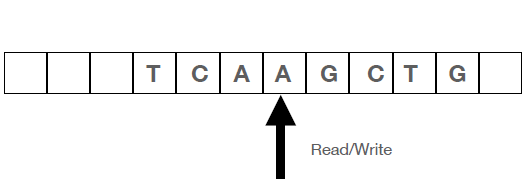

His hypothetical device is now known as a “Turing machine.” The centerpiece of the machine is a strip of tape, divided into individual boxes. Each box contains a symbol (such as A,C,T, G for the letters of genetic code) or a blank space. The strip of tape is analogous to today’s hard drives that store bits of data. Initially, the string of symbols on the tape corresponds to the input, containing the data for the problem to be solved. The string also serves as the memory of the computer. The Turing machine writes onto the tape data that it needs to access later in the computation.

The device reads an individual symbol on the tape and follows instructions on whether to change the symbol or leave it alone before moving to another symbol. The instructions depend on the current “state” of the machine. For example, if the machine needs to decide whether the tape contains the text string “TC” it can scan the tape in the forward direction while switching among the states “previous letter was T” and “previous letter was not C.” If while in state “previous letter was T” it reads a “C,” it goes to a state “found it” and halts. If it encounters the blank symbol at the end of the input, it goes to the state “did not find it” and halts. Nowadays we would recognize the set of instructions as the machine’s program.

It took some time, but eventually it became clear to everyone that Turing was right: The Turing machine could indeed compute all that seemed computable. No number of additions or extensions to this machine could extend its computing capability.

To understand what can be computed it is helpful to identify what cannot be computed. In a previous life as a university professor I had to teach programming a few times. Students often encounter the following problem: “My program has been running for a long time; is it stuck?” This is called the Halting Problem, and students often wondered why we simply couldn’t detect infinite loops without actually getting stuck in them. It turns out a program to do this is an impossibility. Turing showed that there does not exist a machine that detects whether or not another machine halts. From this seminal result followed many other impossibility results. For example, logicians and philosophers had to abandon the dream of an automated way of detecting whether an assertion (such as whether there are infinitely many prime numbers) is true or false, as that is uncomputable. If you could do this, then you could solve the Halting Problem simply by asking whether the statement “this machine halts” is true or false.

Turing went on to make fundamental contributions to AI, theoretical biology and cryptography. His involvement with this last subject brought him honor and fame during World War II, when he played a very important role in adapting and extending cryptanalytic techniques invented by Polish mathematicians. This work broke the German Enigma machine encryption, making a significant contribution to the war effort.

Turing was gay. After the war, in 1952, the British government convicted him for having sex with a man. He stayed out of jail only by submitting to what is now called “chemical castration.” He died in 1954 at age 41 by cyanide poisoning, which was initially ruled a suicide but may have been an accident according to subsequent analysis. More than 50 years would pass before the British government apologized and “pardoned” him (after years of campaigning by scientists around the world). Today, the highest honor in computer sciences is called the Turing Award.

Turing’s computability work provided the foundation for modern complexity theory. This theory tries to answer the question “Among those problems that can be solved by a computer, which ones can be solved efficiently?” Here, “efficiently” means not in billions of years but in milliseconds, seconds, hours or days, depending on the computational problem.

For example, much of the cryptography that currently safeguards our data and communications relies on the belief that certain problems, such as decomposing an integer number into its prime factors, cannot be solved before the Sun turns into a red giant and consumes the Earth (currently forecast for 4 billion to 5 billion years). NIST is responsible for cryptographic standards that are used throughout the world. We could not do this work without complexity theory.

Technology sometimes throws us a curve, such as the discovery that if a sufficiently big and reliable quantum computer is built it would be able to factor integers, thus breaking some of our cryptography. In this situation, NIST scientists must rely on the world’s experts (many of them in-house) in order to update our standards. There are deep reasons to believe that quantum computers will not be able to break the cryptography that NIST is about to roll out. Among these reasons is that Turing’s machine can simulate quantum computers. This implies that complexity theory gives us limits on what a powerful quantum computer can do.

But that is a topic for another day. For now, we can celebrate how Turing provided the keys to much of today’s computing technology and even gave us hints on how to solve looming technological problems.

More Taking Measure Posts About Historical Figures in Science

- What's in a Name? The Magnetic Weber

- What's in a Name? The Tesla

- Under Pressure: Blaise Pascal, the Barometer and Bike Tires

- Henry Moseley: A Patriotic Scientist Who Changed the Periodic Table — and Then Went Off to War

- Ada Lovelace: The World’s First Computer Programmer Who Predicted Artificial Intelligence

About the author

Related Posts

Comments

I am eternally grateful to Alan Turing. I grew up next door to Arthur C. Clarke (https://en.wikipedia.org/wiki/Arthur_C._Clarke) in Sri Lanka and I learned about Alan Turing at an early age thanks to Mr. Clarke.

Small correction to this one - "His involvement with this last subject brought him honor and fame during World War II, when he played a very important role in adapting and extending cryptanalytic techniques invented by Polish mathematicians. This work broke the German Enigma machine encryption, making a significant contribution to the war effort."

Polish mathematicians broke the Enigma machine. They have also developed the Bomb machine. Then work was taken over by Turing. Turing's contribution was significant as he was able to extend the Bomb concept along with further development of Enigma.

The "Polish mathematicians" mentioned in the article had their names: Marian Rejewski, Jerzy Różycki and Henryk Zygalski.

These guys actually broke Enigma.

The contribution of Turing was "industrializing" the technique, as we would say today.

Wasn't "an automated way of detecting whether an assertion ... is true or false" already proved impossible by Kurt Gödel's incompleteness theorem in 1931 or was Turing's result somehow distinct from that finding?

Mr Touring's deduction that the way to solve mathematical problems accurately and consistently was to use a machine became the foundation of computer science. He also worked with Tommy Flowers (Post Office engineer) to create the Colossus I and Colossus II. The latter was the first digital computer and helped break the encrypted messages of the German Geheimschreiber that used many rotors instead of four. We might also credit Ada Lovelace who in the 1800s came up with the concept of binary (digital) computation.

lol

This Website is useful!

Take the Enigma and turn a message into a string of characters, which are now appear to us random. In the next step use the alphabet of the language you used and pair (modular arithmetic) each of these characters with randomly selected characters from the alphabet and you have created a cipher that passes Claude Shannon's test required to claim perfect secrecy. Why does it happen? The first step turns the plaintext and it will appear random to us, but won't hold for the Turing test. The second step will create a cipher in which each character holds a N = 1 probability. Without any additional information all possible character combinations could be potentially the original plaintext. Naturally one doesn't use an alphabet but instead hex or binary code that can deal with all file formats including Unicode. Adding compression to it that even reduces already compressed files (down to 12% of the original compressed file) will make it impossible for any attacker to crack the cipher. Simple reason here too: The length of the cipher doesn't tell anything about the length of the plaintext. Key string for sender and recipient could be 16 hexadecimal characters or 8 bits, but that depends on the encryption they are using.

Good day! I appreciate you.

I watched The Imitation Game over the weekend, and at the same time, A Beautiful Mind. Similar storylines with smart scientific and mathematical monologues. I tried to create thematic programs, I provide screenshots with code and description.

"Little knowledge leads away from God, much knowledge leads to Him." Under the influence of Gödel's ideas, Turing began to develop an algorithmic method for studying the decidability of calculi. This led him to create a universal computational model of the Turing machine. It is appropriate to note that the Turing machine contained all the necessary elements for building a universal software computer.

Incompleteness theorems have become widely known and have many interpretations. In particular, it is assumed that the theorems imply the fundamental impossibility of formalizing all the laws of thinking, that is, thinking cannot be expressed in the form of some strict formula, precise instructions, an algorithm, using which it would be possible to calculate the results of the thought process or create "thinking" artificial systems.

In turn, this means a fundamental advantage of natural intelligence over machine intelligence, since the work of artificial systems is always based on some algorithm embedded in the system during its creation.

A "formal system" in the most general sense is a system of formulas given as axioms, using which new formulas can be obtained. It can also be said that a formal system is some abstract language in which, according to strict rules of combinations of letters and words, one can derive other statements from one another.

Gödel's theorems establish fundamental limitations of formal arithmetic and, as a consequence, of any sufficiently rich formal system in which one can define the basic arithmetic concepts: natural numbers, 0, 1, addition and multiplication.

Metaphorically speaking, A. Turing created a philosophical substrate, an environment, partly a discourse, but the concept of "artificial intelligence" was extracted from it by other researchers. A. Turing was able to substitute simple imaginary machines in Gödel's incompleteness theorem instead of arithmetic language and proved that with the help of the resulting "Turing machine" one can calculate everything that can be calculated by a certain algorithm.

The most important component of A. Turing's hypotheses about artificial intelligence is the rejection of the descriptive approach to thinking machines. If it is impossible to solve the problems of thinking and consciousness in a historically observable period, then we just need to remove the "machine" from the description.

A thought experiment is required, the essence of which is so simple that it is intuitively understandable to the observer, and the measure of thinking in this experiment is again a person: "if a person cannot distinguish between the intelligent behavior of a machine and a person, then can we say that the machine is endowed with thinking and intelligence?"

The imitation game, according to A. Turing, became the best form of conducting such an experiment. If a human observer cannot distinguish between a machine and a person, relying only on their activity, but not taking into account their appearance, then a computer (a thinking machine) can think.

A. Turing was able to apply the concepts of a universal machine and the computability of algorithms formulated in previous decades to the philosophical problems of consciousness and body (matter). The direct connection between mathematics and machine thinking is probably the strongest part of A. Turing's behaviorist approach to the set of problems that would later become the science of artificial intelligence.

A. Turing paved the way not only for philosophical functionalism, but also for a line of research in artificial intelligence whose followers argued that at some point in the future, computer programs could surpass human intelligence by simply writing a program that would reproduce inputs and outputs in the correct sequence.

Like any system, the inherent functional features of natural intelligence - and, as a result, limitations - mean that even qualitatively less complex artificial systems will be able to surpass it beyond these limits and solve individual human problems better than humans themselves.

For example, due to a more accurate match between the system's design and the task, or due to the volume or speed of simpler work performed by the system. As a result, no artificial intelligence will solve the entire complex of human problems better than humans themselves, but it can be better than humans in many individual problems.

Just as a lever can help lift a load, so a neural network can help solve a problem. In both cases, we can say that a person solves only part of the problem - creates a lever or a neural network, or that a lever lifts loads better than a person, and a neural network solves problems better than a person.

But in reality, a person only uses different devices in the context of his intellectual capabilities, so no knowledge, whether it is reflected in a theory, mechanism, neural network or any other system, will express the entire complexity of thinking in which it arises.

Acting at the level of specific tasks set by a person, artificial intelligence will never be able to generalize individual solutions into global strategies better than a person, always remaining a tactical means of human intelligence.

Thanks.