It's a staple of the TV crime drama: a ballistics expert tries to match two bullets using a microscope with a split-screen display. One bullet was recovered from the victim's body, and the other was test-fired from a suspect's gun. If the striations on the bullets line up (cue the sound of a cell door slamming shut), the bad guy is headed to jail.

In the real world, identifying the firearm used in a crime is more complicated, but the basic setup is accurate. Ballistics examiners do match bullets visually, and they have been doing it this way for almost 100 years. But testimony based on visual examination alone leaves out something important: a measure of how strong the match is.

"When an expert testifies that two bullets are a match, the jury wants to know, 'How good a match is it?'" said Xiaoyu Alan Zheng, a mechanical engineer who conducts forensic science research at the National Institute of Standards and Technology (NIST). "No forensic results have zero uncertainty."

Researchers are developing statistical methods for quantifying that uncertainty, and the main obstacle they face is a lack of sufficient data. This month NIST released the largest open-access* database of its kind, the NIST Ballistics Toolmark Research Database, to help remove that obstacle.

The database effort, led by Zheng, is partly a response to a 2009 report from the National Academy of Sciences, which highlighted the need for statistical methods to estimate uncertainty when matching ballistic and other types of forensic pattern evidence. Development of the database was funded largely by a grant from the National Institute of Justice.

Sources of Uncertainty

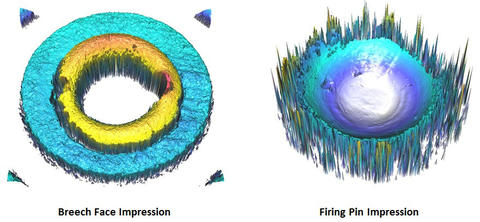

When matching a bullet to a gun, examiners look at striations that are carved into the bullet by rifling in the gun's barrel. If the cartridge case is left behind, they can also look at impressions left on it by the weapon's breech face and firing pin.

But these clues can sometimes be misleading. For instance, two gun barrels manufactured consecutively may produce bullets with very similar markings, which can lead to false matches. Conversely, the markings a gun leaves can change over a short time because of wear on its parts or debris accumulating in the barrel. If that happens, a single firearm might produce bullets that look as if they were fired from different guns.

These confounding factors introduce uncertainty into examination results. Researchers would like to quantify this uncertainty using statistical methods, and to do that they need large databases of test-fired bullets and cartridge cases. The databases already in use for solving crimes, such as the National Integrated Ballistics Information Network (NIBIN), are proprietary and contain sensitive information. Researchers cannot download bulk data from them for use in statistical studies.

The NIST database, by contrast, is open-access*, and its data are freely available.
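To make "quantify this uncertainty" a little more concrete: one common way to report an error rate, such as how often known non-matching pairs get called a match, is with a confidence interval, and that interval's width depends on how many comparisons the estimate rests on. The sketch below uses the Wilson score interval, a standard textbook choice. It is not necessarily the method forensic statisticians use, the function name `wilson_interval` is hypothetical, and the counts in the example are invented for illustration, not NIST results.

```python
import math


def wilson_interval(errors: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for an observed error rate errors/trials.

    Illustrative only: a textbook interval, not a forensic-standard method.
    """
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = errors / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half_width = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Hypothetical counts: the same observed false-match rate (1%),
# estimated from a small and a large set of known non-matching pairs.
for errors, trials in [(1, 100), (100, 10_000)]:
    low, high = wilson_interval(errors, trials)
    print(f"{errors}/{trials}: {low:.4f} to {high:.4f}")
```

Both rows report the same 1% rate, but the interval built on 10,000 comparisons is far narrower than the one built on 100. That is the statistical reason researchers want large, open databases of test fires.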

Standardized File Formats

To seed the database, Zheng attended forensics and law enforcement conferences, asking agencies to test-fire every 9-mm firearm in their reference collections. Nine millimeter is the caliber most commonly used in crimes.

After completing the test fires, labs sent the bullets and cartridge cases to Zheng at NIST, along with data on the guns that fired them. At NIST, technicians scanned these samples using a microscope that produces a high-resolution, 3-D topographic surface map: a virtual model of the physical object itself.

These surface maps capture more detail for comparison than the two-dimensional images traditionally used to match bullets. For this reason, the field of forensic firearms identification is starting to transition to 3-D.

To facilitate the transition, NIST and microscope manufacturer Cadre Forensics co-founded the Open Forensic Metrology Consortium (OpenFMC). This group, which includes members from industry, academia, and government, has agreed on a standard file format for 3-D topographic surface maps used in ballistic imaging. NIST's new research database will use this open standard, making it easy for researchers to share data, though the database will also accept traditional two-dimensional images.

The database currently holds only about 1,600 test fires, a relatively small number. "But it's like the first forensic DNA databases," Zheng said. "They started off small but filled up quickly."

Open-Source Methods

In the meantime, the data that is available is already proving useful. Eric Hare, a Ph.D. student in statistics at Iowa State University, is using the NIST research database to develop bullet-matching algorithms. His work is supported by CSAFE—the Center for Statistics and Applications in Forensic Evidence—which is funded by NIST.

Hare's algorithms are based on machine learning, which allows a computer to match patterns without being explicitly programmed how to do so. Hare "trains" his algorithms by feeding them pairs of bullets and telling the computer whether they match or not. The computer analyzes the physical features of those bullet pairs and develops a set of statistical rules for predicting whether a pair of bullets matches.
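This article does not describe Hare's actual features or models, so the following is only a toy illustration of the train-then-test loop: learn a rule from labeled pairs, then score it on pairs whose labels were withheld during training. Everything here is an assumption chosen for brevity: bullets are simulated as 1-D striation profiles (a hypothetical barrel "signature" plus noise), the single feature is the correlation between two profiles, and the "statistical rule" is one learned cutoff. The names `correlation`, `make_pairs`, `train_threshold`, and `accuracy` are hypothetical. Real bullet-matching algorithms use richer features and more flexible models.

```python
import math
import random


def correlation(a: list[float], b: list[float]) -> float:
    """Pearson correlation between two equal-length striation profiles."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def make_pairs(rng: random.Random, n_pairs: int, length: int = 200) -> list[tuple[float, bool]]:
    """Simulate labeled bullet pairs as (feature score, is_match).

    Hypothetical model: each barrel has a random signature profile, and each
    fired bullet is that signature plus independent noise. Half the pairs
    come from the same barrel.
    """
    def signature() -> list[float]:
        return [rng.gauss(0, 1) for _ in range(length)]

    def fire(sig: list[float]) -> list[float]:
        return [s + rng.gauss(0, 0.5) for s in sig]

    pairs = []
    for i in range(n_pairs):
        is_match = i % 2 == 0
        sig_a = signature()
        sig_b = sig_a if is_match else signature()
        pairs.append((correlation(fire(sig_a), fire(sig_b)), is_match))
    return pairs


def train_threshold(pairs: list[tuple[float, bool]]) -> float:
    """Learn the cutoff that best separates matches from non-matches in training data."""
    scores = sorted(score for score, _ in pairs)
    # Candidate cutoffs: midpoints between neighboring training scores.
    candidates = [(lo + hi) / 2 for lo, hi in zip(scores, scores[1:])]
    best_cutoff, best_correct = candidates[0], -1
    for cutoff in candidates:
        correct = sum((score >= cutoff) == is_match for score, is_match in pairs)
        if correct > best_correct:
            best_cutoff, best_correct = cutoff, correct
    return best_cutoff


def accuracy(cutoff: float, pairs: list[tuple[float, bool]]) -> float:
    """Fraction of pairs whose predicted label agrees with the true label."""
    return sum((score >= cutoff) == is_match for score, is_match in pairs) / len(pairs)


rng = random.Random(0)
train = make_pairs(rng, 40)  # labels are used to learn the cutoff
test = make_pairs(rng, 40)   # labels are used only to score the learned rule
cutoff = train_threshold(train)
print(f"learned cutoff = {cutoff:.3f}, held-out accuracy = {accuracy(cutoff, test):.2f}")
```

Keeping the test pairs out of training is what lets the held-out accuracy stand in for performance on new evidence. Hard cases such as consecutively manufactured barrels would show up as overlapping match and non-match scores, which a single cutoff could not fully separate.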

"Once we've trained the algorithm, we give it data without telling it whether it's a match or a non-match, and we can see how well the algorithm performs," Hare said.

As the database grows in size, the algorithms will become increasingly accurate. And as it grows in variety to include different types of ammunition and weapons, the algorithms will become more broadly useful. The database already contains test fires from consecutively manufactured firearms, and Hare is testing whether the algorithms can reliably distinguish between them.

Perhaps most importantly, Hare's code is open source. That means other researchers can check for bias or error in the algorithms, and correct any that are found.

"In high-profile situations where there's a lot at stake, it would be good if everyone knew exactly what the algorithms were doing," Hare said.

The FBI recently agreed to contribute a large dataset of test fires from its reference collection of several thousand firearms, which will greatly increase both the size of the database and the diversity of firearms it covers. Zheng hopes that other forensic labs with 3-D microscopes will start uploading their data to the database as well. The database is now available, but the real work has just begun.

*This text was corrected from "open-source" to "open-access."