When your cell phone talks to your cable TV connection, the conversation can get ugly. In certain conditions, broadband 4G/LTE signals can cause significant interference with telecommunications equipment, resulting in problems ranging from occasional pixelated images to complete loss of connection to the service provider.

That's the conclusion of a suite of carefully controlled tests recently conducted by Jason Coder and John Ladbury of PML's Electromagnetics Division, in conjunction with CableLabs, an industry R&D consortium. The goal was to explore ways to quantify how 4G broadband signals may interfere with cable modems, set-top boxes, and the connections to and from them, and to suggest methods and identify issues of importance in defining a new set of standards for testing such devices.

The PML team found that, of the devices tested, nearly all set-top boxes and cable modems by themselves were able to handle 4G interference expected from typical cellular devices. But the cables, connectors, and signal splitters in a residential set-up can allow signals to leak into the signal path. Retail two-way splitters performed poorly, and half of the consumer-grade coaxial cable products tested failed – that is, had substantial signal errors – at or below the normal signal levels.

"These days, everybody's got a cell phone, and a vast number of them are 4G," says Coder of the Radio-Frequency Fields Group. "It's a common occurrence to check your phone or use your tablet while watching TV. This is a problem that cable companies and cell phone manufacturers hadn't really considered in the past, when current standards were written. After all, a cable TV signal is completely conducted, and cell phones are entirely wireless. So interference between them didn't seem to pose much of a threat. But now we have a situation where there are so many devices radiating either at the same time or in rapid succession that it starts to be noticeable to other devices."

That situation is poised to get worse. At present, the principal operating frequencies of 4G cell phones are in the range of 700 MHz to 800 MHz. Next year, the Federal Communications Commission is scheduled to start auctioning off spectrum around 600 MHz. If cell phones move into the newly available lower frequencies, they will start to overlap with a much larger portion of the cable TV frequency range.

"More and more people," says Ladbury, "are beginning to think that the current electromagnetic compatibility (EMC) standard needs revision." One such standard, IEC 61000-4-3, ratified in 2006 and amended most recently in 2010, specifies that devices should be tested in an anechoic chamber using a simple signal coming from only a few different angles.

"When that standard was written," he says, "a typical communications signal was much different. It was an AM signal, and they determined a test method suited to the analog cell phone standards of the day and the few wireless devices that there were. The devices experiencing interference today are much more complicated. In the early stages of the standard, they were concerned with interfering with hearing aids, RS-232 communication, and analog television. Now we have high-speed communication systems and digital television. And the characteristics of these signals are beginning to look more and more like LTE signals."

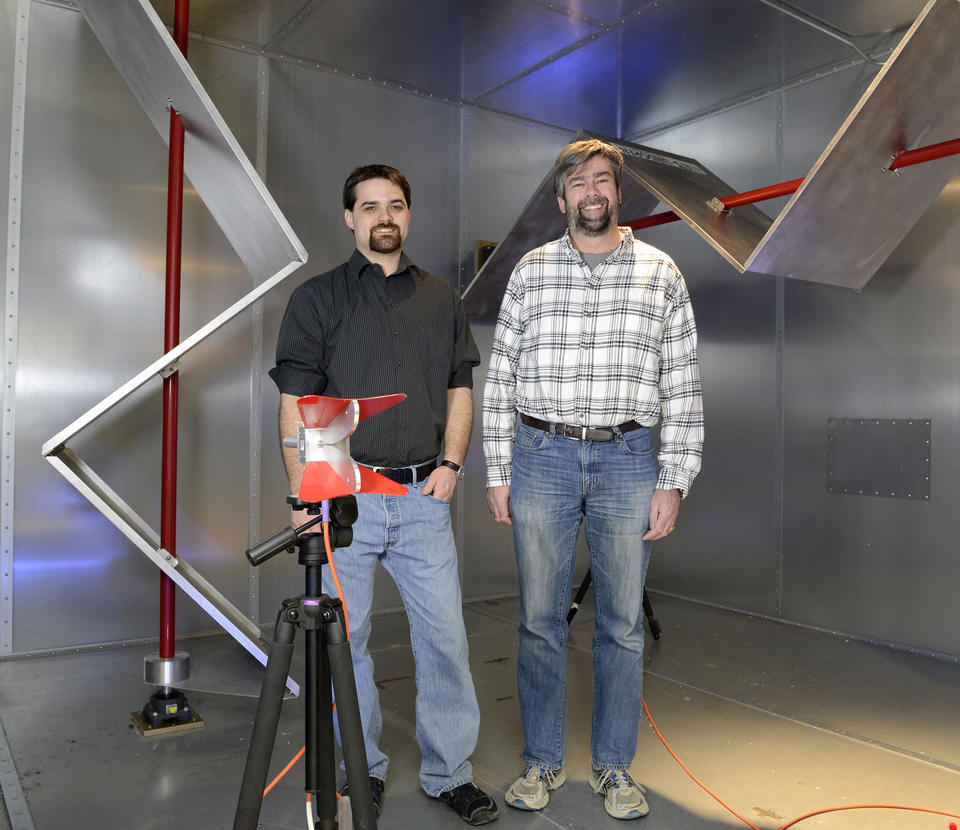

The PML team conducted its tests at several frequencies between 550 MHz and 900 MHz that represent a sample of current and future frequencies at which cable systems and cell phones must coexist. Instead of an anechoic chamber, they employed a reverberation chamber in which large horizontal and vertical "stirrers" (see photo above) are rotated to expose the devices under test to radiation from numerous angles of incidence and polarization. "It's a controlled space that provides a sort of worst-case environment where all the power that your cell phone transmits stays in the cavity and bounces around and eventually comes in contact with the cable modem or set-top box," says Ladbury. "In many respects, it's more like the real world than an anechoic chamber. But ultimately you need a variety of environments."

For the purposes of this study, the researchers defined device failure as occurring when a TV picture became pixelated or a cable modem accumulated a number of uncorrectable packet errors. But they raise the question of whether that is the right criterion, noting that one might alternatively define failure as occurring at the point at which the user notices a problem. (But even then, how much data degradation is "too much"?) The researchers, who are in the process of publishing their results, expect to bring these and other questions to conferences and technical committees to provide a starting point for consideration of a new EMC standard.

Meanwhile, Ladbury says, improved consumer education and understanding could help minimize problems. "When the cable company installs cable in your house, they typically do it the right way and use quality components. The problem is that, once they leave, you have the freedom to change things – get a new TV, add a line splitter, run new cable, and so forth. And many consumers don't have the knowledge to make the right decisions in those circumstances.

"And even if they do, adequate products may not be easily available. For example, we tested several retail-grade cable splitters and all of them failed by our standards. So for many people, the only option is to go to a trade-grade or professional-grade splitter. And they don't sell those down at the mall.

"Another thing most people aren't aware of is the effect of a loose connector. We tested set-top boxes that manufacturers spent a lot of money designing. They had fantastic shielding, and top-quality cable attached. But we found that if you back off the connection threads even half a turn, the performance can degrade substantially."

Clearly, much more understanding is needed before, as Coder puts it, the emerging Internet of Everything becomes "the interference of everything."