Taking Measure

Just a Standard Blog

No statistician's office is complete without a whiteboard!

Now, if you’re familiar with either statistics or J.R.R. Tolkien, I know the title grabbed your attention. And if not, don’t worry, all will become clear in time, but I’ve always wanted to reference “The Lord of the Rings” in a title (and scientific papers provide little opportunity for that!).

Statistics is a way of learning and making decisions when faced with uncertainty—in other words, all the time. There are two primary types of statistical methods, Bayesian and frequentist, and some statisticians have strong opinions about both. I apologize if I have omitted someone’s preferred approach for dealing with uncertainty; I know there are others.

Despite the title above, I do not really think that one method is better than the other, and I think the world is better off by having both at our disposal. For those of you who have a different opinion, note that there is a comments section below. I’d like to hear your thoughts and if I am able to reply, I will. (Note also that I will be loose with terminology on purpose as this is not a technical paper, and it will make the examples a little easier to follow.)

A simplified version of the scientific method is 1) make an observation or ask a question; 2) form a hypothesis; 3) test the hypothesis through an experiment; 4) revise the hypothesis if necessary and go back to 3); 5) draw conclusions and publish your result; and, hopefully, 6) collect your Nobel Prize (when will they add a mathematics and statistics category, I wonder?). A well-designed experiment provides as much information as possible. But because real-world data are noisy, statistical techniques are important for comparing hypothesis to experiment. Did one measured property really increase while another decreased, or did the observed pattern just appear by chance?

Related techniques can be used for prediction, too. Think about warranties on consumer products, such as the TV I just bought. A TV company would probably not profit, or even stay in business, if it warrantied all the televisions it made for 50 years. (But who knows? Perhaps today’s televisions are that good.) Instead, the company will conduct experiments and use statistical models to make statements such as, “90 percent of Model XYZ televisions will work perfectly for two years.” This is an area of statistics known as reliability.

So, all that said, what are frequentism and Bayesianism? From a philosophical point of view, they are just different ways to interpret the probability of something happening. A frequentist philosopher interprets probability as how often an event will occur in the long run. For example, you generally can flip a coin over and over, and if you did you could determine what percentages of the flips would be heads or tails over time. But wait, I can’t be quite that cliché. I work at the National Institute of Standards and Technology (NIST), so let’s talk about Standard Reference Materials (SRMs) instead.

An SRM is a material that NIST scientists have carefully measured and certified as containing specific amounts of certain substances or having certain measurable properties. Industry and government organizations can use these materials to calibrate or perform quality-control checks on their measurement equipment.

For instance, if a food manufacturer wanted to know how much fat was in the peanut butter that they are producing, something they need to know in order to fill out the nutrition label accurately, they could buy our peanut butter SRM, which has a certified value for the amount of fat to test their measurement equipment. If they get a significantly different value than the one on the certificate when they measure the SRM, they know that something is wrong with their instrument or measurement process that will likely affect the measurement of their own product as well.

If you look at an SRM certificate, which lists all the certified values, you might find a phrase like this, “[T]he expanded uncertainty corresponds to a 95 percent coverage interval.” But what does “95 percent coverage interval” mean? A frequentist philosopher might say it means that “if we conduct the same measurement experiment 100 times and analyze the data produced in the same way, we expect that 95 out of the 100 experiments will produce intervals that include the true value of the amount of substance we are interested in knowing about.”
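The frequentist reading of a coverage interval can be checked with a small simulation. The sketch below is only an illustration: the true value, the measurement standard deviation, the number of measurements per experiment, and the function name `coverage_fraction` are all assumptions made for the example, and the standard deviation is treated as known so that a simple normal interval applies.

```python
import random
import statistics

def coverage_fraction(true_value=51.6, sigma=1.0, n=10, experiments=2000, seed=1):
    """Fraction of simulated experiments whose 95% interval contains true_value."""
    rng = random.Random(seed)
    z = 1.96  # normal quantile for a two-sided 95% interval (sigma assumed known)
    half_width = z * sigma / n ** 0.5
    hits = 0
    for _ in range(experiments):
        # One "experiment": n noisy measurements of the same true value.
        data = [rng.gauss(true_value, sigma) for _ in range(n)]
        mean = statistics.fmean(data)
        if mean - half_width <= true_value <= mean + half_width:
            hits += 1
    return hits / experiments

print(coverage_fraction())
```

The printed fraction should land close to 0.95. Note that the statement is about the procedure across many repeated experiments, not about any single interval.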

The Bayesian philosopher might reply, “That is a reasonable interpretation, so long as the measurement experiment can be perfectly repeated. What about events that are not repeatable? For example, NASA’s InSight spacecraft will land on Mars only once. But beforehand, engineers selected a target in which to land and quantified the uncertainty about hitting that target with a certain probability. A pure frequency interpretation is not rich enough to cover such situations.” Indeed, there are many situations like this where it is necessary to express uncertainty in the prediction of a one-off outcome beforehand. The Bayesian philosopher might say, “It is preferable to think of probability as a way for a person to express uncertainty in his or her knowledge of some true state of nature or of a future outcome.”

Resolving these differences is not the point of this post, nor do my thoughts dwell there very often. No matter how you interpret probability, its mathematical, axiomatic foundations are without question. Both frequentist and Bayesian philosophies base their techniques on the same foundations, and as we’ll see, the techniques, from a practical perspective, are quite similar. Thus, they often lead to the same conclusions.

The main ingredient in a frequentist analysis is the likelihood, which is a function of unknown parameters that represent scientific quantities of interest. For example, coming back to the peanut butter SRM, one of the parameters of the likelihood will be the true fat concentration of the peanut butter. Another might be the true variability of the measurements of fat concentration due to uncontrollable influences such as minor temperature variations in the laboratory.

We interpret the likelihood as the probability of getting the data you have observed (measurements of fat concentration) given specific values of the parameters, in this case, the actual concentration of fat and measurement variability. A single best estimate and interval for the parameters of the likelihood are typical outputs of a frequentist analysis, e.g., there are 51.6 grams plus or minus 1.4 grams of fat per 100 grams of peanut butter being measured. These would correspond to the certified value and coverage interval on an SRM certificate.
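To make the likelihood a little more concrete, here is a minimal sketch that assumes the measurements follow a normal distribution. The six measurement values are invented for illustration (they are not real SRM data), and the names `log_likelihood`, `mu_hat` and `sigma_hat` are just labels chosen for this example.

```python
import math
import statistics

# Hypothetical fat measurements in grams per 100 grams (not real SRM data).
measurements = [51.2, 52.3, 50.9, 51.8, 52.1, 51.3]

def log_likelihood(mu, sigma, data):
    """Log of the normal likelihood of the data for given parameter values."""
    return sum(
        -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)
        for x in data
    )

# Under the normal model, the likelihood is maximized at the sample mean
# and at the population-style standard deviation.
mu_hat = statistics.fmean(measurements)
sigma_hat = statistics.pstdev(measurements)

# 95% interval for the mean using the Student t quantile for 5 degrees of freedom.
t_quantile = 2.571
half_width = t_quantile * statistics.stdev(measurements) / math.sqrt(len(measurements))

print(f"best estimate: {mu_hat:.1f} +/- {half_width:.2f} g per 100 g")
print(log_likelihood(mu_hat, sigma_hat, measurements))
```

Trying other values of `mu` or `sigma` in `log_likelihood` gives a smaller number, which is what it means for the best estimate to maximize the likelihood.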

There are two primary components to a Bayesian analysis. The first is the same likelihood that appeared in the frequentist analysis. The Bayesian data analyst interprets the likelihood as the probability of the observed data for specific parameter values, just as the frequentist, even though they may interpret probability differently.

The second ingredient in a Bayesian approach is the prior probability distribution, which is commonly interpreted as the analyst’s degree of belief in specific parameter values BEFORE observing data. In the peanut butter SRM example, BEFORE any measurements of fat concentration are made, the prior distribution would describe what is already known about the true fat concentration of the peanut butter. This is perfectly sensible because the ingredients comprising the peanut butter as well as their relative amounts are known when the peanut butter is made. The prior distribution can be used to make statements like, “the probability that the fat concentration is between 45 and 55 grams per 100 grams is 99 percent,” for example. The likelihood and prior probability distribution are combined using Bayes’ rule to produce the posterior probability distribution, which gives the analyst’s degree of belief in specific parameter values AFTER observing data. When summarizing the results of a Bayesian analysis, it’s typical to provide a single best estimate and an interval estimate, just as for the frequentist case.
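Here is one way the combination of prior and likelihood could be sketched numerically. Everything specific in it is an assumption for illustration: the six measurements are invented, the measurement standard deviation `sigma` is treated as known, and the prior is taken to be a normal distribution centered at 50 whose spread puts about 99 percent of its probability between 45 and 55. The posterior is approximated on a grid of candidate fat concentrations rather than derived with algebra.

```python
import math

# Hypothetical fat measurements in grams per 100 grams (not real SRM data).
measurements = [51.2, 52.3, 50.9, 51.8, 52.1, 51.3]
sigma = 0.6  # assumed known measurement standard deviation

# Assumed prior: normal, with about 99% of its probability between 45 and 55.
prior_mean, prior_sd = 50.0, 5 / 2.576

def normal_pdf(x, mean, sd):
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))

def grid_posterior(data, grid):
    """Bayes' rule on a grid: posterior is proportional to prior times likelihood."""
    weights = []
    for mu in grid:
        prior = normal_pdf(mu, prior_mean, prior_sd)
        likelihood = math.prod(normal_pdf(x, mu, sigma) for x in data)
        weights.append(prior * likelihood)
    total = sum(weights)
    return [w / total for w in weights]

def credible_interval(grid, probs, level=0.95):
    """Equal-tailed interval read off the cumulative posterior probabilities."""
    tail = (1 - level) / 2
    cumulative = 0.0
    lower = upper = None
    for mu, p in zip(grid, probs):
        cumulative += p
        if lower is None and cumulative >= tail:
            lower = mu
        if upper is None and cumulative >= 1 - tail:
            upper = mu
    return lower, upper

grid = [40 + 0.01 * i for i in range(2001)]  # candidate values from 40 to 60
posterior = grid_posterior(measurements, grid)
post_mean = sum(mu * p for mu, p in zip(grid, posterior))
lower, upper = credible_interval(grid, posterior)
print(f"posterior mean {post_mean:.2f}, 95% interval ({lower:.2f}, {upper:.2f})")
```

The posterior mean and interval play the same role as the single best estimate and interval estimate described above.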

How is it possible that Bayesians and frequentists can arrive at the same scientific conclusions? After all, frequentists do not choose a prior probability distribution. It turns out that if the observed data provide strong information relative to the information conveyed by the prior probability distribution, both approaches yield similar results. So, why might the observed data provide stronger information than the prior distribution? Recall how to interpret the prior probability distribution. It is the probability of specific parameter values BEFORE observing data. A common cautious approach is to spread prior probability over many parameter values, assigning zero or very low probabilities only to implausible values. A prior spread that widely favors no particular value very strongly, so even a modest amount of data can dominate it.
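The effect of spreading out the prior can be seen in a few lines. This sketch assumes a normal likelihood with known standard deviation and a normal prior, a textbook case where the posterior mean is a precision-weighted average of the prior mean and the sample mean. The numbers (a sample mean of 51.6 from 6 measurements, a deliberately off-target prior mean of 45) and the function name `posterior_mean` are made up for the example.

```python
def posterior_mean(sample_mean, n, sigma, prior_mean, prior_sd):
    """Posterior mean for a normal likelihood (known sigma) and a normal prior."""
    prior_precision = 1 / prior_sd ** 2   # how strongly the prior speaks
    data_precision = n / sigma ** 2       # how strongly the data speak
    weighted_sum = prior_precision * prior_mean + data_precision * sample_mean
    return weighted_sum / (prior_precision + data_precision)

# Widen the prior and watch the posterior mean move toward the sample mean.
for prior_sd in (0.1, 1.0, 10.0, 100.0):
    estimate = posterior_mean(51.6, n=6, sigma=0.6, prior_mean=45.0, prior_sd=prior_sd)
    print(prior_sd, round(estimate, 3))
```

With a very narrow prior the estimate stays near 45. With a wide one it is practically the sample mean, which is also the frequentist best estimate in this setup.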

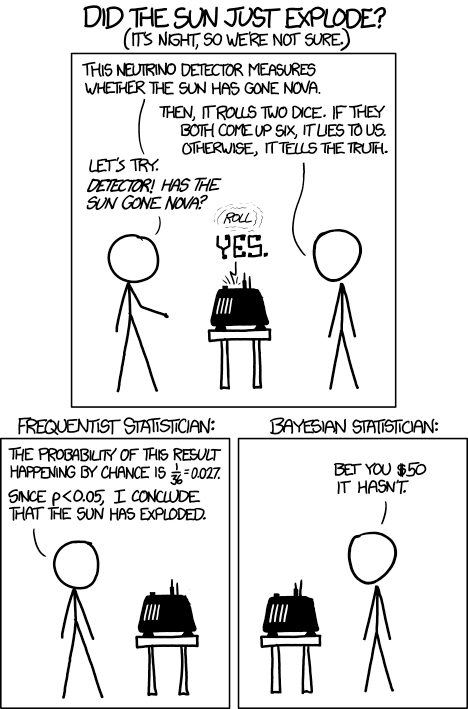

Why then should someone go to the trouble of choosing a prior distribution if it’s inconsequential? The answer, of course, is that it’s not always inconsequential! As with many scientific debates, Randall Munroe has weighed in via his XKCD webcomic “Frequentists vs. Bayesians” (#1132) shown below. The observed data, in this case, is the detector answering “yes,” which could mean either that the machine rolled two sixes and is lying (a little less than a 3 percent chance) or that the sun really has exploded. Because it’s so unlikely that the machine is lying, the frequentist Cueball concludes that the sun has indeed gone nova. Bayesian Cueball, however, acting upon prior knowledge about the sun’s projected lifespan and the fact that both statisticians remain in perfect health, has attached prior probabilities to the competing hypotheses, with the hypothesis that the sun has not exploded receiving a very large share of the prior probability. Upon combining the information contained in the yes answer with the information contained in the prior, Bayesian Cueball decides to disagree with frequentist Cueball and is willing to bet on it!
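The Bayesian side of the cartoon can be worked through with Bayes’ rule. The detector lies only when it rolls two sixes, so it says “yes” with probability 35/36 if the sun has exploded and 1/36 if it has not. The prior probability of a nova is not given in the cartoon, so the one-in-a-billion figure below is purely an assumed placeholder, as is the function name `posterior_nova`.

```python
from fractions import Fraction

def posterior_nova(prior_nova):
    """Probability the sun has exploded, given that the detector answered yes."""
    p_yes_given_nova = Fraction(35, 36)     # detector tells the truth
    p_yes_given_no_nova = Fraction(1, 36)   # detector rolled two sixes and lies
    numerator = p_yes_given_nova * prior_nova
    evidence = numerator + p_yes_given_no_nova * (1 - prior_nova)
    return numerator / evidence

# Assumed prior: one in a billion that the sun exploded just now.
prior = Fraction(1, 10**9)
print(float(posterior_nova(prior)))
```

Even after a “yes,” the posterior probability of a nova stays tiny under this prior, which is why Bayesian Cueball is happy to take the bet. Only a prior that already took the nova seriously would be pushed by the detector toward believing it.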

Randall’s cartoon is obviously an exaggeration to make his point and be funny. A frequentist’s hypothesis test would never be constructed this way. However, there are many real problems for which the prior probability distribution can have a large positive impact as opposed to the negative impact staunchly frequentist statisticians may fear.

SRMs, again, provide a good context for discussion. For some SRMs, information from two or more measurement methods is combined to calculate the certificate value and coverage interval. Think of a ruler and a tape measure, both of which can be used to measure the length of something. The prior distribution can be used to quantify the idea that these methods should produce similar measurements. They are targeting the same quantity being measured, they have been vetted by NIST scientists and found to be reliable, etc. Using the prior distribution to quantify those ideas can have an important positive impact.

Frequentist and Bayesian data analysis methods often lead to equivalent scientific conclusions. When they don’t, I think the focus should be on understanding why the prior probability distribution has such a large influence, and if that influence is sensible. In the XKCD cartoon, the prior distribution saved the day (or at least earned the Bayesian $50). In other cases, it could lead you astray.

In the endeavor of learning about our universe through observation, experimentation and analysis of the data we collect, it does not seem wise to me to limit the set of tools that you are willing to employ. My mindset is to broaden my toolbox as much as I can and to the best of my ability to understand the strengths and weaknesses of each of the tools available for my use.

Comments

Hello. I was looking at a jar of "Peter Pan" peanut butter and I read the ingredients label. The word I found was rapeseed. Then I searched and saw how it's getting popular in the news, with more stories and articles online. What made you use that as an example in this paper? How could I ask you more questions? Or have a conversation with an observational/experimental purpose? Thank you. Email me if you read this. [email protected]

Cynthia,

The ingredient you mention is now known more commonly as "Canola" (as in canola oil, which is becoming more popular because it has more monounsaturated fats, which are considered healthier by many).

Hello Adam,

Since you mentioned flipping coins in the article,

quote: "For example, you generally can flip a coin over and over, and if you did you could determine what percentages of the flips would be heads or tails over time."

I am interested in whether it is possible to throw a coin under certain identical physical conditions so that the result of the throw is always the same, heads or tails.

All the best,

Željko Perić

Hi Zeljko,

I've discussed the idea with colleagues in casual conversation, but I'm not aware of any formal work with that goal. If you are aware of any, I'd like to know about it. In the same spirit, someone might ask more generally if anything is truly random. Said another way, you might ask, with enough information, is everything completely predictable? I think that physicists (maybe quantum physicists) have wrestled with the more general question. But I don't feel confident to comment one way or the other.

Thanks for the answer.

Dr. Pintar, Thanks for the clear and cogent comparison. I'm a "practical" statistician, which means I've used stats methods to solve industrial problems (make decisions) with less reliance on theory. I don't place myself in either camp; personally, I dislike divisiveness among scientists (I enjoy discourse, though).

As we're seeing in the science literature, reliance on p-values has more potential for confusion and poor decision-making than originally thought. As an experimentalist in chemistry & engineering, I've always tried to use some "Bayesian thinking" as the leavening in whatever my (mostly Frequentist) software provides me for guidance.

It's good to see Bayesian methods and thinking getting a fairer shake than in the decades since Bayes/Laplace. Whether there's a true fusion of the philosophies, I cannot say, but I've always found using a larger toolset to be valuable in practice. Maybe, like the Nova Detector, there's a way to test the hypothesis about this possible fusion of ways? Hhhmmmm....

Adam, great article, and anyone taking a class in Bayesian methods should read this first! In my opinion, universities should offer more courses on Bayesian methods. Certainly there are situations where utilizing prior information can be beneficial.

Nice article Adam, sorry I took a while to get around to reading it.

In many industrial measurement uncertainty problems there is a wealth of prior estimates in the form of expert opinion of credible values for the measurand. It seems wasteful to ignore these, but assigning a distribution is difficult because there are no unbiased experts. In my experience, the approach is typically to exclude these from the metrologist's uncertainty estimate and instead use them in the subsequent interpretation of the "measurement". Whilst comforting for the metrologist, this approach always seems to be passing the buck a little. I know this has been a topic of debate amongst the GUM author community but would welcome your thoughts.

Thank you, Pete!

I agree with you that there often exists prior information, but that it may be difficult to encode into a probability distribution. There do exist literature and tools on prior elicitation to help. Relatedly, there is a large body of literature on default prior distributions, which may also be called noninformative or weakly informative.

I would not recommend using subject matter expertise only when interpreting a measurement. Data are noisy. If we can improve a measurement by combining data with subject matter expertise, I think we should. However, your point about biased experts is very good. I would phrase it slightly differently. I would say that people are often more confident about what they know than they should be. Because of that, in part, an analysis is never complete without a careful model assessment, which for a Bayesian analysis includes the prior. A model assessment should at least compare predictions to held-out data, which is often referred to as cross validation.

I am not part of the GUM author community, but I feel like the GUM fully embraces subject matter expertise, and thus prior distributions. The GUM lists two classes of methods for estimating uncertainty components, Type A and B. Type A methods for estimating an uncertainty component are based on data, but I think a Bayesian method can still be a Type A method. Type B methods are wholly based on subject matter expertise.

Looking at this as a physicist I would say that the Bayesian paradigm is the most general one, of which the frequentist paradigm is a special case (where repeated experiments can exist or be imagined).

That does not put the two on the same level, but still makes clear that there is a use for either one.

Just like in physics you will apply classical mechanics where conditions are such that more advanced theories are not necessary. That also means that if both paradigms were applied and gave different answers, I would always go for the Bayesian answer.

Hi Charles,

Thanks for the comment.

I understand your point about generalization, and I sometimes think about things in that way too. However, I would not necessarily side with the Bayesian answer when there is a disagreement. As I said in the post, each approach is based upon a different set of assumptions, perhaps just slightly different, but different still. The sets may not be nested. To choose one result above another, you need to argue in favor of one set of assumptions over another.

I am still not clear on the difference. However, I chuckle at your example of the ruler and the tape measure.

Mainly because I use them both frequently, and they are "close enough" for my work that I do not have to take into account the uncertainty principle. That is always good for discussion, over pizza and beer preferably.

Peace,

Mike G.