Taking Measure

Just a Standard Blog

In the lab, testing new crystals for generating quantum entanglement. Problems with an experiment, such as a misaligned laser in the experimental setup, are common.

I am a scientist. I am often wrong, and that’s okay.

You may have heard about major errors in science and engineering that made headlines, like the collapse of the Tacoma Narrows Bridge, aka “Galloping Gertie,” or the 1999 crash of the Mars Climate Orbiter. Or maybe you’ve seen the recent video from SpaceX, “How Not to Land an Orbital Rocket Booster.” You may not realize how often scientists are wrong, but being wrong is actually part of the process of doing science. The trick is to catch errors before they leave the lab, and certainly before they make front-page news, though that obviously doesn’t always happen.

Thomas Edison famously said, “Genius is one percent inspiration and 99 percent perspiration.” Why so much perspiration? Because of all the effort that goes into testing your inspirations, finding out that they’re wrong or don’t work, and then trying again. This is brilliantly illustrated by something Edison supposedly also said about making lightbulbs: “I have not failed 700 times. I’ve succeeded in proving 700 ways how not to build a lightbulb.”

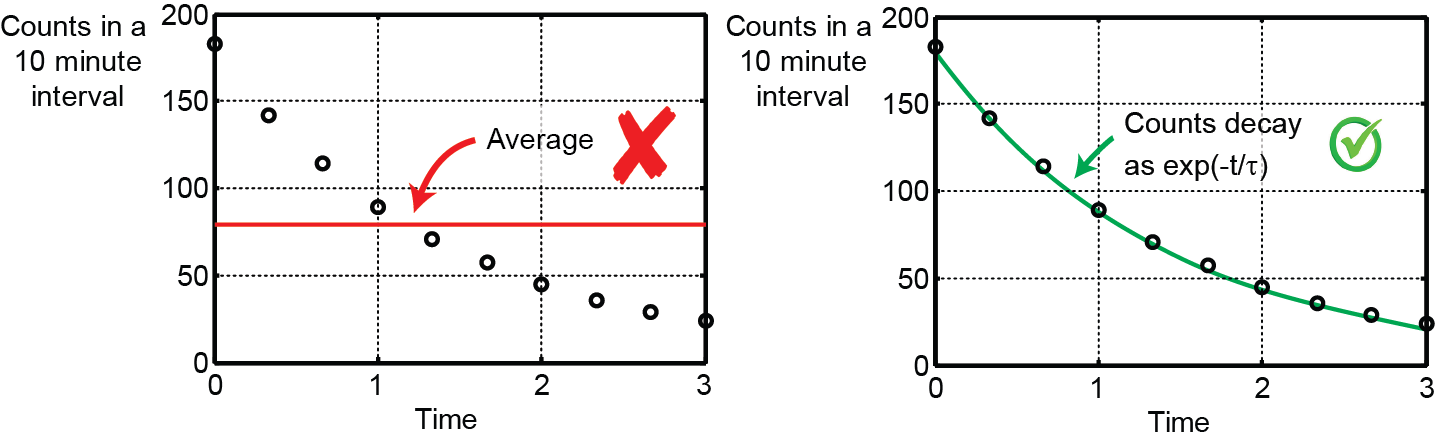

I have had many moments of being wrong in my scientific career. One of the most memorable was during a hands-on exam in school, when I was given equipment to observe and measure the radioactive decay of a certain isotope. I remember thinking that I needed to repeat the measurement several times to find the average decay rate. I diligently recorded the number of clicks registered by the Geiger counter during a fixed time interval, and then I averaged the results. In doing this averaging, I completely overlooked the fact that the rate of radioactive decay decreases over time. I had incorrectly assumed that this experiment would share a property of most science experiments: that the results (in this case, the decay rate) wouldn’t change over time. When I heard the other students chatting after the exam, I immediately realized my mistake, but it was too late. My answers on the exam were completely wrong. I was mortified.
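For readers who want the arithmetic behind that mistake, here is a short sketch of why averaging hides the decay. It assumes an ideal detector with a constant efficiency ε (a symbol introduced here for illustration), no background counts, no dead time, and back-to-back counting intervals of length Δ, so that t_k = kΔ.

```latex
% Undecayed nuclei at time t, with decay constant \lambda
N(t) = N_0 e^{-\lambda t}, \qquad t_{1/2} = \frac{\ln 2}{\lambda}

% Counts recorded in the k-th interval [t_k, t_k + \Delta]
% (assumes constant detection efficiency \varepsilon)
C_k = \varepsilon \left[ N(t_k) - N(t_k + \Delta) \right]
    = \varepsilon N_0 \left(1 - e^{-\lambda \Delta}\right) e^{-\lambda t_k}

% Taking logarithms gives a straight line in t_k with slope -\lambda
\ln C_k = \ln\!\left[\varepsilon N_0 \left(1 - e^{-\lambda \Delta}\right)\right] - \lambda t_k

% Averaging n back-to-back intervals (t_k = k\Delta), using the geometric sum
\bar{C} = \frac{1}{n}\sum_{k=0}^{n-1} C_k
        = \frac{\varepsilon N_0 \left(1 - e^{-\lambda n \Delta}\right)}{n}
```

The logarithm of the counts is linear in time, so a straight-line fit recovers λ. The average, by contrast, is just the total number of detected decays over the whole run divided by n: a single number that describes no particular moment of the decay.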

Looking back, this experience taught me several lessons. First, I learned that science can be humbling. I shouldn’t be overly confident in my conclusions because there’s always a chance I might be wrong, something that Mother Nature will no doubt reveal to me (or my colleagues) at some point. More importantly, though, the experience taught me that it’s okay to be wrong if you are willing to accept that possibility and make corrections. In this case, I had followed the scientific method. I hypothesized that the rate of radioactive decay did not change over time. I tested the hypothesis by observing and recording the rates. I analyzed the data, but I failed to notice the downward trend, and I ran out of time before I could correct myself. With more time, I probably would have caught my error and revised my hypothesis and data analysis accordingly.
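The hypothesize, test, and analyze steps for a decay measurement can be sketched in a few lines of code. This is a minimal Python sketch on synthetic, noise-free counts (a hypothetical source with a 5-minute half-life, counted in 1-minute intervals), not real exam data. It checks the “constant rate” hypothesis by fitting a straight line to counts versus time and comparing the slope with its standard error, then estimates the half-life from a line fit to the logarithm of the counts. The function names and the 3-standard-error threshold are illustrative choices, not a standard procedure.

```python
import math


def linear_fit(xs, ys):
    """Ordinary least-squares fit of y = a + b*x.

    Returns the intercept a, the slope b, and the standard error of b.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = mean_y - b * mean_x
    # Residual variance uses n - 2 degrees of freedom (two fitted parameters).
    ss_res = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    stderr_b = math.sqrt(ss_res / (n - 2) / sxx)
    return a, b, stderr_b


def rate_is_constant(times, counts, threshold=3.0):
    """Hypothetical rough check: keep the constant-rate hypothesis unless the
    fitted slope differs from zero by more than `threshold` standard errors."""
    _, slope, stderr = linear_fit(times, counts)
    if stderr == 0:
        # Data lie exactly on a line; constant only if that line is flat.
        return slope == 0
    return abs(slope / stderr) < threshold


def half_life(times, counts):
    """Estimate the half-life from a straight-line fit to ln(counts).

    For exponential decay the slope of ln(counts) is -lambda.
    All counts must be positive for the logarithm to be defined.
    """
    _, slope, _ = linear_fit(times, [math.log(c) for c in counts])
    return math.log(2) / -slope


# Synthetic data: counts in back-to-back 1-minute intervals for a
# hypothetical source with a 5-minute half-life (noise-free, rounded).
minutes = list(range(10))
counts = [round(1000 * 2 ** (-t / 5)) for t in minutes]

print("average counts per interval:", sum(counts) / len(counts))
print("constant rate?", rate_is_constant(minutes, counts))
print("estimated half-life (min):", round(half_life(minutes, counts), 2))
```

With these synthetic counts the fitted slope sits many standard errors below zero, so the constant-rate hypothesis is rejected, and the log fit recovers a half-life close to the 5 minutes used to generate the data. Real Geiger counts fluctuate randomly (they follow Poisson statistics), so a weighted or maximum-likelihood fit would be the more careful choice for actual measurements.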

In my current work, I often follow the same basic framework. I have a hypothesis that I want to test. I do experiments and analyze the data to look for evidence that will confirm or disprove that hypothesis. Many times, the trend I find does not match my expectations, so I go back and re-examine my hypothesis and/or check whether I’m doing the experiment correctly. Problems with an experiment are common because it’s easy to overlook factors like the temperature stability or uniformity inside an oven, or the alignment of a laser in the experimental setup. A lot of effort in the laboratory is spent troubleshooting and repeating experiments before arriving at a conclusion.

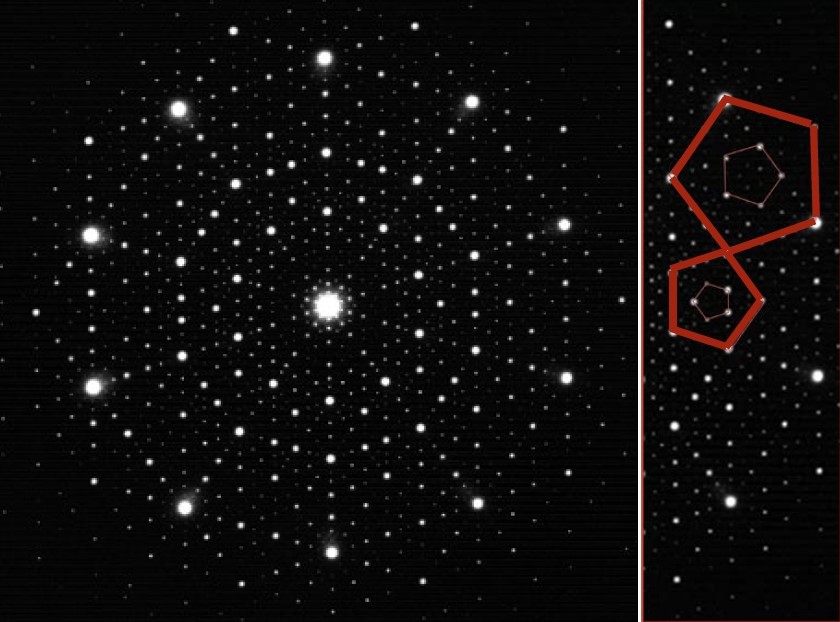

This vigilance against errors is the key ingredient in making advances in science. One of the greatest discoveries made at NIST was Dan Shechtman’s discovery of quasicrystals, for which he received the 2011 Nobel Prize in Chemistry. In 1982, while studying electron diffraction patterns from a rapidly solidified aluminum alloy, Dan saw symmetries that, according to the existing theory of crystal structure, were impossible. His observations and hypothesis were opposed both by the prevailing theory and by two-time Nobel Prize winner Linus Pauling, one of the world’s most famous scientists. Shechtman spent more than two years gathering data and debating with colleagues before he was able to publish his work. To do this, he had to painstakingly eliminate all other possible explanations for his measurements, including experimental errors. Dan’s determination (his perspiration) proved both the conventional wisdom about crystal symmetry and a double Nobel Prize winner wrong.

See? Even world-renowned experts can be wrong sometimes! (Pauling, however, never conceded.)
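Why were Shechtman’s patterns considered impossible? The standard argument is the crystallographic restriction theorem, sketched here for a two-dimensional lattice. The specific symmetry is not named above; his well-known patterns showed tenfold rotational symmetry, which this restriction rules out.

```latex
% A rotation by \theta that maps a lattice onto itself is an integer
% matrix R in a lattice basis, so its trace must be an integer.
% The trace is basis-independent; in two dimensions
\operatorname{tr} R = 2\cos\theta \in \mathbb{Z}
\;\Longrightarrow\; 2\cos\theta \in \{-2, -1, 0, 1, 2\}

% Allowed n-fold rotations (\theta = 360^\circ / n)
n \in \{1, 2, 3, 4, 6\}

% Fivefold and tenfold rotations fail the test
2\cos 72^\circ = \frac{\sqrt{5} - 1}{2} \approx 0.618 \notin \mathbb{Z},
\qquad
2\cos 36^\circ = \frac{1 + \sqrt{5}}{2} \approx 1.618 \notin \mathbb{Z}

% In three dimensions the trace is 1 + 2\cos\theta, giving the same condition.
```

Only 1-, 2-, 3-, 4-, and 6-fold rotations survive, which is why a sharp diffraction pattern with tenfold symmetry contradicted the theory of periodic crystals. Quasicrystals escape the restriction because they are ordered but not periodic.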

As this example shows, being wrong is not the same as being incompetent. Whereas incompetence involves being both wrong and lacking the conceptual tools to discover that you’re wrong, it’s okay to be wrong if you are able to realize your error and take steps to both correct and learn from it. Thinking like a scientist involves recognizing that you will occasionally (or more than occasionally) be wrong and knowing how to find out why. Science is a journey, and part of that journey is making errors and being empowered to make changes based on lessons learned.

Even the news-making science errors have had lasting, positive impacts. From the Tacoma Narrows Bridge collapse, scientists learned the importance of wind and aerodynamics for bridges. After the Mars orbiter crash, NASA made changes that enabled the success of the two Mars rovers, Spirit and Opportunity. Making errors in science is just part of the process and allows scientists to learn and broaden what we know. It’s only by being wrong that we ever learn what’s right.

So, to all you scientists and non-scientists, go forth and be wrong! You’ll probably discover something new on your journey.

Comments

Thanks, Kate!

And I'll share it with my daughter, who is studying civil engineering at UMass Amherst. She hates to be wrong!

I'm glad to hear that! Making errors is part of the process of being a scientist or an engineer.

The latent print community in forensics certainly learned a great deal from the Madrid error. Thanks for a fresh perspective and a well articulated commentary.

I hadn't heard the story about the Madrid error. Thanks for sharing!

Fantastic, Paulina!

Thank you, Paulina, for your wisdom, but let us apply it to theoretical physics and to the present Standard Model, which is largely based on a beautiful theory, relativity, which unfortunately came 20 years before de Broglie's discovery of wave-particle duality. So relativity works very well but cannot truly picture reality, because it is not a quantum theory.

What about quantum entanglement, which could have a semi-classical interpretation?

We have to distinguish between facts and interpretations of observations of the invisible subatomic or cosmic world, and especially to open physics and astrophysics to competing ideas.

Competition is healthy in sports, business, and politics. Theoretical science needs it too.

Subtle errors can pop up, for instance, not from mistakes but simply when one forgets Ockham's principle of parsimony.

How can one believe a string theory based on a universe with 11 dimensions?!

Example: it takes two physicists to open a bottle of Bordeaux: one to hold the corkscrew and the second one to turn the universe.

Kind regards,

Claude

P.S. What do you expect to do with entanglement? What do you think about the quantum computer?

Thank you for sharing your experience.

Firstly, two recent big failures came to mind while reading: the faster-than-light neutrino result from the OPERA experiment and the crash of the Schiaparelli EDM lander on Mars.

Secondly, I failed an exam just three days ago because of my lack of knowledge about scientific calculators (I couldn't switch mine to calculate in degrees instead of radians; realising this towards the end of the exam and redoing all those calculations manually ate up my time and my points). Not exactly the same situation, but your article was still a breath of fresh air.

Good luck! (Or is that a proper wish for a scientist? I don't know :))

Refreshing to hear such honesty - well done.

Matin Durrani, Editor, Physics World magazine

Thank you, Paulina,

I routinely cite this blog in my course "Science as a Process" at the University of Oklahoma. One of my students first brought it to my attention. The article is useful in making the point that all hypotheses are tentative. It also emphasizes that the changes in policy concerning SARS-CoV-2 and covid-19 are normal signs of progress, not an instrument of obfuscation!

Jim Estes

Thanks for the comment! That's a great observation: our journey with covid-19 is another great example of scientists testing hypotheses, learning, and correcting course.

LOVE this! I am going to share it with my students at UDC :-)