Taking Measure

Just a Standard Blog

The influence of artificial intelligence (AI) is everywhere in our lives. It helps us pick movies to stream, recommends old friends to reconnect with, and autocompletes our most pressing questions in search engines.

Modern AIs are powerful machines, ingesting reams of data and outputting useful relationships between data points. If you manage a baseball team, you can create an AI model that will take factors like the weather, the opposing pitcher and other aspects of the game situation to determine which batter to substitute to improve your probability of winning. The AI won’t tell you why it selected batter A over batter B over batter C, only that batter A provides the best chance for an optimal outcome like hitting a home run. But, if you had to select the next batter from the stands, its recommendations would be useless.

These are both known problems in modern AIs: They can’t tell you the “why” underlying the relationships they discover and are notoriously poor at predicting outcomes they haven’t seen before. For this reason, scientists often find it hard to trust AI systems because scientists constantly seek to understand the “why” of our observations.

The inverse of a modern AI is the traditional scientific theory, in which physical laws are condensed into elegant mathematical models that can be applied to a wide variety of similar problems. The same classical or “Newtonian” equations that describe the path a baseball takes can be used to describe the motion of the heavens. Moreover, if you know the initial velocity of any object (baseball, football, ham sandwich), you can predict its path without needing to measure something similar first.
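To make that claim concrete, here is a minimal Python sketch of the classical projectile equations. The function names, the 45-degree launch angle and the decision to ignore air resistance and spin are assumptions made for illustration only; a real baseball is strongly affected by both.

```python
import math

G = 9.81  # gravitational acceleration near Earth's surface, m/s^2


def position(speed, angle_deg, t):
    """Return (x, y) in meters at time t seconds, ignoring air drag."""
    theta = math.radians(angle_deg)
    x = speed * math.cos(theta) * t
    y = speed * math.sin(theta) * t - 0.5 * G * t ** 2
    return x, y


def flight_time(speed, angle_deg):
    """Time in seconds until the object returns to its launch height."""
    return 2 * speed * math.sin(math.radians(angle_deg)) / G


def landing_distance(speed, angle_deg):
    """Horizontal distance in meters covered over level ground."""
    x, _ = position(speed, angle_deg, flight_time(speed, angle_deg))
    return x


# The same call works for a baseball, a football or a ham sandwich,
# because mass does not appear in the drag-free equations.
print(round(landing_distance(44.7, 45.0), 1))  # 44.7 m/s is about 100 mph
```

Nothing in these functions was fitted to data, which is why they extrapolate to objects that have never been measured.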

However, if you tried to use the same set of equations to describe the path of an electron in a molecule, which obeys the bizarre rules of quantum physics, then the results would be less than satisfactory. This is a known limitation of traditional science: Different phenomena can be dominated by different sets of rules, and sometimes the connective tissue between physical regimes isn’t well known. For instance, we don’t understand how physics transformed into biology or how biology leads to the rise and fall of civilizations.

Moreover, there are often multiple potential conflicting explanations for an observation. Did the ball curve left because of its spin or because of a turbulent gust of wind? In some sense, science is brittle: The rules work until something unexpected happens, and a great deal of research effort is devoted to teasing out what exactly caused the ball to curve the way it did.

Traditional Science vs. AI

This is the origin of the current tension between AI and traditional science: AI can fit any dataset with exquisite accuracy but without any causality, while traditional science is built around rules that teach us why but can be broken. Truthfully, however, the tension is the result of a flawed decision to view the use of these techniques as mutually exclusive. Instead, a more fruitful approach is to combine the strengths of AI (flexible correlation masters) with the strengths of traditional scientific models (explainers of why) to create a symbiotic relationship.

Think of it this way: With a good understanding of Newton’s laws and AI models that correlate the weather, the pitcher’s arm motion, and how the bat is swung to the likelihood of a batter hitting a home run, you could create a hybrid model that could power a national championship. As an added benefit, knowing the scientific underpinnings would allow confident extrapolation to any batter/pitcher combination (maybe even someone from the stands if they took a few practice swings), and the AI could provide statistical tools to differentiate the effect of ball spin versus a gust of wind.

These exact ideas are currently driving the emerging field of scientific AI. In scientific AI, physical laws are incorporated into AI algorithms, creating a whole that is greater than the sum of its parts. By putting the “why” into our AI, we can generate predictions that allow us to see what influenced how the predictions were made or maybe even explain how the predictions were made in a human-understandable way. This would enable us as scientists to trust those predictions enough to try them out, even if they challenge our worldview.
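One very simple way to picture a physical law being combined with a data-driven component is sketched below in Python. It is not the method used by any particular scientific AI: the drag-free range formula, the single least-squares scale factor `k`, the function names and the made-up measurements are all assumptions chosen to keep the example short.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def physics_range(speed, angle_deg):
    """Drag-free landing distance in meters (the 'why' part of the model)."""
    return speed ** 2 * math.sin(2 * math.radians(angle_deg)) / G


def fit_correction(observations):
    """Least-squares scale factor k minimizing sum((obs - k * physics)^2).

    observations: list of (speed, angle_deg, measured_distance) tuples.
    """
    num = sum(physics_range(v, a) * d for v, a, d in observations)
    den = sum(physics_range(v, a) ** 2 for v, a, _ in observations)
    return num / den


def hybrid_range(speed, angle_deg, k):
    """Physics prediction rescaled by the data-driven correction."""
    return k * physics_range(speed, angle_deg)


# Made-up measurements for illustration only:
# (speed in m/s, launch angle in degrees, measured distance in m)
data = [(40.0, 30.0, 85.0), (42.0, 35.0, 101.0), (45.0, 40.0, 122.0)]
k = fit_correction(data)
print(round(k, 3), round(hybrid_range(44.0, 25.0, k), 1))
```

The learned factor stays interpretable (here it would mostly absorb effects such as drag that the formula leaves out), and the physics keeps the prediction sensible for speeds and angles that never appeared in the data.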

Here, a new problem emerges. Scientific AI is so powerful, flexible and curious that testing its new ideas and separating genuine insights from extrapolation error is now the work of many lifetimes. A scientific AI doesn’t care if it’s wrong; each “error” just means the next set of predictions is better. But as human scientists, we don’t have many lifetimes to accomplish our work. We also like to sleep, eat and spend time relaxing at home, but the AI will update its model and make predictions as fast as we can provide it with fresh data.

Robots to the Rescue

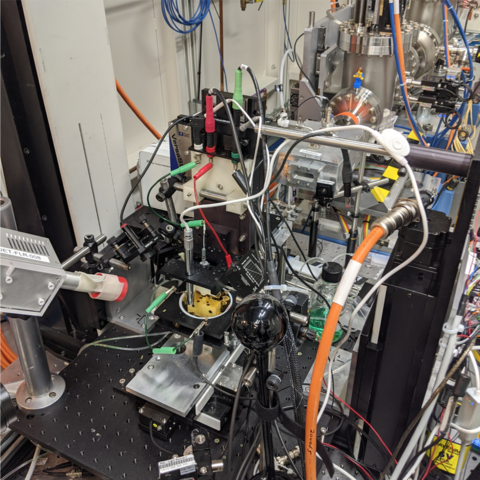

To keep up with the AI, our team has been designing robots that automatically perform the experiments recommended by our scientific AIs with minimal human intervention. The idea is simple. If you build a robot that can control the parameters that affect your experiment and understands the physical rules that lead to your final observation, then you can arrive at your desired outcome (new optimum or new insight) in days instead of decades. In the process, we learn when our model is venturing away from solid ground, because a series of observations is not explainable by any known model, while at the same time potentially finding and learning something about the blind spots in the conventional wisdom.

Our robots don’t look anything like C-3PO. They more closely resemble a collection of syringes, tubes, electrodes and stages stitched together with parts generated at your local maker studio. Our robots are powered by an AI that controls every aspect of our experiments. In our case, this means that our robot identifies a corrosion-resistant coating to test through an understanding of every previous experiment performed in the lab. It then mixes the ingredients, coats them onto a surface, tests the coating properties, and then decides upon the next coating to make based on the results, all without the humans needing to touch a single beaker. Why corrosion-resistant coatings? That is a topic for a different blog post, but suffice it to say that since corrosion mitigation and remediation costs 3.1% of the U.S. GDP, it is a big-time problem.
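The propose, make, test and decide cycle described above can be sketched as a short Python loop. Everything here is hypothetical: `simulated_coating_test` stands in for the robot's mixing, coating and measurement steps, recipes are reduced to a single integer, and the decision rule is a deliberately naive placeholder rather than a physics-aware model.

```python
def run_closed_loop(measure, candidates, n_rounds):
    """Alternate between running an experiment and choosing the next one.

    measure: callable standing in for the robot's mix/coat/test steps.
    candidates: recipes that could be tested (here, plain integers).
    Returns the history as a list of (recipe, score) pairs.
    """
    untested = list(candidates)
    history = []
    next_recipe = untested[len(untested) // 2]  # arbitrary starting point
    for _ in range(n_rounds):
        if next_recipe is None:
            break  # nothing left to test
        untested.remove(next_recipe)
        history.append((next_recipe, measure(next_recipe)))
        best_recipe, _ = max(history, key=lambda pair: pair[1])
        # Placeholder decision rule: try the untested recipe closest to the
        # best one so far. A scientific AI would use a physics-aware model.
        next_recipe = min(
            untested, key=lambda r: abs(r - best_recipe), default=None
        )
    return history


def simulated_coating_test(additive_percent):
    """Hypothetical stand-in for a real measurement; peaks at 70 percent."""
    return -(additive_percent - 70) ** 2


history = run_closed_loop(simulated_coating_test, range(0, 101, 10), n_rounds=6)
best = max(history, key=lambda pair: pair[1])
print(best)
```

The point of the sketch is the structure: every result is appended to a shared history, and the next experiment is chosen from that history without a human in the loop.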

Using AIs, we have already demonstrated a hundredfold increase in the rate of the discovery of new coating materials, including a coating that could be implemented to increase the lifetime of medical stents.

Building and testing scientific AI with robots is exciting! At this moment, dozens of labs across the world are spinning up different and competing AI-driven robotic platforms to discover new, potentially more conductive solar cell coatings, safer electrolytes for lithium-ion batteries, and stronger 3D-printed structures, for example.

As scientists begin to develop interpretable and trustworthy scientific AIs, we have to remember that our models will be influenced by the uncertainty and errors contained in our measurements in ways that are not yet clearly understood. Uncertainty comes from inaccuracy and imprecision either in our observations or in how we make measurements. For instance, a radar gun in need of calibration may measure a pitch speed as 100 mph rather than 95 mph. Uncertainties tend to get carried through calculations in unexpected ways, and so the radar gun uncertainty could result in a model that predicts the ball will travel 193 meters (633 feet) plus or minus 193 m, meaning we have no idea where the ball will go.
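A small Python sketch shows how a speed error is carried through a calculation. It assumes the drag-free range formula R = v² sin(2θ) / g and standard first-order error propagation; the function names, the 45-degree angle and the 5 mph error are illustrative assumptions, not values from a NIST model.

```python
import math

G = 9.81              # gravitational acceleration, m/s^2
MPH_TO_M_S = 0.44704  # exact conversion factor from mph to m/s


def landing_distance(speed, angle_deg):
    """Drag-free horizontal distance in meters over level ground."""
    return speed ** 2 * math.sin(2 * math.radians(angle_deg)) / G


def distance_with_uncertainty(speed, speed_sigma, angle_deg):
    """First-order propagation: sigma_R = |dR/dv| * sigma_v = (2R/v) * sigma_v."""
    r = landing_distance(speed, angle_deg)
    return r, 2.0 * r / speed * speed_sigma


# A radar gun reading of 100 mph that could be off by 5 mph.
r, sigma_r = distance_with_uncertainty(100 * MPH_TO_M_S, 5 * MPH_TO_M_S, 45.0)
print(f"{r:.0f} m plus or minus {sigma_r:.0f} m")
```

Because distance depends on the square of speed, the relative error doubles: a 5 percent speed error becomes a 10 percent distance error even in this toy model. Models with more inputs and stronger nonlinearities can amplify errors far more than this one does.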

As a neutral and nonregulatory agency, NIST has an important role to play in this new field by providing guidance for how to include and measure the impact of uncertainty and errors on scientific AI’s predictions. We do this by generating reference datasets that are designed to put a new algorithm’s ability to handle and explain sources of uncertainty or possible erroneous data through its paces. We also make our robots directly available to U.S. businesses and educational institutes to help them see the benefits of adopting best practices in robot and scientific-AI design.

Success in this area promises immense payoffs: A community consensus for generating AIs that are interpretable, explainable and trustworthy! More scientific discoveries! A better understanding of our world! And the freedom to ask high-risk questions whose answers could be revolutionary! It is awesome to know when I walk into the lab every morning that our work here at NIST has the potential to transform scientific AI into a trustworthy and sustainable tool for maintaining American technological competitiveness.

Comments

I hope you truly enjoyed the read. The intersection of science and machine learning (or, if you prefer, AI) is of great interest to us. I was truly surprised at the overlap there between statistical mechanics (my favorite undergraduate class) and Bayesian statistics. As you continue through your PhD, please keep in mind that there are a great number of people here at NIST who are working in and around this field. Also, we are always looking for talented and inquisitive young scientists to come work with us. (https://sites.nationalacademies.org/PGA/RAP/index.htm)

This is a troubling article that makes excessive claims such as "AI can fit any dataset with exquisite accuracy". However, the long story of AI failures, robot-inflicted deaths, and self-driving car crashes needs to be recognized as a warning sign that more care and oversight are needed. The sycophantic techno-phrasing of "robots to the rescue" should warn readers that this researcher needs to be more cautious. High-throughput testing has been common practice, so adding further automation is useful when done with care, but human researchers are still the ones who retain control and put their names on the published papers.

How is this AI different from the combination of assembling hardware and embedding software onto it? Can anyone throw some light on this?

Great question. There are a number of differences but probably the most important is that one needs to actually create science-aware AI. Your common out of the box AI/ML tools are good at exploiting correlations between features in your data set to an output, but are not aided in any way by the knowledge that we have worked hard to generate over the history of science.

Addressing this should improve model quality and allow us to pursue more interesting scientific questions. Unfortunately, accomplishing this in a general way is hard, and is an active (and emerging?) area of research.

Please note that this involves algorithm development followed by software implementation.

For us, the robot is (1) a means for validating and invalidating different methods of combining science and AI, (2) a framework for investigating scientific model validity and finding where models (unexpectedly) break down, and (3) a platform through which we can interact with and help educate stakeholders. This last bit is important. In principle, the AI-driven robot illustrates for them the merits of developing and refining SciAI (e.g., new and useful materials or insights faster), promotes informed skepticism, trust, and know-how (e.g., when to know things are going smoothly, when they are going awry, how to build the right model for the question asked), and provides them with benchmarking data or a benchmarking tool for their new SciAI algorithms.

--The “Scientific Ai For mAteRials scIence” (SAFARI) Team

Today's scientists don't ask themselves why an experiment hadn't proven their theses often enough. They just continue to rely on the same flawed plan, presuming that it will magically work later rather than study the implications of their previous experiments & the reasons why they hadn't performed to the scientist's liking. That line of thinking, in my view, is flawed & too conservative.

AI can be used to do so many things to keep society going, but we need to understand that a slow & steady progression will do a better job of preserving life. The tortoise beat the hare when the hare thought that he had had it in the bag & squandered his lead. We can't afford to sell ourselves short.

AI is often confused with robotics by those who read what gets fed to us by those who don't want us to learn the truth or by other readers who have been left just as ill-informed as ourselves. This isn't about the "Six Million Dollar Man." It's about programming chips that enter a CPU (brain) programmed by man (imperfection).

Yes, in theory, the best programmer can get a robot to give him the response that he needs at the given time, but it won't foresee the complications that batter a, b or c will face from the outfield manned by the opposing team. We accomplish our most strident results when we use the ancient arts of teamwork, inquisition & communication.

If we let the power slip into the hands of those who don't care where it takes us, then we won't have ourselves left to blame.

This is an excellent, and eloquently stated, illustration of how and why traditional science and artificial intelligence (AI) are often misconstrued as mutually exclusive and why AI can truly benefit from traditional science, particularly considering that AI wouldn't exist in the absence of the scientific method applied to probability theory, the underpinnings of AI. As a Ph.D. candidate researching AI, I find it increasingly important to review some of the traditional sciences, i.e., classical and quantum physics, chemistry, biology, etc., including several of the social science disciplines. The mathematics may differ; however, there are volumes of "lessons learned" that may be relevant to some of the challenges encountered, currently or in the near term, in pursuing the goals and objectives of the relatively novel field of AI.