Cybersecurity Insights

a NIST blog

Workloads of Counting Queries: Enabling Rich Statistical Analyses with Differential Privacy

We are excited to introduce the first guest author in this blog series, Ryan McKenna of the University of Massachusetts Amherst, whose research represents the state of the art in the subject of this blog post: answering workloads of statistical queries with differential privacy. - Joseph Near and David Darais

To date, this series has focused on relatively simple data analyses, such as learning one summary statistic about our data at a time. In reality, we’re often interested in a slightly more sophisticated analysis, so we can learn multiple trends and takeaways at once and paint a richer picture of our data.

In this post, we will look at answering a collection of counting queries—which we call a workload—under differential privacy. This has been the subject of considerable research effort because it captures several interesting and important statistical tasks. By analyzing the specific workload queries carefully, we can design very effective mechanisms for this task that achieve low error.

What is a workload?

As discussed in a previous post, predicate counting queries have a particular form, such as: “How many people purchased only pumpkin spice lattes this month?” The property of “purchased only pumpkin spice lattes” can, in general, be replaced with another property of interest. Counting queries are particularly compatible with differential privacy, because releasing the answer to a single counting query requires only a small amount of noise.

The answer to one counting query can have value, but we’re often interested in learning much more about the dataset than a single summary statistic. A collection of predicate counting queries, called the workload, can support richer analysis of the dataset, including many common statistical summaries such as histograms, marginals, cumulative distributions, the sufficient statistics of some machine learning models, and even statistics derived from U.S. Census data.

One simple 3-query workload could be:

1. How many people purchased only pumpkin spice lattes this month?

2. How many people purchased only caramel lattes this month?

3. How many people purchased any lattes this month?

In this workload, the first two queries are non-overlapping, while the third overlaps with the first two. Overlapping queries pose an interesting challenge, as they tend to require more noise to answer than non-overlapping queries. In the extreme case, all of the queries could overlap, as shown in this example workload based on Census publications:

1. How many people are 16 years and over?

2. How many people are 18 years and over?

...

29. How many people are 62 years and over?

30. How many people are 65 years and over?

This workload encodes 30 queries necessary to compute an empirical cumulative distribution function (CDF) of ages over the individuals in the dataset. Despite its simplicity, this workload can be challenging to answer with suitably low noise under differential privacy using natural baselines like the Laplace mechanism, and serves as a good example of the potential benefits of using advanced mechanisms that intelligently adapt to the workload.
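To make the CDF workload concrete, the sketch below evaluates a few of its queries exactly (with no privacy protection) on a tiny made-up dataset. The ages, the helper names, and the use of only the four thresholds quoted above are assumptions for illustration; the full workload has 30 thresholds.

```python
import numpy as np

# Hypothetical toy data: ages of eight individuals (not from the post).
ages = np.array([15, 17, 18, 22, 40, 61, 64, 70])

# Only the four thresholds quoted in the post; the real workload has 30.
thresholds = [16, 18, 62, 65]

def answer_workload(ages, thresholds):
    """Exact (non-private) answer to each 'N years and over' counting query."""
    return np.array([int(np.sum(ages >= t)) for t in thresholds])

def queries_touched_by(age, thresholds):
    """Number of workload queries that count a single person of this age."""
    return sum(age >= t for t in thresholds)

true_answers = answer_workload(ages, thresholds)
print(true_answers)                        # -> [7 6 2 1]
print(queries_touched_by(70, thresholds))  # -> 4: counted by every query
print(queries_touched_by(17, thresholds))  # -> 1: counted by the first query only
```

Because one person aged 65 or over is counted by every query, all of the queries overlap, which is what makes this workload hard for simple baselines.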

Mechanisms for answering workloads

Our goal is to design a mechanism that answers every query in the workload, while providing low (ideally, minimal) expected error (i.e., noise) on those queries. This is challenging, as the space of possible mechanisms for this task is infinitely large.

One simple mechanism is to repeatedly apply the Laplace mechanism for each query in the workload—a reasonable approach that may even work well for small workloads. For the example CDF workload, this approach would require dividing the privacy loss budget 30 ways, which means adding noise with magnitude 30/epsilon (30 times more than the usual 1/epsilon) to each query. It is often possible to do much better than this.
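A minimal sketch of this first baseline, assuming NumPy's Laplace sampler and hypothetical function names. Splitting the budget evenly across k queries gives each query epsilon/k, so the Laplace scale becomes k/epsilon.

```python
import numpy as np

def noise_scale(num_queries, epsilon):
    """Laplace scale when the budget epsilon is split evenly over the queries."""
    return num_queries / epsilon

def laplace_on_workload(true_answers, epsilon, rng):
    """Add independent Laplace noise of scale k/epsilon to each of the k answers."""
    k = len(true_answers)
    noise = rng.laplace(loc=0.0, scale=noise_scale(k, epsilon), size=k)
    return true_answers + noise

rng = np.random.default_rng(0)
true_answers = np.array([7.0, 6.0, 2.0, 1.0])  # hypothetical exact counts
noisy = laplace_on_workload(true_answers, epsilon=1.0, rng=rng)

print(noise_scale(30, 1.0))  # scale for the 30-query CDF workload: 30.0
print(noisy)
```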

Another simple mechanism is to first compute the histogram of ages (discretized into 30 bins), then estimate the cumulative counts by adding up adjacent cells of the noisy histogram. Since the histogram queries are non-overlapping, we can answer them all using Laplace noise with magnitude 1/epsilon. This might seem like a much better approach, but the drawback is that the noise introduced to each histogram cell accumulates when estimating cumulative counts. For example, the count of people who are 16 and over requires adding up 30 counts, each with noise 1/epsilon. Because the noise terms are independent, they partially cancel, so the total noise is about sqrt(30)/epsilon rather than 30/epsilon. This is an improvement over the baseline mechanism above (at least for this example workload), but it is still a fairly naive baseline, and we’d like to do better.
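The histogram baseline can be sketched as follows, again with hypothetical names and an all-zero histogram standing in for real data. The short simulation at the end estimates the error of the largest cumulative count. Laplace noise of scale b has variance 2b^2, so the standard deviation of a sum of k cells is sqrt(2k)/epsilon, which shows the same sqrt(k) growth described above.

```python
import numpy as np

def laplace_on_histogram(histogram, epsilon, rng):
    """Noise each histogram cell with scale 1/epsilon, then take suffix sums
    so that entry i estimates the count of people in bin i and over."""
    noisy_hist = histogram + rng.laplace(scale=1.0 / epsilon, size=len(histogram))
    return np.cumsum(noisy_hist[::-1])[::-1]

rng = np.random.default_rng(0)
k, epsilon = 30, 1.0
empty = np.zeros(k)  # hypothetical data: with all-zero counts, the output is pure noise

# Error of the first cumulative count (sum of all k noisy cells) over many runs.
errors = np.array([laplace_on_histogram(empty, epsilon, rng)[0] for _ in range(20000)])

print(errors.std())                # empirical standard deviation
print(np.sqrt(2 * k) / epsilon)    # theoretical value, sqrt(2k)/epsilon
```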

An improved approach, called the matrix mechanism [1,2], is conceptually a middle ground between the two extremes above. The idea is to invoke the Laplace mechanism on a carefully selected set of queries (different from the workload), then use the noisy answers to those queries to estimate answers to the workload queries. Finding a good strategy (that is, a good set of queries to answer with Laplace noise) is a technical challenge, but good strategies are known for simple and well-studied workloads like the CDF workload. The two baseline mechanisms above can be seen as instantiations of the matrix mechanism with different strategies: the first uses the workload queries themselves as the strategy, and the second uses the histogram queries. Identifying the best strategy is an optimization problem in which the optimization variables are the queries in the strategy and the objective is to minimize the expected error of the mechanism on the workload queries. While it is challenging to solve this optimization problem in practice, effective algorithms exist when the workload is sufficiently small or has certain special structure [2].
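The sketch below illustrates the general recipe under stated assumptions: the workload and strategy are written as 0/1 matrices over the histogram bins, workload answers are reconstructed by least squares, and expected error is computed with the standard closed form for Laplace noise. All function names are hypothetical, and no strategy optimization is performed; the code only evaluates the two baseline strategies described above, and any other strategy matrix could be passed in the same way.

```python
import numpy as np

def cdf_workload(n):
    """Row i counts everyone in bins i, i+1, ..., n-1 ('bin i and over')."""
    return np.triu(np.ones((n, n)))

def sensitivity(A):
    """Largest number of strategy queries one person can affect (max column L1 norm)."""
    return np.abs(A).sum(axis=0).max()

def matrix_mechanism(W, A, x, epsilon, rng):
    """Answer strategy A with Laplace noise, then estimate the workload W."""
    noisy = A @ x + rng.laplace(scale=sensitivity(A) / epsilon, size=A.shape[0])
    x_hat, *_ = np.linalg.lstsq(A, noisy, rcond=None)  # least-squares histogram estimate
    return W @ x_hat

def expected_rmse(W, A, epsilon=1.0):
    """Per-query RMSE: sqrt(2 * (sens/eps)^2 * ||W A^+||_F^2 / num_queries)."""
    recon = W @ np.linalg.pinv(A)
    total_sq_err = 2.0 * (sensitivity(A) / epsilon) ** 2 * np.sum(recon ** 2)
    return np.sqrt(total_sq_err / W.shape[0])

n = 30
W = cdf_workload(n)

# Strategy = workload: the "Laplace on Workload" baseline.
print(expected_rmse(W, W))          # about 42.4, i.e. sqrt(2) * 30 / epsilon
# Strategy = identity: the "Laplace on Histogram" baseline.
print(expected_rmse(W, np.eye(n)))  # about 5.57, i.e. sqrt(31) / epsilon

# One run on a hypothetical histogram of 10 people per bin.
rng = np.random.default_rng(0)
estimate = matrix_mechanism(W, np.eye(n), np.full(n, 10.0), epsilon=1.0, rng=rng)
```

Expressing both baselines through the same two functions reflects the point made above: they differ only in the strategy matrix supplied.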

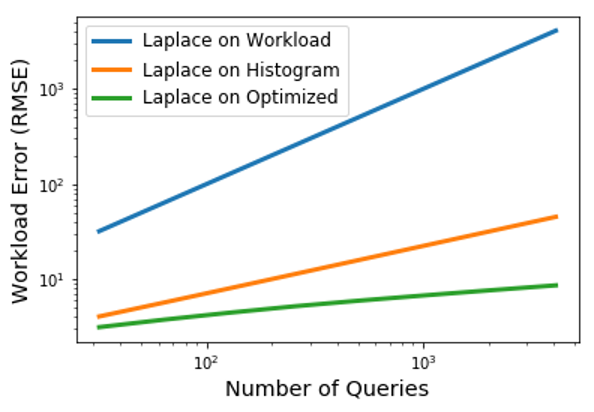

While it is out of scope for this post to delve into these technical details, the figure above demonstrates the potential benefit of this approach. We consider a generalization of the CDF workload with a varying number of queries, corresponding to different discretization granularities. The first baseline mechanism (Laplace on Workload) is the worst in terms of root mean squared error (RMSE), the second baseline mechanism (Laplace on Histogram) is an improvement, and the matrix mechanism (Laplace on Optimized) is the best. The improvement is as large as a factor of 5.2 for the largest workload considered, highlighting the benefit of the matrix mechanism: substantially lower error at no cost to privacy, just by using better strategies. This improvement is for one simple workload; in general, the magnitude of improvement will differ for other workloads and can be much larger.

We’ve focused on the matrix mechanism to answer a workload of predicate counting queries, but it’s by no means the only mechanism for this task. Other mechanisms have been proposed, although many have been shown to actually be special cases of the matrix mechanism. The matrix mechanism is a state-of-the-art approach for this task, at least when the amount of data or the privacy loss budget is sufficiently large. Other approaches may do better in the low data regime—see [4] for an overview of other mechanisms and the factors that influence their relative performance.

Limitations

We saw that lower expected error can be achieved when using better mechanisms for workload answering, but it is important to highlight the assumptions and limitations on the structure of the workload for this mechanism and others:

- Most mechanisms for this task expect the data domain to be finite and reasonably small. The data domain is the set of all possible rows, and for multi-dimensional data, the product of the domains of all attributes (i.e., columns in the dataset), which grows exponentially with the number of attributes. Some mechanisms are able to scale to domains as large as 10^9, while others may struggle to scale to domains this large, and may only work for even smaller domains.

- Workloads with special structure can enable improved scalability. If the domain size is sufficiently small (say, less than 10^4), then no structural constraints on the workload queries are necessary, and the conditions in the counting queries can be completely arbitrary. For larger domain sizes common with high-dimensional data, [2] requires that the conditions are conjunctions. For example, the query “How many individuals in the database have income <= 50K and age = 30” would be allowed, but the query “How many individuals in the database have (income <= 50K and age = 30) or age >= 50” would not be allowed. While this may seem like a strict assumption, many real-world queries are conjunctions, and more complicated queries can often be broken up into conjunctions. In fact, the queries needed to release U.S. Census statistics have this special structure.

Still, it is often possible to work around these limitations by introducing certain heuristics or other assumptions; however, this may require input from a domain expert and analysis on a case-by-case basis.
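The two limitations above can be illustrated with a small sketch. The attribute names and sizes are hypothetical, and representing a conjunction as a Kronecker product of per-attribute indicator vectors is one common encoding, assumed here for illustration rather than taken from this post.

```python
import numpy as np
from math import prod

def domain_size(attribute_sizes):
    """The data domain is the product of the per-attribute domain sizes."""
    return prod(attribute_sizes.values())

# Hypothetical schema: the domain grows multiplicatively with each attribute.
print(domain_size({"income": 1000, "age": 100, "sex": 2, "state": 50}))  # -> 10000000

# Tiny two-attribute example: 3 income bins by 4 age bins (hypothetical counts).
x = np.arange(12, dtype=float).reshape(3, 4)  # x[i, j] = people in income bin i, age bin j

income_pred = np.array([1.0, 1.0, 0.0])     # condition on income: bin 0 or bin 1
age_pred = np.array([0.0, 0.0, 1.0, 0.0])   # condition on age: bin 2

# A conjunction factorizes into one indicator vector per attribute, so the
# query over the full domain is their Kronecker product.
query = np.kron(income_pred, age_pred)
answer = query @ x.ravel()

# The same count computed directly from the table, as a check.
direct = x[:2, 2].sum()
print(answer, direct)  # -> 8.0 8.0
```

A disjunctive condition such as the second example query above does not factorize into a single product of this form, although it can be written as a sum of conjunctions when its parts do not overlap.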

Open Source Tools

DPComp [4] is a web-based tool designed for practitioners and researchers to assess mechanisms for workload answering. It focuses primarily on low-dimensional data (1D and 2D) and range query workloads (like the CDF workload). The tool is easy to use (even for non-experts) and offers visualizations that demonstrate the behavior of different mechanisms in a variety of settings.

εktelo [5] is a framework for designing new mechanisms from building blocks, and it includes implementations of a variety of mechanisms from the literature. It requires expertise in differential privacy to use, but provides utilities for efficiently constructing and representing workloads.

εktelo is indexed in NIST’s Privacy Engineering Collaboration Space, where you are invited to share feedback or use cases related to these tools.

Coming Up Next

So far, we've focused on queries over a single database table. However, many databases—like those storing information about pumpkin spice lattes—have multiple tables, and queries combine data from these tables to compute results by using joins. As we will explore in our next post, the join operation has significant implications for privacy, and it turns out to be extremely challenging to answer multi-table queries under differential privacy with good accuracy. Our next post will describe these challenges, summarize the state-of-the-art approaches for overcoming them, and describe the tools available for implementing these approaches.

This post is part of a series on differential privacy. Learn more and browse all the posts published to date on the differential privacy blog series page in NIST’s Privacy Engineering Collaboration Space.

References

[1] Li, Chao, et al. "Optimizing linear counting queries under differential privacy." Proceedings of the twenty-ninth ACM SIGMOD-SIGACT-SIGART symposium on Principles of database systems. 2010.

[2] McKenna, Ryan, et al. "Optimizing error of high-dimensional statistical queries under differential privacy." Proceedings of the VLDB Endowment 11.10 (2018): 1206-1219.

[3] Hay, Michael, et al. "Boosting the Accuracy of Differentially Private Histograms Through Consistency." Proceedings of the VLDB Endowment 3.1 (2010).

[4] Hay, Michael, et al. "Principled evaluation of differentially private algorithms using dpbench." Proceedings of the 2016 International Conference on Management of Data. 2016.

[5] Zhang, Dan, et al. "Ektelo: A framework for defining differentially-private computations." Proceedings of the 2018 International Conference on Management of Data. 2018.