Summary

Machine Learning (ML) has many applications, which include monitoring and control of automated systems such as factories and warehouses. These Industrial Internet of Things (IIoT) systems generate massive amounts of data that must be processed and used to adaptively control system operations, while making the most efficient use of the system's available communications, computing, and energy resources. NIST is investigating how ML systems interact with IIoT systems and is developing tools for measuring the performance of these systems, which will support the continued evolution of the manufacturing, energy, and transportation sectors of the U.S. economy.

Description

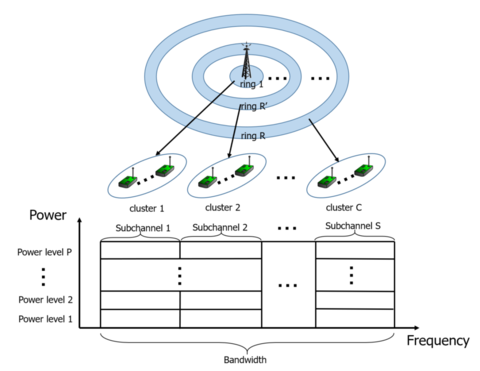

Resource Allocation in IIoT Systems

As with any large and complex system, Industrial Internet of Things (IIoT) deployments require the system operator to efficiently allocate the available bandwidth, computing, and energy resources. This is challenging because IIoT systems, especially large ones, can be complex, with rapidly changing operating conditions that make traditional optimization techniques difficult to apply. ML techniques can help in such situations. One such technique, reinforcement learning, can create ML systems that are exceptionally good at playing strategy games such as chess and Go, but it also applies to many other situations that can be modeled as a kind of game, in which there is a goal or set of goals that can be associated with quantitative rewards (or points, to use a gaming term). Reinforcement learning involves letting the ML system "play the game" over and over, assessing its reward each time, until it develops a set of strategies that tell it what to do when the system is in a given state. In this project, we have used a variant of reinforcement learning known as Deep Q-learning to demonstrate how this approach can be used in IIoT systems.
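
To make the "play the game over and over" idea concrete, here is a minimal sketch of tabular Q-learning, a simpler relative of the Deep Q-learning used in the project (Deep Q-learning replaces the table with a neural network). Everything specific in it is an assumption made for illustration, not part of the project: the queue-based toy environment, the two link settings, the energy costs, and all parameter values.

```python
import random

# Hypothetical toy problem: the state is a queue length (0..4) and the
# action picks a link setting that trades energy for throughput.
N_STATES = 5
ACTIONS = (0, 1)            # assumed: 0 = low-power link, 1 = high-power link
SERVICE_RATE = (1, 2)       # packets drained per step for each action
ENERGY_COST = (0.0, 2.0)    # made-up energy penalty for each action


def step(state, action, rng):
    """Advance the toy environment by one time step."""
    arrivals = sum(rng.random() < 0.6 for _ in range(2))  # 0, 1, or 2 packets
    remaining = max(state - SERVICE_RATE[action], 0)
    next_state = min(remaining + arrivals, N_STATES - 1)
    # Reward is never positive: a backlog and energy use both cost points.
    reward = -float(next_state) - ENERGY_COST[action]
    return next_state, reward


def train(steps=50000, alpha=0.05, gamma=0.9, epsilon=0.1, seed=0):
    """Learn a Q-table with epsilon-greedy exploration."""
    rng = random.Random(seed)
    q = [[0.0 for _ in ACTIONS] for _ in range(N_STATES)]
    state = 0
    for _ in range(steps):
        if rng.random() < epsilon:
            action = rng.choice(ACTIONS)                      # explore
        else:
            action = max(ACTIONS, key=lambda a: q[state][a])  # exploit
        next_state, reward = step(state, action, rng)
        # Q-learning update: move the estimate toward the reward plus the
        # discounted value of the best action in the next state.
        target = reward + gamma * max(q[next_state])
        q[state][action] += alpha * (target - q[state][action])
        state = next_state
    return q


def greedy_policy(q):
    """The learned strategy: the best-scoring action for each state."""
    return [max(ACTIONS, key=lambda a: q[s][a]) for s in range(len(q))]


q_table = train()
policy = greedy_policy(q_table)
print(policy)
```

The resulting `policy` list is the "set of strategies" described above: one recommended action per system state. A table works here only because the toy problem has five states; a realistic IIoT system has far too many, which is one reason to approximate the table with a deep network.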

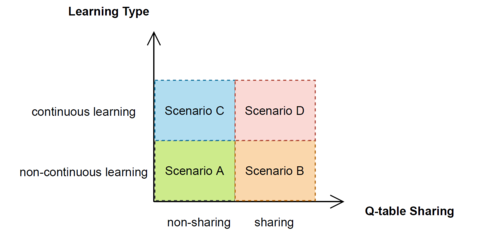

Continuous Learning for IIoT Systems

Because IIoT systems can change in significant ways, a "once and done" approach to training ML systems for IIoT applications may not suffice. This project has examined online continuous reinforcement learning, which uses system monitoring to trigger retraining of the controller and update its models. The work compared four scenarios: non-continuous learning without learning model sharing, non-continuous learning with learning model sharing, continuous learning without learning model sharing, and continuous learning with learning model sharing. Each scenario involved designing, implementing, and evaluating a reinforcement learning algorithm. The results showed that online continuous reinforcement learning can reduce retraining overhead, which is promising for IIoT applications where the operating environment changes over time.
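
One simple way to picture monitoring that triggers retraining is a sliding-window check on the controller's recent rewards. The sketch below is an illustration under assumptions, not the project's monitoring method: the `RetrainTrigger` class, the window size, the tolerance, and the reward trace are all hypothetical.

```python
from collections import deque


class RetrainTrigger:
    """Flags retraining when the recent mean reward drops below a baseline."""

    def __init__(self, baseline, window=20, tolerance=0.25):
        self.baseline = baseline      # mean reward seen right after training
        self.tolerance = tolerance    # allowed relative drop before retraining
        self.recent = deque(maxlen=window)

    def observe(self, reward):
        """Record one reward; return True if retraining should start."""
        self.recent.append(reward)
        if len(self.recent) < self.recent.maxlen:
            return False              # not enough history to judge yet
        mean = sum(self.recent) / len(self.recent)
        return mean < self.baseline - self.tolerance * abs(self.baseline)


# Made-up reward trace: the environment changes after 30 steps and the
# controller's reward falls from 1.0 to 0.5.
rewards = [1.0] * 30 + [0.5] * 30
trigger = RetrainTrigger(baseline=1.0)

first_trigger = None
for i, r in enumerate(rewards):
    if trigger.observe(r):
        first_trigger = i
        break

print(first_trigger)  # 40
```

With these values the trigger fires at step 40, eleven steps after the change, because the 20-sample window needs that long for its mean to fall below 0.75. A shorter window reacts faster but is more easily fooled by noise.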

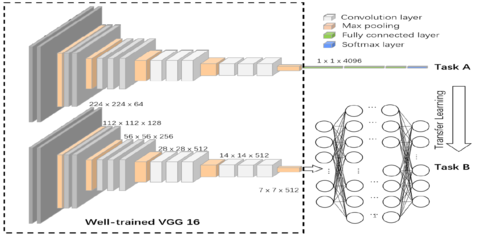

Transfer Learning for IIoT Systems

Sometimes, it isn't feasible to train ML systems "from scratch" when using them in a new environment. However, an ML system can be trained using a generic set of data and then "tweaked" to work with its deployment environment. This approach, known as transfer learning, can be efficient because it reduces the computational effort needed to deploy ML systems. This project examined transfer learning for IIoT by considering a computer vision system that performs component recognition in four scenarios: centralized transfer learning with large datasets, distributed transfer learning with large datasets, centralized transfer learning with small datasets, and distributed transfer learning with small datasets.
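
The "train generically, then tweak" idea can be sketched as follows: keep a pretrained feature extractor frozen and fit only a small new output layer on deployment data. This is a toy illustration, not the project's computer vision system. The two-feature "backbone", its `PRETRAINED_W` weights, and the six-sample dataset are all made up, and a real system would use a pretrained image model in place of `frozen_features`.

```python
import math

# Hypothetical "pretrained" weights, standing in for a backbone that was
# trained on generic data. They are never updated below.
PRETRAINED_W = [[1.0, 1.0], [1.0, -1.0]]


def frozen_features(x):
    """Frozen feature extractor reused as-is in the new environment."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x)))
            for row in PRETRAINED_W]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune_head(samples, labels, epochs=500, lr=0.5):
    """Fit only a new logistic-regression head on the frozen features."""
    feats = [frozen_features(x) for x in samples]
    weights, bias = [0.0] * len(feats[0]), 0.0
    n = len(feats)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(weights), 0.0
        for f, y in zip(feats, labels):
            err = sigmoid(sum(w * fi for w, fi in zip(weights, f)) + bias) - y
            grad_w = [g + err * fi for g, fi in zip(grad_w, f)]
            grad_b += err
        # Full-batch gradient descent step on the head parameters only.
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict(x, weights, bias):
    f = frozen_features(x)
    return 1 if sum(w * fi for w, fi in zip(weights, f)) + bias > 0 else 0


# Small, made-up deployment dataset (label 1 = component present).
samples = [(1.0, 0.5), (0.8, 0.1), (-1.0, -0.3),
           (-0.5, -0.9), (0.3, 0.4), (-0.2, -0.6)]
labels = [1, 1, 0, 0, 1, 0]

weights, bias = fine_tune_head(samples, labels)
print([predict(x, weights, bias) for x in samples])
```

Only three numbers (two head weights and a bias) are learned here, which is the source of the computational savings: the expensive part of the model is reused rather than retrained.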