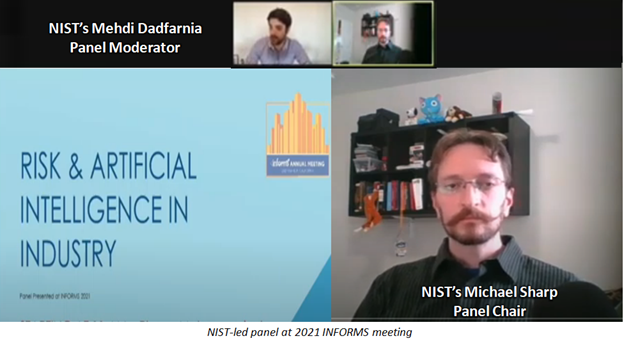

NIST-led Panel Assesses Test and Evaluation of Industrial AI, Risk Awareness, and Barriers to Use

At its 2021 meeting, the Institute for Operations Research and the Management Sciences (INFORMS) held a panel on Testing and Evaluating Industrial AI and Risk Management, chaired by NIST's Michael Sharp and moderated by NIST's Mehdi Dadfarnia. The panel brought together experts in industrial AI to identify the knowns and unknowns in this fast-changing field. The following are some of the key points.

Need for Testing and Evaluating Industrial AI: Many AI systems produce good results, but some do not. Telling the two apart depends on testing and evaluating the systems, which some organizations do not know how to do or lack the resources to do. In some cases, suitable tests do not yet exist.

Determining Risks in Industrial AI: There are risks in applying an AI to an industrial system, as well as risks within the AI and the industrial system separately. These standalone risks, along with their possible interactions, must be considered in testing and evaluation.

Test Scenarios for AI Must Reflect Real-World Uses: In practice, this is hard to do, as not everything can be tested. Testing requires controlled and bounded scenarios. However, even with bounded limits, it is infeasible to predict and evaluate every possibility for many systems. This is particularly true in systems where the most pertinent scenarios are rare by design, such as predicting failures in safety-critical machines.

Determining an AI System's Acceptable and Unacceptable Risks: These follow from bounding the test scenarios. Scenarios whose risks and consequences are judged acceptable may not need to be tested. Conversely, risks identified as unacceptable should be included in test scenarios and evaluated for mitigation. The level of acceptable risk will vary with the reliability required of the AI system.
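
As a rough illustration of this triage, the Python sketch below scores each scenario and keeps only those above an acceptable-risk threshold for testing. Scoring risk as likelihood times consequence is a common convention assumed here, not a method the panel prescribed, and the scenario names, numbers, and threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    name: str
    likelihood: float   # assumed probability of occurrence (0 to 1)
    consequence: float  # assumed cost if it occurs, in arbitrary units


def needs_testing(scenario: Scenario, acceptable_risk: float) -> bool:
    """Flag a scenario whose assumed risk score exceeds the threshold."""
    return scenario.likelihood * scenario.consequence > acceptable_risk


# Hypothetical scenarios for an industrial AI system.
scenarios = [
    Scenario("sensor drift", 0.30, 10.0),
    Scenario("missed bearing failure", 0.02, 500.0),
    Scenario("cosmetic mislabel", 0.50, 1.0),
]

ACCEPTABLE_RISK = 2.0  # hypothetical threshold; depends on required reliability

to_test = [s.name for s in scenarios if needs_testing(s, ACCEPTABLE_RISK)]
print(to_test)
```

A system with stricter reliability needs would lower the threshold, pulling more scenarios into the test set.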

Determining How Much Testing and Evaluation Is Worth It: This depends on the business case. If, for example, an AI system is expected to return a certain amount of money by reducing risk to some degree with only low-level testing, then the resources put into testing should match what the investment and expected return justify. This balance of testing against return should qualify the relevant metrics and scenarios to the point where users trust that anything unaccounted for falls within their acceptable risk threshold.
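
One way to make this trade-off concrete is to compare, for each level of testing effort, the cost of testing plus the expected loss that remains afterward. The sketch below is only an illustration under that assumption; the effort levels and all figures are hypothetical and do not come from the panel.

```python
# Each hypothetical testing level maps to
# (cost of testing, expected residual loss after testing), in the same units.
levels = {
    "none": (0.0, 100.0),
    "low": (10.0, 40.0),
    "medium": (30.0, 25.0),
    "high": (80.0, 20.0),
}


def total_cost(level: str) -> float:
    """Testing cost plus the expected loss left over at that testing level."""
    testing_cost, residual_loss = levels[level]
    return testing_cost + residual_loss


# Pick the level of effort with the lowest combined cost.
best = min(levels, key=total_cost)
print(best, total_cost(best))
```

With these made-up figures, extra testing beyond the chosen level costs more than the risk it removes, which is the point at which further effort is no longer justified by the expected return.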

Barriers to Industrial AI Adoption: Many industry stakeholders are reluctant to invest in AI applications when trust is lacking or the return from using the technology is unclear. However, those are not the only concerns. Many companies hesitate to invest in AI tools whose value could be negated by changes in regulations, or even by rapid changes in the technology itself.