Summary

The activities described here support the application of high-dose, high-energy ionizing radiation in a variety of industrial processes. The largest application is the sterilization of disposable, single-use medical products (e.g., syringes, bandages, surgical gloves); more than 40 % of such products are sterilized with radiation. Other applications include radiation-induced modification of materials to improve the properties of plastic films and packaging, as well as of the protective insulation on wire and cable. Inks on commercial packaging can be cured by a pollution-free process that avoids the use of volatile organic compounds (VOCs). Irradiation of blood for transfusion prevents transfusion-associated graft-versus-host disease. Food irradiation, used as a pasteurization process, reduces pathogens (E. coli, Salmonella, Listeria, etc.). Irradiation is also used for agricultural pest control.

The high-dose dosimetry program supports radiation-processing applications by ensuring that the absorbed dose delivered to the product, which is often prescribed or limited by regulatory agencies, is traceable to NIST standards. In addition, our most accurate measurements, made with the small alanine-pellet dosimeters used for these high-dose processes, show promise for providing traceability to national measurement standards for the small-field radiation beams increasingly used in clinical radiation therapy.

High-dose dosimetry service descriptions can be found in Procedures 11 and 12 of the RBPD Quality System.

Description

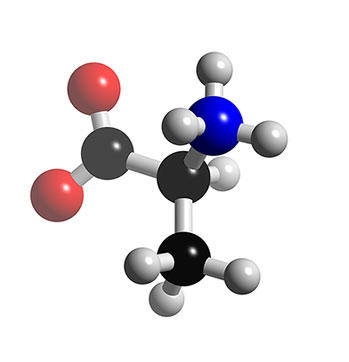

NIST developed the alanine dosimetry system in the early 1990s to replace radiochromic dye film dosimeters. Later in that decade, the alanine system became firmly established as a transfer service for high-dose radiation dosimetry and as an integral part of the internal calibration scheme supporting these services.

Detailed descriptions of the NIST high-dose irradiation facilities and the alanine dosimetry system can be found in the Major Accomplishments section below. Service descriptions and the price schedule for NIST high-dose services are provided on a separate page.

The following topics and supporting information are intended as a resource for users of these high-dose services. They provide additional information, either newly developed or unpublished, that is pertinent to the application or interpretation of service information (e.g., NIST certificates).

The influence of absorbed dose rate:

Details of the dose-rate effect (DRE) are given in Desrosiers et al. (2008), which reported this previously unknown rate effect in the alanine dosimetry system. The effect has serious consequences for alanine calibration services, because the dosimeter response can depend on the dose rate at which the dosimeter is irradiated. A follow-up study (Desrosiers and Puhl, 2009) investigated the influence of irradiation temperature on the DRE. The DRE has resulted in a partial suspension of irradiation calibration services for alanine dosimeters.

Temperature coefficient studies:

The response of high-dose-range chemical dosimeters depends on the dosimeter temperature during irradiation. Typically, irradiation temperatures are estimated by measurement, calculation, or a combination of the two. The dosimeter response is then corrected, using the temperature coefficient of the dosimetry system, to the irradiation temperature at which the calibration curve was established. Consequently, both the estimate of the irradiation temperature and the temperature-coefficient correction are sources of uncertainty in industrial dosimetry.
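The correction step can be sketched as follows, assuming a linear temperature coefficient expressed as the fractional change in response per degree Celsius. The linear model, the function name `correct_to_calibration_temperature`, and the example numbers are hypothetical illustrations, not the NIST correction procedure or a published coefficient.

```python
def correct_to_calibration_temperature(
    response: float,
    irradiation_temp_c: float,
    calibration_temp_c: float,
    temp_coeff_per_c: float,
) -> float:
    """Return the response expected at the calibration-curve irradiation temperature.

    Hypothetical linear model (an assumption, not the NIST procedure):
        R_measured = R_calibration * (1 + alpha * (T_irr - T_cal))
    where alpha is the fractional change in response per degree Celsius.
    """
    factor = 1.0 + temp_coeff_per_c * (irradiation_temp_c - calibration_temp_c)
    if factor <= 0.0:
        raise ValueError("correction factor must be positive; check the coefficient")
    return response / factor


# Illustrative values only: irradiated at 35 °C, calibration curve at 25 °C,
# assumed coefficient of 0.2 % per °C.
corrected = correct_to_calibration_temperature(1.02, 35.0, 25.0, 0.002)
print(f"corrected response: {corrected:.4f}")
```

Because the correction divides by the same factor that enters the response, any error in the estimated irradiation temperature or in the coefficient propagates directly into the corrected response, which is why both appear in the uncertainty budget.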

A study characterizing the temperature coefficient for dosimeters irradiated below ambient temperature was completed several years ago (Desrosiers et al., 2004) on the only commercial dosimeter available at that time. A study of the influence of irradiation temperature on modern commercial alanine dosimeters is now complete (Desrosiers et al., 2012).

Conversion of absorbed-dose(water) to absorbed-dose(silicon):

On request from customers ordering NIST calibration of dosimeters for radiation-hardness studies, NIST will convert the reported dose from its default quantity, absorbed dose to water, to absorbed dose to silicon. This is done by applying a conversion factor of 0.916 to the dosimeter response before the absorbed dose is calculated. The conversion factor is the ratio of two integrals over the weighted photon fluence spectrum in the NIST 60Co Gammacell irradiator: in the numerator the spectrum is weighted by the photon mass energy-absorption coefficient for silicon, and in the denominator by that for water. The fluence spectrum is derived from a Monte Carlo simulation of the NIST Gammacell geometry; consequently, the factor of 0.916 is specific to the NIST irradiator.
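The ratio of integrals can be sketched numerically. This sketch assumes the fluence spectrum and both sets of mass energy-absorption coefficients are tabulated on a common energy grid, and that the weighting is the spectral energy fluence E·Φ_E(E), the usual form under charged-particle equilibrium. The function names are hypothetical, and no real spectrum or coefficient data are included: the NIST Monte Carlo spectrum and tabulated coefficients would have to be supplied.

```python
from typing import Sequence

# Factor quoted for the NIST Gammacell spectrum; not valid for other irradiators.
NIST_GAMMACELL_SI_FACTOR = 0.916


def _trapezoid(y: Sequence[float], x: Sequence[float]) -> float:
    """Trapezoidal-rule integral of y(x) on a (not necessarily uniform) grid."""
    return sum(0.5 * (y[i] + y[i + 1]) * (x[i + 1] - x[i]) for i in range(len(x) - 1))


def si_to_water_dose_ratio(
    energy_mev: Sequence[float],
    fluence: Sequence[float],
    mu_en_rho_si: Sequence[float],
    mu_en_rho_water: Sequence[float],
) -> float:
    """Ratio D_Si / D_water for a photon fluence spectrum on an energy grid.

    Assumes the integrand is the energy fluence E * Phi_E(E) weighted by the
    mass energy-absorption coefficient (charged-particle equilibrium).
    """
    num = [e * f * m for e, f, m in zip(energy_mev, fluence, mu_en_rho_si)]
    den = [e * f * m for e, f, m in zip(energy_mev, fluence, mu_en_rho_water)]
    return _trapezoid(num, energy_mev) / _trapezoid(den, energy_mev)


def water_response_to_silicon(response: float, factor: float = NIST_GAMMACELL_SI_FACTOR) -> float:
    """Scale a dosimeter response before it is evaluated on the water calibration curve."""
    return response * factor
```

Because the spectrum depends on the irradiator geometry, the ratio returned by `si_to_water_dose_ratio` changes with the input spectrum; this is why the quoted factor applies only to the NIST Gammacell.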

Uncertainties:

Uncertainty tables for the high-dose services are listed on a separate page.

Major Accomplishments

A list of major program/service facilities and activities:

Irradiation Facilities

For industrial high-dose dosimetry calibration services, the measurement quantity of interest is absorbed dose (primarily to water), reported in units of gray (Gy). At NIST, absorbed dose is realized with a water calorimeter in a gamma-ray field produced by a Vertical Beam 60Co source. The technical specifications of this source have been described previously. Because the dose rate of this source is relatively low, higher-dose-rate sources are needed to perform customer calibrations. The bulk of the services are provided with three Gammacell 220 60Co irradiators (Nordion, Canada). The Pool 60Co source was decommissioned in 2018.

Calibration of the gamma sources in the NIST high-dose calibration facility is performed by measuring the ratio of the alanine dosimeter response for the source being calibrated to that for a reference source. The absorbed dose for these internal calibrations is 1 kGy or less. This approach reduces the source comparison to the measurement of two quantities: dosimeter response and time. Absorbed dose is not computed for this calibration because doing so would introduce the additional uncertainties inherent in the calibration curve; omitting that step also avoids any issues that might arise from non-linearity in the dose response of the dosimetry system. Moreover, the fact that this response-per-time calibration scheme was able to reveal the subtle dose-rate dependence described above strongly supports the validity of the method.

The three Gammacell 60Co sources are used for NIST high-dose calibration services. Before 2004, the Pool source and the Gammacells were each calibrated by a direct dosimeter-response ratio to the Vertical Beam 60Co source (Humphreys et al., 1998a). Between 2004 and 2018, the Pool source served as an intermediary for traceability. Since 2018, the weakest Gammacell, GC45, has served that role. The dose rate of the Vertical Beam 60Co source has decayed to a level that requires excessive irradiation times (>24 h) for comparisons at the absorbed doses routinely used (≥1 kGy); therefore, an intermediary source is needed. Because the Vertical Beam 60Co irradiations are performed under water, with the water surface in the vessel exposed to the room environment, there were concerns that variation in the water level would contribute significantly to the measurement uncertainty, since it would be difficult to keep the water level constant over a prolonged period. To address the increasingly long Vertical Beam irradiation times, the calibration scheme was modified so that the Vertical Beam 60Co source is compared only to GC45. To shorten this irradiation and improve several other aspects of the measurement, the absorbed dose for the GC45/Vertical Beam comparison was lowered to 140 Gy. The two other Gammacells are calibrated by comparison to GC45.
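The trade-off behind the lower comparison dose is simple arithmetic: irradiation time is dose divided by dose rate, and a 60Co dose rate halves roughly every 5.27 years. The sketch below uses a hypothetical Vertical Beam dose rate chosen so that 1 kGy takes exactly 24 h, the threshold quoted above; it is not the actual NIST dose rate, and the function names are illustrative.

```python
import math

# Approximate 60Co half-life in years.
CO60_HALF_LIFE_Y = 5.27


def decayed_dose_rate(rate_at_ref: float, years_since_ref: float,
                      half_life_y: float = CO60_HALF_LIFE_Y) -> float:
    """Dose rate after exponential decay from a reference date."""
    return rate_at_ref * math.exp(-math.log(2.0) * years_since_ref / half_life_y)


def irradiation_time_h(dose_gy: float, dose_rate_gy_per_h: float) -> float:
    """Time needed to deliver dose_gy at a constant dose rate."""
    return dose_gy / dose_rate_gy_per_h


# Hypothetical Vertical Beam dose rate at which 1 kGy takes exactly 24 h.
rate_now = 1000.0 / 24.0  # Gy/h
print(f"1 kGy: {irradiation_time_h(1000.0, rate_now):.1f} h")
print(f"140 Gy: {irradiation_time_h(140.0, rate_now):.2f} h")

# One half-life later, the same 1 kGy comparison takes twice as long.
rate_later = decayed_dose_rate(rate_now, CO60_HALF_LIFE_Y)
print(f"1 kGy after one half-life: {irradiation_time_h(1000.0, rate_later):.1f} h")
```

Under these assumptions, lowering the comparison dose from 1 kGy to 140 Gy cuts the Vertical Beam irradiation from 24 h to under 3.5 h, which also shortens the period over which the water level must be held constant.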

The calibration scheme begins with the known dose rate of the Vertical Beam 60Co source. To transfer that dose rate, eight alanine pellets are irradiated in the calorimeter water tank. The pellets are stacked in a watertight polystyrene cylinder whose axis is fixed perpendicular to the beam axis of the Vertical Beam 60Co source at a scale distance of 58.8 cm. The water surface is set at a scale distance of 53.8 cm, so the cylinder axis lies 5.0 cm below the water surface. This design differs from the published scheme (Humphreys et al., 1998a), in which the irradiation was done in a polystyrene phantom and a scaling theorem was used to correct for differences in photon interaction cross sections between polystyrene and water. The direct underwater measurement is an improvement because it eliminates the uncertainties associated with the scaling theorem. In the current calibration scheme, the dosimeters are irradiated at the appropriate distance under water, and no additional corrections are applied to the measured data.

Concurrently with the Vertical Beam irradiation, alanine dosimeters are irradiated in GC45 to the same absorbed dose (as described in Humphreys et al., 1998b). This comparison may be repeated as necessary to achieve an acceptable precision of 0.5 %. The dosimeters are read by EPR spectrometry, and each dosimeter response is divided by the irradiation time to obtain units of response per second (response/s). With both measurements expressed in these common units, the established dose rate of the Vertical Beam source can be used to determine the dose rate in GC45.
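The response-per-second transfer described above can be sketched as a ratio. The sketch assumes the same dosimeter lot, geometry, and EPR readout for both sources, and it ignores any dose-rate effect, so the response per second is treated as proportional to dose rate. The function names are hypothetical, and because the text does not say how the 0.5 % precision is evaluated, `relative_spread` assumes it is the relative standard deviation of repeated comparison results.

```python
from statistics import mean, stdev


def transfer_dose_rate(
    ref_dose_rate: float,
    ref_response: float,
    ref_time_s: float,
    target_response: float,
    target_time_s: float,
) -> float:
    """Dose rate of the target source from response-per-second ratios.

    Assumes response per second is proportional to dose rate for both sources
    (same dosimeter lot, geometry, and readout; dose-rate effect neglected).
    """
    ref_rate = ref_response / ref_time_s
    target_rate = target_response / target_time_s
    return ref_dose_rate * target_rate / ref_rate


def relative_spread(values: list[float]) -> float:
    """Relative standard deviation of repeated comparison results (assumed precision metric)."""
    return stdev(values) / mean(values)
```

For example, if the Vertical Beam dosimeters reach a given response in 200 s and the GC45 dosimeters reach the same response in 20 s, the sketch assigns GC45 ten times the Vertical Beam dose rate.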

Similarly, a series of comparisons is made between the dose rate at the center position of GC45 and those at the center positions of the two other Gammacells (GC232 and GC207). For these comparisons, the alanine transfer vial is placed on a polystyrene pedestal that positions the dosimeters at the exact center of the isodose region of the gamma field, and a higher dose (e.g., 1 kGy) is used to reduce the uncertainty contribution from the timer settings of the highest-dose-rate Gammacell. In GC232 and GC207, irradiations are performed on a pedestal either inside a stainless-steel dewar or without one; the dewar improves temperature control at the extremes of the irradiation temperature range. The dosimeters are measured, the response/s is determined, and the center-position dose rates of GC232 and GC207 are determined by comparison to the GC45 dose rate.
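Why a higher dose helps can be seen with a one-line estimate: a fixed absolute timer uncertainty becomes a smaller fraction of a longer irradiation. The dose rate (2 Gy/s) and timer uncertainty (0.1 s) below are hypothetical values chosen only to show the scaling, and the function name is illustrative.

```python
def relative_timer_uncertainty(dose_gy: float, dose_rate_gy_per_s: float,
                               timer_uncertainty_s: float) -> float:
    """Fractional dose uncertainty from a fixed absolute timer uncertainty."""
    irradiation_time_s = dose_gy / dose_rate_gy_per_s
    return timer_uncertainty_s / irradiation_time_s


# Hypothetical Gammacell dose rate (2 Gy/s) and timer uncertainty (0.1 s).
for dose in (140.0, 1000.0):
    frac = relative_timer_uncertainty(dose, 2.0, 0.1)
    print(f"{dose:6.0f} Gy -> {100 * frac:.3f} %")
```

With these assumed values, moving from 140 Gy to 1 kGy lowers the timer contribution by the same factor as the dose increase, about sevenfold.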

The equivalent transit time is determined for each source. It is the time subtracted from the timer setting to account for the absorbed dose the dosimeters receive while being moved to and from the irradiation position. To measure it, alanine dosimeters are irradiated for a series of very short times, typically 5 s, 10 s, 20 s, 30 s, 40 s, and 50 s. The dosimeter response is measured and plotted versus irradiation time, and a linear regression of these data is extrapolated to zero response. The absolute value of the resulting x intercept is the equivalent transit time.

Customer-supplied dosimeters are routinely irradiated for calibration in one of three geometries: ampoule, Perspex, and film block. The dose rate at each of these positions in the Gammacells (not all positions are used in every Gammacell), with and without a dewar, is determined by comparing the response/s of dosimeters irradiated in that position with the response/s of dosimeters irradiated in the center position of the same Gammacell. This final part of the calibration scheme is unchanged from that previously published (Humphreys et al., 1998a; Desrosiers et al., 2008). As a final check of the dose rates, dosimeters are irradiated to 1 kGy in all irradiation geometries to confirm that they give equivalent responses.
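The position step and the final consistency check can be sketched as below. The responses and the 0.5 % acceptance limit are hypothetical (the text does not give an acceptance criterion), and the function names are illustrative only.

```python
from statistics import mean


def position_dose_rate(center_dose_rate: float, center_response_per_s: float,
                       position_response_per_s: float) -> float:
    """Dose rate at a calibration position relative to the Gammacell center position."""
    return center_dose_rate * position_response_per_s / center_response_per_s


def max_relative_deviation(responses: dict[str, float]) -> float:
    """Largest fractional deviation of any geometry's response from the mean."""
    values = list(responses.values())
    m = mean(values)
    return max(abs(r - m) / m for r in values)


# Hypothetical responses of dosimeters irradiated to 1 kGy in each geometry.
check = {"ampoule": 1.001, "Perspex": 0.999, "film block": 1.000}
tolerance = 0.005  # hypothetical acceptance limit, not a NIST criterion
print("consistent" if max_relative_deviation(check) <= tolerance else "investigate")
```

If every geometry's dose rate is correct, dosimeters timed to receive 1 kGy in each geometry should give the same response, so a large deviation flags a geometry whose dose rate needs re-examination.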

EPR Facilities

The Electron Paramagnetic Resonance (EPR) dosimetry facility and the gamma-ray irradiators that support the measurement system are unique in design and capabilities. The research efforts in radiation metrology provided by this facility have enabled U.S. industries to advance several new technologies, especially in health-related areas, and improve U.S. manufacturing efficiency.

The alanine dosimetry system has offered great benefits and flexibility to industrial dosimetry and the supporting calibration services. Its dose range spans most industrial applications. The system is largely energy-independent above 100 keV, and radiation-quality issues are not significant for electrons and photons. Estimates by researchers at the National Physical Laboratory (NPL) of an electron-photon dose discrepancy were thought to lie within the uncertainty of the high-dose certification service; however, a review of the literature from the preceding 15 years on scaling from Co-60 to electron beams yielded a conversion factor of 1.014 (standard uncertainty 0.5 %) (McEwen et al., 2020).

Irradiation-temperature and post-irradiation temporal effects have been extensively studied and are minimal (Nagy and Desrosiers, 1996; Desrosiers et al., 2006). Relative-humidity effects on the alanine-EPR measurement (Sleptchonok et al., 2000) are compensated for by the use of an internal EPR reference material (Nagy et al., 2000). These attributes, together with a rugged, commercially manufactured dosimeter and a high-accuracy, high-precision EPR spectrometer system, enable NIST to operate a postal-based transfer dosimetry system with an expanded uncertainty of less than 2 % (coverage factor k = 2).
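For readers unfamiliar with the coverage factor, the expanded uncertainty is U = k·u_c, where u_c is the combined standard uncertainty. The sketch below assumes uncorrelated relative components combined in quadrature, as in the GUM approach; the component values in the usage line are hypothetical, and the actual NIST budgets are on the separate uncertainty page.

```python
import math


def expanded_uncertainty(relative_std_uncertainties: list[float], k: float = 2.0) -> float:
    """Expanded relative uncertainty U = k * u_c, where u_c is the root-sum-square
    of uncorrelated relative standard uncertainty components (GUM approach)."""
    combined = math.sqrt(sum(u * u for u in relative_std_uncertainties))
    return k * combined


# Hypothetical components of 0.3 % and 0.4 % combine to u_c = 0.5 %, so U = 1.0 % at k = 2.
print(f"U = {100 * expanded_uncertainty([0.003, 0.004]):.2f} %")
```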

The alanine pellet dosimeters currently used in the NIST calibration services are manufactured by Gamma Services (Germany) and distributed through Far West Technology (Goleta, CA). NIST has used these dosimeters since the inception of the alanine-based services. The current transfer dosimetry service protocol for the alanine absorbed-dose measurement is given in Procedure 12 of the Radiation Physics Division (RPD) Quality Manual. For the internal NIST source dose-rate calibrations, the absorbed dose is not computed. As described in the protocol, the ratio of the alanine EPR signal amplitude to the ruby reference EPR signal amplitude is normalized to dosimeter mass and averaged over two measurement angles; these values, referred to as the dosimeter response, are used to calibrate the dose rate for a fixed irradiation geometry for each radiation source.
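The dosimeter-response definition above can be sketched as a short function. The function name, the mass unit (mg), and the amplitude values in the usage line are hypothetical; the sketch accepts one alanine and one ruby amplitude per measurement angle, and the NIST protocol uses two angles.

```python
from statistics import mean


def dosimeter_response(alanine_amplitudes: list[float], ruby_amplitudes: list[float],
                       mass_mg: float) -> float:
    """Mass-normalized alanine/ruby EPR amplitude ratio, averaged over measurement angles."""
    if len(alanine_amplitudes) != len(ruby_amplitudes) or not alanine_amplitudes:
        raise ValueError("need one alanine and one ruby amplitude per measurement angle")
    # Ratio to the internal ruby reference, normalized to dosimeter mass, per angle.
    ratios = [a / r / mass_mg for a, r in zip(alanine_amplitudes, ruby_amplitudes)]
    return mean(ratios)


# Hypothetical amplitudes at two measurement angles for a 60 mg pellet.
print(f"response: {dosimeter_response([2.0, 2.2], [1.0, 1.0], mass_mg=60.0):.5f} per mg")
```

Dividing by the ruby amplitude measured in the same scan is what lets the response compensate for drifts in spectrometer sensitivity, such as those caused by humidity.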

Transfer Service Dosimetry System Calibration

The alanine dosimetry system is calibrated approximately annually or whenever the EPR measurement configuration changes and/or a new dosimeter lot is used. The details of the calibration procedure are described in the RPD Quality Manual.

Comparisons

The third and most recent international comparison is underway. The second comparison is described elsewhere (see Comparison of Co-60 Absorbed Dose for High-Dose Dosimetry) and was published by Burns et al. (2011). The first comparison was conducted in the 1990s and published in 2006.